Tree-Based Access

Within the Data Access and Storage Facilities, a cluster of components focuses on uniform access to, and storage of, structured data of arbitrary semantics, origin, and size. Additional components build on this generic interface and specialise it to data with domain-specific semantics, including document data and semantic data.

This document outlines their design rationale, key features, high-level architecture, and deployment options.

Overview

Access to, and storage of, structured data are provided under a uniform model of labelled trees, through a remote API of read and write operations over such trees.
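The following Java sketch makes the model concrete by showing one way to represent an edge-labelled, node-attributed tree. The class `Node`, its methods, and the choice of string-valued attributes and leaf values are illustrative assumptions. They are not the API of the subsystem's `trees` library.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Sketch of an edge-labelled, node-attributed tree.
// Names are hypothetical; this is not the API of the subsystem's trees library.
final class Node {

    // An outgoing edge: a label and the child node it leads to.
    static final class Edge {
        final String label;
        final Node target;

        Edge(String label, Node target) {
            this.label = label;
            this.target = target;
        }
    }

    private final Map<String, String> attributes = new LinkedHashMap<>(); // node attributes
    private final List<Edge> edges = new ArrayList<>();                   // ordered, labelled edges
    private String value;                                                 // optional leaf value (assumption)

    Node attr(String name, String val) {
        attributes.put(name, val);
        return this;
    }

    Node value(String v) {
        this.value = v;
        return this;
    }

    // Labels need not be unique: several edges may carry the same label.
    Node add(String label, Node child) {
        edges.add(new Edge(label, child));
        return this;
    }

    String attribute(String name) { return attributes.get(name); }

    String value() { return value; }

    List<Edge> edges() { return Collections.unmodifiableList(edges); }

    // Children reached through edges with the given label, in edge order.
    List<Node> children(String label) {
        List<Node> result = new ArrayList<>();
        for (Edge e : edges) {
            if (e.label.equals(label)) result.add(e.target);
        }
        return result;
    }
}
```

Labels sit on edges rather than on nodes. As a result, a document node can reach two authors through two edges that share the label `author`, while its own identifier is stored as an attribute.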

A tree-oriented interface is ideally suited to clients that abstract over the domain semantics of the data, and it is primarily intended for the wide range of data management processes within the system. Domain-specific clients may also use the generic interface when its flexibility and the completeness of its tooling let them avoid the limitations of more specific interfaces, particularly of those that do not align with standard protocols and data models.

The tree interface is collectively provided by a heterogeneous set of components. Some services expose the tree API and use a variety of mechanisms to optimise data transfer in read and write operations, including pattern languages, URI resolution schemes, in-place updates, and data streams. Other services build on the tree API to publish and maintain passive views over the data.
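Pattern-based reads combine matching with pruning: a pattern selects the trees it matches and returns only the parts it names. The sketch below illustrates that idea under simplifying assumptions. A hypothetical `TreePattern` tests node values with a predicate and requires labelled children to match sub-patterns, and edges it does not name are pruned. It is not the subsystem's pattern language, which the text describes as considerably more sophisticated.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.Optional;
import java.util.function.Predicate;

// Minimal tree: an optional value and an ordered list of labelled edges.
final class Node {
    final String value;
    final List<Map.Entry<String, Node>> edges = new ArrayList<>();

    Node(String value) { this.value = value; }

    Node add(String label, Node child) {
        edges.add(Map.entry(label, child));
        return this;
    }
}

// Hypothetical pattern: a test on the node value plus required, labelled sub-patterns.
final class TreePattern {
    private final Predicate<String> valueTest;
    private final Map<String, TreePattern> required = new LinkedHashMap<>();

    TreePattern(Predicate<String> valueTest) { this.valueTest = valueTest; }

    static TreePattern any() { return new TreePattern(v -> true); }

    TreePattern require(String label, TreePattern sub) {
        required.put(label, sub);
        return this;
    }

    // Matches and prunes in one pass: returns a pruned copy, or empty if the node does not match.
    Optional<Node> prune(Node node) {
        if (!valueTest.test(node.value)) return Optional.empty();
        Node copy = new Node(node.value);
        for (Map.Entry<String, TreePattern> req : required.entrySet()) {
            boolean found = false;
            for (Map.Entry<String, Node> edge : node.edges) {
                if (!edge.getKey().equals(req.getKey())) continue;
                Optional<Node> kept = req.getValue().prune(edge.getValue());
                if (kept.isPresent()) {
                    copy.add(edge.getKey(), kept.get()); // keep every matching child
                    found = true;
                }
            }
            if (!found) return Optional.empty(); // a required label has no matching child
        }
        return Optional.of(copy); // edges whose labels the pattern does not name are dropped
    }
}
```

Returning the pruned copy from the same recursion that performs the match means a remote read would transfer only the selected parts of each tree. This is the data-transfer optimisation the paragraph attributes to pattern languages.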

The services have dynamically extensible architectures, i.e. they rely on independently developed plugins to adapt their APIs to a variety of back-ends within or outside the system. Existing plugins adapt the APIs to document repositories that expose OAI interfaces and to sources of semantic data that expose SPARQL interfaces. A distinguished plugin stores the data locally to service endpoints, providing a scalable storage solution for the system.
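The plugin mechanism can be pictured as a service that delegates each operation to an adapter registered for the target back-end. In this sketch, `SourcePlugin`, `TreeService`, `LocalPlugin`, and `ReadOnlyRemotePlugin` are hypothetical names. Trees are represented by strings and the remote source is simulated by a map. The default `write` throws, which mirrors the option of implementing the API only partially, e.g. for read-only access.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;

// Hypothetical plugin contract: adapts the tree API to one class of back-end.
// Trees are represented by strings to keep the sketch short.
interface SourcePlugin {
    String type(); // e.g. "local", "remote"

    String read(String id);

    // Plugins may implement the API partially; by default writes are unsupported.
    default void write(String id, String tree) {
        throw new UnsupportedOperationException(type() + " is read-only");
    }
}

// Read-only adapter for a remote source (the remote call is simulated by a map).
final class ReadOnlyRemotePlugin implements SourcePlugin {
    private final Map<String, String> remote;

    ReadOnlyRemotePlugin(Map<String, String> remote) { this.remote = remote; }

    public String type() { return "remote"; }

    public String read(String id) { return remote.get(id); }
}

// Local storage adapter: supports both reads and writes.
final class LocalPlugin implements SourcePlugin {
    private final Map<String, String> store = new HashMap<>();

    public String type() { return "local"; }

    public String read(String id) { return store.get(id); }

    @Override
    public void write(String id, String tree) { store.put(id, tree); }
}

// Service endpoint that delegates every operation to a plugin registered at runtime.
final class TreeService {
    private final Map<String, SourcePlugin> plugins = new ConcurrentHashMap<>();

    void register(SourcePlugin p) { plugins.put(p.type(), p); } // extends a running service

    private SourcePlugin plugin(String type) {
        SourcePlugin p = plugins.get(type);
        if (p == null) throw new IllegalArgumentException("no plugin for " + type);
        return p;
    }

    String read(String type, String id) { return plugin(type).read(id); }

    void write(String type, String id, String tree) { plugin(type).write(id, tree); }
}
```

Registering a plugin on a running `TreeService` extends it without changes to the service code. In an actual deployment, plugin libraries would be discovered and loaded rather than passed in through a method call.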

When connected to remote data sources, the services may be widely replicated, and their replicas leverage the Enabling Services to scale horizontally up to the capacity of the remote back-ends.
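The state-balancing mechanism summarised under Key features can be simulated in memory. In that mechanism, replicas publish activation records to the Directory Services, receive notifications of the records of other replicas, and replicate their activations. `Directory`, `Replica`, and the integer `load` are hypothetical stand-ins. The real services exchange records over the network and measure load in their own way.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.HashSet;
import java.util.List;
import java.util.Set;

// Hypothetical stand-in for the Directory Services: relays activation records
// to subscribed replicas and points clients to the least-loaded replica.
final class Directory {
    private final List<Replica> replicas = new ArrayList<>();

    void subscribe(Replica r) { replicas.add(r); }

    // Publishing a record notifies every other subscribed replica.
    void publish(Replica origin, String activation) {
        for (Replica r : replicas) {
            if (r != origin) r.onNotification(activation);
        }
    }

    // Among the replicas holding the activation, choose the least loaded one.
    Replica route(String activation) {
        return replicas.stream()
            .filter(r -> r.activations.contains(activation))
            .min(Comparator.comparingInt(r -> r.load))
            .orElseThrow(() -> new IllegalStateException("no replica for " + activation));
    }
}

final class Replica {
    final String name;
    final Set<String> activations = new HashSet<>();
    int load; // e.g. requests in flight (assumed metric)
    private final Directory directory;

    Replica(String name, Directory directory) {
        this.name = name;
        this.directory = directory;
        directory.subscribe(this);
    }

    // A local activation (e.g. binding to a remote source) is published as a record.
    void activate(String activation) {
        if (activations.add(activation)) directory.publish(this, activation);
    }

    // Replicate another replica's activation without re-publishing it.
    void onNotification(String activation) { activations.add(activation); }
}
```

Received records are not re-published, so notifications cannot circulate endlessly between replicas. Once balanced, every replica holds every activation, and the directory is free to route each client to whichever replica is least loaded.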

Finally, a rich set of libraries implement the relevant models (trees, patterns, streams, documents) and provide high-level façades to remote service APIs.

Key features

The subsystem provides for:

- uniform model and access API over structured data

  The model is based on edge-labelled and node-attributed trees, and the API is based on the read and write operations exposed by the Tree Manager services, a suite of stateful Web Services whose instances model data sources that are accessible through the API.

- fine-grained access to structured data

  The read operations can match and prune trees based on a sophisticated pattern language. They can also resolve whole trees or arbitrary nodes from URIs in a dedicated scheme based on local node identifiers. The write operations can perform updates in place, applying the tree model to the changes themselves (delta trees). Both read and write operations can work on individual trees as well as on arbitrarily large tree streams.

- dynamically pluggable architecture of model and API transformations

  The Tree Manager services implement the interface against an open-ended number of data sources, from local sources to remote sources, including those that are managed outside the boundaries of the system. The implementation relies on two-way transformations between the tree model and API of the service and those of the target sources. Transformations are implemented in plugins, i.e. libraries that are developed independently of the services, at much lower cost, and that extend the services' capabilities at runtime (dynamic deployment). Plugins may implement the API partially (e.g. for read-only access) and employ best-effort strategies in adapting individual operations to the target sources.

- uniform modelling and access API over document data

  The generic tree model can be specialised to describe document data. The gCube Document Model (gDM) is used for document management processes within the system, providing rich, aggregated descriptions of document content, metadata, annotations, parts, and alternatives. Based on the gDM, a dedicated plugin of the Tree Manager services mediates access to arbitrary OAI repositories, or to groups of one or more collections found in such repositories.

- uniform modelling and access API over semantic data

  The generic tree model can be specialised to describe semantic data. This yields tree-oriented views over RDF graph data, which can then be fed to general-purpose system processes. A dedicated plugin of the Tree Manager services maintains these views over arbitrary SPARQL endpoints.

- load-scalable access to remote data sources

  The Tree Manager services may be widely replicated within the system, and their replicas can autonomously balance their state by: i) publishing records of their local activity (activation records) in the Directory Services, ii) subscribing with those services for notifications of records published by other replicas, and iii) processing the notified records so as to replicate the activations of the other replicas. The Directory Services then act as infrastructure-wide load balancers for the state-balanced replicas, pointing clients to the least-loaded replicas first.

- fast and size-scalable storage of structured data

  A distinguished plugin of the Tree Manager services, the Tree Repository, stores trees locally to service endpoints, using graph database technology to avoid model impedance mismatches and to offer full coverage and efficient implementations of the service API.

- flexible viewing mechanisms over structured data

  The View Manager services are stateful Web Services that build on the tree pattern language to define “passive” views of data sources accessible through the Tree Manager services. Views can be published and their summary properties maintained under generic regimes, but the services can also be dynamically extended with plugins that customise the management regime for specific classes of views.

- rich tooling for client and plugin development

  A rich set of libraries supports the development of clients and plugins in Java, offering embedded DSLs for the manipulation of trees, patterns, streams, and documents. The libraries also simplify access to remote service endpoints through high-level facades over their remote APIs.
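Delta trees apply the tree model to the changes themselves. The following minimal sketch applies a delta in place. It assumes that each delta node carries a hypothetical change marker (`ADD`, `UPDATE`, `DELETE`, or `NONE` for nodes that only locate their descendants) and that nodes are matched by local identifier. The actual encoding used by the Tree Manager services is not specified here.

```java
import java.util.ArrayList;
import java.util.List;

// Minimal tree with local node identifiers, used for both targets and deltas.
final class Node {
    enum Op { NONE, ADD, UPDATE, DELETE } // change marker, meaningful only in deltas (assumption)

    final String id;
    String value;
    Op op = Op.NONE;
    final List<Node> children = new ArrayList<>();

    Node(String id, String value) {
        this.id = id;
        this.value = value;
    }

    Node op(Op op) {
        this.op = op;
        return this;
    }

    Node add(Node child) {
        children.add(child);
        return this;
    }

    Node child(String childId) {
        for (Node c : children) if (c.id.equals(childId)) return c;
        return null;
    }

    // Applies a delta rooted at the node with the same id, changing this tree in place.
    void apply(Node delta) {
        if (delta.op == Op.UPDATE) value = delta.value;
        for (Node d : delta.children) {
            switch (d.op) {
                case ADD:
                    children.add(d.copyWithoutOps()); // graft the new subtree
                    break;
                case DELETE:
                    children.removeIf(c -> c.id.equals(d.id));
                    break;
                default: { // NONE or UPDATE: descend into the matching child
                    Node target = child(d.id);
                    if (target == null) throw new IllegalArgumentException("unknown node " + d.id);
                    target.apply(d);
                }
            }
        }
    }

    private Node copyWithoutOps() {
        Node copy = new Node(id, value);
        for (Node c : children) copy.add(c.copyWithoutOps());
        return copy;
    }
}
```

In this encoding, a delta contains only the changed nodes and their ancestors, so an update sends a path-shaped fragment rather than a full replacement tree.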

Design

Philosophy

Discovery, indexing, transformation, transfer, and presentation are key examples of data management subsystems that abstract in principle over the domain-specific semantics of the data. Other, equally generic system functions are in turn based on those subsystems, most notably search over indexing and process execution over data transfer. It is precisely in this generality that the main value proposition of the system lies, as an enabler of data e-Infrastructures.

Directly or indirectly, all the processes mentioned above require access to the data. As in small-scale systems, good design requires that they do so through a uniform interface that matches their generality and shields them from the variety of network locations, data models, and access APIs that characterise individual data sources.

Providing this interface is essentially an interoperability requirement. For consistency and uniform growth, the requirement is addressed in a dedicated place in the system's architecture, i.e. once for an open-ended number of subsystems rather than separately within each subsystem.

The subsystem described in this document fills that space, providing the required interface and the associated development tools.

Architecture

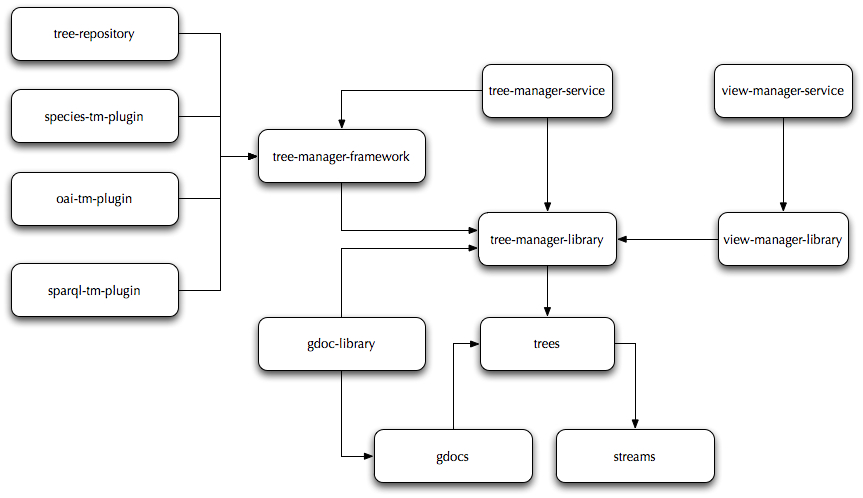

Tree-based access and storage are collectively provided by the following components:

- tree-manager-service: a suite of stateful Web Services that expose a tree-oriented API of read and write operations and implement it by delegation to dynamically deployable plugins for target data sources within and outside the system;

- tree-manager-library: a client library that implements a high-level facade to the remote APIs of the Tree Manager services;

- view-manager-service: a suite of stateful Web Services that use tree patterns to define and maintain passive views over data sources that can be accessed through the Tree Manager services;

- view-manager-library: a client library that implements a high-level facade to the remote APIs of the View Manager services;

- trees: a library that contains the implementation of the tree model and associated DSL, the tree pattern language and associated DSL, the URI protocol scheme, and tree bindings to and from XML and XML-related technologies;

- streams: a library that contains the implementation of a DSL for stream conversion, filtering, and publication;

- gdocs: a client library that implements the gDM and provides facilities to create, change, or inspect documents in data sources that are remotely accessible through the API of the Tree Manager services;

- gdoc-library: a client library that implements high-level façades to the remote APIs of the Tree Manager services while specialising their inputs and outputs to the documents of the gDM;

- tree-manager-framework: a client library that implements a framework of local classes and interfaces for third-party development of plugins for the Tree Manager services;

- tree-repository: a plugin of the Tree Manager services that stores trees in graph stores embedded or maintained locally to service endpoints (Neo4j stores);

- oai-tm-plugin: a plugin of the Tree Manager services that defines and maintains gDM views of data sources that expose an OAI interface;

- sparql-tm-plugin: a plugin of the Tree Manager services that defines and maintains tree views of data sources that expose a SPARQL interface;

- species-tm-plugin: a plugin of the Tree Manager services that defines and maintains tree views of biodiversity data sources exposed by the Species Manager services;

- fishfinder-tm-plugin: a plugin of the Tree Manager services that defines and maintains tree views of factsheets produced by the FIGIS group and exposed by the FIGIS APIs.
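The `trees` library includes the URI scheme used to resolve whole trees or individual nodes from local node identifiers. Because the concrete syntax is not given here, the following sketch assumes a hypothetical layout, `tree://<source>/<tree-id>/<node-id>/...`, in which each path segment after the tree identifier names a child by its local identifier. The class names are likewise illustrative.

```java
import java.net.URI;
import java.util.ArrayList;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Minimal tree with local node identifiers.
final class Node {
    final String id;
    final String value;
    final List<Node> children = new ArrayList<>();

    Node(String id, String value) {
        this.id = id;
        this.value = value;
    }

    Node add(Node c) {
        children.add(c);
        return this;
    }
}

// Resolves URIs of the hypothetical form tree://<source>/<tree-id>[/<node-id>...].
final class UriResolver {
    private final Map<String, Map<String, Node>> sources = new HashMap<>(); // source -> (tree id -> root)

    void put(String source, Node tree) {
        sources.computeIfAbsent(source, s -> new HashMap<>()).put(tree.id, tree);
    }

    // A URI with only a tree id resolves the whole tree; further segments walk down by node id.
    Node resolve(URI uri) {
        if (!"tree".equals(uri.getScheme())) throw new IllegalArgumentException("unsupported scheme: " + uri);
        Map<String, Node> trees = sources.get(uri.getHost());
        if (trees == null) throw new IllegalArgumentException("unknown source: " + uri);
        String path = uri.getPath();
        if (path == null || path.length() <= 1) throw new IllegalArgumentException("missing tree id: " + uri);
        String[] segments = path.substring(1).split("/");
        Node current = trees.get(segments[0]);
        for (int i = 1; i < segments.length && current != null; i++) {
            current = childById(current, segments[i]);
        }
        if (current == null) throw new IllegalArgumentException("unresolvable: " + uri);
        return current;
    }

    private static Node childById(Node parent, String id) {
        for (Node c : parent.children) if (c.id.equals(id)) return c;
        return null;
    }
}
```

Since the identifiers are local to each tree, a node URI remains valid only while its ancestors keep their identifiers. Under this layout, for example, `tree://src1/t1/n1/n2` names node `n2` under `n1` in tree `t1` of source `src1`.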

The following diagram illustrates the dependencies between the components of the subsystem:

Deployment

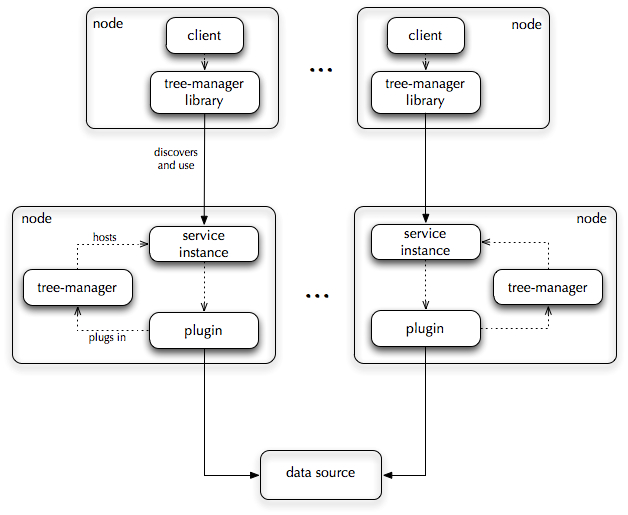

All the components of the subsystem can be deployed on multiple hosts, and deployment can be dynamic at each host. Specifically:

- services can have multiple endpoints;

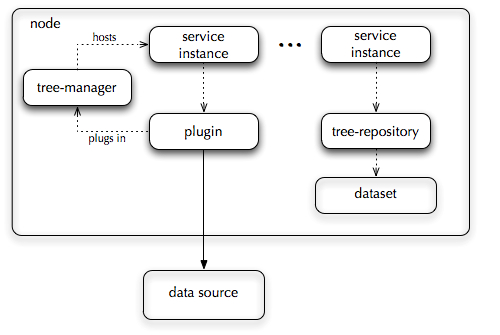

- an endpoint may be co-deployed with multiple plugins;

- the same plugin can be co-deployed with multiple endpoints;

There are no temporal constraints on the co-deployment of services and plugins. A plugin may be deployed on any given host before or, more meaningfully, after the service.

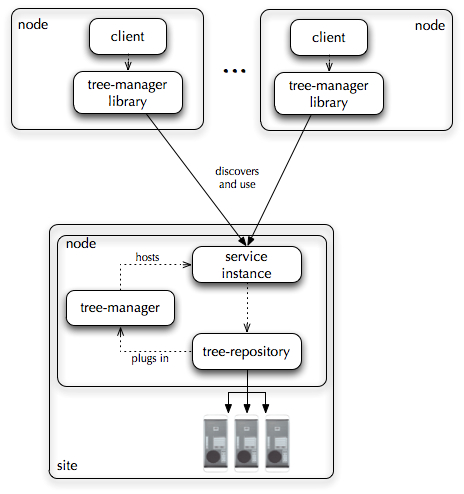

The only absolute constraint on deployment is that the Tree Manager services be co-deployed with one another. The same holds for the View Manager services.

Since the services are stateful, a single endpoint may generate and maintain a number of service instances:

- service instances of the Tree Manager services model accessible data sources. There are separate instances for read access and/or write access to sources;

- service instances of the View Manager services model view definitions over readable data sources, hence over read-only instances of the Tree Manager services.

Service deployment schemes that seek to maximise capacity will take into account the usual implications of stateful services, whereby the capacity of a service instance:

- decreases as the number of instances at the same endpoint increases;

- decreases as the load of co-hosted instances and endpoints increases.

Endpoints of the Tree Manager services, however, deserve specific considerations:

- all service instances may execute streamed operations that rely on memory buffers for increased performance. Depending on the frequency of such operations and the average stream size, memory requirements may be higher than average;

- all service instances manage subscriptions and emit notifications, which add to the workload generated by requests for data access and storage;

- service instances that target remote data sources (mediator instances) will hold on to system resources for periods of unpredictable length, depending on the throughput of the target sources. Significant variability is expected across sources;

- service instances that store data locally (anchored instances) will require local storage accordingly. Furthermore, the performance of the graph database technology used by such instances depends on the memory that can be allocated to it.
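The memory consideration for streamed operations can be illustrated with a bounded buffer. A stream that pulls items from its source in fixed-size batches keeps at most one batch in memory. The footprint of an instance therefore grows with the number of concurrent streams times the batch size, not with stream length. `PageSource` and `BufferedStream` are hypothetical names, and the paging contract (a short batch signals the end of the stream) is an assumption.

```java
import java.util.ArrayDeque;
import java.util.Deque;
import java.util.Iterator;
import java.util.List;
import java.util.NoSuchElementException;

// Hypothetical paged back-end: returns up to 'limit' items starting at 'offset'.
interface PageSource<T> {
    List<T> fetch(int offset, int limit);
}

// Streams items lazily, holding at most one batch in memory at a time.
final class BufferedStream<T> implements Iterator<T> {
    private final PageSource<T> source;
    private final int batchSize;
    private final Deque<T> buffer = new ArrayDeque<>(); // note: ArrayDeque rejects null items
    private int offset = 0;
    private boolean exhausted = false;
    int fetches = 0; // number of round trips, exposed for illustration

    BufferedStream(PageSource<T> source, int batchSize) {
        if (batchSize <= 0) throw new IllegalArgumentException("batchSize must be positive");
        this.source = source;
        this.batchSize = batchSize;
    }

    @Override
    public boolean hasNext() {
        if (buffer.isEmpty() && !exhausted) refill();
        return !buffer.isEmpty();
    }

    @Override
    public T next() {
        if (!hasNext()) throw new NoSuchElementException();
        return buffer.poll();
    }

    // Fetches the next batch only once the previous one has been consumed.
    private void refill() {
        List<T> batch = source.fetch(offset, batchSize);
        fetches++;
        offset += batch.size();
        buffer.addAll(batch);
        if (batch.size() < batchSize) exhausted = true; // a short batch marks the end
    }
}
```

A larger batch size cuts the number of round trips at the cost of more memory per stream. This is the trade-off behind the higher-than-average memory requirements noted above.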

Overall, service instances are expected either to consume more resources than average (anchored instances) or to retain resources for longer than average (mediator instances). As such, they are expected to reduce the capacity of other instances and co-deployed services more than average, and to be similarly affected by them.

A deployment scheme that maximises capacity will tend to create fewer instances at each endpoint and will limit co-deployment with other services. Furthermore:

- to increase the performance of anchored instances, the scheme will particularly seek isolation for high-demand instances that store datasets locally;

- to increase data scale for anchored instances, the scheme may consider sharding data across a cluster, though deployment then becomes static;

- to increase capacity for mediator instances, as well as resource sharing, the scheme may replicate endpoints and rely on the state balancing mechanisms built into the services. The upper bound in this case remains the capacity of the remote data sources.

Large deployment

A deployment scheme for the Tree Manager services may address issues of scale with respect to access demand and with respect to data size.

A scheme for high demand seeks to increase the capacity of service instances. As discussed above, isolation increases the capacity of instances at individual nodes, so the scheme will deploy the services on a greater number of nodes. For mediator instances, replication across nodes increases the collective capacity of all instances that access the same remote data source. The upper bound in this case is the capacity of the source.

A scheme for large data size concentrates on anchored instances and relies on data sharding across a cluster. This is supported by the graph storage technology that underlies such instances, but it foregoes dynamic deployment.

Small deployment

A deployment scheme for the Tree Manager services may also be more conservative in terms of resource consumption in order to maximise their sharing across services.

In this case, the scheme will deploy the Tree Manager services on fewer nodes and alongside other services at each node. Service instances will thus cluster more densely around the available endpoints. Due to the lesser degree of instance isolation, instance capacity will decrease, and with it the performance of anchored instances. The scheme is suited to low-demand resources.

Use Cases

Well suited Use Cases

The subsystem is particularly suited to support data management processes that abstract over the domain semantics of the data. The system relies on many such processes to offer, among others, general-purpose indexing, search, transformation, transfer, and presentation functionality.

The development of any plugin of the Tree Manager services immediately extends the ability of the system to deliver those functions over a new type of data, or over data that originates from a new class of data sources.

This fosters the uniform and rapid growth of the system, and maximises the impact of development efforts. Furthermore, the dynamic architectures of the services lower these efforts through the simpler programming model of plugin development. As a result, development is more easily opened up to third parties.

Less well suited Use Cases

The generic interface to structured data which is provided by the subsystem will often differ substantially from the native interface provided by data sources. In particular, one or more of the following may hold true at the same time:

- the tree interface may offer less powerful selection mechanisms than the native interface. This is the case, for example, when the native interface is a full-fledged query interface rather than a data access interface. The tree interface supports data selection based on sophisticated tree pattern matching, but it does not allow queries that relate different properties of the data or aggregate over it;

- the tree interface may offer more powerful selection mechanisms than the native interface. For example, the latter may not support lookups of single items, or it may not enumerate its contents;

- the tree interface may offer more powerful data transfer mechanisms than the native interface. For example, the latter may not support streamed operations.

At the cost of increased development complexity, some of the issues above may be dealt with by appropriate plugin design. However, the solutions may be partial, or may deliver a QoS below real-time requirements.

While the required QoS may be met for sources that are static or change infrequently, by importing their contents into anchored instances, clients that work with domain and application semantics may find little incentive to access data through a generic interface. This is particularly the case when the native interface aligns with formal or de-facto standards that offer more traction and well-established tooling within the target domains. Competing with such standards is a non-goal for the design of the subsystem.