Statistical Manager

A cross-usage service that provides users and services with tools for performing data mining operations. This document outlines the design rationale, key features, and high-level architecture, as well as the deployment context.

Overview

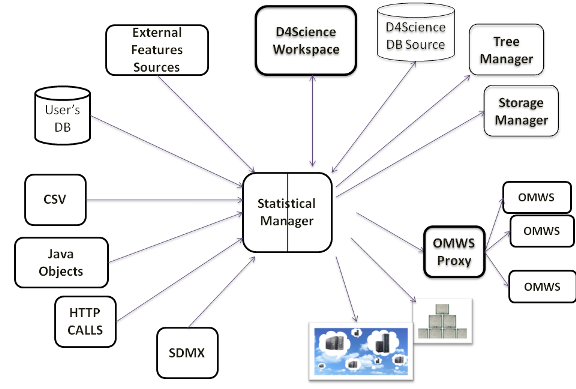

The goal of this service is to offer a single access point for performing data mining or statistical operations on heterogeneous data. The data can reside on the client side as CSV files, be hosted remotely as SDMX documents, or be stored in a database.
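As a rough illustration of what a single access point over heterogeneous data can look like, the sketch below puts a CSV source and a database source behind one interface. All names (`DataSource`, `CsvSource`, `DatabaseSource`, `count_rows`) are hypothetical and are not part of the Statistical Manager; SQLite stands in for whatever database is actually used, and SDMX is left out.

```python
import csv
import io
import sqlite3
from typing import Dict, Iterator, Protocol


class DataSource(Protocol):
    """Hypothetical uniform interface: every source yields rows as dicts."""

    def rows(self) -> Iterator[Dict[str, str]]: ...


class CsvSource:
    """Client-side CSV content, passed in as text."""

    def __init__(self, text: str) -> None:
        self.text = text

    def rows(self) -> Iterator[Dict[str, str]]:
        yield from csv.DictReader(io.StringIO(self.text))


class DatabaseSource:
    """A database table (SQLite is an assumption made for this sketch)."""

    def __init__(self, conn: sqlite3.Connection, table: str) -> None:
        self.conn = conn
        self.table = table

    def rows(self) -> Iterator[Dict[str, str]]:
        cursor = self.conn.execute(f'SELECT * FROM "{self.table}"')
        names = [col[0] for col in cursor.description]
        for record in cursor:
            # Values are stringified so both sources return the same row shape.
            yield {name: str(value) for name, value in zip(names, record)}


def count_rows(source: DataSource) -> int:
    """A toy operation that works on any source without knowing its kind."""
    return sum(1 for _ in source.rows())
```

A caller would build either source and pass it to the same operation, for example `count_rows(CsvSource("species,depth\ncod,120\n"))`.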

The Service takes such inputs and executes the operation requested by a client interface, invoking the most suitable computational infrastructure among those available: executions can run on multi-core machines or on other computational infrastructures, such as d4Science, Windows Azure, CompSs and others.

Algorithms are implemented as plug-ins, which makes new functionality easy to inject and deploy.

Design

Philosophy

The service represents a single endpoint for clients or services that want to perform complex operations without having to investigate the details of the implementation. Currently, the operations that can be performed are divided into:

- Generators

- Modelers

- Transducers

- Evaluators

Further details are available on the Ecological Modeling wiki page, where some experiments are shown along with explanations of the algorithms.
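The four categories above, combined with the plug-in approach, can be pictured as a small registry behind a single entry point. This is a minimal sketch and not the service's actual code: the `Category` enum, the `plugin` decorator, `list_algorithms`, `run`, and the example algorithm name are all assumptions made for illustration.

```python
from enum import Enum
from typing import Callable, Dict, List, Tuple


class Category(Enum):
    GENERATOR = "generator"
    MODELER = "modeler"
    TRANSDUCER = "transducer"
    EVALUATOR = "evaluator"


# Hypothetical registry: algorithm name -> (category, callable).
_REGISTRY: Dict[str, Tuple[Category, Callable]] = {}


def plugin(name: str, category: Category) -> Callable:
    """Decorator that registers a function as an algorithm plug-in."""

    def register(func: Callable) -> Callable:
        if name in _REGISTRY:
            raise ValueError(f"algorithm {name!r} is already registered")
        _REGISTRY[name] = (category, func)
        return func

    return register


def list_algorithms(category: Category) -> List[str]:
    """Names of the registered algorithms in one category, sorted."""
    return sorted(n for n, (c, _) in _REGISTRY.items() if c is category)


def run(name: str, *args, **kwargs):
    """Single entry point: callers name the algorithm, not its implementation."""
    _, func = _REGISTRY[name]
    return func(*args, **kwargs)


# Illustrative plug-in only; not an actual Statistical Manager algorithm.
@plugin("MEAN_ABSOLUTE_ERROR", Category.EVALUATOR)
def mean_absolute_error(expected, predicted):
    return sum(abs(e - p) for e, p in zip(expected, predicted)) / len(expected)
```

Adding a new algorithm in this sketch only requires defining one more decorated function; the entry point `run` and its callers stay unchanged.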

Architecture

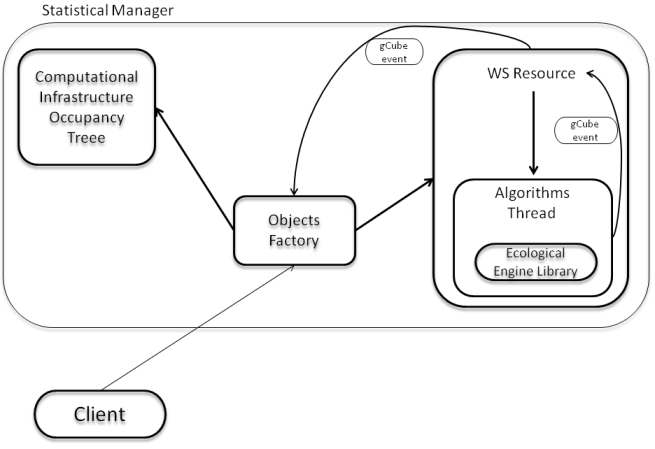

The subsystem comprises the following components:

- Ecological Engine Library: a container for several data mining algorithms as well as evaluation procedures for assessing the quality of the modeling procedures. Algorithms are implemented and deployed as plug-ins;

- Computational Infrastructure Occupancy Tree: an internal process which monitors the occupancy of the resources that can be chosen when launching an algorithm;

- Algorithms Thread: an internal process which connects the algorithm to execute with the least loaded infrastructure able to execute it. Infrastructures are also weighted according to their computational speed; the internal logic chooses the fastest available;

- WS Resource: an internal gCube process which takes care of all the computations requested by a single user or service. The WS Resource communicates with the other components by means of gCube events;

- Object Factory: a broker for WS Resources and a link between the users' computations and the Occupancy Tree process.
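The selection performed by the Algorithms Thread can be sketched as follows. The exact scoring rule is not described here, so this example assumes one: among the infrastructures that support the algorithm and still have free capacity, pick the one with the highest product of free fraction and speed weight. The `Infrastructure` fields and the `choose` function are hypothetical names.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable, Optional


@dataclass(frozen=True)
class Infrastructure:
    name: str
    supported: FrozenSet[str]  # algorithms this infrastructure can execute
    capacity: int              # total execution slots (assumed unit)
    busy: int                  # slots currently in use
    speed: float               # relative computational speed weight

    @property
    def free_fraction(self) -> float:
        return (self.capacity - self.busy) / self.capacity


def choose(algorithm: str,
           candidates: Iterable[Infrastructure]) -> Optional[Infrastructure]:
    """Pick an infrastructure for the algorithm, or None if none is eligible."""
    eligible = [
        infra for infra in candidates
        if algorithm in infra.supported and infra.busy < infra.capacity
    ]
    if not eligible:
        return None
    # Assumed scoring: occupancy and speed combined into a single weight.
    return max(eligible, key=lambda infra: infra.free_fraction * infra.speed)
```

In this sketch a fully occupied infrastructure is never selected, however fast it is, and an algorithm that no infrastructure supports yields `None` so the caller can decide whether to queue or reject the request.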

A diagram of the relationships between these components is reported in the figure at the beginning of this section.

Deployment

All the components of the service must be deployed together on a single node. The subsystem can be replicated on multiple hosts and scopes, but this does not guarantee a performance improvement, because performance depends directly on the combination of algorithms and computational infrastructures. There are no temporal constraints on the co-deployment of services and plug-ins. Every plug-in must be deployed on every instance of the service. The subsystem requires at least 2 GB of memory to run properly; a multi-core machine is preferred.
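The rule that every plug-in must be present on every instance lends itself to a simple consistency check. The sketch below is an assumption about how such a check could be written, not an existing tool: `missing_plugins` and the host and plug-in names are hypothetical, and the inventory of deployed plug-ins per host is supplied by hand.

```python
from typing import Dict, Set


def missing_plugins(instances: Dict[str, Set[str]]) -> Dict[str, Set[str]]:
    """Return, per instance, the plug-ins deployed elsewhere but missing there.

    An empty result means every instance carries the same set of plug-ins.
    """
    all_plugins: Set[str] = set().union(*instances.values())
    return {
        host: all_plugins - deployed
        for host, deployed in instances.items()
        if all_plugins - deployed
    }
```

For example, `missing_plugins({"node-a": {"X", "Y"}, "node-b": {"X"}})` reports that `node-b` lacks plug-in `Y`.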

The Service automatically discovers the available data sources and infrastructures by querying the d4Science Information System for the scope in which it is running.

Small deployment

Use Cases

Well suited Use Cases

The subsystem is particularly suited to providing an abstraction over statistical and data mining processes. Any data mining algorithm or evaluation procedure on data can be easily integrated into this subsystem by developing a plug-in.

The development of any plug-in for the Statistical Manager immediately extends the ability of the system to process new kinds of data.

List of Algorithms

The list of currently available algorithms can be found here