Difference between revisions of "Spatial Data Processing"

Revision as of 16:37, 23 April 2012

Overview

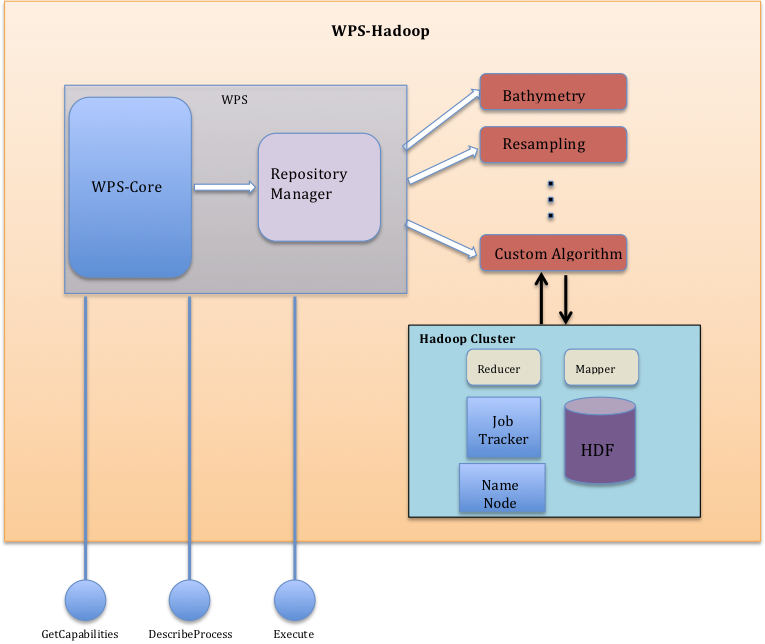

Geospatial Data Processing uses the OGC Web Processing Service (WPS) as its web interface, which allows user processes to be deployed dynamically. The WPS implementation chosen is the 52° North WPS (http://52north.org/communities/geoprocessing/wps/index.html), which supports the development and deployment of user “algorithms”. It has been demonstrated that such “algorithms” can be written to run on the distributed framework offered by Apache™ Hadoop™ MapReduce (http://hadoop.apache.org/mapreduce/).

Thus was born WPS-hadoop.

Key Features

WPS-hadoop offers a web interface through which external HTTP clients can access the algorithms, using the three kinds of requests supported by the 52° North WPS:

- The GetCapabilities operation provides access to general information about a live WPS implementation, and lists the operations and access methods supported by that implementation. 52N WPS supports the GetCapabilities operation via HTTP GET and POST.

- The DescribeProcess operation allows WPS clients to request a full description of one or more processes that can be executed by the service. This description includes the input and output parameters and formats and can be used to automatically build a user interface to capture the parameter values to be used to execute a process.

- The Execute operation allows WPS clients to run a specified process implemented by the server, using the input parameter values provided and returning the output values produced. Inputs can be included directly in the Execute request, or can reference web-accessible resources.
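As a rough illustration of the three operations, the sketch below builds the key-value-pair URLs for GetCapabilities and DescribeProcess and a minimal XML body for an Execute request sent via HTTP POST, following the OGC WPS 1.0.0 conventions. The endpoint URL, the process identifier and the input name are placeholders chosen for this example; they are not taken from WPS-hadoop.

```java
import java.io.UnsupportedEncodingException;
import java.net.URLEncoder;

// Sketch of WPS 1.0.0 request construction. The endpoint, process identifier
// and input name supplied by the caller are hypothetical placeholders.
class WpsRequests {

    // GetCapabilities via HTTP GET (key-value-pair encoding).
    static String getCapabilitiesUrl(String endpoint) {
        return endpoint + "?service=WPS&request=GetCapabilities";
    }

    // DescribeProcess via HTTP GET for a single process identifier.
    static String describeProcessUrl(String endpoint, String processId) {
        return endpoint + "?service=WPS&version=1.0.0&request=DescribeProcess"
                + "&identifier=" + encode(processId);
    }

    // Execute request body (for HTTP POST) carrying one literal input value.
    static String executeBody(String processId, String inputName, String value) {
        return "<wps:Execute service=\"WPS\" version=\"1.0.0\""
                + " xmlns:wps=\"http://www.opengis.net/wps/1.0.0\""
                + " xmlns:ows=\"http://www.opengis.net/ows/1.1\">"
                + "<ows:Identifier>" + escapeXml(processId) + "</ows:Identifier>"
                + "<wps:DataInputs><wps:Input>"
                + "<ows:Identifier>" + escapeXml(inputName) + "</ows:Identifier>"
                + "<wps:Data><wps:LiteralData>" + escapeXml(value)
                + "</wps:LiteralData></wps:Data>"
                + "</wps:Input></wps:DataInputs>"
                + "</wps:Execute>";
    }

    // Percent-encodes a query parameter value.
    private static String encode(String s) {
        try {
            return URLEncoder.encode(s, "UTF-8");
        } catch (UnsupportedEncodingException e) {
            throw new IllegalStateException(e); // UTF-8 is always available
        }
    }

    // Escapes the characters that would break XML element content.
    private static String escapeXml(String s) {
        return s.replace("&", "&amp;").replace("<", "&lt;").replace(">", "&gt;");
    }

    public static void main(String[] args) {
        String endpoint = "http://localhost:8080/wps/WebProcessingService"; // placeholder
        System.out.println(getCapabilitiesUrl(endpoint));
        System.out.println(describeProcessUrl(endpoint, "org.example.DemoAlgorithm"));
        System.out.println(executeBody("org.example.DemoAlgorithm", "inputFile", "/data/a.txt"));
    }
}
```

The strings can be handed to any HTTP client; whether a given server also accepts Execute via GET depends on the implementation, so the sketch keeps Execute as a POST body.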

Design

We extended the AbstractAlgorithm class to create a new abstract class, HadoopAbstractAlgorithm. Its business logic, hidden from the developer, executes the process by creating a Job for the Hadoop framework.

Develop a custom process

The custom process class has to extend HadoopAbstractAlgorithm, which lets you specify the Hadoop configuration parameters (e.g. from XML files), the Mapper and Reducer classes, the input paths, the output path, all the operations needed before running the process, and the way to retrieve the results. When extending HadoopAbstractAlgorithm, you need to implement these simple methods:

- protected Class<? extends Mapper<?, ?, LongWritable, Text>> getMapper()

This method returns the class to be used as Mapper;

- protected Class<? extends Reducer<LongWritable, Text, ?, ?>> getReducer()

This method returns the class to be used as Reducer (if one exists);

- protected Path[] getInputPaths(Map<String, List<IData>> inputData)

This method tells the business logic the exact input path(s) to pass to the Hadoop framework;

- protected String getOutputPath()

This method tells the business logic the exact output path to pass to the Hadoop framework;

- protected Map buildResults()

This method is called by the business logic to build the output that the WPS expects;

- public void prepareToRun(Map<String, List<IData>> inputData)

This method must contain all the operations to perform before running the Hadoop Job (e.g. WPS input validation);

- protected JobConf getJobConf()

This method allows the user to specify all the configuration resources for the Hadoop framework (e.g. XML configuration files).
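The division of work described above can be pictured as a template method: the base class owns the job-launching logic and calls the hooks that the developer fills in. The sketch below is self-contained and uses simplified stand-ins (plain strings instead of the Hadoop Path, JobConf, Mapper and Reducer types, and no real job submission), so it mirrors only the method names listed above, not the actual HadoopAbstractAlgorithm source. The call order inside run(), the lastJob field and the WordCountSketch class are assumptions made for illustration.

```java
import java.util.Arrays;
import java.util.Collections;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Simplified stand-in for HadoopAbstractAlgorithm. Plain strings replace the
// Hadoop Path, JobConf, Mapper and Reducer types, and no job is submitted.
abstract class HadoopAlgorithmSketch {
    // Description of the last "job" assembled by run(), kept for inspection.
    protected Map<String, String> lastJob = Collections.emptyMap();

    protected abstract String getMapper();
    protected abstract String getReducer(); // null when no reducer is used
    protected abstract List<String> getInputPaths(Map<String, List<String>> inputData);
    protected abstract String getOutputPath();
    protected abstract Map<String, String> buildResults();
    public abstract void prepareToRun(Map<String, List<String>> inputData);
    protected abstract Map<String, String> getJobConf();

    // Business logic hidden from the developer; the call order is an assumption.
    public final Map<String, String> run(Map<String, List<String>> inputData) {
        prepareToRun(inputData);
        Map<String, String> job = new LinkedHashMap<>(getJobConf());
        job.put("mapper", getMapper());
        if (getReducer() != null) {
            job.put("reducer", getReducer());
        }
        job.put("input", String.join(",", getInputPaths(inputData)));
        job.put("output", getOutputPath());
        lastJob = job; // a real implementation would launch the Hadoop job here
        return buildResults();
    }
}

// Hypothetical custom process: the developer only fills in the hooks.
class WordCountSketch extends HadoopAlgorithmSketch {
    @Override
    public void prepareToRun(Map<String, List<String>> inputData) {
        // Example of WPS input validation before the job starts.
        if (!inputData.containsKey("inputFiles")) {
            throw new IllegalArgumentException("missing WPS input 'inputFiles'");
        }
    }

    @Override
    protected Map<String, String> getJobConf() {
        // Placeholder for settings that would normally come from XML files.
        return Collections.singletonMap("job.name", "word-count-sketch");
    }

    @Override protected String getMapper() { return "TokenMapper"; }
    @Override protected String getReducer() { return "SumReducer"; }

    @Override
    protected List<String> getInputPaths(Map<String, List<String>> inputData) {
        return inputData.get("inputFiles");
    }

    @Override protected String getOutputPath() { return "/tmp/wps-hadoop/out"; }

    @Override
    protected Map<String, String> buildResults() {
        // Report where the output was written; a real process would read it back.
        return Collections.singletonMap("result", getOutputPath());
    }

    public static void main(String[] args) {
        Map<String, List<String>> in = Collections.singletonMap(
                "inputFiles", Arrays.asList("/data/a.txt", "/data/b.txt"));
        WordCountSketch process = new WordCountSketch();
        System.out.println(process.run(in));
        System.out.println(process.lastJob);
    }
}
```

Keeping run() final in the base class is one way to guarantee that validation always happens before the job is assembled; a real subclass would return Hadoop classes and paths from the same hooks.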