Difference between revisions of "GRS2"

Revision as of 16:43, 13 December 2010

gRS

Introduction

The goal of the gRS framework is to enable point-to-point producer-consumer communication. To achieve this and to connect collocated or remotely located actors, the framework shields the two parties from technology-specific knowledge and limitations. Additionally, it can provide value-adding functionality upon request to enhance the clients' communication capabilities.

While offering this functionality, the framework imposes minimal restrictions on the clients' design. Because it provides an interface similar to commonly used programming structures such as a collection and a stream, client programs can easily incorporate it.

Furthermore, since one of the main goals of the gRS is to provide location-independent services, it is built modularly to allow a number of different technologies to be used for transport and communication mediation, depending on the scenario. For communication through trusted channels, a clear TCP connection can be used for fast delivery. Authentication of the communicating parties is built into the framework and can be applied at the level of the communication packet. Where more secure connections are required, the communication stream can be fully encrypted. In cases of firewalled communication, an HTTP transport mechanism can be incorporated and supplied by the framework without a single change in the clients' code. For collocated actors, in-memory references can be passed directly from producer to consumer. In none of these cases does either party need to change its behavior.

In a disjoint environment, where the producer and consumer are not custom-made to interact only with each other, or where the needs of the consumer change from invocation to invocation, the producer may generate more data than the consumer needs, even within the scope of a single record. The gRS enables fine-grained transport not just at the level of a record but also at the level of a record field. A record containing more than one field may be needed in its entirety by its consumer, while at the next interaction only a single field of the record may be needed. In such a situation a consumer can retrieve only the payload it is interested in without having to deal with any additional payload. Even at the level of a single field, the transported data can be further reduced to just the needed bytes, instead of transferring a large amount of data that will in the end be discarded without being used.

In modern systems it is common for the producer's output to be already accessible through some protocol. The gRS has a very extensible design regarding the way the producer serves its payload. A number of protocols can be used, such as FTP, HTTP, network storage and the local filesystem, and more can be implemented easily and modularly.

Records and Record Pools

Record Pool

The record pool is the main data store of the gRS framework and its main synchronization point. Any record pool implementation must conform to a specific interface. Different implementations may use different technologies to store their payload. Currently there is one available implementation, which keeps all the records in an in-memory list. By default the records are consumed in the order in which they are produced, although consumers can choose to access them in random order. In addition to the list-based storage of the records, a map-based interface is provided for easy access to records by their ids.

A record pool is associated with a number of management classes that observe, monitor and facilitate the operations that can be performed on the pool.

- DiskRecordBuffer – A disk buffer that can be used as temporary storage for records and fields as will be explained in later sections.

- HibernationObserver – An observer of events of a specific type that manages temporary storage to and retrieval from a disk buffer. More will be explained in later sections.

- DefinitionManager – A common container class that manages definitions of records and fields associated with the pool as will be explained in later sections.

- PoolState – A class holding events that can be emitted by the pool or forwarded through the pool. More will be detailed in later sections.

- PoolStatistics – A class holding and updating usage statistics of the record pool as will be explained in later sections.

- LifeCycleManager – Policies that govern the lifetime of the record pool can be defined and implemented through this class as will be detailed in later sections.

Pool instantiation is handled internally by the framework and is driven by a set of configuration parameters. These parameters include the following information.

- Transport directive – Whenever a pool is transported to a remote location, a directive can be set to describe the preferred transport method for the pool's items. This directive is used for all its records and their fields unless some record or field has specifically requested another preferred transport type. The available directives are full and partial. The former implies that the payload of all records and fields is made available to the remote site as soon as they are transferred. The latter does not initially send any payload for any of the fields unless the field attributes specify that some prefetching is to be done.

- Pool Type – The pool type property indicates the type of pool to be instantiated. Since pool instantiation is done internally by the framework, the available pool types must be known to the framework so that it can instantiate them and handle cases that require different parametrization or configuration. The only currently available pool type is the memory list pool.

- Pool Synchronization – When a record pool is consumed remotely, a synchronization interval can be set to avoid continuous unnecessary communication between the remote locations. This interval is used by the synchronization protocol, as will be explained in later sections. Whether the interval is actually used depends on the implementation and synchronization capabilities of the protocol; the synchronization hint is available to the protocols that need it.

- Incomplete Pool Synchronization factor – During synchronization of remote pools situations will arise where one of the two parties will be requesting information that the other party will not be able to respond immediately to. In such cases one may need to alter the synchronization interval hint to require more frequent or less frequent communication. This is performed through the factor provided through this parameter.

Two cases can be distinguished for the consumption of a record pool. The first is the degenerate one, where the producer and consumer are collocated within the boundaries of the same VM. They then share a memory address space, and references to shared classes can be used. In this situation, for optimization purposes, the reference to the authored record pool is simply passed to the consumer. The consumer directly sees the record pool the producer is authoring and accesses the references the producer creates, and no marshaling is performed. Synchronization is performed directly through the events that producer and consumer emit, and there is a single instance of each of the pool's configuration and management classes. The second case is that of a different address space. This may mean the same physical machine but a different process, or a different host altogether. In that case the reference to the authored record pool cannot be shared and a new instance must be created. A synchronization protocol stands between the two instances of the record pool and, based on the produced events, transparently handles their synchronization. The two instances are created with the same configuration parameters and therefore have exactly the same behavior. The mediation of the remote pools and their synchronization are detailed in later sections.

Buffering

The gRS is meant to mediate between two sides: a producing side and a consuming side. Either side may have inherent knowledge of its processing requirements and its production or consumption needs. A producer, for example, may know that producing the next record requires processing that takes a certain amount of time. A consumer may likewise know that processing its input takes some amount of time. To optimize their communication, they may deem it necessary that some amount of data is always ready and available to the other party. The Producer may request that, no matter how many records the Consumer has actually requested so far, some number of records is always already produced and readily available in case the Consumer requests them. The Consumer, respectively, may specify that some number of records should already be available on its side, whether or not it has explicitly requested them, so that when it does request them it does not have to wait for them to be transferred.

This is achieved through buffering policies that may apply to either, both or neither of the two sides. Each actor can specify the buffering policy it wants to have applied. The framework tries to honor the actor's request by always keeping the specified number of records available in the case of the Consumer, or already produced in the case of the Producer. The buffer policy is configurable through the BufferPolicyConfig configuration class, which exposes three configurable values.

- Use buffering – Whether or not the client wants buffering enabled. If buffering is disabled, the consumer has to request explicitly when and how many more items it wants delivered, and then wait until they are produced (depending on the Producer's behavior) and transferred to it (depending on the mediating proxy and the relative position of producer and consumer).

- Number of records to have buffered – The number of records the buffer policy will try to keep for each side. For the Writer side, this means that the buffer policy emits items requested events to the client to always keep it ahead of the number of items the Reader has requested by the specified number. For the Reader side, it means that the policy requests more items from the Writer than the number the consumer has actually requested. Since the producer and consumer are also active while this process takes place, the buffering policy may not always be fully satisfied, depending on the clients' usage. At some point the number of items the producer has produced may be greater or less than what the buffering policy needs, and the consumer may have more or fewer items available than the policy needs. The policy makes a best-effort attempt to stay within the specified bounds.

- Flexibility Factor – Having an absolute number of items as a buffering policy attribute means that a lot of traffic can be produced from the Reader to the Writer and from the Writer to the Producer client. If, for example, the Reader has requested a buffer of 20 records ahead of what it is consuming, then the moment it requests the next record, the request is served from the buffered records and a request for a new item is immediately sent to the Writer. This means a new event for each record the reader consumes. Analogous examples can be made for the Writer's side. The flexibility factor allows the policy to be softened: it specifies that some fraction of the buffer may be emptied without an immediate request to refill it. For example, a flexibility factor of 0.5 lets the buffer become half empty before an event is emitted to refill the missing half.

Another interesting point is event flooding. Suppose the buffer becomes half empty and an event is emitted. Before the needed items are supplied, the consumer requests another record. The buffering policy is consulted and decides that half+1 items are now needed. If this process continued, then by the time the initially requested items were delivered the Reader would have requested a large number of items, quite possibly far more than actually needed. To protect itself from this situation, whenever a Reader or a Writer is instructed by the BufferPolicy to request some records, it temporarily suspends the buffer until the requested items have been made available. Until then the client works with the items still available in the buffer without producing unnecessary requests. Once the initially requested number of records has arrived, the buffer rechecks what is now needed and may produce a new request event.
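The interplay of the three configuration values and the suspension that guards against event flooding can be sketched as follows. This is only an illustration under stated assumptions: the class BufferPolicySketch and its methods are hypothetical names, not the BufferPolicyConfig API, and the rounding of the refill threshold is a choice made for the example.

```java
/** Hypothetical reader-side buffer policy; names and rounding are assumptions, not the gRS API. */
class BufferPolicySketch {
    private final boolean useBuffering;
    private final int recordsToBuffer;       // number of records to keep buffered
    private final double flexibilityFactor;  // fraction of the buffer allowed to empty
    private boolean suspended = false;       // true while a request is outstanding
    private int pending = 0;                 // records still expected for that request

    BufferPolicySketch(boolean useBuffering, int recordsToBuffer, double flexibilityFactor) {
        this.useBuffering = useBuffering;
        this.recordsToBuffer = recordsToBuffer;
        this.flexibilityFactor = flexibilityFactor;
    }

    /** Returns how many records to request now, or 0 if no request event should be emitted. */
    int recordsToRequest(int available) {
        if (!useBuffering || suspended) {
            return 0; // a suspended buffer never emits a second request (no flooding)
        }
        int missing = recordsToBuffer - available;
        int threshold = (int) Math.ceil(recordsToBuffer * flexibilityFactor);
        if (missing <= 0 || missing < threshold) {
            return 0; // still within the tolerated emptiness
        }
        suspended = true; // wait until the requested records have arrived
        pending = missing;
        return missing;
    }

    /** Called when requested records arrive; resumes the buffer once the request is covered. */
    void recordsArrived(int count) {
        if (!suspended) {
            return;
        }
        pending -= count;
        if (pending <= 0) {
            pending = 0;
            suspended = false;
        }
    }
}
```

With 20 buffered records and a flexibility factor of 0.5, this sketch emits nothing while 15 records remain, requests 10 once only 10 remain, and stays silent for further consumptions until those 10 have arrived.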

Definition Management

All records that are added to a record pool must always contain a definition. A record definition (RecordDef) contains information that enables the framework to handle the record in a uniform manner regardless of its actual implementation. The contents of a record definition are discussed in a different section. Similarly, every field that may be contained in a record must also contain a field definition (FieldDef) for the same purpose.

The common case, as expected, is that most record pools contain records with largely similar definitions. Similarly, each record is expected to contain fields with largely similar definitions. Having each record and each field of a record carry its own copy of the definition would be an obvious waste of resources and an overhead that can easily be avoided. Each record pool therefore contains a DefinitionManager. This class is the placeholder of all definitions of records and fields within that pool.

Record definitions and field definitions can be added and removed from the manager. Every time a definition is added to the manager, the existing definitions are checked. If the definition is found to be already contained in the manager's collection, a reference counter for this definition is incremented and a reference to this already stored definition is returned to the caller. This reference is then stored in the respective record or field thereby sharing the definition with all other elements that have the same definition. In case a definition must be disposed, instead of destroying the actual definition the operation is passed to the manager which will locate the referenced definition and decrease its reference counter. In case the reference counter has reached 0, the definition can be disposed.

Although this approach serves its purpose well and efficiently, it has two implications. Firstly, after a record has been added to a pool and its definition shared with the rest of the records, its definition cannot be modified. Although this is a side effect, it is not really a limitation, as the framework must impose this restriction anyway. After a record is added to a pool it is immediately available to the pool's consumers. Changing any of its properties does not necessarily mean that the consumer will be notified of the change, as the consumer might have already consumed the record and moved on. The second implication is the need to identify when two definitions are considered equal. Equality is handled at the level of the RecordDef and FieldDef classes and is simply a matter of identifying the distinct values that make each definition unique.
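The reference counting and value-based equality described above can be sketched as follows. The class names, the two fields used for equality and the method signatures are assumptions made for illustration; the contents of the actual RecordDef and the interface of the actual DefinitionManager are not given here.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.Objects;

/** Hypothetical stand-in for a record definition; equality is based on its values. */
final class RecordDefSketch {
    final String name;
    final int fieldCount;

    RecordDefSketch(String name, int fieldCount) {
        this.name = name;
        this.fieldCount = fieldCount;
    }

    @Override
    public boolean equals(Object o) {
        if (!(o instanceof RecordDefSketch)) return false;
        RecordDefSketch other = (RecordDefSketch) o;
        return name.equals(other.name) && fieldCount == other.fieldCount;
    }

    @Override
    public int hashCode() {
        return Objects.hash(name, fieldCount);
    }
}

/** Hypothetical manager that shares equal definitions and counts their users. */
class DefinitionManagerSketch {
    private static final class Entry {
        final RecordDefSketch def;
        int refs;
        Entry(RecordDefSketch def) { this.def = def; }
    }

    private final Map<RecordDefSketch, Entry> entries = new HashMap<>();

    /** Returns the shared instance for this definition and increments its counter. */
    RecordDefSketch add(RecordDefSketch def) {
        Entry e = entries.get(def);
        if (e == null) {
            e = new Entry(def);
            entries.put(def, e);
        }
        e.refs++;
        return e.def;
    }

    /** Decrements the counter; returns true if the definition was actually disposed. */
    boolean remove(RecordDefSketch def) {
        Entry e = entries.get(def);
        if (e == null) return false;
        if (--e.refs == 0) {
            entries.remove(def);
            return true;
        }
        return false;
    }

    int size() {
        return entries.size();
    }
}
```

Two records that add equal definitions end up holding the same instance, and the definition is dropped from the manager only when the last of them removes it.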

Pool Events

The whole function of the gRS is event based, and this package is the heart of the framework. The events declared here define the flow of control governing the lifetime of a record pool: its creation, consumption, synchronization and destruction. Each record pool has an associated PoolState class in which the events that the pool can emit are defined and through which an interested party can register for an event. The PoolState keeps the events in a named collection through which a specific event can be requested.

The event model used is a derivative of the Observer – Observable pattern. Each of the events declared in the package and stored in the PoolState event collection extends java Observable. Observers can register for state updates of the specific instance of the event. This means that there is a single point of update for each event, which raises issues of concurrency, synchronization and race conditions. The framework therefore uses the Observables as the point for Observers to register with, but the actual state update is not carried by the Observable the Observers have registered with. Instead it is carried by another instance of the same event, passed as an argument in the respective update call. That way the Observable instances originally stored in the PoolState act as the registration point, while a new instance of the Observable, created by the emitting party, is the one that actually carries the notification data.

All events extend the PoolStateEvent, which in turn extends Observable and implements the IProxySubPacket interface. The latter is used during transport to serialize and deserialize an event and is explained in later sections. The events that are defined and form the basis of the framework are the following:
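The split between a registration instance and a carrier instance can be illustrated with java.util.Observable (which still exists but has been deprecated since Java 9). This is a minimal sketch: ItemAddedEventSketch, its count field and its emit method are hypothetical and do not reproduce the actual gRS event classes.

```java
import java.util.Observable;
import java.util.Observer;

/** Hypothetical event: one instance serves as registration point, another carries the data. */
class ItemAddedEventSketch extends Observable {
    final int count; // notification data; meaningful only on carrier instances

    ItemAddedEventSketch(int count) {
        this.count = count;
    }

    /** Notifies the observers registered on this instance, handing them the carrier. */
    void emit(ItemAddedEventSketch carrier) {
        setChanged();             // Observable only notifies after setChanged()
        notifyObservers(carrier); // the carrier arrives as the second argument of update()
    }
}

class ItemAddedEventDemo {
    public static void main(String[] args) {
        // Stored once (for example in a pool state) and used only for registration.
        ItemAddedEventSketch registration = new ItemAddedEventSketch(0);

        Observer reader = (source, arg) -> {
            ItemAddedEventSketch carrier = (ItemAddedEventSketch) arg;
            System.out.println("records added: " + carrier.count);
        };
        registration.addObserver(reader);

        // The emitting party creates a fresh instance that carries the notification data.
        registration.emit(new ItemAddedEventSketch(3));
    }
}
```

Because each notification travels in its own instance, the shared registration instance never has to hold mutable notification state.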

- MessageToReaderEvent – This event is sent with a defined recipient. It can be sent either by some component of the framework to notify the consumer client of some condition, such as an error that the consumer may want to be notified of, or by the client of the writer, the producer itself. The message is composed of two parts. One is a simple string payload that can contain any kind of information the sender may want to include. The other part is an attachment. The sender of the message can attach an object implementing the IMarshlableObject interface, and the message will be delivered to the consumer along with the attachment object as submitted.

- MessageToWriterEvent – This event is sent with a defined recipient. It can be sent either by some component of the framework to notify the producer client of some condition, such as an error that the producer may want to be notified of, or by the client of the reader, the consumer itself. The message is composed of two parts. One is a simple string payload that can contain any kind of information the sender may want to include. The other part is an attachment. The sender of the message can attach an object implementing the IMarshlableObject interface, and the message will be delivered to the producer along with the attachment object as submitted.

- ItemAddedEvent – This event is generated by the writers when a number of records are inserted in the record pool. Based on this event the reader can evaluate when there are more records for him to consume.

- ItemRequestedEvent – This event is generated by the readers to request a number of records to be produced. Based on this the writer can evaluate when he should produce more records to be consumed.

- ProductionCompletedEvent – This event is generated by the writers when they finish authoring the record pool. It can be used by the readers to determine whether they can continue requesting records beyond the ones already available to them. This event does not imply that the producer is disposed. The Writer instance is still available to respond to control signals as needed. The producer can still use the record pool to exchange messages with the consumer.

- ConsumptionCompletedEvent – This event is generated by the readers when they have finished consuming the record pool. It can be used by the producers to stop producing more records and stop authoring the record pool. This event does not imply that the consumer is disposed. The Reader can still be used to access records already made available to it, and the records that have already been accessed can still be used. The producer and consumer can still exchange messages.

- ItemTouchedEvent – This event is emitted whenever a record is accessed in some way: when it is modified, transferred or read, when some of its payload is moved from the producer to the consumer, and so on. It is used by the pool life cycle management for pool housekeeping, as explained in a later section.

- ItemNotNeededEvent – This event is emitted by a consumer whenever it wants to declare that it is done accessing the record and all its payload and that the record is no longer needed. It is used by the pool life cycle management for pool housekeeping, as explained in a later section. After this event is emitted for some record, that record is a candidate for disposal at any time the framework sees fit.

- FieldPayloadRequestedEvent – The framework supports multiple transfer functionalities and parameterizations. In all but the simple cases of collocated producers and consumers, or of the full payload being transferred immediately across the wire, the payload of a record's field may need to become available to the consumer gradually through partial transfer. For a part of the payload to be transferred, the consumer needs to request the additional payload, either explicitly through a call or implicitly through one of the framework's mediation utilities. The request is made through this event, which asks for a number of bytes of a specific field of a specific record to be made available. There are two ways in which the framework can handle this event. The first case is when the producer and consumer are connected through a LocalProxy. Both actors are then collocated and the payload of every field is already available to the consumer. In this case each writer must be able to catch these events and immediately respond with a FieldNoMorePayloadEvent to indicate to the consumer that all the payload of the field is already available for consumption. The other case is some other type of mediation, where there must be some kind of transport between the two actors. In this case the protocol connecting the producer side of the proxy must catch the event, retrieve the needed payload from the respective field and respond with the appropriate event: FieldPayloadAddedEvent if more payload has been made available, or FieldNoMorePayloadEvent if no more payload can be made available because all of it has already been transferred.

- FieldPayloadAddedEvent – This event is sent as a response to a FieldPayloadRequestedEvent when more data has been made available to the consumer as requested, as sketched above.

- FieldNoMorePayloadEvent – This event is sent as a response to a FieldPayloadRequestedEvent when no more data can be sent to the consumer because all the available data is already there, as sketched above.

- LocalPoolItemAddedEvent – This event indicates that a new item has been added to the local pool, that is, the pool local to the component that catches the event. For example, a reader catching the event will interpret it as a new item created by the writer and made available on the reader side in response to an ItemRequestedEvent that the reader sent. This event is used by the reader and writer classes to update their buffer policies.

- LocalPoolItemRemovedEvent – This event indicates that an item has been retrieved from the local pool, that is, the pool local to the component that catches the event. For example, a writer catching the event will interpret it as follows: an item previously requested by the reader, and created and added to the pool by the writer, has been transferred to the reader, who consumed it. This event is used by the reader and writer classes to update their buffer policies.

- ItemHibernateEvent – During its lifetime a record may need to be kept for later use by a consumer although it is not needed for the time being, and it should then be put aside to free up resources. For this purpose any record that is already contained in a record pool, along with its fields, can be hibernated. The record is persisted to disk and can subsequently be woken up in its initial state. When a record is marked to be hibernated this event is emitted, and the pools that contain it (both the producer and the consumer pool, if the pools are remote and synchronized) catch the event and hibernate the record. The processing of this event may be asynchronous.

- ItemAwakeEvent – At some point a hibernated record may be needed again. The record on both the consumer and the producer side must then be awakened and restored to the exact state it was in before the hibernation. This event is emitted and both pools restore the record and its fields from the disk buffer.

- DisposePoolEvent – This event is emitted when the record pool and all its records are no longer needed. The dispose method is called either because the producer decided to revoke the produced record pool or because the reader decided that it is done consuming the record pool. Since the communication is point to point, once the consumer stops consuming, the record pool is fully disposed. The record pool disposal disposes each record, and if a record is hibernated it is woken up before it is disposed to make sure each field performs its cleanup. Once this event is emitted, all state associated with the record pool is cleaned up, whether it is data within the record pool itself or registry entries, open sockets, etc.

Record Hibernation

A record pool may grow to a large size. In practice there is little to limit the size of the record pool a producer may need to create, other than the limitations imposed by the available resources. The gRS attempts to facilitate the creation and consumption of big sets of records with as few resources as technically possible. One aspect of this approach is the record hibernation facility.

Records that have been consumed but should not yet be disposed of (which is what marking them as not needed through an ItemNotNeededEvent would allow) can be put in a state of minimum memory requirements. Whenever the record and its fields are needed again, they can become active once more, reset to their exact previous state and ready to be used. This state is hibernation.

Each record pool has an associated DiskRecordBuffer. Items of the pool can persist themselves through this instance and put themselves in a state of low resource consumption. If and when they are needed again, they can be woken up, deserialize the state they previously persisted and make themselves usable again.

As stated in the pool events section, the hibernation and awakening of records is handled through events. Each record pool registers a HibernationObserver instance to receive the ItemHibernateEvent and ItemAwakeEvent events that are emitted through it. Whenever this observer receives one of these events, it synchronously goes to the disk buffer and performs the requested action. When a record pool or a single record is disposed, any hibernated record is first awakened. This way each field and record can perform its own cleanup.
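The persist-and-restore cycle behind hibernation can be sketched with standard Java serialization to a temporary file. This is an assumption-laden illustration: DiskRecordBufferSketch and its methods are hypothetical, and the storage format of the actual DiskRecordBuffer is not described here.

```java
import java.io.IOException;
import java.io.ObjectInputStream;
import java.io.ObjectOutputStream;
import java.io.Serializable;
import java.io.UncheckedIOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.HashMap;
import java.util.Map;

/** Hypothetical disk buffer: persists record state on hibernate, restores it on awake. */
class DiskRecordBufferSketch {
    private final Map<String, Path> files = new HashMap<>();

    /** Writes the record state to a temporary file so the in-memory copy can be released. */
    void hibernate(String recordId, Serializable state) {
        try {
            Path file = Files.createTempFile("record-", ".bin");
            try (ObjectOutputStream out = new ObjectOutputStream(Files.newOutputStream(file))) {
                out.writeObject(state);
            }
            files.put(recordId, file);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    /** Reads the persisted state back and removes the temporary file. */
    Object awake(String recordId) {
        Path file = files.remove(recordId);
        if (file == null) {
            throw new IllegalStateException("record is not hibernated: " + recordId);
        }
        try {
            try (ObjectInputStream in = new ObjectInputStream(Files.newInputStream(file))) {
                return in.readObject();
            } finally {
                Files.deleteIfExists(file);
            }
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        } catch (ClassNotFoundException e) {
            throw new IllegalStateException(e);
        }
    }

    boolean isHibernated(String recordId) {
        return files.containsKey(recordId);
    }
}
```

In this sketch an observer handling an ItemHibernateEvent would call hibernate, and one handling an ItemAwakeEvent would call awake; disposing a hibernated record would first call awake so that the restored fields can clean up after themselves.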

Life Cycle Management

During the life cycle of a record pool there might be situations in which the actors need the pool, or part of the pool, to be disposed without necessarily waiting for a dispose pool event to be emitted. One example of this is the ItemNotNeededEvent presented in previous sections. The life cycle of a record pool is separated into two stages. The first stage is the pool's active span. Policies can be defined to be applied during this period, and their aggregated decision can keep the pool in this phase or move it to the next one. In the next phase of its life cycle the pool is regarded as a candidate for disposal. Policies can be applied in this phase to keep the pool active even at this stage, but once the policies' aggregated decision dictates it, the pool is disposed along with all of its records. During both phases a third check also takes place, using the policies defined not for the pool as a whole but for each record. An aggregated decision is made for each record, and if this decision dictates it the record can be disposed even if none of the clients has explicitly requested it through an ItemNotNeededEvent.

A class implementing a life cycle policy must implement the IlifeCyclePolicy interface. This interface defines the meaning of a decision: whether the policy decides that the inspected object should be kept, that it should be destroyed, or that it cannot make a decision. Life cycle policies are of two types: policies that can be applied to a record pool and policies that can be applied to a record. These are distinguished by two extensions of IlifeCyclePolicy, namely IlifeCyclePoolPolicy and IlifeCycleRecordPolicy. Other than tagging the type of object the policy can be applied to, each extension defines the main entry point to the policy's decision making.

For the policies to be applied to a pool or to a record, statistics must be kept for all items of the record pool so that the decision on each item's life cycle can be informed. Statistics are kept for the record pool as well as for all of its records by monitoring the events exposed by the record pool. A PoolStatistics instance is initialized for each record pool and is registered for all the events exposed by the pool. Whenever an event is caught, it is processed and the statistics kept by the instance are updated. The statistics kept for the pool are the following:
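A three-valued decision and one possible way of aggregating several policies can be sketched as follows. The type names are hypothetical, and the aggregation rule (any KEEP wins, otherwise any DESTROY wins, otherwise UNDECIDED) is an assumption made for this example; the rule the framework actually applies is not specified here.

```java
import java.util.List;

/** Hypothetical decision values: keep, destroy, or no opinion. */
enum DecisionSketch { KEEP, DESTROY, UNDECIDED }

/** Hypothetical policy entry point, generic over the inspected object (pool or record). */
interface LifeCyclePolicySketch<T> {
    DecisionSketch evaluate(T inspected);
}

final class PolicyAggregatorSketch {
    private PolicyAggregatorSketch() { }

    /** Assumed rule: one KEEP vetoes disposal; otherwise one DESTROY is enough. */
    static <T> DecisionSketch aggregate(List<LifeCyclePolicySketch<T>> policies, T inspected) {
        boolean destroy = false;
        for (LifeCyclePolicySketch<T> policy : policies) {
            DecisionSketch decision = policy.evaluate(inspected);
            if (decision == DecisionSketch.KEEP) {
                return DecisionSketch.KEEP;
            }
            if (decision == DecisionSketch.DESTROY) {
                destroy = true;
            }
        }
        return destroy ? DecisionSketch.DESTROY : DecisionSketch.UNDECIDED;
    }
}
```

A policy that cannot decide simply returns UNDECIDED and leaves the outcome to the other policies in the list.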

- If the pool is being disposed

- The life cycle phase the pool is now in

- The time the pool was created

- The time the consumption of the pool was completed

- The time the pool production was completed

- The number of records added to the pool

- The number of records requested by the pool

- The number of records touched in the pool

- The number of messages transferred through the pool

- The time the last record was produced

- The time the last record was requested

- The time the last record was touched

- The time the last message was transferred

- The mean record production time

- The mean record request time

- The mean record touched time

- The mean message transferred time

In addition to the statistics kept for the record pool in general, statistics are kept for each record of the record pool. These statistics are the following:

- The time the record was created

- The time the record was marked as not needed

- The last time the record was touched

Based on these statistics a number of life cycle policies have been defined, and more can be added. Each policy defines its scope, which can be either Pool or Record. A policy might be applicable to either of the two or to both. The types of policies currently defined are:

- LastUsed

- UsageInterval

- UsageRate

- ProductionConsumptionCompleted

The first three currently have only Pool scope, while the fourth has both Record and Pool scope. In more detail, there are the following five implementations of life cycle policies.

- ConsumptionCompletedRecordPolicy – This policy has a Record scope and a policy type of ProductionConsumptionCompleted. The policy will respond that a record is a candidate for destruction if the record has been marked as not needed through an ItemNotNeededEvent or if the time since the record was last touched exceeds some configurable threshold.

- ProductionConsumptionCompletedPoolPolicy – This policy has a Pool scope and a policy type of ProductionConsumptionCompleted. The policy will respond that a pool has exceeded the policy defined grace period if the production of the pool has been completed (ProductionCompletedEvent), the consumption of the pool has been completed (ConsumptionCompletedEvent), and a configurable time threshold has passed since both the consumption and the production were completed. Depending on the life cycle phase of the record pool in which the policy is used, its outcome is used differently: it contributes either to moving the pool to the next life cycle phase or to disposing it.

- UsageIntervalLifeCyclePoolPolicy – This policy has a Pool scope and a policy type of UsageInterval. The policy will respond that a pool has exceeded the policy defined grace period if the mean message transfer time, the mean record production time, the mean record touch time and the mean record request time all have a value less than some configurable threshold value. Depending on the life cycle phase of the record pool in which the policy is used, its outcome is used differently: it contributes either to moving the pool to the next life cycle phase or to disposing it.

- UsageRateLifeCyclePoolPolicy – This policy has a Pool scope and a policy type of UsageRate. The policy will respond that a pool has exceeded the policy defined grace period if the message rate, the record production rate, the record touch rate and the record request rate all have values less than some configurable threshold value. Depending on the life cycle phase of the record pool in which the policy is used, its outcome is used differently: it contributes either to moving the pool to the next life cycle phase or to disposing it.

- LastUsedLifeCyclePoolPolicy – This policy has a Pool scope and a policy type of LastUsed. The policy will respond that a pool has exceeded the policy defined grace period if the time passed since the last message, the time since the last record production, the time since the last record touch and the time since the last record request all have values greater than some configurable threshold value. Depending on the life cycle phase of the record pool in which the policy is used, its outcome is used differently: it contributes either to moving the pool to the next life cycle phase or to disposing it.
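As an illustration of how such a policy could be evaluated, the following sketch mimics the LastUsed rule. The type names, the method signature and the mapping of "grace period exceeded" to a DESTROY decision are assumptions made for the example; the actual gRS interfaces may look different.

```java
// Hypothetical names throughout; only the LastUsed rule itself follows the text.
enum PolicyDecision { KEEP, DESTROY, UNDECIDED }

// Snapshot of the four "last ..." timestamps kept in the pool statistics (epoch millis).
final class PoolUsageSnapshot {
    final long lastMessage;
    final long lastProduced;
    final long lastTouched;
    final long lastRequested;

    PoolUsageSnapshot(long lastMessage, long lastProduced, long lastTouched, long lastRequested) {
        this.lastMessage = lastMessage;
        this.lastProduced = lastProduced;
        this.lastTouched = lastTouched;
        this.lastRequested = lastRequested;
    }
}

// Assumed shape of the entry point of a pool scoped policy.
interface PoolPolicySketch {
    PolicyDecision evaluate(PoolUsageSnapshot stats, long nowMillis);
}

final class LastUsedPoolPolicySketch implements PoolPolicySketch {
    private final long thresholdMillis;

    LastUsedPoolPolicySketch(long thresholdMillis) {
        this.thresholdMillis = thresholdMillis;
    }

    @Override
    public PolicyDecision evaluate(PoolUsageSnapshot stats, long nowMillis) {
        long[] lastTimes = {
            stats.lastMessage, stats.lastProduced, stats.lastTouched, stats.lastRequested
        };
        for (long last : lastTimes) {
            if (nowMillis - last <= thresholdMillis) {
                // At least one kind of activity is recent: grace period not exceeded.
                return PolicyDecision.KEEP;
            }
        }
        // All four idle times exceed the threshold.
        return PolicyDecision.DESTROY;
    }
}
```

In the framework the outcome is not acted upon directly; as described above, it is combined with the current life cycle phase of the pool.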

In order to apply the life cycle policies, each record pool has an associated LifeCycleManager instance. The configuration of the manager defines the policies to be used as well as some manager specific properties. Whenever a record pool is remotely instantiated at a consumer site, the life cycle manager, along with all its properties and policies, is cloned to the remote pool. A life cycle manager can be parametrized with two attributes: whether some default life cycle policies should be used if none are defined, and the interval at which the policies will be evaluated. If no life cycle policies are defined and the default ones are not used either, the pool and its records will only be reclaimed on a DisposePoolEvent. This is also the case for records that are marked as not needed through ItemNotNeededEvent. The life cycle manager is told which policies to use through a list of policy definition arguments represented by the LifeCyclePolicyDefinitionArguments class. The policies to be used are declared indirectly by defining the policy name, the use of the policy, and its scope, along with the specific policy configuration. During the manager's initialization the concrete policy instances are created and the implementor of the policies is instantiated. This implementor evaluates the policies at the specified interval, aggregates the results of each policy, handles the phase of the pool's life cycle and performs the actual disposal of the pool and its records.

Records and Fields

The whole purpose of the gRS is the movement of payload. This is done through records and their fields. Every piece of information stored in and retrieved from the record pool is organized as a record. A record is simply a way to group fields. The fields of a record are the actual constructs that contain the payload that needs to be moved from the producer to the consumer.

A record is the grouping unit that defines the items that can be added to a pool. Each record has a definition, RecordDef, that contains the schema of the record. The schema of the record has some system level attributes needed by the framework, and different implementations of a record can add their own additional properties. The two basic attributes of a record are its type and a transport directive. The type of the record is the class name of the class that implements the IRecord interface. This way, when a record is transferred over the wire to a remote location, the framework can unmarshal the record to its originally defined type. The transport directive is a hint to the framework as to how the payload of the record, its fields, should be moved over the wire unless some field has specific requirements. The transport directive a record can define is one of the following:

- Full – This directive specifies that all the record fields must be transferred fully to the remote location, unless a field overrides this in its own definition by specifying that it needs to be transferred partially.

- Partial – This directive specifies that all the record fields must be transferred partially to the remote location, unless a field overrides this in its own definition by specifying that it needs to be transferred fully.

- Inherit – This directive does not specify a concrete directive. Instead, the directive is inherited from the transport directive the record pool defines.

When a record is added to a record pool it cannot be added to any other record pool and its definition can no longer be modified.

A record is simply the grouping placeholder that keeps organized the fields composing the record's payload. The payload of the record is the collection of its fields. Fields can be of various types, depending both on the field's payload type and on the access methods it provides. Each field has a definition, FieldDef, that contains the schema of the field. The field schema contains some system level attributes needed by the framework, but each field implementation can define additional ones. The system level definition attributes are the following:

- FieldType – The class name of the field. This is needed so that when a field is transferred to a remote location the actual instance of the field can be unmarshaled and recreated.

- SupportsChunking – Whether or not chunking of payload should be used when transferring the field to a remote location. Whenever an amount of data is requested by a remote consumer and the field is set to support chunking, a multiple of ChunkSizeInBytes that covers the requested amount of data is sent.

- ChunkSizeInBytes – The number of bytes that constitutes an indivisible unit of transfer when the field supports chunking.

- TransportDirective – The field's desired method of transfer. The available options are Full, Partial and Inherit. If the Full transport directive is defined, whenever the field is transferred to a remote consumer the field's full payload is always transferred immediately. On Partial transfer, either no payload or a portion of it, as defined by the prefetching and chunking properties, is transferred. Additional payload must be explicitly requested. On the Inherit transport directive, the transport directive defined for the containing record is used.

- DoPrefetching – Whether or not prefetching of the field's payload must be applied in case of a partial transport directive. If it is applicable, the PrefetchSizeInBytes and the chunking properties are consulted and the calculated number of bytes is also transferred to the remote consumer site.

- PrefetchSizeInBytes – The number of bytes that should be moved along with the field definition in case the field supports prefetching and the field is being transferred partially.

- MIMEType – This attribute is meant to help the consumer identify the type of payload the field contains.

When a field is added to a record it cannot be added to any other record and once the record is added to a record pool the field's definition cannot be modified.
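The interplay of the Inherit directive and the chunking attributes can be sketched as follows. The class and method names are hypothetical, and the sketch assumes that the record pool always defines a concrete directive and that the requested amount is simply rounded up to whole chunks; the amount actually sent is of course also bounded by the payload that is available.

```java
// Hypothetical sketch; only the directive names come from the text above.
enum TransportDirective { FULL, PARTIAL, INHERIT }

final class TransferPlanSketch {
    private TransferPlanSketch() { }

    // A field inherits from its record, and a record inherits from its pool.
    // Assumption: the pool directive is always concrete (FULL or PARTIAL).
    static TransportDirective resolve(TransportDirective field,
                                      TransportDirective record,
                                      TransportDirective pool) {
        if (field != TransportDirective.INHERIT) {
            return field;
        }
        if (record != TransportDirective.INHERIT) {
            return record;
        }
        return pool;
    }

    // Smallest multiple of chunkSizeInBytes that covers the requested amount.
    static long bytesToSend(long requestedBytes, boolean supportsChunking, int chunkSizeInBytes) {
        long requested = Math.max(0L, requestedBytes);
        if (!supportsChunking || chunkSizeInBytes <= 0) {
            return requested;
        }
        long chunks = (requested + chunkSizeInBytes - 1) / chunkSizeInBytes; // round up
        return chunks * chunkSizeInBytes;
    }
}
```

For example, with a chunk size of 512 bytes a request for 1000 bytes would be answered with two chunks, 1024 bytes, while the same request on a field without chunking would be answered with exactly 1000 bytes.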

In addition to the FieldDef, each field contains a FieldState instance. Through this instance an interested client can register for notifications concerning the field's payload and its availability. The field state instance performs lazy initialization of its events to avoid adding excessive memory overhead to the fields. The events that are exposed through this instance are FieldPayloadAddedEvent and FieldNoMorePayloadEvent, and they are used to handle the partial availability of a field's payload. Whenever a field's payload is partially transferred to the consumer, the consumer needs to explicitly request more payload. The framework will then consult the prefetching attributes and request that some specific amount of data be made available. In case of a locally consumed pool, the writer that is authoring the pool must respond with a NoMorePayloadEvent, as already explained in another section. This indicates to the consumer that all the data that can be consumed from the field is already available to him. Otherwise, in case of a mediated consumption and a framework synchronized consumption, the synchronization protocol will intercept the requesting event, consult the referenced field, retrieve the desired amount of data, and send it to the consumer. Additionally it will emit a FieldPayloadAddedEvent to let the field consumer know that new payload has been made available to him. In case the referenced field responds that no more payload can be sent because the full amount of available data has already been sent, a NoMorePayloadEvent will instead be sent to the field consumer through the respective FieldState.

The process of field payload synchronization sketched above is expected to put stress on a simple consumer that needs to take advantage of the partially transferable field payload but wants a simpler access method. To this end an additional utility is provided to the field consumer. The MediatingInputStream class can be used to consume the payload of the fields that support it. This stream automatically handles the requests for additional payload, handles the responding events, and blocks until more data is available.
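The behaviour of such a mediating stream can be approximated with a blocking input stream. This is only a sketch under assumptions: the class name, the two callback methods standing in for the payload added and no more payload events, and the Runnable used to request more payload are all hypothetical, and the real MediatingInputStream may be organized differently.

```java
import java.io.IOException;
import java.io.InputStream;
import java.util.Arrays;

// Hypothetical stand-in for a mediating stream over one field's payload.
final class MediatingStreamSketch extends InputStream {
    private final Runnable requestMorePayload; // assumed hook that asks for more payload
    private byte[] buffer = new byte[0];
    private int position;
    private boolean noMorePayload;

    MediatingStreamSketch(Runnable requestMorePayload) {
        this.requestMorePayload = requestMorePayload;
    }

    // To be called when a "payload added" notification arrives.
    synchronized void onPayloadAdded(byte[] chunk) {
        int oldLength = buffer.length;
        buffer = Arrays.copyOf(buffer, oldLength + chunk.length);
        System.arraycopy(chunk, 0, buffer, oldLength, chunk.length);
        notifyAll();
    }

    // To be called when a "no more payload" notification arrives.
    synchronized void onNoMorePayload() {
        noMorePayload = true;
        notifyAll();
    }

    @Override
    public synchronized int read() throws IOException {
        while (position == buffer.length && !noMorePayload) {
            requestMorePayload.run();
            // The callback may already have delivered data on this thread.
            if (position == buffer.length && !noMorePayload) {
                try {
                    wait(); // block until one of the notifications arrives
                } catch (InterruptedException e) {
                    Thread.currentThread().interrupt();
                    throw new IOException("interrupted while waiting for payload", e);
                }
            }
        }
        if (position == buffer.length) {
            return -1; // everything consumed and nothing more will come
        }
        return buffer[position++] & 0xFF;
    }
}
```

A consumer would read from this stream as from any other InputStream. Keeping the whole payload in a growing array is a simplification made for brevity and would not suit large payloads.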

Depending on the type of payload a field contains and on preferences about the way the payload is accessed, the following interfaces extending IField have been defined. While the names of the interfaces largely define their logic, nothing actually stops the implementors of an interface from implementing a different logic than the one expected.

- IReferenceField – This interface is meant to be implemented by fields whose payload is simply a URI identifying a resource that can be consumed through some protocol through which it is accessible. The protocol may be able to serve the payload in any location, or there may be a need for the framework to transfer the payload. This logic is incorporated in each field implementing the interface.

- FTPReferenceField – Implementation of the IReferenceField interface. Denotes a field whose payload is an ftp accessible resource. The field contains as payload simply the URI to the ftp location and path to the resource. Whenever a consumer needs to access the resource the field will open an input stream that can read from the remote location. This means that the field can serve the resource to any location without the framework having to transfer any data other than the URI itself. Mediation for this field is provided in the sense that the mediating input stream returned is simply the input stream that would read the remote resource anyway. Requests for additional payload would not provide any additional data other than the one already available through the protocol. So requests for more payload are immediately answered with a no more payload event.

- HTTPReferenceField – Implementation of the IReferenceField interface. Denotes a field whose payload is an http accessible resource. The field contains as payload simply the URI to the http location and path to the resource. Whenever a consumer needs to access the resource the field will open an input stream that can read from the remote location. This means that the field can serve the resource to any location without the framework having to transfer any data other than the URI itself. Mediation for this field is provided in the sense that the mediating input stream returned is simply the input stream that would read the remote resource anyway. Requests for additional payload would not provide any additional data other than the one already available through the protocol. So requests for more payload are immediately answered with a no more payload event.

- GlobalFSReferenceField – Implementation of the IReferenceField interface. Denotes a field whose payload is a globally accessible filesystem resource. The field contains as payload simply the URI to the filesystem location and path to the resource. Whenever a consumer needs to access the resource the field will open an input stream that can read from the filesystem location. This means that the field can serve the resource to any location without the framework having to transfer any data other than the URI itself. Mediation for this field is provided in the sense that the mediating input stream returned is simply the input stream that would read the filesystem resource anyway. Requests for additional payload would not provide any additional data other than the one already available through the protocol. So requests for more payload are immediately answered with a no more payload event.

- LocalFSReferenceField – Implementation of the IReferenceField interface. Denotes a field whose payload is a resource accessible only through the producer's filesystem. The field contains as payload simply the URI to the filesystem location and path to the resource. This means that in case the producer resides in the same host as the consumer and their mediation is only performed through a LocalProxy, the URI is indeed enough for the consumer to access the resource. In case the communication is mediated, the local filesystem resource needs to be transferred as well. The producer side field keeps track of the amount of data it has already transferred, and the consumer side field creates a temporary file, deleted on field disposal, to which it appends the data of the original field as they are made available. The consumer accesses the locally available copy and, in case of partial transfer, keeps requesting the amount of data that it needs to consume. Mediation for the field is available by wrapping an input stream to the local partial file, hiding the additional payload requests, and blocking until more data is made available whenever a client request would meet the end of the temporary file while more data can be retrieved.

- IStreamField – This interface is meant to be implemented by fields whose payload is accessible through a stream. On the producer side, the client will provide the field with an input stream that reads over the available payload. No data will be read unless it needs to be moved to the consumer. If the consumer is collocated with the producer, the consumer will access the payload of the field through the same InputStream the producer provided. Otherwise the mediating proxy will read the needed payload from the producer input stream, transfer it, and make it available to the consumer through a respective input stream.

- IValueField – This interface is meant to be implemented by fields whose payload is directly available fully to the gRS at its instantiation and it will become accessible to the consumer directly from the field itself.

- IObjectField – Extension of the IValueField interface that is meant to be implemented by fields that want to offer typed Java objects as their payload. The object must be able to be marshaled and unmarshaled to and from a byte stream. Implementations of the field must specify whether or not partial transfer is supported for this field.

- StringField – Implementation of the IObjectField interface. Denotes a field whose payload is simply a string that can be fully or partially transferred to a remote consumer. The specific implementation of the field whenever partially transferred, will create a new instance of its payload on the arrival of new data. Furthermore since the full payload is kept in memory this field is not meant to be used for large data payload.

- IThirdPartyTransferField – This interface is meant to be implemented by fields whose payload will be transferred by a third party protocol / application. This field has a lot in common with the IReferenceField implementations in the sense that the framework needs not transfer anything but an identifying object to enable retrieval of an externally available payload. The difference is that while the IReferenceField expects data to be already available in the resource serving location, implementors of this interface can perform this action upon request and their own field logic. An example of such a field could be a field that uses a database accessible by both producer and consumer. The field could store its payload to the database when requested to serialize itself for transport and transfer a row identifier. At the consumer side it could retrieve the data using this identifier.

Remote and local Pool Synchronization

Proxies and Registry

The two main actors in a gRS scenario are the producer and the consumer. The producer authors a record pool that the consumer needs to access. Two main cases can be distinguished in this scenario. In the first, the consumer is collocated with the producer in the same host and the same VM, in which case they share a common address space. In the second, the two actors do not share an address space: the consumer and producer are running in different VMs, either in the same host or in completely different hosts. The producer and consumer should not be forced to make this distinction in their code. The gRS frees them from handling the two cases differently and allows them to have the same behavior in their code regardless of the location of the other party. This is achieved through the proxy concept.

A proxy is in fact a mediator between the two actors. Different types of proxies can handle different types of communication, can be initialized with different synchronization protocols, and can use different underlying transport technologies. All of this is known to the client only at a declarative level, in case he wants more control over the mechanics of the framework. Different implementations of the proxy semantics can be provided by implementing the IProxy interface.

Every proxy implementation still needs to provide a marshalable identification mechanism which can be sent over to the consumer side, which will in turn use it to initialize a new proxy instance that will mediate the pool consumption on its side. This object is an implementation of the IProxyLocator interface. Implementations of this interface are capable of uniquely identifying a record pool. They must also hold enough information to contact the producer side proxy that mediates the producing side pool. This implies information on the protocol used and any protocol specific information needed by the consumer side proxy.

A proxy's responsibility is to initialize all needed protocol mechanics and to produce the locator that can be used by the producer proxy. The actual transport, if needed, is not performed by the proxy itself. Although a proxy may have some knowledge of the underlying technologies that will be used, it does not delve into the protocol details. This is the job of yet another construct, the transporter. Transporters, which implement the ITransporter interface, hide the mechanics of the underlying communication technology and provide a common way for the synchronization protocol to send and receive the needed data. The transporters are also responsible for any communication level security as well as for the creation and destruction of the communication link. Depending on the underlying technology, a transporter's work may be extremely simple, or it may need to implement its own constructs to provide session like communication over a stateless protocol, to multiplex communication for multiple record pools over a single communication link, or even to chunk protocol packets to comply with communication protocol restrictions. All this is hidden from the layers above it.

Remote synchronization security comes in two levels. The first is the protocol packet authentication headers, which will be discussed in later sections. The second level is the communication channel encryption. This level of security is handled by the transporter. Should the client wish to use communication level security, he must also provide a set of parameters. These include:

- A pair of Private and Public Keys used by the local party component for signatures

- The Public key of the remote party used for signatures

- A supported algorithm to be used for signatures

- A pair of Private and Public Keys used by the local party component for encryption

- The Public key of the remote party used for encryption

- A supported algorithm to be used for symmetric encryption

With this information provided, the transporter on the side that initializes the communication creates a security header that is sent to the other end of the communication channel. This header contains the symmetric secret key to be used for the communication. The key is encrypted using public key encryption and signed by the sender. The other end decrypts and verifies the key sent. From then on, all data exchanged between the two parties is encrypted with the shared key. The availability of remote keys is not handled by the framework in any way. It is assumed that there is some infrastructure capable of securely storing and making available the keys of the communicating parties.
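The key exchange described above can be sketched with the standard Java cryptography APIs. The class name, the choice of RSA with OAEP padding, the SHA256withRSA signature and the AES session key are assumptions made for the illustration, as is the decision to sign the encrypted key rather than the plain one; the framework leaves the algorithms to the client supplied parameters.

```java
import java.security.GeneralSecurityException;
import java.security.PrivateKey;
import java.security.PublicKey;
import java.security.Signature;
import java.security.SignatureException;
import javax.crypto.Cipher;
import javax.crypto.SecretKey;
import javax.crypto.spec.SecretKeySpec;

// Hypothetical security header: the encrypted session key plus the sender's signature.
final class SecurityHeaderSketch {
    private static final String WRAP = "RSA/ECB/OAEPWithSHA-256AndMGF1Padding"; // assumed
    private static final String SIGN = "SHA256withRSA";                          // assumed

    final byte[] encryptedKey;
    final byte[] signature;

    private SecurityHeaderSketch(byte[] encryptedKey, byte[] signature) {
        this.encryptedKey = encryptedKey;
        this.signature = signature;
    }

    // Initiating side: encrypt the session key for the remote party, then sign it.
    static SecurityHeaderSketch create(SecretKey sessionKey,
                                       PublicKey remoteEncryptionKey,
                                       PrivateKey localSigningKey) throws GeneralSecurityException {
        Cipher rsa = Cipher.getInstance(WRAP);
        rsa.init(Cipher.ENCRYPT_MODE, remoteEncryptionKey);
        byte[] encrypted = rsa.doFinal(sessionKey.getEncoded());

        Signature signer = Signature.getInstance(SIGN);
        signer.initSign(localSigningKey);
        signer.update(encrypted);
        return new SecurityHeaderSketch(encrypted, signer.sign());
    }

    // Receiving side: verify the sender's signature, then decrypt the session key.
    SecretKey open(PrivateKey localDecryptionKey,
                   PublicKey remoteVerificationKey) throws GeneralSecurityException {
        Signature verifier = Signature.getInstance(SIGN);
        verifier.initVerify(remoteVerificationKey);
        verifier.update(encryptedKey);
        if (!verifier.verify(signature)) {
            throw new SignatureException("security header signature does not verify");
        }
        Cipher rsa = Cipher.getInstance(WRAP);
        rsa.init(Cipher.DECRYPT_MODE, localDecryptionKey);
        return new SecretKeySpec(rsa.doFinal(encryptedKey), "AES");
    }
}
```

After open succeeds, both sides hold the same symmetric key and can use it to encrypt the rest of the stream. Distribution of the public keys is outside the sketch, just as it is outside the framework.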

In both of the above mentioned cases, collocated and remotely located producers and consumers, a record pool needs to be identified within the address space of the VM in which it was created. Since the identification token needs to be serializable and movable to a remote location, a Java reference is not adequate. A different construct is needed that uniquely identifies the record pool and through which the record pool itself can be retrieved. In the gRS framework this construct is the Registry class. The Registry is a static class, unique within the context of a VM, in which record pools can be registered, assigned a unique id, and discovered through it. Whenever a producer wants to make the record pool it is authoring available for consumption through a proxy, it registers the record pool and the proxy through which the record pool will be available with the Registry class. The registration procedure produces a registry key consisting of a UUID. The record pool and associated proxy are stored in the registry for future reference through the registry key, and the produced registry key is returned to the caller of the registration procedure, which uses it to produce the locator that will be able to identify the record pool. The registry also needs to be registered for some of the record pool events so that it can perform its own cleanup once the record pool is disposed.
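A VM wide registry of this kind can be sketched in a few lines. The names RegistrySketch, Entry, register, lookup and onPoolDisposed are hypothetical, and pools and proxies are typed as plain Object because their real types are not shown here.

```java
import java.util.Map;
import java.util.UUID;
import java.util.concurrent.ConcurrentHashMap;

// Hypothetical static registry, unique per VM because all of its state is static.
final class RegistrySketch {
    // What is stored under a registry key: the pool and the proxy serving it.
    static final class Entry {
        final Object pool;
        final Object proxy;

        Entry(Object pool, Object proxy) {
            this.pool = pool;
            this.proxy = proxy;
        }
    }

    private static final Map<UUID, Entry> ENTRIES = new ConcurrentHashMap<>();

    private RegistrySketch() { }

    // Registers a pool with its proxy and returns the generated registry key.
    static UUID register(Object pool, Object proxy) {
        UUID key = UUID.randomUUID();
        ENTRIES.put(key, new Entry(pool, proxy));
        return key;
    }

    // Returns the stored entry, or null if the key is unknown or already cleaned up.
    static Entry lookup(UUID key) {
        return ENTRIES.get(key);
    }

    // Cleanup hook, assumed to be triggered by the pool's disposal event.
    static void onPoolDisposed(UUID key) {
        ENTRIES.remove(key);
    }
}
```

Unlike a Java reference, the UUID key can be turned into a string or bytes, shipped inside a locator, and used later to find the very same pool instance within the producer's VM.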

Synchronization protocol

Whenever a producer and a consumer share a record pool there needs to be some synchronization between them both to ensure access integrity but also to enable remote consumption. When the producer and consumer are collocated, the synchronization is done without mediation directly through the events exposed by the shared record pool. When the communication is mediated there is a need for a synchronization protocol that will handle the forwarding of locally produced events and their interpretation as well as the marshaling and unmarshaling of events, records, fields and their payload.

This is the work of the classes implementing the IProtocol interface. Specifically, there are two interfaces extending IProtocol that need to be implemented: IConsumerProtocol and IProducerProtocol, which must be implemented in pairs. Currently there is only a Client Pull protocol implementation, which is detailed in a later section.

Protocol packets

The communication between the producer and the consumer protocol is done by means of packets. The producer and consumer protocols will usually operate in conceptual loops. At every loop one of the two actors will need to send some information (requests, payload, events, etc.) to the other actor. At every protocol loop all the information to be sent is aggregated into a single packet. This packet is then sent to the other side, which processes it and possibly creates a new packet in response and sends it to the party that started the process.

A packet can contain the following types of information:

- The UUID of the record pool assigned to it by the registry. This is used to identify the referenced record pool. The proxy and transporter types implied may not require it if they already base their communication on a session enabled protocol, but the information is provided.

- A flag indicating whether the sending side will close its communication marking the disposal of all kept information.

- Authentication headers. This is the second level of communication security, the first level being the encryption of the whole communication stream. Even if such a drastic measure is not needed, one can request only the authentication of the packets sent. This would leave the payload sent in the clear, but the sender and receiver would be able to identify one another.

- The current size of the pool that the packet sending side has available. This can be used to offer to the clients a view of the remote pool status. This means that the view of the remote pool status will be updated at the same rate that the packets are exchanged so it will not always mirror the most up to date information.

- A pool definition sub packet that is used during the initial communication initialization. Through this packet the pool the producer has created is defined so that the consumer can create a new instance of the same pool at its own location. The packet contains the pool configuration that instantiated the pool in the first place as well as the life cycle policies definitions.

- An events sub packet that contains events produced by the sending side that need to be sent to the packet receiving side.

- A record payload sub packet that contains definitions of records that are sent from the producer to the consumer. Along with each record definition, its field definitions are also sent. The transport directive of each field is consulted to check how to handle the payload contained. In case the transport directive is Full, or it is defined that some prefetching should be employed, additional field payload data is transferred through this sub packet. Field payload is written directly from the field to the transporter to avoid unnecessary resource consumption.

- A field payload sub packet that contains additional payload requested for a single field. Whenever a field is consumed with on demand payload transfer, additional field payload is requested through the events sub packet and transferred through this sub packet. Fields write directly to the transporter to avoid unnecessary resource consumption.

Whenever a record or field is unmarshaled, the attributes of its definition are used to determine the exact type to instantiate. The field or record is instantiated through reflection and is passed the provided initialization definition and supplied payload. When a field part is unmarshaled, the record that contains the field is retrieved from the local pool, the respective field is found, and the provided payload is supplied to the field to make it available to its consumer.
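Instantiating a field from the class name carried in its definition can be sketched with standard Java reflection. The interface, the example field class and the single byte array constructor are assumptions made for the example; the real fields are initialized from their definition objects.

```java
import java.lang.reflect.Constructor;

// Hypothetical minimal field contract for the example.
interface FieldSketch {
    byte[] payload();
}

// Hypothetical concrete field with the constructor shape the unmarshaler expects.
final class StringFieldSketch implements FieldSketch {
    private final byte[] payload;

    public StringFieldSketch(byte[] payload) {
        this.payload = payload;
    }

    @Override
    public byte[] payload() {
        return payload;
    }
}

final class UnmarshalSketch {
    private UnmarshalSketch() { }

    // Recreates a field from the class name found in its definition.
    static FieldSketch instantiate(String fieldTypeName, byte[] payload)
            throws ReflectiveOperationException {
        // Reject class names that do not denote a field implementation.
        Class<? extends FieldSketch> type =
            Class.forName(fieldTypeName).asSubclass(FieldSketch.class);
        Constructor<? extends FieldSketch> constructor =
            type.getDeclaredConstructor(byte[].class);
        return constructor.newInstance((Object) payload);
    }
}
```

Since the class name arrives over the wire, the asSubclass check is a cheap safeguard: a name that resolves to an unrelated class fails with a ClassCastException before any constructor is invoked.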

Client pull

Different communication protocols can be implemented to handle the synchronization between the producer and the consumer. Protocols should be implemented in pairs, one for the consumer and one for the producer side. Currently one protocol is available. The implemented protocol will not transfer any record or field payload unless specifically requested by the consumer. Events and control data are periodically synchronized, but no payload is transferred unnecessarily.

Consumer protocol

First the protocol needs to create a local copy of the record pool with the same attributes and characteristics as the one the producer is serving. To do so it communicates with the producer side protocol to receive the attributes and description of the served record pool.

A communication initialization packet is created, and as a response the consumer receives a packet that contains the served pool configuration and all the information needed to create an exact copy of the remote record pool.

After this the thread enters the protocol loop, which ends once the local or remote pool is declared as disposed.

- The first step of the protocol loop is to send the local events the consumer has to send to the remote end and to receive the producer's responses. Events that have been received from the producer are not sent back, and events that are for local use only are blocked to avoid unnecessary traffic. Other events that support it are grouped together to send as little data as possible and to minimize object marshaling and unmarshaling. The packet is sent and the protocol waits to receive a packet as response. If needed the packet is authenticated, and while unmarshaling the packet any provided field payload is also unmarshaled and stored as the specific field defines.

- The second step is the processing of the received packet. The received events are marked so that they are not sent back, new records provided are added to the local pool, and the received events are sent to the local record pool. If requested field payload is supplied, the field is also retrieved and an event is emitted through that field too.

- Finally, depending on whether there are still pending synchronization issues for the consumer or not, an interval is defined to avoid flooding the producer with requests, and the process starts again from step 1.

Producer protocol

Once initialized the producer protocol waits for the communication to be initialized by the consumer. Once the communication initialization packet is received the protocol will retrieve the served record pool configuration and initialization parameters, include it in a response packet and send it to the consumer. Every time a packet is received, if needed an authentication check will be firstly made.

After the communication is fully initialized, the thread enters the main protocol loop, which ends only when the producer or the consumer disposes of the managed record pool.

The first and only step of the protocol loop is to wait for the consumer protocol to send a packet containing its produced events and requests. Once the packet is received, the consumer events are processed for their requests. They are marked so that they will not be sent back, and depending on each event type the respective action is taken. Records are requested from the producer, existing records are packed into a response packet, additional field payload is packed, and the locally generated events that can be sent are packed and sent to the consumer protocol. The protocol then starts over and waits for the next request packet.
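A much reduced sketch of that single producer step is given below. RequestSketch, ResponseSketch and ProducerStepSketch are hypothetical names, and records are modelled as plain strings. Event marking, field payload and authentication are left out, so this only shows the request and response shape of the loop.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Deque;
import java.util.List;

// Hypothetical packet shapes; real packets also carry events and field payload.
final class RequestSketch {
    final int recordsRequested;
    final boolean disposePool;

    RequestSketch(int recordsRequested, boolean disposePool) {
        this.recordsRequested = recordsRequested;
        this.disposePool = disposePool;
    }
}

final class ResponseSketch {
    final List<String> records;

    ResponseSketch(List<String> records) {
        this.records = records;
    }
}

final class ProducerStepSketch {
    private final Deque<String> produced = new ArrayDeque<>();
    private boolean disposed;

    void addProduced(String record) {
        produced.add(record);
    }

    boolean isDisposed() {
        return disposed;
    }

    // The single step: act on one consumer request and build the response packet.
    ResponseSketch handle(RequestSketch request) {
        List<String> packed = new ArrayList<>();
        if (request.disposePool) {
            disposed = true; // the protocol loop ends after this request
            return new ResponseSketch(packed);
        }
        // Pack as many existing records as were requested and are available.
        while (packed.size() < request.recordsRequested && !produced.isEmpty()) {
            packed.add(produced.poll());
        }
        return new ResponseSketch(packed);
    }
}
```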

Local Proxy

A common case in producer – consumer scenarios is the one in which the producer and consumer are collocated, running within the boundaries of the same VM, possibly in different threads if their execution is concurrent or even serially one after the other. In such a situation it is obvious that for performance and for resource utilization reasons one would prefer to be able to access directly through the shared memory address space the products of the producer within the consumer.

The record pool design and operation facilitate its usage as a shared buffer of records. The synchronization of producing and consuming parties through events makes the actual location of their counterpart transparent to both actors. In this environment, the only thing missing to enable optimized local consumption of a record pool is a way to pass to the consumer a reference to the record pool authored by the producer. This is where the gRS registration and mediation classes come in: they do so in a manner that hides from the two actors the actual location of each other and does not force them to apply any kind of logic to distinguish between the two cases.

Once the producer is ready to provide its output to any interested consumer, it registers its record pool with the Registry class as described in previous sections. The registration step requires an instance of an IProxy implementation. If the consumer is collocated with the producer (this knowledge is assumed to exist somewhere in the system, quite possibly in the component orchestrating the execution), an instance of the LocalProxy class is provided. During registration the authored record pool is assigned to the LocalProxy instance. From then on the proxy can be queried to produce an IProxyLocator instance, which for the LocalProxy class is an instance of the LocalProxyLocator class. This locator can be serialized and deserialized to produce a new LocalProxyLocator instance that still identifies the referenced record pool through the RegistryKey that was assigned to the record pool and set on the LocalProxy. The locator can in turn be used to create a new LocalProxy instance, which is used to instantiate a new Reader class that can consume the produced record pool. The record pool used is a reference to the one produced, since the LocalProxy can look up the Registry to retrieve the record pool associated with the registry key stored in the LocalProxyLocator.
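The register, serialize, deserialize and look-up round trip can be illustrated with a small Java sketch. RegistrySketch and LocalLocatorSketch are hypothetical stand-ins for the Registry and LocalProxyLocator classes, and the "local:" string form is an assumption made only for this example.

```java
import java.util.Map;
import java.util.UUID;
import java.util.concurrent.ConcurrentHashMap;

// Hypothetical in-VM registry: maps a registry key to the pool object itself.
final class RegistrySketch {
    private static final Map<String, Object> POOLS = new ConcurrentHashMap<>();

    static String register(Object pool) {
        String key = UUID.randomUUID().toString();
        POOLS.put(key, pool);
        return key;
    }

    static Object lookup(String key) {
        return POOLS.get(key);
    }
}

// Hypothetical locator: carries only the registry key, never the pool.
final class LocalLocatorSketch {
    private static final String PREFIX = "local:"; // assumed wire form

    final String registryKey;

    LocalLocatorSketch(String registryKey) {
        this.registryKey = registryKey;
    }

    String serialize() {
        return PREFIX + registryKey;
    }

    static LocalLocatorSketch deserialize(String text) {
        if (!text.startsWith(PREFIX)) {
            throw new IllegalArgumentException("not a local locator: " + text);
        }
        return new LocalLocatorSketch(text.substring(PREFIX.length()));
    }
}
```

Because the locator holds only the key, the consumer resolves it against the same in-VM registry and ends up with the producer's own pool object rather than a copy.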

The current version of the LocalProxy does not utilize any security specifications. These are used only to authenticate and secure remote communication. It is possible, however, that later versions will use them to authenticate components running within the same VM in the same uniform manner as remote ones.

TCPAdHoc Proxy

The general case of producer – consumer scenarios is to be unaware of the location of the two actors, or to know specifically that they are located on different hosts or, in general, in different VMs. This means that no in-memory reference can be shared. Producers and consumers must communicate externally to be able to synchronize their operation and exchange data. The protocol of synchronization and data exchange has been detailed in previous sections.

As has already been described in the LocalProxy synchronization scenario, the readers and writers of a record pool are already able to synchronize based on events emitted through the record pool. In the protocol section we illustrated how the events and data that are passed through a record pool can be transferred and mirrored to a remote copy of the record pool. The only thing left to enable remote production and consumption is a technology to transfer the protocol packets. Multiple protocols can be used, and this section illustrates the case of a TCP connection. Since the producer and consumer need not be aware of any of the underlying technology details, the proxy and registration classes of the framework are the ones managing all technology-specific details.

As in the case of the LocalProxy, the producer at some point needs to share its output with a consumer. It again goes through the registration procedure, but this time a TCPAdHocProxy instance is passed to the registration method instead of a LocalProxy instance.

For every record pool it is called to mediate, the ad hoc TCP proxy opens a new port on the producer side and waits for the consumer to connect to it. The consumer-side proxy receives through the locator the port that the producer proxy is listening on and connects there. After that, the security specifications are consulted to decide whether to create an encrypted communication stream, as already described in a different section. Then the IProtocol implementations chosen in the proxy configuration handle the synchronization. The over-the-wire communication is done through an implementation of the ITransporter interface that reads from and writes to the TCP input and output streams, using a Cipher if needed.

Once the registration process is completed on the producer side, the producer's proxy, transport and protocol are all ready to accept incoming calls. The proxy is in a position to create a valid IProxyLocator instance, which for the TCPAdHocProxy is a TCPProxyLocator. The TCPProxyLocator contains the referenced pool RegistryKey, the hostname the producer is running on, the port the ITransporter is listening on, the IProtocol type used, whether the packet headers should be authenticated and whether the communication streams should be encrypted. The locator can be serialized and deserialized into a new TCPProxyLocator, which can be used to initialize a Reader that accesses the remote record pool. The Reader does so through a newly initialized ITransporter created by the consumer's TCPAdHocProxy, which instantiates a consumer-side IProtocol of the same type as the one used by the producer.
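To make the contents of such a locator concrete, the following Java sketch serializes and restores the six pieces of information listed above. TcpLocatorSketch is a hypothetical class, and the binary layout produced with DataOutputStream is an assumption of this example, not the format gRS uses.

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.DataInputStream;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.UncheckedIOException;

// Hypothetical locator holding the information a remote consumer needs.
final class TcpLocatorSketch {
    final String registryKey;
    final String hostname;
    final int port;
    final String protocolType;
    final boolean authenticate;
    final boolean encrypt;

    TcpLocatorSketch(String registryKey, String hostname, int port,
                     String protocolType, boolean authenticate, boolean encrypt) {
        this.registryKey = registryKey;
        this.hostname = hostname;
        this.port = port;
        this.protocolType = protocolType;
        this.authenticate = authenticate;
        this.encrypt = encrypt;
    }

    // Field order here must match the read order in deserialize.
    byte[] serialize() {
        ByteArrayOutputStream bytes = new ByteArrayOutputStream();
        try (DataOutputStream out = new DataOutputStream(bytes)) {
            out.writeUTF(registryKey);
            out.writeUTF(hostname);
            out.writeInt(port);
            out.writeUTF(protocolType);
            out.writeBoolean(authenticate);
            out.writeBoolean(encrypt);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
        return bytes.toByteArray();
    }

    static TcpLocatorSketch deserialize(byte[] data) {
        try (DataInputStream in = new DataInputStream(new ByteArrayInputStream(data))) {
            // Java evaluates constructor arguments left to right, so the reads
            // happen in the same order as the writes above.
            return new TcpLocatorSketch(in.readUTF(), in.readUTF(), in.readInt(),
                    in.readUTF(), in.readBoolean(), in.readBoolean());
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }
}
```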

TCPServer Proxy

The TCPAdHocProxy described above needs to open a new port for every record pool served by the producer. Although this makes the serving of individual pools autonomous and more robust, it can become difficult to maintain in an infrastructure with security restrictions and firewalled communication. In response, the TCPServerProxy can be used to bound the number of communication ports.

The first time a producer creates an instance of the TCPServerProxy within the boundaries of a VM, a TCPTransportServer instance is created following the singleton pattern. From then on, any instance of the TCPServerProxy is directed to this server to manage its communication needs. The port the server listens on is configured through the already available configuration class of the proxy instance, and once the server is initialized all traffic passes through this port.

The server keeps an internal mapping between served record pools and open sockets. Upon request it can provide interested transporters with the socket that is associated with the record pool they are mediating for. Upon disposal of the transporters, the internal state of the server is also purged as far as pool specific information is concerned.

To create such a mapping between a record pool and a specific socket, the server relies on the UUID of the record pool that the consumer wants to consume. The consumer sends this UUID, which it obtained through the locator instance it was initialized with. This information is always sent as clear text regardless of the specified security specifications, because those are always scoped per session, while the server needs to recognize the pool UUID regardless of the session that will be created between the two protocol implementations and their transporters.
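The clear-text identification and the resulting pool-to-connection mapping might look like the sketch below. PoolHandshakeSketch and ConnectionTableSketch are hypothetical names, sending the UUID as two 64-bit values is an assumption about the wire format, and the connection type is left generic so the example runs without opening sockets.

```java
import java.io.DataInputStream;
import java.io.DataOutputStream;
import java.io.IOException;
import java.io.InputStream;
import java.io.OutputStream;
import java.io.UncheckedIOException;
import java.util.Map;
import java.util.UUID;
import java.util.concurrent.ConcurrentHashMap;

final class PoolHandshakeSketch {
    // Consumer side: announce the pool UUID before any per-session security applies.
    static void writePoolId(OutputStream out, UUID poolId) {
        try {
            DataOutputStream data = new DataOutputStream(out);
            data.writeLong(poolId.getMostSignificantBits());
            data.writeLong(poolId.getLeastSignificantBits());
            data.flush();
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }

    // Server side: blocks until all 16 bytes of the UUID have arrived.
    static UUID readPoolId(InputStream in) {
        try {
            DataInputStream data = new DataInputStream(in);
            return new UUID(data.readLong(), data.readLong());
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }
}

// Hypothetical server-side table from pool UUID to the accepted connection.
final class ConnectionTableSketch<C> {
    private final Map<UUID, C> byPool = new ConcurrentHashMap<>();

    void bind(UUID poolId, C connection) {
        byPool.put(poolId, connection);
    }

    C connectionFor(UUID poolId) {
        return byPool.get(poolId);
    }

    // Called when the transporter for this pool is disposed.
    void purge(UUID poolId) {
        byPool.remove(poolId);
    }
}
```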

Furthermore, a vulnerability of this implementation lies in the way the server expects to retrieve the referenced pool UUID in order to map the opened socket. After opening a socket, the server blocks waiting for the consumer to send its pool UUID. Until this information is provided, no other consumer is accepted by the server. This leaves the server open to a denial of service attack; the choice was made not to handle the wait in a separately spawned thread, in order to save resources.

Third-party point of view

Producing a record pool

In order for a producer to create and author a record pool, a record pool writer must be created. All writer classes of a record pool are implementations of the IWriter interface. A writer is initialized with a set of configuration options. These options define the type of the record pool that will be created, the buffer policy that the producer will use, the intermediate record buffer that will hold records before flushing them to the record pool and, in general, the behavior of the writer.

The writer monitors the record pool it produces for emitted events. Depending on the type of event caught, the writer may need to perform some operation, bubble the event up to its client, or simply ignore it. The specific handling needs of each event are detailed in previous sections. When the event caught is something that its client might be interested in, the writer must in turn be able to emit that event to its client. This is done through the WriterState class, which contains the events that a writer's client can register for and receive notifications about. These events include the following:

- ConsumptionCompletedEvent – The consumer of the record pool has declared that he will no longer be accessing records and fields from the record pool.

- ItemRequestedEvent – Either the consumer or the producer's buffer policy requested a number of additional records.

- MessageToWriterEvent – A message with the producer as its recipient was emitted by either the consumer or some component of the platform.

- DisposePoolEvent – The record pool is no longer needed by the consumer. Since the gRS supports point to point communication, this means that the producer's state must also be disposed.

Depending on the type of writer initialized, the client may have to handle and respond to these events itself, or it can rely on the writer to simplify its usage by offering blocking mechanisms that call upon the client to act on a specific request without the client having to take any notice of the events. The BlockingRecordStreamWriter class is such an example, while the RecordStreamWriter class exposes all the events a client needs in order to act only upon notification, in an asynchronous fashion.
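The asynchronous style can be pictured with a small listener registry. The sketch below is only an illustration of the idea: WriterEventSketch and WriterStateSketch are hypothetical names, and the register and emit methods are not taken from the WriterState class.

```java
import java.util.ArrayList;
import java.util.EnumMap;
import java.util.List;
import java.util.Map;
import java.util.function.Consumer;

// One constant per writer-side event listed above (names are illustrative).
enum WriterEventSketch {
    CONSUMPTION_COMPLETED, ITEM_REQUESTED, MESSAGE_TO_WRITER, DISPOSE_POOL
}

// Hypothetical stand-in for the state object a writer's client registers with.
final class WriterStateSketch {
    private final Map<WriterEventSketch, List<Consumer<String>>> listeners =
            new EnumMap<>(WriterEventSketch.class);

    // The client subscribes only to the event types it cares about.
    void register(WriterEventSketch type, Consumer<String> listener) {
        listeners.computeIfAbsent(type, t -> new ArrayList<>()).add(listener);
    }

    // The writer bubbles an event up to every listener registered for its type.
    void emit(WriterEventSketch type, String detail) {
        List<Consumer<String>> registered = listeners.get(type);
        if (registered == null) {
            return; // nobody is interested, the event is ignored
        }
        for (Consumer<String> listener : registered) {
            listener.accept(detail);
        }
    }
}
```

A client of an asynchronous writer would register for ITEM_REQUESTED and DISPOSE_POOL and produce or clean up inside the callbacks, whereas a blocking writer would hide this registration behind its own calls.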

Consuming a record pool

In order for a consumer to access a record pool a reader class must be initialized. All record pool readers are implementors of the IReader interface. Reader classes are initialized with a set of configuration options that do not affect the record pool that the readers are accessing but rather the behavior of the reader while accessing the record pool.

A reader is initialized by providing an IProxy implementation instantiated through an IProxyLocator capable of identifying a record pool produced by an IWriter instance. Through this proxy the reader gets a reference to the record pool it is going to consume, regardless of whether this is the actual reference to the producer's record pool or a synchronizable copy.

The consumption of the records contained in the record pool and of their fields may vary depending on the reader's desired functionality. Random access over the pool's records may be provided, as the RandomRecordReader class does, or stream-like access, as the RecordStreamReader class does. The reader subscribes to events produced by the record pool it accesses and, depending on their type, may have to perform some operation, bubble the event up to its client, or simply ignore it. The specific handling needs of each event are detailed in previous sections. When the event caught is something that its client might be interested in, the reader must in turn be able to emit that event to its client. This is done through the ReaderState class, which contains the events that a reader's client can register for and receive notifications about. These events include the following:

- ProductionCompletedEvent – The producer of the record pool has declared that he will no longer be producing any more records. This does not mean that the consumption has to stop. The consumer can still access the existing records and their fields.

- ItemAddedEvent – The producer in response to a direct consuming client's request or to a buffering policy request has added some records to the shared record pool.

- MessageToReaderEvent – A message with the consumer as its recipient was emitted by either the producer or some component of the platform.

- DisposePoolEvent – The record pool is no longer needed. Since the gRS supports point to point communication, this means that the producer and consumer state must be disposed.

Depending on the type of reader initialized, the client may have to handle and respond to these events itself, or it can rely on the reader to simplify its usage by offering blocking mechanisms that call upon the client to act on a specific request without the client having to take any notice of the events. The BlockingRecordStreamReader class is such an example, while the RecordStreamReader and RandomAccessReader classes expose all the events a client needs in order to act only upon notification, in an asynchronous fashion.
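How a blocking reader can hide the events from its client is sketched below in Java. BlockingReaderSketch and its methods are hypothetical and records are modelled as strings. The two callbacks stand for the reader's own handling of ItemAddedEvent and ProductionCompletedEvent, and the timeout-based wait is an assumption of this example.

```java
import java.util.concurrent.BlockingQueue;
import java.util.concurrent.LinkedBlockingQueue;
import java.util.concurrent.TimeUnit;

// Hypothetical blocking reader: turns pool events into a simple next() call.
final class BlockingReaderSketch {
    private final BlockingQueue<String> buffer = new LinkedBlockingQueue<>();
    private volatile boolean productionCompleted;

    // Invoked by the reader when the pool reports newly added records.
    void onItemAdded(String record) {
        buffer.add(record);
    }

    // Invoked by the reader when the producer declares it will produce no more.
    void onProductionCompleted() {
        productionCompleted = true;
    }

    // True once production has completed and every buffered record was consumed.
    boolean isFinished() {
        return productionCompleted && buffer.isEmpty();
    }

    // Returns the next record, or null if production has completed with nothing
    // left, or if no record arrived within the timeout.
    String next(long timeout, TimeUnit unit) {
        String ready = buffer.poll();
        if (ready != null || productionCompleted) {
            return ready;
        }
        try {
            return buffer.poll(timeout, unit);
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt(); // preserve the interrupt status
            return null;
        }
    }
}
```

Records that were already added remain readable after production completes, in line with the description of ProductionCompletedEvent above. A completion signal that arrives while next is already waiting is only noticed after the timeout, which is acceptable for a sketch but would need a wake-up in a real reader.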

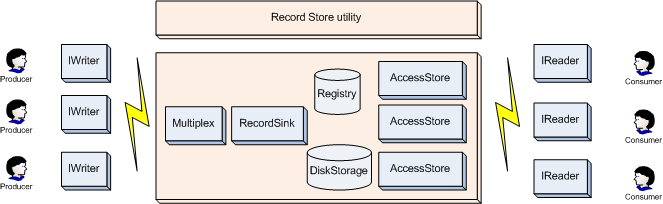

Record Store Utility