Index Management Framework

Contents

- 1 Contextual Query Language Compliance

- 2 New Full Text Index

- 2.1 Implementation Overview

- 2.2 Usage Example

- 2.3 Usage Example

- 3 New Forward Index

- 4 Storage Handling layer

- 5 Index Common library

Contextual Query Language Compliance

The gCube Index Framework consists of the Full Text Index, the Geospatial Index and the Forward Index. All of them can answer CQL queries. The CQL relations that each of them supports depend on the underlying technologies. The mechanisms for answering CQL queries, using the internal design and technologies, are described later for each case. The supported relations are:

- New Full Text Index : =, adj, fuzzy, proximity, within

- Full Text Index : =, adj, fuzzy, proximity, within

- Geo-Spatial Index : geosearch

- New Forward Index : ==, within

- Forward Index : ==, within

New Full Text Index

The Full Text Index is responsible for providing quick full text data retrieval capabilities in the gCube environment.

Implementation Overview

Services

The new full text index is implemented through one service. It is implemented according to the Factory pattern:

- The FullTextIndexNode Service represents an index node. It is used for managements, lookup and updating the node. It is a compaction of the 3 services that were used in the old Full Text Index.

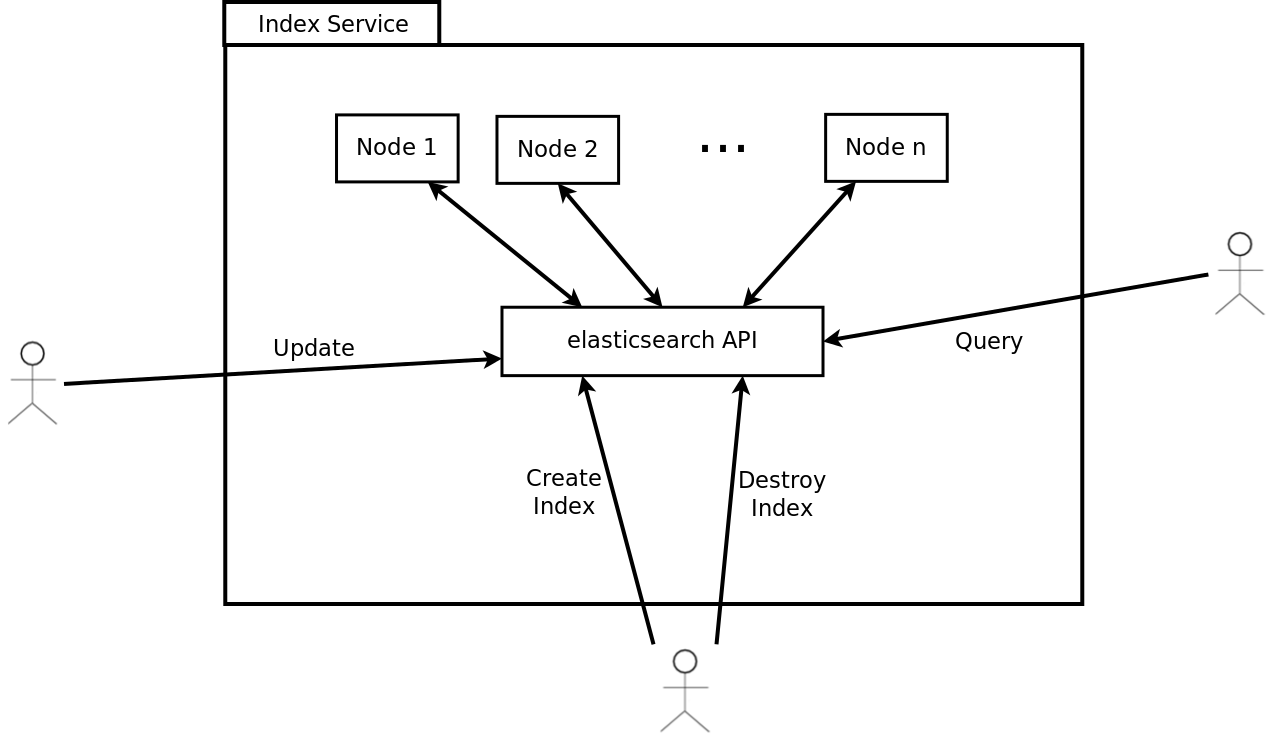

The following illustration shows the information flow and responsibilities for the different services used to implement the Full Text Index:

It is actually a wrapper over ElasticSearch and each FullTextIndexNode has a 1-1 relationship with an ElasticSearch Node. For this reason creation of multiple resources of FullTextIndexNode service is discouraged, instead the best case is to have one resource (one node) at each gHN that consists the cluster.

Clusters can be created in almost the same way that a group of lookups, updaters and a manager was created in the old Full Text Index (using the same indexID). Having multiple clusters within a scope is feasible but discouraged, because it is usually better to have one large cluster than multiple small clusters.

The clusters are distinguished by a clusterID, which is either the same as the indexID or the scope. The deployer of the service can choose between these two by setting the value of the useClusterId variable in the deploy-jndi-config.xml file to true or false respectively.

Example

<environment name="useClusterId" value="false" type="java.lang.Boolean" override="false" />

or

<environment name="useClusterId" value="true" type="java.lang.Boolean" override="false" />
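The rule above can be sketched as a small helper. This is only an illustration of the documented behaviour: the class name, the method name and the sample scope value are hypothetical and do not come from the service code.

```java
// Hypothetical helper illustrating how the clusterID is chosen.
final class ClusterIdSketch {

    /**
     * useClusterId == true  -> the indexID identifies the cluster
     * useClusterId == false -> the scope identifies the cluster
     */
    static String resolveClusterId(boolean useClusterId, String indexId, String scope) {
        return useClusterId ? indexId : scope;
    }

    public static void main(String[] args) {
        // Sample values are made up for the demonstration.
        System.out.println(resolveClusterId(true, "myIndex", "/gcube/devsec"));
        System.out.println(resolveClusterId(false, "myIndex", "/gcube/devsec"));
    }
}
```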

ElasticSearch, which is the underlying technology of the new Full Text Index, allows the number of replicas and shards to be configured for each index. This is done by setting the variables noReplicas and noShards in the deploy-jndi-config.xml file.

Example:

<environment name="noReplicas" value="1" type="java.lang.Integer" override="false" />

<environment name="noShards" value="4" type="java.lang.Integer" override="false" />

Highlighting is supported by the new Full Text Index (as it was in the old Full Text Index). If highlighting is enabled, the index returns a snippet of the text that matches the query, taken from the presentable fields. This snippet is usually a concatenation of several matching fragments from those fields. The maximum size of each fragment and the maximum number of fragments used to construct a snippet can be configured by setting the variables maxFragmentSize and maxFragmentCnt, respectively, in the deploy-jndi-config.xml file:

Example:

<environment name="maxFragmentCnt" value="100" type="java.lang.Integer" override="false" />

<environment name="maxFragmentSize" value="100" type="java.lang.Integer" override="false" />
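To make the interaction of the two limits concrete, here is a minimal sketch. In the service the highlighting itself is performed by ElasticSearch; the class below is hypothetical, and the " ... " separator between fragments is an assumption made only for this illustration.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical illustration of how maxFragmentCnt and maxFragmentSize bound a snippet.
final class SnippetSketch {

    static String buildSnippet(List<String> fragments, int maxFragmentCnt, int maxFragmentSize) {
        List<String> kept = new ArrayList<>();
        for (String fragment : fragments) {
            if (kept.size() == maxFragmentCnt) {
                break; // never use more than maxFragmentCnt fragments
            }
            // cut each fragment down to at most maxFragmentSize characters
            kept.add(fragment.length() > maxFragmentSize
                    ? fragment.substring(0, maxFragmentSize)
                    : fragment);
        }
        // the separator is an assumption of this sketch
        return String.join(" ... ", kept);
    }
}
```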

Finally, the folder where the index data is stored can be configured by setting the variable dataDir in the deploy-jndi-config.xml file (if the variable is not set, the default location is ServiceContext.getContext().getPersistenceRoot().getAbsolutePath() + "/indexData/elasticsearch/").

Example :

<environment name="dataDir" value="/tmp" type="java.lang.String" override="false" />
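The configuration entries shown in this section all share the same shape, so they can be read generically. The following sketch uses only the JDK XML parser; the class and method names are hypothetical, and the wrapping root element used when calling it is an assumption, since only the individual environment entries are shown here. The fallback for dataDir follows the default location described above.

```java
import java.io.ByteArrayInputStream;
import java.nio.charset.StandardCharsets;
import java.util.LinkedHashMap;
import java.util.Map;
import javax.xml.parsers.DocumentBuilderFactory;
import org.w3c.dom.Document;
import org.w3c.dom.Element;
import org.w3c.dom.NodeList;

// Hypothetical reader for <environment name="..." value="..."/> entries.
final class JndiEnvironmentSketch {

    /** Collects name -> value pairs from all environment elements in the given XML. */
    static Map<String, String> readEnvironment(String xml) {
        try {
            Document doc = DocumentBuilderFactory.newInstance().newDocumentBuilder()
                    .parse(new ByteArrayInputStream(xml.getBytes(StandardCharsets.UTF_8)));
            NodeList entries = doc.getElementsByTagName("environment");
            Map<String, String> values = new LinkedHashMap<>();
            for (int i = 0; i < entries.getLength(); i++) {
                Element entry = (Element) entries.item(i);
                values.put(entry.getAttribute("name"), entry.getAttribute("value"));
            }
            return values;
        } catch (Exception e) {
            throw new IllegalArgumentException("Not a well-formed configuration fragment", e);
        }
    }

    /** Returns dataDir if configured, otherwise the default below the persistence root. */
    static String resolveDataDir(Map<String, String> env, String persistenceRoot) {
        String configured = env.get("dataDir");
        return configured != null ? configured : persistenceRoot + "/indexData/elasticsearch/";
    }
}
```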

CQL capabilities implementation

The Full Text Index uses Lucene as its underlying technology. A CQL Index-Relation-Term triple has a straightforward transformation into Lucene query syntax. This transformation is explained through the following examples:

| CQL triple | Explanation | Lucene equivalent |
|---|---|---|
| title adj "sun is up" | documents with this phrase in their title | title:"sun is up" |
| title fuzzy "invorvement" | documents with words "similar" to invorvement in their title | title:invorvement~ |
| allIndexes = "italy" (documents have 2 fields; title and abstract) | documents with the word italy in some of their fields | title:italy OR abstract:italy |
| title proximity "5 sun up" | documents with the words sun, up inside an interval of 5 words in their title | title:"sun up"~5 |
| date within "2005 2008" | documents with a date between 2005 and 2008 | date:[2005 TO 2008] |

In a complete CQL query, the triples are connected with boolean operators. Lucene supports AND, OR and NOT (AND-NOT) connections between single criteria. Thus, to transform a complete CQL query into a Lucene query, we first transform the CQL triples and then connect them with the equivalent AND, OR and NOT operators.
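The transformation in the table can be sketched as a small string translator. This is not the service's actual implementation: the class and method names are hypothetical, terms are passed without their CQL quotes, and the list of fields used to expand allIndexes is supplied by the caller.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical translator from CQL triples to Lucene query syntax.
final class CqlToLuceneSketch {

    /** Translates one CQL (index, relation, term) triple into Lucene query syntax. */
    static String triple(String index, String relation, String term, List<String> allFields) {
        if (index.equals("allIndexes")) {
            // Expand the pseudo-index to every known field, joined with OR.
            List<String> parts = new ArrayList<>();
            for (String field : allFields) {
                parts.add(triple(field, relation, term, allFields));
            }
            return String.join(" OR ", parts);
        }
        switch (relation) {
            case "=":
                return index + ":" + term;
            case "adj":
                return index + ":\"" + term + "\"";
            case "fuzzy":
                return index + ":" + term + "~";
            case "proximity": {
                // Term layout "<distance> <word> <word> ...", e.g. "5 sun up".
                String[] split = term.split(" ", 2);
                return index + ":\"" + split[1] + "\"~" + split[0];
            }
            case "within": {
                // Term layout "<lower> <upper>", e.g. "2005 2008".
                String[] bounds = term.split(" ", 2);
                return index + ":[" + bounds[0] + " TO " + bounds[1] + "]";
            }
            default:
                throw new IllegalArgumentException("Unsupported relation: " + relation);
        }
    }

    /** Connects two translated sub-queries with AND, OR or NOT. */
    static String connect(String left, String operator, String right) {
        return "(" + left + ") " + operator + " (" + right + ")";
    }
}
```

For example, connecting the translations of title adj "sun is up" and date within "2005 2008" with AND yields (title:"sun is up") AND (date:[2005 TO 2008]).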

RowSet

The content to be fed into an Index must be served as a ResultSet containing XML documents conforming to the ROWSET schema. This is a very simple schema, declaring that a document (ROW element) should consist of any number of FIELD elements, each with a name attribute and the text to be indexed for that field. The following is a simple but valid ROWSET containing two documents:

<ROWSET idxType="IndexTypeName" colID="colA" lang="en">

<ROW>

<FIELD name="ObjectID">doc1</FIELD>

<FIELD name="title">How to create an Index</FIELD>

<FIELD name="contents">Just read the WIKI</FIELD>

</ROW>

<ROW>

<FIELD name="ObjectID">doc2</FIELD>

<FIELD name="title">How to create a Nation</FIELD>

<FIELD name="contents">Talk to the UN</FIELD>

<FIELD name="references">un.org</FIELD>

</ROW>

</ROWSET>

The attributes idxType and colID are required and specify the Index Type that the Index must have been created with, and the collection ID of the documents under the <ROWSET> element. The lang attribute is optional, and specifies the language of the documents under the <ROWSET> element. Note that for each document a required field is the "ObjectID" field that specifies its unique identifier.
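A ROWSET of this shape can be produced with a few lines of code. The builder below is a hypothetical helper, not part of the gCube libraries; it writes the required idxType and colID attributes, the optional lang attribute, and puts the required ObjectID field first in every ROW.

```java
import java.util.Map;

// Hypothetical builder for ROWSET documents as described above.
final class RowSetSketch {
    private final StringBuilder xml = new StringBuilder();

    /** idxType and colID are required; lang may be null because it is optional. */
    RowSetSketch(String idxType, String colID, String lang) {
        xml.append("<ROWSET idxType=\"").append(escape(idxType))
           .append("\" colID=\"").append(escape(colID)).append("\"");
        if (lang != null) {
            xml.append(" lang=\"").append(escape(lang)).append("\"");
        }
        xml.append(">\n");
    }

    /** Adds one document; the ObjectID field is always written first. */
    RowSetSketch addRow(String objectId, Map<String, String> fields) {
        xml.append("<ROW>\n");
        appendField("ObjectID", objectId);
        for (Map.Entry<String, String> field : fields.entrySet()) {
            appendField(field.getKey(), field.getValue());
        }
        xml.append("</ROW>\n");
        return this;
    }

    String build() {
        return xml.toString() + "</ROWSET>";
    }

    private void appendField(String name, String text) {
        xml.append("<FIELD name=\"").append(escape(name)).append("\">")
           .append(escape(text)).append("</FIELD>\n");
    }

    /** Escapes the characters that are special in XML text and attribute values. */
    private static String escape(String s) {
        return s.replace("&", "&amp;").replace("<", "&lt;")
                .replace(">", "&gt;").replace("\"", "&quot;");
    }
}
```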

IndexType

How the different fields in the ROWSET should be handled by the Index, and how the different fields in an Index should be handled during a query, is specified through an IndexType; an XML document conforming to the IndexType schema. An IndexType contains a field list which contains all the fields which should be indexed and/or stored in order to be presented in the query results, along with a specification of how each of the fields should be handled. The following is a possible IndexType for the type of ROWSET shown above:

<index-type>

<field-list>

<field name="title" lang="en">

<index>yes</index>

<store>yes</store>

<return>yes</return>

<tokenize>yes</tokenize>

<sort>no</sort>

<boost>1.0</boost>

</field>

<field name="contents" lang="en">

<index>yes</index>

<store>no</store>

<return>no</return>

<tokenize>yes</tokenize>

<sort>no</sort>

<boost>1.0</boost>

</field>

<field name="references" lang="en">

<index>yes</index>

<store>no</store>

<return>no</return>

<tokenize>yes</tokenize>

<sort>no</sort>

<boost>1.0</boost>

</field>

<field name="gDocCollectionID">

<index>yes</index>

<store>yes</store>

<return>yes</return>

<tokenize>yes</tokenize>

<sort>no</sort>

<boost>1.0</boost>

</field>

<field name="gDocCollectionLang">

<index>yes</index>

<store>yes</store>

<return>yes</return>

<tokenize>yes</tokenize>

<sort>no</sort>

<boost>1.0</boost>

</field>

</field-list>

</index-type>

Note that the fields "gDocCollectionID" and "gDocCollectionLang" are always required because, by default, all documents will have a collection ID and a language ("unknown" if no collection is specified). Fields present in the ROWSET but not in the IndexType will be skipped. The elements under each "field" element define how that field should be handled, and they should contain either "yes" or "no". The meaning of each of them is explained below:

- index: specifies whether the specific field should be indexed or not (i.e. whether the index should look for hits within this field).

- store: specifies whether the field should be stored in its original format to be returned in the results from a query.

- return: specifies whether a stored field should be returned in the results from a query. A field must have been stored to be returned. (This element is not available in the currently deployed indices.)

- tokenize: specifies whether the field should be tokenized. Should usually contain "yes".

- sort: not used.

- boost: not used.
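As an illustration of how these flags can be read, the sketch below parses an IndexType document with the JDK XML parser and lists the fields for which a given flag is "yes". The class and method names are hypothetical; the index service itself may process IndexTypes differently.

```java
import java.io.ByteArrayInputStream;
import java.nio.charset.StandardCharsets;
import java.util.ArrayList;
import java.util.List;
import javax.xml.parsers.DocumentBuilderFactory;
import org.w3c.dom.Element;
import org.w3c.dom.Node;
import org.w3c.dom.NodeList;

// Hypothetical reader for the yes/no flags of an IndexType.
final class IndexTypeSketch {

    /** Returns the names of all fields whose flag element (e.g. "index", "store") is "yes". */
    static List<String> fieldsWithFlag(String indexTypeXml, String flag) {
        try {
            NodeList fields = DocumentBuilderFactory.newInstance().newDocumentBuilder()
                    .parse(new ByteArrayInputStream(indexTypeXml.getBytes(StandardCharsets.UTF_8)))
                    .getElementsByTagName("field");
            List<String> names = new ArrayList<>();
            for (int i = 0; i < fields.getLength(); i++) {
                Element field = (Element) fields.item(i);
                if ("yes".equals(directChildText(field, flag))) {
                    names.add(field.getAttribute("name"));
                }
            }
            return names;
        } catch (Exception e) {
            throw new IllegalArgumentException("Not a well-formed IndexType", e);
        }
    }

    /** Text of the first direct child element with the given tag, or null if absent. */
    private static String directChildText(Element parent, String tag) {
        for (Node child = parent.getFirstChild(); child != null; child = child.getNextSibling()) {
            if (child instanceof Element && tag.equals(child.getNodeName())) {
                return child.getTextContent().trim();
            }
        }
        return null;
    }
}
```

Only direct children of a field element are inspected, so a flag belonging to a nested field is not mistaken for a flag of its parent.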

For more complex content types, one can also specify sub-fields as in the following example:

<index-type>

<field-list>

<field name="contents">

<index>yes</index>

<store>no</store>

<return>no</return>

<tokenize>yes</tokenize>

<sort>no</sort>

<boost>1.0</boost>

<!-- subfields of contents -->

<field name="title">

<index>yes</index>

<store>yes</store>

<return>yes</return>

<tokenize>yes</tokenize>

<sort>no</sort>

<boost>1.0</boost>

<!-- subfields of title which itself is a subfield of contents -->

<field name="bookTitle">

<index>yes</index>

<store>yes</store>

<return>yes</return>

<tokenize>yes</tokenize>

<sort>no</sort>

<boost>1.0</boost>

</field>

<field name="chapterTitle">

<index>yes</index>

<store>yes</store>

<return>yes</return>

<tokenize>yes</tokenize>

<sort>no</sort>

<boost>1.0</boost>

</field>

</field>

<field name="foreword">

<index>yes</index>

<store>yes</store>

<return>yes</return>

<tokenize>yes</tokenize>

<sort>no</sort>

<boost>1.0</boost>

</field>

<field name="startChapter">

<index>yes</index>

<store>yes</store>

<return>yes</return>

<tokenize>yes</tokenize>

<sort>no</sort>

<boost>1.0</boost>

</field>

<field name="endChapter">

<index>yes</index>

<store>yes</store>

<return>yes</return>

<tokenize>yes</tokenize>

<sort>no</sort>

<boost>1.0</boost>

</field>

</field>

<!-- not a subfield -->

<field name="references">

<index>yes</index>

<store>no</store>

<return>no</return>

<tokenize>yes</tokenize>

<sort>no</sort>

<boost>1.0</boost>

</field>

</field-list>

</index-type>

Querying the field "contents" in an index using this IndexType would return hits in all its sub-fields, which is all fields except "references". Querying the field "title" would return hits in both "bookTitle" and "chapterTitle" in addition to hits in the "title" field. Querying the field "startChapter" would only return hits from "startChapter", since this field does not contain any sub-fields. Please be aware that using sub-fields adds extra fields to the index, and therefore uses more disk space.
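The sub-field behaviour amounts to a recursive walk over the field tree. The sketch below hard-codes the hierarchy of the IndexType example above in a hypothetical map and returns the fields in which a query on a given field would find hits; it illustrates the rule and is not the index's own code.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collections;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

// Hypothetical model of the sub-field hierarchy from the IndexType example.
final class SubFieldSketch {

    // Parent field -> direct sub-fields.
    private static final Map<String, List<String>> SUB_FIELDS = new HashMap<>();
    static {
        SUB_FIELDS.put("contents", Arrays.asList("title", "foreword", "startChapter", "endChapter"));
        SUB_FIELDS.put("title", Arrays.asList("bookTitle", "chapterTitle"));
    }

    /** Returns the queried field followed by all of its direct and indirect sub-fields. */
    static List<String> searchedFields(String field) {
        List<String> result = new ArrayList<>();
        result.add(field);
        for (String sub : SUB_FIELDS.getOrDefault(field, Collections.<String>emptyList())) {
            result.addAll(searchedFields(sub));
        }
        return result;
    }
}
```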

We currently have five standard index types, loosely based on the available metadata schemas. However any data can be indexed using each, as long as the RowSet follows the IndexType:

- index-type-default-1.0 (DublinCore)

- index-type-TEI-2.0

- index-type-eiDB-1.0

- index-type-iso-1.0

- index-type-FT-1.0

Query language

The Full Text Index receives CQL queries and transforms them into Lucene queries. Queries using wildcards will not return usable query statistics.

Usage Example

Create a FullTextIndex Node, feed and query using the corresponding client library

FullTextIndexNodeFactoryCLProxyI proxyRandomf = FullTextIndexNodeFactoryDSL.getFullTextIndexNodeFactoryProxyBuilder().build();
//Create a resource
CreateResource createResource = new CreateResource();
CreateResourceResponse output = proxyRandomf.createResource(createResource);
//Get the reference
StatefulQuery q = FullTextIndexNodeDSL.getSource().withIndexID(output.IndexID).build();
List<EndpointReference> refs = q.fire();
//Get a proxy
try {
    FullTextIndexNodeCLProxyI proxyRandom = FullTextIndexNodeDSL.getFullTextIndexNodeProxyBuilder().at((W3CEndpointReference) refs.get(0)).build();
    //Feed
    proxyRandom.feedLocator(locator);
    //Query
    proxyRandom.query(query);
} catch (FullTextIndexNodeException e) {
    //Handle the exception
}

The IndexType of a FullTextIndexManagement Service resource can be changed as long as no FullTextIndexUpdater resources have connected to it. The reason for this limitation is that the processing of fields should be the same for all documents in an index; all documents in an index should be handled according to the same IndexType.

The IndexType of a FullTextIndexLookup Service resource is originally retrieved from the FullTextIndexManagement Service resource it is connected to. However, the "returned" property can be changed at any time in order to change which fields are returned. Keep in mind that only fields which have a "stored" attribute set to "yes" can have their "returned" field altered to return content.

Statistics

Linguistics

The linguistics component is used in the Full Text Index.

Two linguistics components are available; the language identifier module, and the lemmatizer module.

The language identifier module is used during feeding in the FullTextBatchUpdater to identify the language in the documents. The lemmatizer module is used by the FullTextLookup module during search operations to search for all possible forms (nouns and adjectives) of the search term.

The language identifier module has two real implementations (plugins) and a dummy plugin (doing nothing, returning always "nolang" when called). The lemmatizer module contains one real implementation (one plugin) (no suitable alternative was found to make a second plugin), and a dummy plugin (always returning an empty String "").

Fast has provided proprietary technology for one of the language identifier modules (Fastlangid) and for the lemmatizer module (Fastlemmatizer). The modules provided by Fast require a valid license to run (see Linguistics Licenses below). The license is a 32-character string. This string must be provided by Fast (contact Stefan Debald, stefan.debald@fast.no) and saved in the appropriate configuration file (see Linguistics Licenses below).

The current license is valid until the end of March 2008.

Plugin implementation

The classes implementing the plugin framework for the language identifier and the lemmatizer are in the SVN module common. The packages are:

org/gcube/indexservice/common/linguistics/lemmatizerplugin and org/gcube/indexservice/common/linguistics/langidplugin

The class LanguageIdFactory loads an instance of the class LanguageIdPlugin. The class LemmatizerFactory loads an instance of the class LemmatizerPlugin.

The language id plugins implement the class org.gcube.indexservice.common.linguistics.langidplugin.LanguageIdPlugin. The lemmatizer plugins implement the class org.gcube.indexservice.common.linguistics.lemmatizerplugin.LemmatizerPlugin. The factories use the method:

Class.forName(pluginName).newInstance();

when loading the implementations. The parameter pluginName is the fully qualified name (package plus class name) of the plugin class to be loaded and instantiated.
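The loading mechanism can be illustrated with a self-contained sketch. The interface and dummy plugin below are simplified stand-ins, not the real LanguageIdPlugin classes, and the sketch calls getDeclaredConstructor().newInstance() because Class.newInstance() has been deprecated since Java 9; the effect for a class with a public no-argument constructor is the same.

```java
// Hypothetical stand-in for the plugin factories described above.
final class PluginLoaderSketch {

    /** Simplified stand-in for the plugin contract; the real class may differ. */
    interface LanguagePlugin {
        String identify(String text);
    }

    /** Dummy plugin that, like the one described in the text, always answers "nolang". */
    public static final class DummyPlugin implements LanguagePlugin {
        @Override
        public String identify(String text) {
            return "nolang";
        }
    }

    /** Loads and instantiates the plugin class with the given fully qualified name. */
    static LanguagePlugin load(String pluginName) {
        try {
            return (LanguagePlugin) Class.forName(pluginName)
                    .getDeclaredConstructor().newInstance();
        } catch (ReflectiveOperationException | ClassCastException e) {
            throw new IllegalArgumentException("Cannot load plugin " + pluginName, e);
        }
    }

    public static void main(String[] args) {
        LanguagePlugin plugin = load(DummyPlugin.class.getName());
        System.out.println(plugin.identify("some text"));
    }
}
```

Because the plugin is chosen by name at runtime, a new implementation can be added to the classpath without changing the factory.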

Language Identification

There are two real implementations of the language identification plugin available in addition to the dummy plugin that always returns "nolang".

The following plugin implementations can be selected when the FullTextBatchUpdaterResource is created:

org.gcube.indexservice.common.linguistics.jtextcat.JTextCatPlugin

org.gcube.indexservice.linguistics.fastplugin.FastLanguageIdPlugin

org.gcube.indexservice.common.linguistics.languageidplugin.DummyLangidPlugin

JTextCat

JTextCat is maintained at http://textcat.sourceforge.net/. It is a lightweight text categorization tool written in Java. It implements the N-Gram-Based Text Categorization algorithm described here: http://citeseer.ist.psu.edu/68861.html. It supports the following languages: German, English, French, Spanish, Italian, Swedish, Polish, Dutch, Norwegian, Finnish, Albanian, Slovakian, Slovenian, Danish and Hungarian.

JTextCat is loaded and accessed by the plugin: org.gcube.indexservice.common.linguistics.jtextcat.JTextCatPlugin

JTextCat needs no config or bigram files, since all the statistical data about the languages is contained in the package itself.

The JTextCat is delivered in the jar file: textcat-1.0.1.jar.

The license for the JTextCat: http://www.gnu.org/copyleft/lesser.html

Fastlangid

The Fast language identification module is developed by Fast. It supports "all" languages used on the web. The tool is implemented in C++. The C++ code is loaded as a shared library object. The Fast langid plugin interfaces with a Java wrapper that loads the shared library objects and calls the native C++ code. The shared library objects are compiled on Linux RHE3 and RHE4.

The Java native interface is generated using Swig.

The Fast langid module is loaded by the plugin (using the LanguageIdFactory)

org.gcube.indexservice.linguistics.fastplugin.FastLanguageIdPlugin

The plugin loads the shared object library and, when init is called, instantiates the native C++ objects that identify the languages.

The Fastlangid is in the SVN module: trunk/linguistics/fastlinguistics/fastlangid

The lib catalog contains one catalog for RHE3 and one catalog for RHE4 shared objects (.so). The etc catalog contains the config files. The license string is contained in the config file config.txt

The shared library object is called liblangid.so

The configuration files for the langid module are installed in $GLOBUS_LOCATION/etc/langid.

The org_gcube_indexservice_langid.jar contains the plugin FastLanguageIdPlugin (which is loaded by the LanguageIdFactory) and the Java native interface to the shared library object.

The shared library object liblangid.so is deployed in the $GLOBUS_LOCATION/lib catalogue.

The license for the Fastlangid plugin:

Language Identifier Usage

The language identifier is used by the Full Text Updater in the Full Text Index. The plugin to use for an updater is decided when the resource is created, as part of the create resource call (see Full Text Updater). The parameter is the fully qualified class name of the implementation to be loaded and used to identify the language.

The language identification module and the lemmatizer module are loaded at runtime by using a factory that loads the implementation that is going to be used.

The documents being fed may specify the language of each field. If present, this specified language is used when indexing the document, and the language id module is not used. If no language is specified in the document and a language identification plugin is loaded, the FullTextIndexUpdater Service will try to identify the language of the field using the loaded plugin. Since language is assigned at the collection level in Diligent, all fields of all documents in a language-aware collection should contain a "lang" attribute with the language of the collection.

A language-aware query can be performed on a per-query or per-term basis:

- the query "_querylang_en: car OR bus OR plane" will look for English occurrences of all the terms in the query.

- the queries "car OR _lang_en:bus OR plane" and "car OR _lang_en_title:bus OR plane" will only limit the terms "bus" and "title:bus" to English occurrences. (the version without a specified field will not work in the currently deployed indices)

- Since language is specified at a collection level, language aware queries should only be used for language neutral collections.
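The two query forms above differ only in their prefix, which the following sketch makes explicit. The helper class is hypothetical; it merely reproduces the prefixes shown in the examples and covers the per-term form with an explicit field, since that is the form reported to work in the deployed indices.

```java
// Hypothetical helper that builds the language prefixes shown in the examples.
final class LanguageQuerySketch {

    /** Restricts the whole query to one language, e.g. "_querylang_en: car OR bus". */
    static String wholeQuery(String lang, String query) {
        return "_querylang_" + lang + ": " + query;
    }

    /** Restricts a single term in a given field, e.g. "_lang_en_title:bus". */
    static String term(String lang, String field, String term) {
        return "_lang_" + lang + "_" + field + ":" + term;
    }

    public static void main(String[] args) {
        System.out.println(wholeQuery("en", "car OR bus OR plane"));
        System.out.println("car OR " + term("en", "title", "bus") + " OR plane");
    }
}
```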

Lemmatization

There is one real implementation of the lemmatizer plugin available, in addition to the dummy plugin that always returns "" (empty string).

The plugin implementation is selected when the FullTextLookupResource is created:

org.diligentproject.indexservice.linguistics.fastplugin.FastLemmatizerPlugin

org.diligentproject.indexservice.common.linguistics.languageidplugin.DummyLemmatizerPlugin

Fastlemmatizer

The Fast lemmatizer module is developed by Fast. The lemmatizer module depends on .aut files (config files) for the language to be lemmatized. Both expansion and reduction are supported, but only expansion is used: the terms (nouns and adjectives) in the query are expanded.

The lemmatizer is configured for the following languages: German, Italian, Portuguese, French, English, Spanish, Dutch and Norwegian. To support more languages, additional .aut files must be loaded and the config file LemmatizationQueryExpansion.xml must be updated.

The lemmatizer is implemented in C++. The C++ code is loaded as a shared library object. The Fast lemmatizer plugin interfaces with a Java wrapper that loads the shared library objects and calls the native C++ code. The shared library objects are compiled on Linux RHE3 and RHE4.

The Java native interface is generated using Swig.

The Fast lemmatizer module is loaded by the plugin (using the LemmatizerFactory)

org.diligentproject.indexservice.linguistics.fastplugin.FastLemmatizerPlugin

The plugin loads the shared object library and, when init is called, instantiates the native C++ objects.

The Fastlemmatizer is in the SVN module: trunk/linguistics/fastlinguistics/fastlemmatizer

The lib catalog contains one catalog for RHE3 and one catalog for RHE4 shared objects (.so). The etc catalog contains the config files. The license string is contained in the config file LemmatizerConfigQueryExpansion.xml. The shared library object is called liblemmatizer.so.

The configuration files for the lemmatizer module are installed in $GLOBUS_LOCATION/etc/lemmatizer.

The org_diligentproject_indexservice_lemmatizer.jar contains the plugin FastLemmatizerPlugin (that is loaded by the LemmatizerFactory) and the Java native interface to the shared library.

The shared library liblemmatizer.so is deployed in the $GLOBUS_LOCATION/lib catalogue.

$GLOBUS_LOCATION/lib must therefore be included in the LD_LIBRARY_PATH environment variable.

Fast lemmatizer configuration

The LemmatizerConfigQueryExpansion.xml file contains the paths to the .aut files that are loaded when a lemmatizer is instantiated.

<lemmas active="yes" parts_of_speech="NA">etc/lemmatizer/resources/dictionaries/lemmatization/en_NA_exp.aut</lemmas>

The path is relative to the GLOBUS_LOCATION environment variable. If this path is wrong, the Java virtual machine will core dump.

The license for the Fastlemmatizer plugin:

Fast lemmatizer logging

The lemmatizer logs info, debug and error messages to the file "lemmatizer.txt"

Lemmatization Usage

The FullTextIndexLookup Service uses expansion during lemmatization; a word (of a query) is expanded into all known versions of the word. It is of course important to know the language of the query in order to know which words to expand the query with. Currently, the same methods used to specify the language for a language-aware query are also used to specify the language for the lemmatization process. A way of separating these two specifications (such that lemmatization can be performed without performing a language-aware query) will be made available shortly.
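Query expansion can be pictured with the following sketch. The real expansion is performed by the native Fast lemmatizer using its .aut dictionaries; here the dictionary is a hypothetical in-memory map, and the OR-group output format is an assumption chosen for illustration.

```java
import java.util.List;
import java.util.Map;

// Hypothetical illustration of expanding query words into their known forms.
final class LemmaExpansionSketch {

    /** Replaces every word that has known forms by an OR-group of the word and its forms. */
    static String expand(String query, Map<String, List<String>> knownForms) {
        StringBuilder expanded = new StringBuilder();
        for (String token : query.split(" ")) {
            if (expanded.length() > 0) {
                expanded.append(' ');
            }
            List<String> forms = knownForms.get(token);
            if (forms == null || forms.isEmpty()) {
                // operators and words without known forms pass through unchanged
                expanded.append(token);
            } else {
                expanded.append('(').append(token);
                for (String form : forms) {
                    expanded.append(" OR ").append(form);
                }
                expanded.append(')');
            }
        }
        return expanded.toString();
    }
}
```

The dictionary passed in must match the language of the query, which is why the language has to be known before expansion.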

Linguistics Licenses

The current license key for fastlangid and fastlemmatizer is valid through March 2008.

If a new license is required please contact: Stefan.debald@fast.no to get a new license key.

The license must be installed both in the Fastlangid and the Fastlemmatizer module.

The fastlangid license is installed by updating the SVN text file: linguistics/fastlinguistics/fastlangid/etc/langid/config.txt

Use a text editor and replace the 32 character license string with the new license string:

// The license key
// Contact stefand.debald@fast.no for new license key:
LICSTR=KILMDEPFKHNBNPCBAKONBCCBFLKPOEFG

A running system is updated by replacing the license string in the file: $GLOBUS_LOCATION/etc/langid/config.txt as described above.
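The manual edit of config.txt can also be scripted. The sketch below is a hypothetical helper that works on the file content as a string; it assumes the LICSTR= entry sits on a line of its own and rejects keys that are not 32 characters long, as stated above. Reading and writing the file itself is left out.

```java
import java.util.regex.Matcher;
import java.util.regex.Pattern;

// Hypothetical helper that swaps the license string in the langid config text.
final class LicenseUpdateSketch {

    // Assumes the LICSTR= entry occupies a line of its own.
    private static final Pattern LICENSE_LINE = Pattern.compile("(?m)^LICSTR=.*$");

    static String replaceLicense(String configText, String newKey) {
        if (newKey.length() != 32) {
            throw new IllegalArgumentException("License key must be 32 characters long");
        }
        Matcher matcher = LICENSE_LINE.matcher(configText);
        if (!matcher.find()) {
            throw new IllegalArgumentException("No LICSTR entry found");
        }
        // quoteReplacement guards against '$' or '\' in the key being read as group references
        return matcher.replaceFirst(Matcher.quoteReplacement("LICSTR=" + newKey));
    }
}
```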

The fastlemmatizer license is installed by updating the SVN text file:

linguistics/fastlinguistics/fastlemmatizer/etc/LemmatizationConfigQueryExpansion.xml

Use a text editor and replace the 32 character license string with the new license string:

<lemmatization default_mode="query_expansion" default_query_language="en" license="KILMDEPFKHNBNPCBAKONBCCBFLKPOEFG">

The running system is updated by replacing the license string in the file: $GLOBUS_LOCATION/etc/lemmatizer/LemmatizationConfigQueryExpansion.xml

Partitioning

To handle situations where an Index replica does not fit on a single node, partitioning has been implemented for the FullTextIndexLookup Service: when there is not enough space to perform an update or addition on the FullTextIndexLookup Service resource, a new resource is created to handle all the content that did not fit on the first resource. Partitioning is handled automatically and is transparent when performing a query; the possibility of enabling or disabling partitioning will be added in the future. In the deployed Indices, partitioning has been disabled due to problems with the creation of statistics. This will be fixed shortly.

Usage Example

Create a Management Resource

//Get the factory portType
String managementFactoryURI = "http://some.domain.no:8080/wsrf/services/gcube/index/FullTextIndexManagementFactoryService";
FullTextIndexManagementFactoryServiceAddressingLocator managementFactoryLocator = new FullTextIndexManagementFactoryServiceAddressingLocator();
managementFactoryEPR = new EndpointReferenceType();
managementFactoryEPR.setAddress(new Address(managementFactoryURI));
managementFactory = managementFactoryLocator.getFullTextIndexManagementFactoryPortTypePort(managementFactoryEPR);
//Create generator resource and get endpoint reference of WS-Resource.
org.gcube.indexservice.fulltextindexmanagement.stubs.CreateResource managementCreateArguments = new org.gcube.indexservice.fulltextindexmanagement.stubs.CreateResource();
managementCreateArguments.setIndexTypeName(indexType); //Optional (only needed if not provided in RS)
managementCreateArguments.setIndexID(indexID); //Optional (should usually not be set, and the service will create the ID)
managementCreateArguments.setCollectionID("myCollectionID");
managementCreateArguments.setContentType("MetaData");
org.gcube.indexservice.fulltextindexmanagement.stubs.CreateResourceResponse managementCreateResponse = managementFactory.createResource(managementCreateArguments);
managementInstanceEPR = managementCreateResponse.getEndpointReference();
//Keep the ID that the service reports for the new index
indexID = managementCreateResponse.getIndexID();

Create an Updater Resource and start feeding

//Get the factory portType
updaterFactoryURI = "http://some.domain.no:8080/wsrf/services/gcube/index/FullTextIndexUpdaterFactoryService"; //could be on any node
updaterFactoryEPR = new EndpointReferenceType();
updaterFactoryEPR.setAddress(new Address(updaterFactoryURI));
updaterFactory = updaterFactoryLocator.getFullTextIndexUpdaterFactoryPortTypePort(updaterFactoryEPR);
//Create updater resource and get endpoint reference of WS-Resource
org.gcube.indexservice.fulltextindexupdater.stubs.CreateResource updaterCreateArguments = new org.gcube.indexservice.fulltextindexupdater.stubs.CreateResource();
//Connect to the correct Index
updaterCreateArguments.setMainIndexID(indexID);
//Now let's insert some data into the index... Firstly, get the updater EPR.
org.gcube.indexservice.fulltextindexupdater.stubs.CreateResourceResponse updaterCreateResponse = updaterFactory.createResource(updaterCreateArguments);
updaterInstanceEPR = updaterCreateResponse.getEndpointReference();
//Get updater instance PortType
updaterInstance = updaterInstanceLocator.getFullTextIndexUpdaterPortTypePort(updaterInstanceEPR);
//read the EPR of the ResultSet containing the ROWSETs to feed into the index
BufferedReader in = new BufferedReader(new FileReader(eprFile));
String line;
resultSetLocator = "";
while ((line = in.readLine()) != null) {
    resultSetLocator += line;
}
//Tell the updater to start gathering data from the ResultSet
updaterInstance.process(resultSetLocator);

Create a Lookup resource and perform a query

//Let's put it on another node for fun...
lookupFactoryURI = "http://another.domain.no:8080/wsrf/services/gcube/index/FullTextIndexLookupFactoryService";
FullTextIndexLookupFactoryServiceAddressingLocator lookupFactoryLocator = new FullTextIndexLookupFactoryServiceAddressingLocator();
EndpointReferenceType lookupFactoryEPR = null;
EndpointReferenceType lookupEPR = null;
FullTextIndexLookupFactoryPortType lookupFactory = null;
FullTextIndexLookupPortType lookupInstance = null;
//Get factory portType
lookupFactoryEPR = new EndpointReferenceType();
lookupFactoryEPR.setAddress(new Address(lookupFactoryURI));
lookupFactory = lookupFactoryLocator.getFullTextIndexLookupFactoryPortTypePort(lookupFactoryEPR);
//Create resource and get endpoint reference of WS-Resource
org.gcube.indexservice.fulltextindexlookup.stubs.CreateResource lookupCreateResourceArguments = new org.gcube.indexservice.fulltextindexlookup.stubs.CreateResource();
org.gcube.indexservice.fulltextindexlookup.stubs.CreateResourceResponse lookupCreateResponse = null;
lookupCreateResourceArguments.setMainIndexID(indexID);
lookupCreateResponse = lookupFactory.createResource(lookupCreateResourceArguments);
lookupEPR = lookupCreateResponse.getEndpointReference();
//Get instance PortType
lookupInstance = instanceLocator.getFullTextIndexLookupPortTypePort(lookupEPR);
//Perform a query
String query = "good OR evil";
String epr = lookupInstance.query(query);
//Print the results to screen. (refer to the ResultSet Framework page for a more detailed explanation)
RSXMLReader reader = null;
ResultElementBase[] results;
try {
    //create a reader for the ResultSet we created
    reader = RSXMLReader.getRSXMLReader(new RSLocator(epr));
    //Print each part of the RS to std.out
    System.out.println("<Results>");
    do {
        System.out.println(" <Part>");
        if (reader.getNumberOfResults() > 0) {
            results = reader.getResults(ResultElementGeneric.class);
            for (int i = 0; i < results.length; i++) {
                System.out.println(" " + results[i].toXML());
            }
        }
        System.out.println(" </Part>");
        if (!reader.getNextPart()) {
            break;
        }
    } while (true);
    System.out.println("</Results>");
} catch (Exception e) {
    e.printStackTrace();
}

Getting statistics from a Lookup resource

String statsLocation = lookupInstance.createStatistics(new CreateStatistics());
//Connect to a CMS Running Instance
EndpointReferenceType cmsEPR = new EndpointReferenceType();
cmsEPR.setAddress(new Address("http://swiss.domain.ch:8080/wsrf/services/gcube/contentmanagement/ContentManagementServiceService"));
ContentManagementServiceServiceAddressingLocator cmslocator = new ContentManagementServiceServiceAddressingLocator();
cms = cmslocator.getContentManagementServicePortTypePort(cmsEPR);
//Retrieve the statistics file from CMS
GetDocumentParameters getDocumentParams = new GetDocumentParameters();
getDocumentParams.setDocumentID(statsLocation);
getDocumentParams.setTargetFileLocation(BasicInfoObjectDescription.RAW_CONTENT_IN_MESSAGE);
DocumentDescription description = cms.getDocument(getDocumentParams);
//Write the statistics file from memory to disk
File downloadedFile = new File("Statistics.xml");
DecompressingInputStream input = new DecompressingInputStream(
    new BufferedInputStream(new ByteArrayInputStream(description.getRawContent()), 2048));
BufferedOutputStream output = new BufferedOutputStream(new FileOutputStream(downloadedFile), 2048);
byte[] buffer = new byte[2048];
int length;
while ((length = input.read(buffer)) >= 0) {
    output.write(buffer, 0, length);
}
input.close();
output.close();

-->

New Forward Index

The Forward Index provides storage and retrieval capabilities for documents that consist of key-value pairs. It can answer one-dimensional (referring to one key only) or multi-dimensional (referring to many keys) range queries, retrieving the documents whose key values fall within the corresponding range. The design pattern of the Forward Index Service is similar to that of the Full Text Index Service and the Geo Index Service. The Forward Index supports the following schema for each key-value pair:

- key: integer, value: string
- key: float, value: string
- key: string, value: string
- key: date, value: string

The schema for an index is given as a parameter when the index is created. The schema must be known in order to build the indices with the correct type for each field. The objects stored in the database can be anything.
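To make the notion of a one-dimensional range query concrete, here is a minimal in-memory sketch. It is not the gCube or Couchbase implementation; the class `RangeIndexSketch` and its methods are hypothetical names chosen for illustration, and a sorted map stands in for the real index of a single typed key.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.NavigableMap;
import java.util.TreeMap;

// Hypothetical stand-in for the index of ONE key (e.g. an integer "year" key).
// K is the key type declared in the schema; the stored value is a string.
class RangeIndexSketch<K extends Comparable<K>> {
    // Sorted map from key value to the documents stored under that value.
    private final NavigableMap<K, List<String>> entries = new TreeMap<>();

    // Stores a document under the given key value.
    void put(K keyValue, String document) {
        entries.computeIfAbsent(keyValue, k -> new ArrayList<>()).add(document);
    }

    // Returns every document whose key value lies in [low, high], in key order.
    // Note: TreeMap.subMap throws IllegalArgumentException if low > high.
    List<String> within(K low, K high) {
        List<String> hits = new ArrayList<>();
        for (List<String> docs : entries.subMap(low, true, high, true).values()) {
            hits.addAll(docs);
        }
        return hits;
    }
}
```

A multi-dimensional query can be thought of as one such range per key, with a document matching only if it satisfies all of them. Knowing the key type up front is what allows the values to be ordered correctly, for example numerically rather than lexicographically.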

Implementation Overview

Services

The new forward index is implemented through one service. It is implemented according to the Factory pattern:

- The ForwardIndexNode Service represents an index node. It is used for management, lookup and updating of the node. It is a compaction of the 3 services that were used in the old Full Text Index.

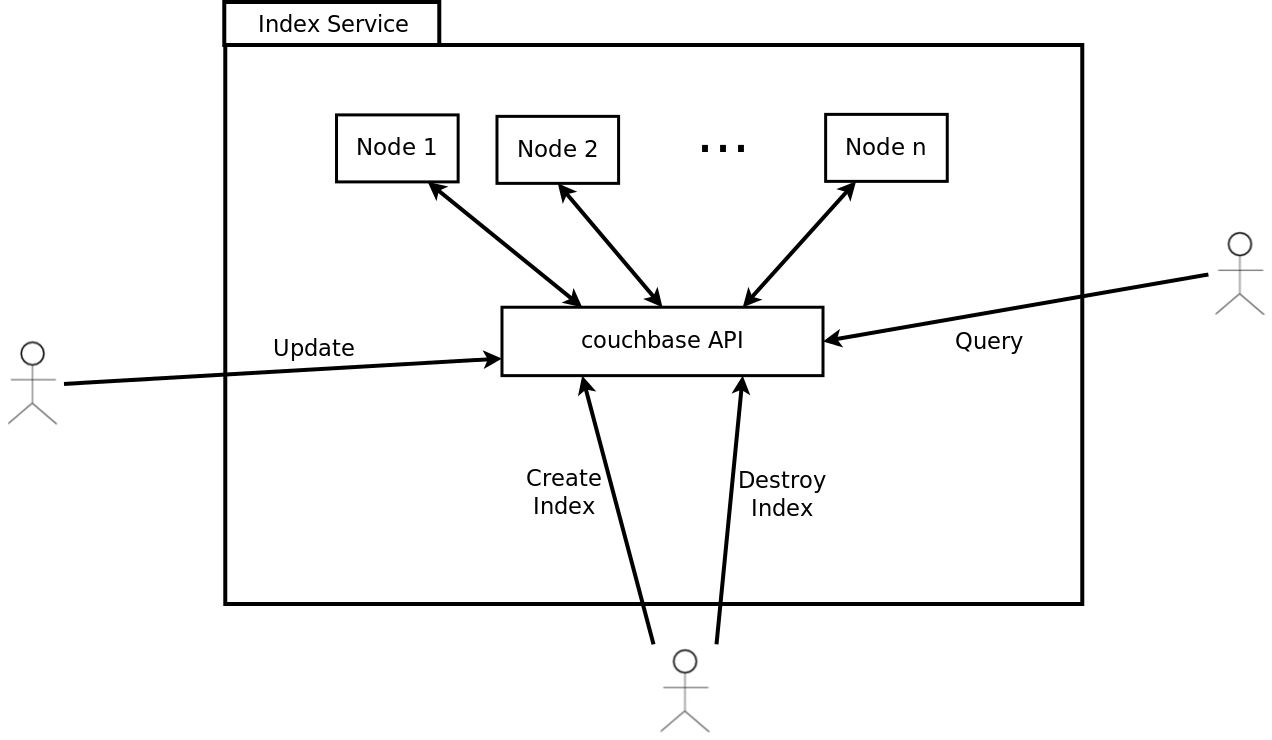

The following illustration shows the information flow and responsibilities for the different services used to implement the Forward Index:

It is actually a wrapper over Couchbase, and each ForwardIndexNode has a 1-1 relationship with a Couchbase node. For this reason, creating multiple resources of the ForwardIndexNode service is discouraged; the best case is to have one resource (one node) on each gHN that makes up the cluster.

Clusters can be created in almost the same way that a group of lookups and updaters and a manager were created in the old Full Text Index (using the same indexID). Having multiple clusters within a scope is feasible but discouraged, because it is usually better to have one large cluster than multiple small clusters.

The cluster distinction is done through a clusterID, which is either the same as the indexID or the scope. The deployer of the service can choose between these two by setting the value of the useClusterId variable in the deploy-jndi-config.xml file to true or false respectively.

Example

<environment name="useClusterId" value="false" type="java.lang.Boolean" override="false" />

or

<environment name="useClusterId" value="true" type="java.lang.Boolean" override="false" />
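The effect of the useClusterId flag can be summarized in a few lines. This is only a sketch of the rule as described in the text; `ClusterIdSketch` and `resolveClusterId` are hypothetical names and do not come from the service code.

```java
// Hypothetical helper illustrating how the clusterID follows from useClusterId.
class ClusterIdSketch {
    // true  -> the clusterID is the same as the indexID
    // false -> the clusterID is the scope
    static String resolveClusterId(boolean useClusterId, String indexID, String scope) {
        return useClusterId ? indexID : scope;
    }
}
```

With the flag set to false, every node deployed in the same scope resolves to the same clusterID, regardless of its indexID.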

Couchbase, which is the underlying technology of the new Forward Index, can configure the number of replicas for each index. This is done by setting the noReplicas variable in the deploy-jndi-config.xml file.

Example:

<environment name="noReplicas" value="1" type="java.lang.Integer" override="false" />

After deployment of the service, some properties in the deploy-jndi-config.xml file must be changed so that the service can communicate with the Couchbase server. The IP address of the gHN, the port of the Couchbase server and the credentials for the Couchbase server need to be specified. All Couchbase servers within the cluster must share the same credentials so that they know how to connect to each other.

Example:

<environment name="couchbaseIP" value="127.0.0.1" type="java.lang.String" override="false" />
<environment name="couchbasePort" value="8091" type="java.lang.String" override="false" />
<!-- should be the same for all nodes in the cluster -->
<environment name="couchbaseUsername" value="Administrator" type="java.lang.String" override="false" />
<!-- should be the same for all nodes in the cluster -->
<environment name="couchbasePassword" value="mycouchbase" type="java.lang.String" override="false" />

The total RAM of each bucket (index) can also be specified in the deploy-jndi-config.xml file.

Example:

<environment name="ramQuota" value="512" type="java.lang.Integer" override="false" />

Because Couchbase is not embedded (unlike ElasticSearch), a ForwardIndexNode may stop while the respective Couchbase server is still running. Also, the Couchbase server must be initialized separately (credentials, port, data_path etc.) the first time after its installation, before it can be used. To automate these routine processes (and some others), the following bash scripts have been developed and come with the service.

# Initialize node run:
$ cb_initialize_node.sh HOSTNAME PORT USERNAME PASSWORD
# If the service is down you need to remove the couchbase server from the cluster as well and reinitialize it in order to restart it later run :
$ cb_remove_node.sh HOSTNAME PORT USERNAME PASSWORD
# If the service is down and you want for some reason to delete the bucket (index) to rebuild it you can simply run :
$ cb_delete_bucket BUCKET_NAME HOSTNAME PORT USERNAME PASSWORD

CQL capabilities implementation

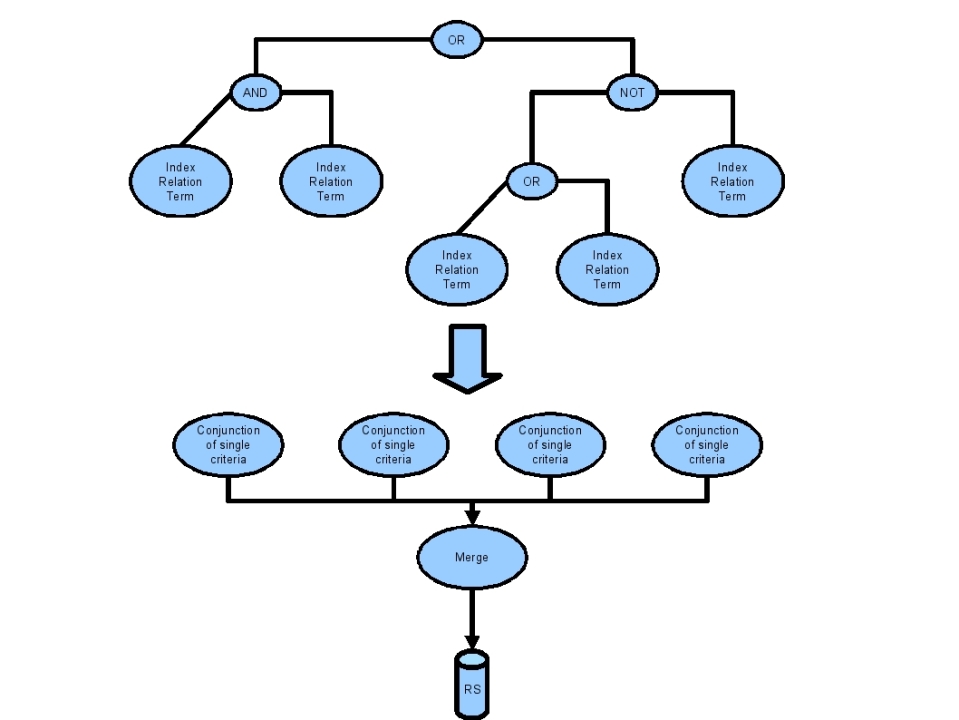

As stated in the previous section the design of the Forward Index Lookup enables the efficient execution of range queries that are conjunctions of single criteria. A initial CQL query is transformed into an equivalent one that is a union of range queries. Each range query will be a conjunction of single criteria. A single criterion will refer to a single indexed key of the Forward Index. This transformation is described by the following figure:

In a similar manner to the Geo-Spatial Index Lookup, the Forward Index Lookup exploits any opportunity to eliminate parts of the query that will not produce any results, by applying "cut-off" rules. The merge operation of the range queries' results is performed internally by the Forward Index Lookup.
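The shape of the transformed query (a union of conjunctions of single-key range criteria) and one plausible cut-off rule can be sketched as follows. The text does not specify which cut-off rules the Forward Index Lookup applies; the rule shown here, dropping a conjunction whose criteria on the same key cannot overlap, is an assumption. The names `RangeUnionSketch`, `Criterion`, `prune` and `matches` are hypothetical, and integer keys are used for brevity.

```java
import java.util.ArrayList;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Hypothetical model of a transformed query: a union (OR) of conjunctions (AND)
// of single-key range criteria.
class RangeUnionSketch {
    // One criterion: low <= document[key] <= high.
    static final class Criterion {
        final String key;
        final int low;
        final int high;

        Criterion(String key, int low, int high) {
            this.key = key;
            this.low = low;
            this.high = high;
        }
    }

    // Collapses a conjunction into one interval per key.
    // Returns null when two criteria on the same key cannot both hold.
    static Map<String, int[]> normalize(List<Criterion> conjunction) {
        Map<String, int[]> perKey = new LinkedHashMap<>();
        for (Criterion c : conjunction) {
            int[] range = perKey.computeIfAbsent(
                    c.key, k -> new int[] {Integer.MIN_VALUE, Integer.MAX_VALUE});
            range[0] = Math.max(range[0], c.low);
            range[1] = Math.min(range[1], c.high);
            if (range[0] > range[1]) {
                return null; // assumed cut-off rule: empty interval, no results possible
            }
        }
        return perKey;
    }

    // Keeps only the conjunctions that can still produce results.
    static List<Map<String, int[]>> prune(List<List<Criterion>> union) {
        List<Map<String, int[]>> kept = new ArrayList<>();
        for (List<Criterion> conjunction : union) {
            Map<String, int[]> normalized = normalize(conjunction);
            if (normalized != null) {
                kept.add(normalized);
            }
        }
        return kept;
    }

    // A document matches the union if it satisfies every interval of at least one conjunction.
    static boolean matches(List<Map<String, int[]>> union, Map<String, Integer> document) {
        for (Map<String, int[]> conjunction : union) {
            boolean all = true;
            for (Map.Entry<String, int[]> e : conjunction.entrySet()) {
                Integer value = document.get(e.getKey());
                if (value == null || value < e.getValue()[0] || value > e.getValue()[1]) {
                    all = false;
                    break;
                }
            }
            if (all) {
                return true;
            }
        }
        return false;
    }
}
```

In this sketch the merge of the per-conjunction results is implicit in `matches`, which returns as soon as one conjunction is satisfied. A real lookup would instead run each surviving range query against the index and merge the result sets.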

RowSet

The content to be fed into an Index must be served as a ResultSet containing XML documents conforming to the ROWSET schema. This is a simple schema, containing key and value pairs. The following is an example of a ROWSET that can be fed into the Forward Index Updater:

The row set "schema"

<ROWSET>
  <INSERT>
    <TUPLE>
      <KEY>
        <KEYNAME>title</KEYNAME>
        <KEYVALUE>sun is up</KEYVALUE>
      </KEY>
      <KEY>
        <KEYNAME>ObjectID</KEYNAME>
        <KEYVALUE>cms://dfe59f60-f876-11dd-8103-acc6e633ea9e/16b96b70-f877-11dd-8103-acc6e633ea9e</KEYVALUE>
      </KEY>
      <KEY>
        <KEYNAME>gDocCollectionID</KEYNAME>
        <KEYVALUE>dfe59f60-f876-11dd-8103-acc6e633ea9e</KEYVALUE>
      </KEY>
      <KEY>
        <KEYNAME>gDocCollectionLang</KEYNAME>
        <KEYVALUE>es</KEYVALUE>
      </KEY>
      <VALUE>
        <FIELD name="title">sun is up</FIELD>
        <FIELD name="ObjectID">cms://dfe59f60-f876-11dd-8103-acc6e633ea9e/16b96b70-f877-11dd-8103-acc6e633ea9e</FIELD>
        <FIELD name="gDocCollectionID">dfe59f60-f876-11dd-8103-acc6e633ea9e</FIELD>
        <FIELD name="gDocCollectionLang">es</FIELD>
      </VALUE>
    </TUPLE>
  </INSERT>
</ROWSET>

The <KEY> elements specify the indexable information, while the <FIELD> elements under the <VALUE> element specify the presentable information.
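The split between indexable and presentable information can be illustrated by reading a ROWSET with the standard JDK DOM parser. This is a sketch only: `RowsetSketch` and its methods are hypothetical names, it is unrelated to how the Forward Index Updater actually consumes a ResultSet, and it assumes a ROWSET holding a single TUPLE.

```java
import java.io.ByteArrayInputStream;
import java.nio.charset.StandardCharsets;
import java.util.LinkedHashMap;
import java.util.Map;
import javax.xml.parsers.DocumentBuilderFactory;
import org.w3c.dom.Document;
import org.w3c.dom.Element;
import org.w3c.dom.NodeList;

// Hypothetical reader for a single-TUPLE ROWSET document.
class RowsetSketch {
    // Indexable information: KEYNAME -> KEYVALUE for each <KEY> element.
    static Map<String, String> keys(String rowsetXml) {
        Map<String, String> out = new LinkedHashMap<>();
        NodeList keys = parse(rowsetXml).getElementsByTagName("KEY");
        for (int i = 0; i < keys.getLength(); i++) {
            Element key = (Element) keys.item(i);
            String name = key.getElementsByTagName("KEYNAME").item(0).getTextContent().trim();
            String value = key.getElementsByTagName("KEYVALUE").item(0).getTextContent().trim();
            out.put(name, value);
        }
        return out;
    }

    // Presentable information: the name attribute -> text of each <FIELD> element.
    static Map<String, String> fields(String rowsetXml) {
        Map<String, String> out = new LinkedHashMap<>();
        NodeList fields = parse(rowsetXml).getElementsByTagName("FIELD");
        for (int i = 0; i < fields.getLength(); i++) {
            Element field = (Element) fields.item(i);
            out.put(field.getAttribute("name"), field.getTextContent().trim());
        }
        return out;
    }

    // Parses the XML string; wraps parser errors in an unchecked exception.
    private static Document parse(String xml) {
        try {
            return DocumentBuilderFactory.newInstance().newDocumentBuilder()
                    .parse(new ByteArrayInputStream(xml.getBytes(StandardCharsets.UTF_8)));
        } catch (Exception e) {
            throw new IllegalArgumentException("Not a well-formed ROWSET document", e);
        }
    }
}
```

For the example ROWSET above, `keys` would return the four KEYNAME/KEYVALUE pairs that drive the index, and `fields` the four named FIELD values that are returned for presentation.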

Usage Example

Create a ForwardIndex Node, feed and query using the corresponding client library

ForwardIndexNodeFactoryCLProxyI proxyRandomf = ForwardIndexNodeFactoryDSL.getGeoIndexUpdaterFactoryProxyBuilder().build();
//Create a resource
CreateResource createResource = new CreateResource();
CreateResourceResponse output = proxyRandomf.createResource(createResource);
//Get the reference
StatefulQuery q = ForwardIndexNodeDSL.getSource().withIndexID(output.IndexID).build();
List<EndpointReference> refs = q.fire();
//Get a proxy
try {
    ForwardIndexNodeCLProxyI proxyRandom = ForwardIndexNodeDSL.getForwardIndexNodeProxyBuilder().at((W3CEndpointReference) refs.get(0)).build();
    //Feed
    proxyRandom.feedLocator(locator);
    //Query
    proxyRandom.query(query);
} catch (ForwardIndexNodeException e) {
    //Handle the exception
}

Storage Handling layer

The Storage Handling layer is responsible for the actual storage of the index data in the infrastructure. All the index components rely on the functionality provided by this layer in order to store and load their data. The implementation of the Storage Handling layer can easily be modified independently of the way the index components produce their data. The current implementation splits the index data into chunks called "delta files" and stores them in the Content Management Layer, through the use of the Content Management and Collection Management services. The Storage Handling service must be deployed together with any other index. It cannot be invoked directly, since it is meant to be used only internally by the upper layers of the index components hierarchy.

Index Common library

The Index Common library is another component which is meant to be used internally by the other index components. It provides some common functionality required by the various index types, such as interfaces, XML parsing utilities and definitions of some common attributes of all indices. The jar containing the library should be deployed on every node where at least one index component is deployed.