Index Management Framework

Contents

- 1 Contextual Query Language Compliance

- 2 Full Text Index

- 2.1 Implementation Overview

- 2.2 Usage Example

- 3 Geo-Spatial Index

- 4 Forward Index

- 5 Storage Handling layer

- 6 Index Common library

Contextual Query Language Compliance

The gCube Index Framework consists of the Full Text Index, the Geo-Spatial Index and the Forward Index. All of them are able to answer CQL queries. The CQL relations that each of them supports depend on the underlying technologies. The mechanisms for answering CQL queries, using the internal design and technologies, are described later for each case. The supported relations are:

- Full Text Index : =, adj, fuzzy, proximity, within

- Geo-Spatial Index : geosearch

- Forward Index : ==, within

Full Text Index

The Full Text Index is responsible for providing quick full text data retrieval capabilities in the gCube environment.

Implementation Overview

Services

The full text index is implemented through three services. They are all implemented according to the Factory pattern:

- The FullTextIndexManagement Service represents an index manager. There is a one to one relationship between an Index and a Management instance, and their life-cycles are closely related; an Index is created by creating an instance (resource) of FullTextIndexManagement Service, and an index is removed by terminating the corresponding FullTextIndexManagement resource. The FullTextIndexManagement Service should be seen as an interface for managing the life-cycle and properties of an Index, but it is not responsible for feeding or querying its index. In addition, a FullTextIndexManagement Service resource does not store the content of its Index locally, but contains references to content stored in Content Management Service.

- The FullTextIndexUpdater Service is responsible for feeding an Index. One FullTextIndexUpdater Service resource can only update a single Index, but one Index can be updated by multiple FullTextIndexUpdater Service resources. Feeding is accomplished by instantiating a FullTextIndexUpdater Service resource with the EPR of the FullTextIndexManagement resource connected to the Index to update, and connecting the updater resource to a ResultSet containing the content to be fed to the Index.

- The FullTextIndexLookup Service is responsible for creating a local copy of an index, and exposing interfaces for querying and creating statistics for the index. One FullTextIndexLookup Service resource can only replicate and lookup a single instance, but one Index can be replicated by any number of FullTextIndexLookup Service resources. Updates to the Index will be propagated to all FullTextIndexLookup Service resources replicating that Index.

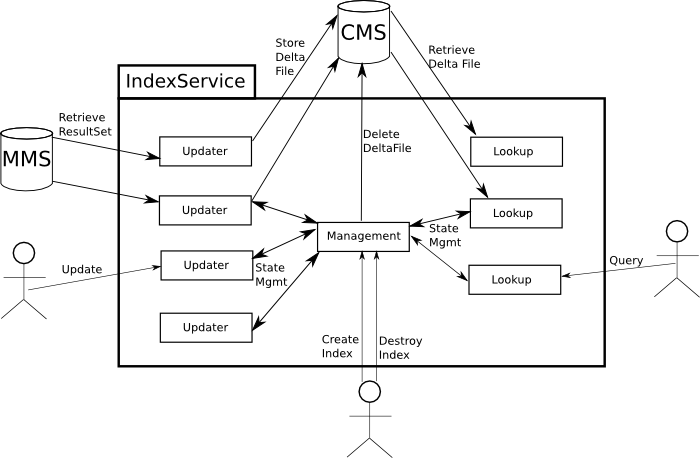

It is important to note that none of the three services have to reside on the same node; they are only connected through WebService calls, the gCube notifications' framework and the gCube Content Management System. The following illustration shows the information flow and responsibilities for the different services used to implement the Full Text Index:

CQL capabilities implementation

The Full Text Index uses Lucene as its underlying technology. A CQL Index-Relation-Term triple has a straightforward transformation into Lucene query syntax. This transformation is explained through the following examples:

| CQL triple | Explanation | Lucene equivalent |
|---|---|---|
| title adj "sun is up" | documents with this phrase in their title | title:"sun is up" |
| title fuzzy "invorvement" | documents with words "similar" to invorvement in their title | title:invorvement~ |
| allIndexes = "italy" (documents have 2 fields; title and abstract) | documents with the word italy in some of their fields | title:italy OR abstract:italy |
| title proximity "5 sun up" | documents with the words sun, up inside an interval of 5 words in their title | title:"sun up"~5 |
| date within "2005 2008" | documents with a date between 2005 and 2008 | date:[2005 TO 2008] |

In a complete CQL query, the triples are connected with boolean operators. Lucene supports AND, OR and NOT (AND-NOT) connections between single criteria. Thus, in order to transform a complete CQL query into a Lucene query, we first transform the CQL triples and then connect them with the equivalent AND, OR and NOT operators.
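The mapping in the table above can be sketched in a few lines of Java. This is only an illustration of the transformation rules, not the code used by the Full Text Index: the class name, the method name and the `ALL_FIELDS` list (taken from the two-field example in the table) are assumptions made for this sketch.

```java
import java.util.Arrays;
import java.util.List;
import java.util.stream.Collectors;

/** Illustrative only: maps one CQL triple to Lucene query syntax, following the table above. */
final class CqlTripleSketch {

    /** Fields that "allIndexes" expands to; hypothetical, taken from the two-field example. */
    static final List<String> ALL_FIELDS = Arrays.asList("title", "abstract");

    static String toLucene(String index, String relation, String term) {
        switch (relation) {
            case "=":
                if (index.equals("allIndexes")) {
                    // Search the term in every known field.
                    return ALL_FIELDS.stream()
                            .map(f -> f + ":" + term)
                            .collect(Collectors.joining(" OR "));
                }
                return index + ":" + term;
            case "adj":
                // Phrase query.
                return index + ":\"" + term + "\"";
            case "fuzzy":
                return index + ":" + term + "~";
            case "proximity": {
                // The first token is the distance, the rest are the words.
                int space = term.indexOf(' ');
                String distance = term.substring(0, space);
                String words = term.substring(space + 1);
                return index + ":\"" + words + "\"~" + distance;
            }
            case "within": {
                // The term holds the lower and upper bound of the range.
                String[] bounds = term.split(" ");
                return index + ":[" + bounds[0] + " TO " + bounds[1] + "]";
            }
            default:
                throw new IllegalArgumentException("Unsupported relation: " + relation);
        }
    }

    public static void main(String[] args) {
        System.out.println(toLucene("title", "proximity", "5 sun up"));
        System.out.println(toLucene("date", "within", "2005 2008"));
    }
}
```

A complete query would then be obtained by joining the translated triples with the boolean operators of the original CQL query.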

RowSet

The content to be fed into an Index must be served as a ResultSet containing XML documents conforming to the ROWSET schema. This is a very simple schema, declaring that a document (ROW element) should consist of any number of FIELD elements, each with a name attribute and the text to be indexed for that field. The following is a simple but valid ROWSET containing two documents:

```xml
<ROWSET idxType="IndexTypeName" colID="colA" lang="en">
  <ROW>
    <FIELD name="ObjectID">doc1</FIELD>
    <FIELD name="title">How to create an Index</FIELD>
    <FIELD name="contents">Just read the WIKI</FIELD>
  </ROW>
  <ROW>
    <FIELD name="ObjectID">doc2</FIELD>
    <FIELD name="title">How to create a Nation</FIELD>
    <FIELD name="contents">Talk to the UN</FIELD>
    <FIELD name="references">un.org</FIELD>
  </ROW>
</ROWSET>
```

The attributes idxType and colID are required and specify the Index Type that the Index must have been created with, and the collection ID of the documents under the <ROWSET> element. The lang attribute is optional, and specifies the language of the documents under the <ROWSET> element. Note that for each document a required field is the "ObjectID" field that specifies its unique identifier.
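A ROWSET like the one shown above can also be assembled programmatically. The following is a minimal sketch, assuming plain string building is acceptable for the feeding client; the class and method names are hypothetical and are not part of the gCube API. It enforces the two rules stated above: every document needs an "ObjectID" field, and the lang attribute is optional.

```java
import java.util.LinkedHashMap;
import java.util.Map;

/** Illustrative only: builds ROWSET XML as a string; not part of the gCube API. */
final class RowsetSketch {

    /** Escapes the characters that are special in XML text and attribute values. */
    static String escape(String s) {
        return s.replace("&", "&amp;").replace("<", "&lt;")
                .replace(">", "&gt;").replace("\"", "&quot;");
    }

    /** Builds one ROW; rejects documents without the required ObjectID field. */
    static String row(Map<String, String> fields) {
        if (!fields.containsKey("ObjectID")) {
            throw new IllegalArgumentException("ObjectID field is required");
        }
        StringBuilder sb = new StringBuilder("<ROW>");
        for (Map.Entry<String, String> e : fields.entrySet()) {
            sb.append("<FIELD name=\"").append(escape(e.getKey())).append("\">")
              .append(escape(e.getValue())).append("</FIELD>");
        }
        return sb.append("</ROW>").toString();
    }

    /** Wraps rows in a ROWSET; lang is optional and omitted when null. */
    static String rowset(String idxType, String colID, String lang, String... rows) {
        StringBuilder sb = new StringBuilder("<ROWSET idxType=\"").append(escape(idxType))
                .append("\" colID=\"").append(escape(colID)).append("\"");
        if (lang != null) {
            sb.append(" lang=\"").append(escape(lang)).append("\"");
        }
        sb.append(">");
        for (String r : rows) {
            sb.append(r);
        }
        return sb.append("</ROWSET>").toString();
    }

    public static void main(String[] args) {
        Map<String, String> doc = new LinkedHashMap<>();
        doc.put("ObjectID", "doc1");
        doc.put("title", "How to create an Index");
        System.out.println(rowset("IndexTypeName", "colA", "en", row(doc)));
    }
}
```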

IndexType

How the different fields in the ROWSET should be handled by the Index, and how the different fields in an Index should be handled during a query, is specified through an IndexType; an XML document conforming to the IndexType schema. An IndexType contains a field list which contains all the fields which should be indexed and/or stored in order to be presented in the query results, along with a specification of how each of the fields should be handled. The following is a possible IndexType for the type of ROWSET shown above:

```xml
<index-type>
  <field-list>
    <field name="title" lang="en">
      <index>yes</index>
      <store>yes</store>
      <return>yes</return>
      <tokenize>yes</tokenize>
      <sort>no</sort>
      <boost>1.0</boost>
    </field>
    <field name="contents" lang="en">
      <index>yes</index>
      <store>no</store>
      <return>no</return>
      <tokenize>yes</tokenize>
      <sort>no</sort>
      <boost>1.0</boost>
    </field>
    <field name="references" lang="en">
      <index>yes</index>
      <store>no</store>
      <return>no</return>
      <tokenize>yes</tokenize>
      <sort>no</sort>
      <boost>1.0</boost>
    </field>
    <field name="gDocCollectionID">
      <index>yes</index>
      <store>yes</store>
      <return>yes</return>
      <tokenize>yes</tokenize>
      <sort>no</sort>
      <boost>1.0</boost>
    </field>
    <field name="gDocCollectionLang">
      <index>yes</index>
      <store>yes</store>
      <return>yes</return>
      <tokenize>yes</tokenize>
      <sort>no</sort>
      <boost>1.0</boost>
    </field>
  </field-list>
</index-type>
```

Note that the fields "gDocCollectionID" and "gDocCollectionLang" are always required, because, by default, all documents will have a collection ID and a language ("unknown" if no collection is specified). Fields present in the ROWSET but not in the IndexType will be skipped. The elements under each "field" element are used to define how that field should be handled, and they should contain either "yes" or "no". The meaning of each of them is explained below:

- index: specifies whether the specific field should be indexed or not (i.e. whether the index should look for hits within this field).

- store: specifies whether the field should be stored in its original format to be returned in the results from a query.

- return: specifies whether a stored field should be returned in the results from a query. A field must have been stored to be returned. (This element is not available in the currently deployed indices.)

- tokenize: specifies whether the field should be tokenized. Should usually contain "yes".

- sort: not used.

- boost: not used.

For more complex content types, one can also specify sub-fields as in the following example:

```xml
<index-type>
  <field-list>
    <field name="contents">
      <index>yes</index>
      <store>no</store>
      <return>no</return>
      <tokenize>yes</tokenize>
      <sort>no</sort>
      <boost>1.0</boost>
      <!-- subfields of contents -->
      <field name="title">
        <index>yes</index>
        <store>yes</store>
        <return>yes</return>
        <tokenize>yes</tokenize>
        <sort>no</sort>
        <boost>1.0</boost>
        <!-- subfields of title which itself is a subfield of contents -->
        <field name="bookTitle">
          <index>yes</index>
          <store>yes</store>
          <return>yes</return>
          <tokenize>yes</tokenize>
          <sort>no</sort>
          <boost>1.0</boost>
        </field>
        <field name="chapterTitle">
          <index>yes</index>
          <store>yes</store>
          <return>yes</return>
          <tokenize>yes</tokenize>
          <sort>no</sort>
          <boost>1.0</boost>
        </field>
      </field>
      <field name="foreword">
        <index>yes</index>
        <store>yes</store>
        <return>yes</return>
        <tokenize>yes</tokenize>
        <sort>no</sort>
        <boost>1.0</boost>
      </field>
      <field name="startChapter">
        <index>yes</index>
        <store>yes</store>
        <return>yes</return>
        <tokenize>yes</tokenize>
        <sort>no</sort>
        <boost>1.0</boost>
      </field>
      <field name="endChapter">
        <index>yes</index>
        <store>yes</store>
        <return>yes</return>
        <tokenize>yes</tokenize>
        <sort>no</sort>
        <boost>1.0</boost>
      </field>
    </field>
    <!-- not a subfield -->
    <field name="references">
      <index>yes</index>
      <store>no</store>
      <return>no</return>
      <tokenize>yes</tokenize>
      <sort>no</sort>
      <boost>1.0</boost>
    </field>
  </field-list>
</index-type>
```

Querying the field "contents" in an index using this IndexType would return hits in all its sub-fields, which is all fields except "references". Querying the field "title" would return hits in both "bookTitle" and "chapterTitle" in addition to hits in the "title" field. Querying the field "startChapter" would only return hits from "startChapter", since this field does not contain any sub-fields. Please be aware that using sub-fields adds extra fields to the index, and therefore uses more disk space.
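The sub-field behaviour described above amounts to a depth-first walk over the field tree. The sketch below models the example IndexType as a small tree and lists which fields a query on a given field would cover. The `Field` class and `searched` method are hypothetical names for this illustration; how the index actually stores sub-fields is not shown here.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

/** Illustrative only: models sub-field nesting from the IndexType example above. */
final class SubFieldSketch {

    static final class Field {
        final String name;
        final List<Field> children;

        Field(String name, Field... children) {
            this.name = name;
            this.children = Arrays.asList(children);
        }
    }

    // The nesting from the example: contents > title > (bookTitle, chapterTitle), ...
    static final Field TITLE =
            new Field("title", new Field("bookTitle"), new Field("chapterTitle"));
    static final Field START_CHAPTER = new Field("startChapter");
    static final Field CONTENTS =
            new Field("contents", TITLE, new Field("foreword"), START_CHAPTER, new Field("endChapter"));

    /** Returns the queried field followed by all of its descendants, depth first. */
    static List<String> searched(Field field) {
        List<String> names = new ArrayList<>();
        names.add(field.name);
        for (Field child : field.children) {
            names.addAll(searched(child));
        }
        return names;
    }

    public static void main(String[] args) {
        System.out.println(searched(CONTENTS));
        System.out.println(searched(TITLE));
        System.out.println(searched(START_CHAPTER));
    }
}
```

The "references" field is a sibling of "contents" in the example, so it never appears in any of these lists.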

We currently have five standard index types, loosely based on the available metadata schemas. However any data can be indexed using each, as long as the RowSet follows the IndexType:

- index-type-default-1.0 (DublinCore)

- index-type-TEI-2.0

- index-type-eiDB-1.0

- index-type-iso-1.0

- index-type-FT-1.0

The IndexType of a FullTextIndexManagement Service resource can be changed as long as no FullTextIndexUpdater resources have connected to it. The reason for this limitation is that the processing of fields should be the same for all documents in an index; all documents in an index should be handled according to the same IndexType.

The IndexType of a FullTextIndexLookup Service resource is originally retrieved from the FullTextIndexManagement Service resource it is connected to. However, the "returned" property can be changed at any time in order to change which fields are returned. Keep in mind that only fields which have a "stored" attribute set to "yes" can have their "returned" field altered to return content.

Query language

The Full Text Index receives CQL queries and transforms them into Lucene queries. Queries using wildcards will not return usable query statistics.

Statistics

Linguistics

The linguistics component is used in the Full Text Index.

Two linguistics components are available; the language identifier module, and the lemmatizer module.

The language identifier module is used during feeding in the FullTextBatchUpdater to identify the language in the documents. The lemmatizer module is used by the FullTextLookup module during search operations to search for all possible forms (nouns and adjectives) of the search term.

The language identifier module has two real implementations (plugins) and a dummy plugin (doing nothing, returning always "nolang" when called). The lemmatizer module contains one real implementation (one plugin) (no suitable alternative was found to make a second plugin), and a dummy plugin (always returning an empty String "").

Fast has provided proprietary technology for one of the language identifier modules (Fastlangid) and the lemmatizer module (Fastlemmatizer). The modules provided by Fast require a valid license to run (see later). The license is a 32 character long string. This string must be provided by Fast (contact Stefan Debald, stefan.debald@fast.no), and saved in the appropriate configuration file (see install a linguistics license).

The current license is valid until end of March 2008.

Plugin implementation

The classes implementing the plugin framework for the language identifier and the lemmatizer are in the SVN module common. The package is:

org/gcube/indexservice/common/linguistics/lemmatizerplugin and org/gcube/indexservice/common/linguistics/langidplugin

The class LanguageIdFactory loads an instance of the class LanguageIdPlugin. The class LemmatizerFactory loads an instance of the class LemmatizerPlugin.

The language id plugins implement the class org.gcube.indexservice.common.linguistics.langidplugin.LanguageIdPlugin. The lemmatizer plugins implement the class org.gcube.indexservice.common.linguistics.lemmatizerplugin.LemmatizerPlugin. The factories use the method:

Class.forName(pluginName).newInstance();

when loading the implementations. The parameter pluginName is the fully qualified class name (package name plus class name) of the plugin class to be loaded and instantiated.
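The loading mechanism can be illustrated with a self-contained sketch. The interface and dummy plugin below are stand-ins defined only for this example (the real LanguageIdPlugin class and its methods are not reproduced here), and the error handling is an assumption. The sketch uses `getDeclaredConstructor().newInstance()`, which replaces the `Class.newInstance()` call deprecated in newer Java versions.

```java
/** Illustrative only: reflective plugin loading in the style of the factories described above. */
final class PluginLoaderSketch {

    /** Hypothetical stand-in for the real plugin base class. */
    interface LanguageIdPlugin {
        String identify(String text);
    }

    /** Stand-in dummy plugin; always answers "nolang", like the dummy described in the text. */
    static final class DummyPlugin implements LanguageIdPlugin {
        public String identify(String text) {
            return "nolang";
        }
    }

    /** Loads and instantiates the plugin whose fully qualified class name is given. */
    static LanguageIdPlugin load(String pluginName) {
        try {
            Object instance = Class.forName(pluginName).getDeclaredConstructor().newInstance();
            return (LanguageIdPlugin) instance;
        } catch (ReflectiveOperationException | ClassCastException e) {
            // Assumption of this sketch: report a bad plugin name instead of failing later.
            throw new IllegalArgumentException("Cannot load plugin: " + pluginName, e);
        }
    }

    public static void main(String[] args) {
        LanguageIdPlugin plugin = load(DummyPlugin.class.getName());
        System.out.println(plugin.identify("some text"));
    }
}
```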

Language Identification

There are two real implementations of the language identification plugin available in addition to the dummy plugin that always returns "nolang".

The following plugin implementations can be selected when the FullTextBatchUpdaterResource is created:

org.gcube.indexservice.common.linguistics.jtextcat.JTextCatPlugin

org.gcube.indexservice.linguistics.fastplugin.FastLanguageIdPlugin

org.gcube.indexservice.common.linguistics.languageidplugin.DummyLangidPlugin

JTextCat

The JTextCat is maintained by http://textcat.sourceforge.net/. It is a lightweight text categorization tool in Java. It implements the N-Gram-Based Text Categorization algorithm that is described here: http://citeseer.ist.psu.edu/68861.html. It supports the languages: German, English, French, Spanish, Italian, Swedish, Polish, Dutch, Norwegian, Finnish, Albanian, Slovakian, Slovenian, Danish and Hungarian.

The JTextCat is loaded and accessed by the plugin: org.gcube.indexservice.common.linguistics.jtextcat.JTextCatPlugin

The JTextCat contains no config or bigram files, since all the statistical data about the languages are contained in the package itself.

The JTextCat is delivered in the jar file: textcat-1.0.1.jar.

The license for the JTextCat: http://www.gnu.org/copyleft/lesser.html

Fastlangid

The Fast language identification module is developed by Fast. It supports "all" languages used on the web. The tool is implemented in C++. The C++ code is loaded as a shared library object. The Fast langid plugin interfaces with a Java wrapper that loads the shared library objects and calls the native C++ code. The shared library objects are compiled on Linux RHE3 and RHE4.

The Java native interface is generated using Swig.

The Fast langid module is loaded by the plugin (using the LanguageIdFactory)

org.gcube.indexservice.linguistics.fastplugin.FastLanguageIdPlugin

The plugin loads the shared object library and, when init is called, instantiates the native C++ objects that identify the languages.

The Fastlangid is in the SVN module: trunk/linguistics/fastlinguistics/fastlangid

The lib catalog contains one catalog for RHE3 and one catalog for RHE4 shared objects (.so). The etc catalog contains the config files. The license string is contained in the config file config.txt

The shared library object is called liblangid.so

The configuration files for the langid module are installed in $GLOBUS_LOCATION/etc/langid.

The org_gcube_indexservice_langid.jar contains the plugin FastLangidPlugin (that is loaded by the LanguageIdFactory) and the Java native interface to the shared library object.

The shared library object liblangid.so is deployed in the $GLOBUS_LOCATION/lib catalogue.

The license for the Fastlangid plugin:

Language Identifier Usage

The language identifier is used by the Full Text Updater in the Full Text Index. The plugin to use for an updater is decided when the resource is created, as a part of the create resource call. (see Full Text Updater). The parameter is the package name of the implementation to be loaded and used to identify the language.

The language identification module and the lemmatizer module are loaded at runtime by using a factory that loads the implementation that is going to be used.

The fed documents may specify the language per field. If present, this specified language is used when indexing the document, and the language id module is not used. If no language is specified in the document, and there is a language identification plugin loaded, the FullTextIndexUpdater Service will try to identify the language of the field using the loaded plugin for language identification. Since language is assigned at the Collections level in Diligent, all fields of all documents in a language aware collection should contain a "lang" attribute with the language of the collection.

A language aware query can be performed at a query or term basis:

- the query "_querylang_en: car OR bus OR plane" will look for English occurrences of all the terms in the query.

- the queries "car OR _lang_en:bus OR plane" and "car OR _lang_en_title:bus OR plane" will only limit the terms "bus" and "title:bus" to English occurrences. (the version without a specified field will not work in the currently deployed indices)

- Since language is specified at a collection level, language aware queries should only be used for language neutral collections.
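The two prefix forms shown in the list above can be produced with simple string helpers. This is a small sketch, assuming the prefixes are exactly as written in the examples; the class and method names are hypothetical.

```java
/** Illustrative only: builds the language prefixes used in the query examples above. */
final class LangQuerySketch {

    /** Restricts every term of the query to one language. */
    static String wholeQuery(String lang, String query) {
        return "_querylang_" + lang + ": " + query;
    }

    /** Restricts a single term on a given field to one language. */
    static String term(String lang, String field, String term) {
        return "_lang_" + lang + "_" + field + ":" + term;
    }

    public static void main(String[] args) {
        System.out.println(wholeQuery("en", "car OR bus OR plane"));
        System.out.println("car OR " + term("en", "title", "bus") + " OR plane");
    }
}
```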

Lemmatization

There is one real implementation of the lemmatizer plugin available in addition to the dummy plugin that always returns "" (empty string).

The plugin implementation is selected when the FullTextLookupResource is created:

org.diligentproject.indexservice.linguistics.fastplugin.FastLemmatizerPlugin

org.diligentproject.indexservice.common.linguistics.languageidplugin.DummyLemmatizerPlugin

Fastlemmatizer

The Fast lemmatizer module is developed by Fast. The lemmatizer module depends on .aut files (config files) for the language to be lemmatized. Both expansion and reduction are supported, but only expansion is used. The terms (nouns and adjectives) in the query are expanded.

The lemmatizer is configured for the following languages: German, Italian, Portuguese, French, English, Spanish, Dutch, Norwegian. To support more languages, additional .aut files must be loaded and the config file LemmatizationQueryExpansion.xml must be updated.

The lemmatizer is implemented in C++. The C++ code is loaded as a shared library object. The Fast lemmatizer plugin interfaces with a Java wrapper that loads the shared library objects and calls the native C++ code. The shared library objects are compiled on Linux RHE3 and RHE4.

The Java native interface is generated using Swig.

The Fast lemmatizer module is loaded by the plugin (using the LemmatizerFactory)

org.diligentproject.indexservice.linguistics.fastplugin.FastLemmatizerPlugin

The plugin loads the shared object library and, when init is called, instantiates the native C++ objects.

The Fastlemmatizer is in the SVN module: trunk/linguistics/fastlinguistics/fastlemmatizer

The lib catalog contains one catalog for RHE3 and one catalog for RHE4 shared objects (.so). The etc catalog contains the config files. The license string is contained in the config file LemmatizerConfigQueryExpansion.xml. The shared library object is called liblemmatizer.so

The configuration files for the lemmatizer module are installed in $GLOBUS_LOCATION/etc/lemmatizer.

The org_diligentproject_indexservice_lemmatizer.jar contains the plugin FastLemmatizerPlugin (that is loaded by the LemmatizerFactory) and the Java native interface to the shared library.

The shared library liblemmatizer.so is deployed in the $GLOBUS_LOCATION/lib catalogue.

The $GLOBUS_LOCATION/lib directory must therefore be included in the LD_LIBRARY_PATH environment variable.

Fast lemmatizer configuration

The LemmatizerConfigQueryExpansion.xml contains the paths to the .aut files that are loaded when a lemmatizer is instantiated.

<lemmas active="yes" parts_of_speech="NA">etc/lemmatizer/resources/dictionaries/lemmatization/en_NA_exp.aut</lemmas>

The path is relative to the environment variable GLOBUS_LOCATION. If this path is wrong, the Java virtual machine will core dump.
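Because a wrong path crashes the JVM rather than raising an exception, it can be worth checking the configured paths before the lemmatizer is instantiated. The following is a minimal sketch of such a check using only the standard library; the class and method names are hypothetical and the check is not part of the Fast lemmatizer plugin.

```java
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;

/** Illustrative only: verifies that a configured .aut path exists under GLOBUS_LOCATION. */
final class LemmaPathCheckSketch {

    /** Resolves a path from the config file against the GLOBUS_LOCATION directory. */
    static Path resolve(String globusLocation, String relativePath) {
        return Paths.get(globusLocation).resolve(relativePath).normalize();
    }

    /** True only if the resolved path is an existing, readable regular file. */
    static boolean isLoadable(String globusLocation, String relativePath) {
        Path p = resolve(globusLocation, relativePath);
        return Files.isRegularFile(p) && Files.isReadable(p);
    }

    public static void main(String[] args) {
        String globusLocation = System.getenv("GLOBUS_LOCATION");
        String aut = "etc/lemmatizer/resources/dictionaries/lemmatization/en_NA_exp.aut";
        if (globusLocation == null) {
            System.err.println("GLOBUS_LOCATION is not set");
        } else if (!isLoadable(globusLocation, aut)) {
            System.err.println("Missing or unreadable dictionary: " + resolve(globusLocation, aut));
        } else {
            System.out.println("Dictionary found: " + resolve(globusLocation, aut));
        }
    }
}
```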

The license for the Fastlemmatizer plugin:

Fast lemmatizer logging

The lemmatizer logs info, debug and error messages to the file "lemmatizer.txt"

Lemmatization Usage

The FullTextIndexLookup Service uses expansion during lemmatization; a word (of a query) is expanded into all known versions of the word. It is of course important to know the language of the query in order to know which words to expand the query with. Currently the same methods used to specify the language for a language aware query are used to specify the language for the lemmatization process. A way of separating these two specifications (such that lemmatization can be performed without performing a language aware query) will be made available shortly.

Linguistics Licenses

The current license key for the fastlangid and fastlemmatizer is valid through March 2008.

If a new license is required please contact: Stefan.debald@fast.no to get a new license key.

The license must be installed both in the Fastlangid and the Fastlemmatizer module.

The fastlangid license is installed by updating the SVN text file: linguistics/fastlinguistics/fastlangid/etc/langid/config.txt

Use a text editor and replace the 32 character license string with the new license string:

```
// The license key
// Contact stefand.debald@fast.no for new license key:
LICSTR=KILMDEPFKHNBNPCBAKONBCCBFLKPOEFG
```

A running system is updated by replacing the license string in the file: $GLOBUS_LOCATION/etc/langid/config.txt as described above.

The fastlemmatizer license is installed by updating the SVN text file:

linguistics/fastlinguistics/fastlemmatizer/etc/LemmatizationConfigQueryExpansion.xml

Use a text editor and replace the 32 character license string with the new license string:

<lemmatization default_mode="query_expansion" default_query_language="en" license="KILMDEPFKHNBNPCBAKONBCCBFLKPOEFG">

The running system is updated by replacing the license string in the file: $GLOBUS_LOCATION/etc/lemmatizer/LemmatizationConfigQueryExpansion.xml

Partitioning

In order to handle situations where an Index replication does not fit on a single node, partitioning has been implemented for the FullTextIndexLookup Service; in cases where there is not enough space to perform an update/addition on the FullTextIndexLookup Service resource, a new resource will be created to handle all the content which didn't fit on the first resource. The partitioning is handled automatically and is transparent when performing a query; the possibility of enabling/disabling partitioning will be added in the future. In the deployed Indices partitioning has been disabled due to problems with the creation of statistics. This will be fixed shortly.

Usage Example

Create a Management Resource

```java
// Get the factory portType
String managementFactoryURI = "http://some.domain.no:8080/wsrf/services/gcube/index/FullTextIndexManagementFactoryService";
FullTextIndexManagementFactoryServiceAddressingLocator managementFactoryLocator =
        new FullTextIndexManagementFactoryServiceAddressingLocator();
EndpointReferenceType managementFactoryEPR = new EndpointReferenceType();
managementFactoryEPR.setAddress(new Address(managementFactoryURI));
// Port type name inferred from the getter, following the pattern of the lookup service
FullTextIndexManagementFactoryPortType managementFactory =
        managementFactoryLocator.getFullTextIndexManagementFactoryPortTypePort(managementFactoryEPR);

// Create the management resource and get the endpoint reference of the WS-Resource
org.gcube.indexservice.fulltextindexmanagement.stubs.CreateResource managementCreateArguments =
        new org.gcube.indexservice.fulltextindexmanagement.stubs.CreateResource();
// indexType is a String defined by the caller; optional (only needed if not provided in RS)
managementCreateArguments.setIndexTypeName(indexType);
// Optional (should usually not be set, and the service will create the ID):
// managementCreateArguments.setIndexID(indexID);
managementCreateArguments.setCollectionID("myCollectionID");
managementCreateArguments.setContentType("MetaData");

org.gcube.indexservice.fulltextindexmanagement.stubs.CreateResourceResponse managementCreateResponse =
        managementFactory.createResource(managementCreateArguments);
EndpointReferenceType managementInstanceEPR = managementCreateResponse.getEndpointReference();
String indexID = managementCreateResponse.getIndexID();
```

Create an Updater Resource and start feeding

```java
// Get the factory portType (the factory could be on any node)
String updaterFactoryURI = "http://some.domain.no:8080/wsrf/services/gcube/index/FullTextIndexUpdaterFactoryService";
EndpointReferenceType updaterFactoryEPR = new EndpointReferenceType();
updaterFactoryEPR.setAddress(new Address(updaterFactoryURI));
// updaterFactoryLocator is assumed to have been created beforehand;
// port type names are inferred from the getters
FullTextIndexUpdaterFactoryPortType updaterFactory =
        updaterFactoryLocator.getFullTextIndexUpdaterFactoryPortTypePort(updaterFactoryEPR);

// Create updater resource and get endpoint reference of WS-Resource
org.gcube.indexservice.fulltextindexupdater.stubs.CreateResource updaterCreateArguments =
        new org.gcube.indexservice.fulltextindexupdater.stubs.CreateResource();

// Connect to the correct Index
updaterCreateArguments.setMainIndexID(indexID);

// Now let's insert some data into the index... Firstly, get the updater EPR.
org.gcube.indexservice.fulltextindexupdater.stubs.CreateResourceResponse updaterCreateResponse =
        updaterFactory.createResource(updaterCreateArguments);
EndpointReferenceType updaterInstanceEPR = updaterCreateResponse.getEndpointReference();

// Get updater instance PortType (updaterInstanceLocator is assumed to exist already)
FullTextIndexUpdaterPortType updaterInstance =
        updaterInstanceLocator.getFullTextIndexUpdaterPortTypePort(updaterInstanceEPR);

// Read the EPR of the ResultSet containing the ROWSETs to feed into the index
// (eprFile is the path of a file holding that EPR)
BufferedReader in = new BufferedReader(new FileReader(eprFile));
String line;
String resultSetLocator = "";
while ((line = in.readLine()) != null) {
    resultSetLocator += line;
}
in.close();

// Tell the updater to start gathering data from the ResultSet
updaterInstance.process(resultSetLocator);
```

Create a Lookup resource and perform a query

```java
// Let's put it on another node for fun...
String lookupFactoryURI = "http://another.domain.no:8080/wsrf/services/gcube/index/FullTextIndexLookupFactoryService";
FullTextIndexLookupFactoryServiceAddressingLocator lookupFactoryLocator =
        new FullTextIndexLookupFactoryServiceAddressingLocator();
EndpointReferenceType lookupFactoryEPR = null;
EndpointReferenceType lookupEPR = null;
FullTextIndexLookupFactoryPortType lookupFactory = null;
FullTextIndexLookupPortType lookupInstance = null;

// Get factory portType
lookupFactoryEPR = new EndpointReferenceType();
lookupFactoryEPR.setAddress(new Address(lookupFactoryURI));
lookupFactory = lookupFactoryLocator.getFullTextIndexLookupFactoryPortTypePort(lookupFactoryEPR);

// Create resource and get endpoint reference of WS-Resource
org.gcube.indexservice.fulltextindexlookup.stubs.CreateResource lookupCreateResourceArguments =
        new org.gcube.indexservice.fulltextindexlookup.stubs.CreateResource();
org.gcube.indexservice.fulltextindexlookup.stubs.CreateResourceResponse lookupCreateResponse = null;

lookupCreateResourceArguments.setMainIndexID(indexID);
lookupCreateResponse = lookupFactory.createResource(lookupCreateResourceArguments);
lookupEPR = lookupCreateResponse.getEndpointReference();

// Get instance PortType (instanceLocator is assumed to exist already)
lookupInstance = instanceLocator.getFullTextIndexLookupPortTypePort(lookupEPR);

// Perform a query
String query = "good OR evil";
String epr = lookupInstance.query(query);

// Print the results to screen
// (refer to the ResultSet Framework page for a more detailed explanation)
RSXMLReader reader = null;
ResultElementBase[] results;

try {
    // Create a reader for the ResultSet we created
    reader = RSXMLReader.getRSXMLReader(new RSLocator(epr));

    // Print each part of the RS to std.out
    System.out.println("<Results>");
    do {
        System.out.println(" <Part>");
        if (reader.getNumberOfResults() > 0) {
            results = reader.getResults(ResultElementGeneric.class);
            for (int i = 0; i < results.length; i++) {
                System.out.println(" " + results[i].toXML());
            }
        }
        System.out.println(" </Part>");
        if (!reader.getNextPart()) {
            break;
        }
    } while (true);
    System.out.println("</Results>");
} catch (Exception e) {
    e.printStackTrace();
}
```

Getting statistics from a Lookup resource

```java
String statsLocation = lookupInstance.createStatistics(new CreateStatistics());

// Connect to a CMS Running Instance
EndpointReferenceType cmsEPR = new EndpointReferenceType();
cmsEPR.setAddress(new Address("http://swiss.domain.ch:8080/wsrf/services/gcube/contentmanagement/ContentManagementServiceService"));
ContentManagementServiceServiceAddressingLocator cmslocator =
        new ContentManagementServiceServiceAddressingLocator();
// Port type name inferred from the getter
ContentManagementServicePortType cms = cmslocator.getContentManagementServicePortTypePort(cmsEPR);

// Retrieve the statistics file from CMS
GetDocumentParameters getDocumentParams = new GetDocumentParameters();
getDocumentParams.setDocumentID(statsLocation);
getDocumentParams.setTargetFileLocation(BasicInfoObjectDescription.RAW_CONTENT_IN_MESSAGE);
DocumentDescription description = cms.getDocument(getDocumentParams);

// Write the statistics file from memory to disk
File downloadedFile = new File("Statistics.xml");
DecompressingInputStream input = new DecompressingInputStream(
        new BufferedInputStream(new ByteArrayInputStream(description.getRawContent()), 2048));
BufferedOutputStream output = new BufferedOutputStream(
        new FileOutputStream(downloadedFile), 2048);
byte[] buffer = new byte[2048];
int length;
while ((length = input.read(buffer)) >= 0) {
    output.write(buffer, 0, length);
}
input.close();
output.close();
```

Geo-Spatial Index

Implementation Overview

Services

The geo index is implemented through three services, in the same manner as the full text index. They are all implemented according to the Factory pattern:

- The GeoIndexManagement Service represents an index manager. There is a one to one relationship between an Index and a Management instance, and their life-cycles are closely related; an Index is created by creating an instance (resource) of GeoIndexManagement Service, and an index is removed by terminating the corresponding GeoIndexManagement resource. The GeoIndexManagement Service should be seen as an interface for managing the life-cycle and properties of an Index, but it is not responsible for feeding or querying its index. In addition, a GeoIndexManagement Service resource does not store the content of its Index locally, but contains references to content stored in Content Management Service.

- The GeoIndexUpdater Service is responsible for feeding an Index. One GeoIndexUpdater Service resource can only update a single Index, but one Index can be updated by multiple GeoIndexUpdater Service resources. Feeding is accomplished by instantiating a GeoIndexUpdater Service resource with the EPR of the GeoIndexManagement resource connected to the Index to update, and connecting the updater resource to a ResultSet containing the content to be fed to the Index.

- The GeoIndexLookup Service is responsible for creating a local copy of an index, and exposing interfaces for querying and creating statistics for the index. One GeoIndexLookup Service resource can only replicate and lookup a single instance, but one Index can be replicated by any number of GeoIndexLookup Service resources. Updates to the Index will be propagated to all GeoIndexLookup Service resources replicating that Index.

It is important to note that none of the three services have to reside on the same node; they are only connected through WebService calls, the gCube notifications' framework and the gCube Content Management System. The following illustration shows the information flow and responsibilities for the different services used to implement the Geo Index:

Underlying Technology

Geo-Spatial Index uses Geotools as its underlying technology. The documents hosted in a Geo-Spatial Index Lookup belong to different collections and languages. For each language of each collection, the Geo-Spatial Index Lookup uses a separate R-tree to index the corresponding documents. As we will describe in the following section, the CQL queries received by a Geo Index Lookup may refer to specific languages and collections. Through this design we aim at high performance for complicated queries that involve many collections and languages.

CQL capabilities implementation

Geo-Spatial Index Lookup supports one custom CQL relation. The "geosearch" relation has a number of modifiers, that specify the collection and language of the results, the inclusion type of the query, a refiner for filtering further the results and a ranker for computing a score for each result. All of the modifiers are optional. Let's see the following example in order to understand better the geosearch relation and its modifiers:

geo geosearch/colID="colA"/lang="en"/inclusion="0"/ranker="rankerA false arg1 arg2"/refiner="refinerA arg1 arg2 arg3" "1 1 1 10 10 1 10 10"

In this example the results are the documents that belong to the collection with ID "colA", are in English, and intersect the polygon defined by the points (1,1), (1,10), (10,1), (10,10). These results are filtered by the refiner with ID "refinerA", which takes "arg1 arg2 arg3" as arguments for the filtering operation, then ordered by "rankerA", which takes "arg1 arg2" as arguments for the ranking operation, and finally returned as the output of this simple CQL query. The "false" indication in the ranker modifier signifies that we don't want reverse ordering of the results (true signifies that the higher scores must be placed at the end). There are three inclusion types: 0 is "intersects", 1 is "contains" (documents that are contained in the specified polygon) and 2 is "inside" (documents that are inside the specified polygon). The next sections provide the details for the refiners and rankers.
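To make the syntax of the geosearch relation concrete, the following minimal sketch assembles such a clause from its modifiers. The class GeoSearchQueryBuilder and its methods are hypothetical helpers written for this page and are not part of the gCube API; only the modifier names and the overall layout are taken from the example above.

```java
import java.util.StringJoiner;

// Hypothetical helper (not part of gCube): assembles a geosearch CQL clause.
final class GeoSearchQueryBuilder {
    private final StringBuilder modifiers = new StringBuilder();

    // Appends one optional modifier in the form /name="value".
    GeoSearchQueryBuilder modifier(String name, String value) {
        modifiers.append('/').append(name).append("=\"").append(value).append('"');
        return this;
    }

    // coordinates are the polygon vertices as x,y pairs: x1 y1 x2 y2 ...
    String build(double... coordinates) {
        if (coordinates.length < 6 || coordinates.length % 2 != 0) {
            throw new IllegalArgumentException("a polygon needs at least three x,y pairs");
        }
        StringJoiner points = new StringJoiner(" ");
        for (double c : coordinates) {
            // Whole numbers are printed without a trailing ".0", as in the example query.
            points.add(c == Math.rint(c) ? String.valueOf((long) c) : String.valueOf(c));
        }
        return "geo geosearch" + modifiers + " \"" + points + "\"";
    }
}
```

Since all modifiers are optional, calling build() without any prior modifier() call yields a clause that contains only the relation and the polygon.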

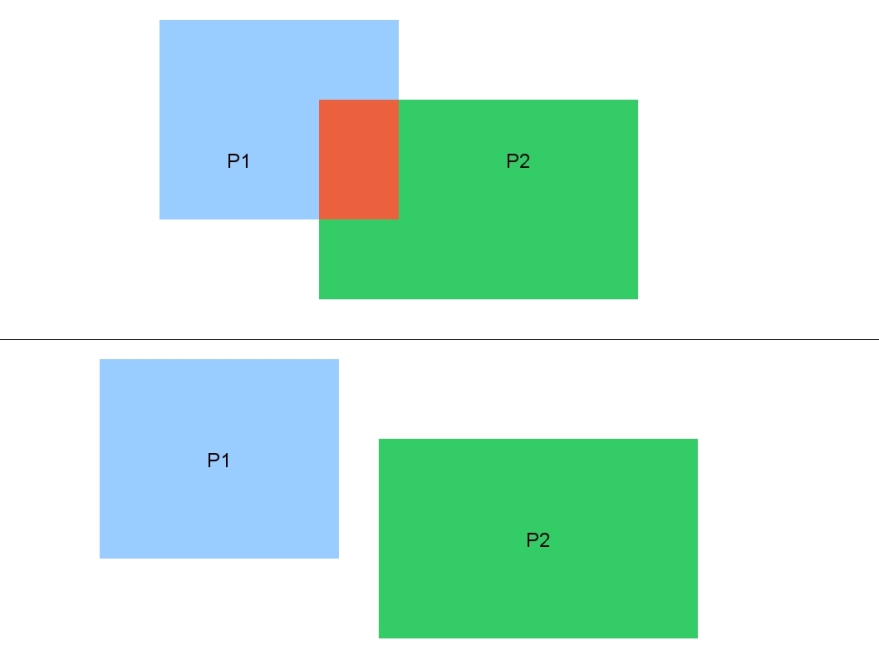

A complete CQL query for a Geo-Spatial Index Lookup will contain many CQL geosearch triples, connected with AND, OR, NOT operators. The approach we follow in order to execute CQL queries, is to apply boolean algebra rules, and transform the initial query to an equivalent one. We aim at producing a query which is a union of operations that each refers to a single R-tree. Additionally we apply "cut-off" rules that eliminate parts of the initial query that have a zero number of results. Consider the following example of a "cut-off" rule:

(geo geosearch/colID="colA"/inclusion="1" <P1>) AND (geo geosearch/colID="colA"/inclusion="1" <P2>)

Here we want documents of collection "colA" that are contained in polygon P1 AND are also contained in polygon P2. Note that if the collections in the two criteria were different, we could eliminate this subquery, since it could not produce any result (each document belongs to one collection only). Since the two criteria specify the same collection, we must examine the relation of the two polygons. If the two polygons do not intersect, there is no area in which the documents could be contained, so no document can satisfy the conjunction of the two criteria. This is depicted in the following figure:

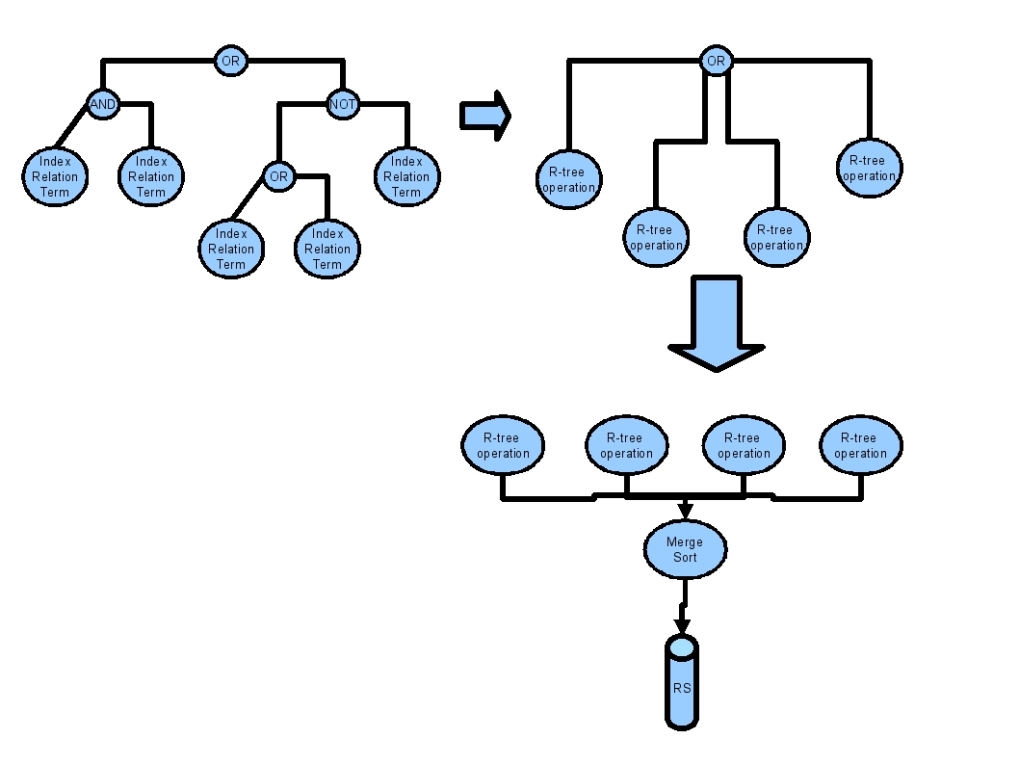
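The cut-off rule described above can be sketched in a few lines. This is only an illustration under stated assumptions: the class ContainsCriterion is hypothetical, java.awt.geom is used instead of the Geotools classes of the real implementation, and polygons that merely touch along an edge are treated as disjoint.

```java
import java.awt.geom.Area;
import java.awt.geom.Path2D;

// Hypothetical model of one geosearch criterion with inclusion="1".
final class ContainsCriterion {
    final String collectionId;
    final Path2D polygon;

    // xy holds the polygon vertices as x,y pairs.
    ContainsCriterion(String collectionId, double... xy) {
        this.collectionId = collectionId;
        this.polygon = new Path2D.Double();
        polygon.moveTo(xy[0], xy[1]);
        for (int i = 2; i + 1 < xy.length; i += 2) {
            polygon.lineTo(xy[i], xy[i + 1]);
        }
        polygon.closePath();
    }

    // True when "a AND b" provably has no results and can be eliminated.
    static boolean canCutOff(ContainsCriterion a, ContainsCriterion b) {
        if (!a.collectionId.equals(b.collectionId)) {
            return true; // each document belongs to one collection only
        }
        Area overlap = new Area(a.polygon);
        overlap.intersect(new Area(b.polygon));
        return overlap.isEmpty(); // no common area, so nothing can lie in both
    }
}
```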

The transformation of an initial CQL query to a union of R-tree operations is depicted in the following figure:

Each R-tree operation refers to a lookup operation for a given polygon on a single R-tree. The MergeSorter component implements the union of the individual R-tree operations, based on the scores of the results. Flow control is supported by the MergeSorter component in order to pause and synchronize the workers that execute the single R-tree operations, depending on the behavior of the client that reads the results.
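The score-based union can be pictured as a k-way merge. The sketch below is not the MergeSorter component itself: it assumes that every R-tree operation delivers its hits already ordered by descending score, it ignores flow control entirely, and the names ScoredHit and ScoreMerge are made up for the illustration.

```java
import java.util.ArrayList;
import java.util.Iterator;
import java.util.List;
import java.util.PriorityQueue;

// Hypothetical result type: a document ID plus the score given by a ranker.
final class ScoredHit {
    final String docId;
    final double score;
    ScoredHit(String docId, double score) { this.docId = docId; this.score = score; }
}

final class ScoreMerge {
    // One cursor per R-tree operation; head is the next hit of that stream.
    private static final class Cursor {
        final Iterator<ScoredHit> rest;
        ScoredHit head;
        Cursor(Iterator<ScoredHit> it) { rest = it; head = it.next(); }
    }

    // Merges streams that are each sorted by descending score.
    static List<ScoredHit> merge(List<List<ScoredHit>> streams) {
        PriorityQueue<Cursor> queue = new PriorityQueue<>(
            (a, b) -> Double.compare(b.head.score, a.head.score));
        for (List<ScoredHit> stream : streams) {
            if (!stream.isEmpty()) {
                queue.add(new Cursor(stream.iterator()));
            }
        }
        List<ScoredHit> merged = new ArrayList<>();
        while (!queue.isEmpty()) {
            Cursor best = queue.poll();      // stream whose head has the highest score
            merged.add(best.head);
            if (best.rest.hasNext()) {       // advance that stream and re-queue it
                best.head = best.rest.next();
                queue.add(best);
            }
        }
        return merged;
    }
}
```

Only one hit per stream is held in the queue at any time, which is what makes it possible in principle to pause a worker until the client has consumed more results.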

RowSet

The content to be fed into a Geo Index must be served as a ResultSet containing XML documents conforming to the GeoROWSET schema. This is a very simple schema, declaring that an object (ROW element) should contain an id, start and end X coordinates (x1 is mandatory; x2 is set equal to x1 if not provided) as well as start and end Y coordinates (y1 is mandatory; y2 is set equal to y1 if not provided). In addition, a ROW can contain any number of FIELD elements, each with a name attribute and information to be stored and perhaps used for refinement of a query or ranking of results. As opposed to the ROWSETs used for fulltext indices, all rows in a GeoROWSET must contain all fields specified in the IndexType. The following is a simple but valid GeoROWSET containing two objects:

<ROWSET>

<ROW id="doc1" x1="4321" y1="1234">

<FIELD name="StartTime">2001-05-27T14:35:25.523</FIELD>

<FIELD name="EndTime">2001-05-27T14:38:03.764</FIELD>

</ROW>

<ROW id="doc2" x1="1337" x2="4123" y1="1337" y2="6534">

<FIELD name="StartTime">2001-06-27</FIELD>

<FIELD name="EndTime">2001-07-27</FIELD>

</ROW>

</ROWSET>
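The defaulting rule for x2 and y2 can be illustrated with a small reader for such a ROWSET. This is a sketch using only the JDK XML parser; the class GeoRowReader is hypothetical, and treating the coordinates as whole numbers is an assumption based on the example above, not something the schema description states.

```java
import java.io.ByteArrayInputStream;
import java.nio.charset.StandardCharsets;
import javax.xml.parsers.DocumentBuilderFactory;
import org.w3c.dom.Element;
import org.w3c.dom.NodeList;

// Hypothetical helper: extracts the bounds of every ROW in a GeoROWSET.
final class GeoRowReader {
    // Returns {x1, y1, x2, y2} per ROW; x2 and y2 fall back to x1 and y1.
    static long[][] readBounds(String rowsetXml) {
        try {
            Element root = DocumentBuilderFactory.newInstance().newDocumentBuilder()
                .parse(new ByteArrayInputStream(rowsetXml.getBytes(StandardCharsets.UTF_8)))
                .getDocumentElement();
            NodeList rows = root.getElementsByTagName("ROW");
            long[][] bounds = new long[rows.getLength()][];
            for (int i = 0; i < rows.getLength(); i++) {
                Element row = (Element) rows.item(i);
                long x1 = Long.parseLong(row.getAttribute("x1")); // mandatory
                long y1 = Long.parseLong(row.getAttribute("y1")); // mandatory
                long x2 = row.hasAttribute("x2") ? Long.parseLong(row.getAttribute("x2")) : x1;
                long y2 = row.hasAttribute("y2") ? Long.parseLong(row.getAttribute("y2")) : y1;
                bounds[i] = new long[] {x1, y1, x2, y2};
            }
            return bounds;
        } catch (Exception e) {
            throw new IllegalArgumentException("not a readable GeoROWSET", e);
        }
    }
}
```

A ROW without x2 and y2 therefore describes a single point, while a ROW with all four attributes describes a rectangle.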

GeoIndexType

Which fields should be present in the RowSet, and how these fields are to be handled by the Geo Index is specified through a GeoIndexType; an XML document conforming to the GeoIndexType schema. Which GeoIndexType to use for a specific GeoIndex instance, is specified by supplying a GeoIndexType ID during initialization of the GeoIndexManagement resource. A GeoIndexType contains a field list which contains all the fields which should be stored in order to be presented in the query results or used for refinement. The following is a possible IndexType for the type of ROWSET shown above:

<index-type>

<field-list>

<field name="StartTime">

<type>date</type>

<return>yes</return>

</field>

<field name="EndTime">

<type>date</type>

<return>yes</return>

</field>

</field-list>

</index-type>

Fields present in the ROWSET but not in the IndexType will be skipped. Fields present in the IndexType but not in a ROW in the ROWSET will cause an exception. The two elements under each "field" element are used to define how that field should be handled. The meaning and expected content of each of them is explained below:

- type specifies the data type of the field. Accepted values are:

- SHORT - A number fitting into a Java "short"

- INT - A number fitting into a Java "int"

- LONG - A number fitting into a Java "long"

- DATE - A date in the format yyyy-MM-dd'T'HH:mm:ss.s where only yyyy is mandatory

- FLOAT - A decimal number fitting into a Java "float"

- DOUBLE - A decimal number fitting into a Java "double"

- STRING - A string with a maximum length of 40 (or so...)

- return specifies whether the field should be returned in the results from a query. "yes" and "no" are the only accepted values.
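As a rough illustration of what "fitting into" a type means, the sketch below checks a raw field value against a declared type using the standard Java parsers. It assumes that each numeric type name maps to the Java primitive of the same name; the FieldTypeCheck class and its enum are hypothetical, the DATE type is left out, and the length limit on STRING is not enforced.

```java
// Hypothetical helper: checks whether a raw field value fits a declared type.
final class FieldTypeCheck {
    // Mirrors the accepted type values except DATE, which is omitted here.
    enum FieldType { SHORT, INT, LONG, FLOAT, DOUBLE, STRING }

    static boolean fits(FieldType type, String raw) {
        if (raw == null) {
            return false;
        }
        try {
            switch (type) {
                case SHORT:  Short.parseShort(raw);  return true;
                case INT:    Integer.parseInt(raw);  return true;
                case LONG:   Long.parseLong(raw);    return true;
                // Values beyond the representable range parse to infinity.
                case FLOAT:  return !Float.isInfinite(Float.parseFloat(raw));
                case DOUBLE: return !Double.isInfinite(Double.parseDouble(raw));
                default:     return true; // STRING: any text is accepted in this sketch
            }
        } catch (NumberFormatException e) {
            return false; // not a number, or out of range for the integer type
        }
    }
}
```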

Plugin Framework

As explained in the GeoIndexType section, which fields a GeoIndex instance should contain can be dynamically specified through a GeoIndexType provided during GeoIndexManagement initialization. However, since new GeoIndexTypes can be added at any time with any number of new fields, there is no way for the GeoIndex itself to know how to use the information in such fields in any meaningful manner when processing a query; a static generic algorithm for processing such information would drastically limit the usefulness of the information. In order to allow for dynamic introduction of field evaluation algorithms capable of handling the dynamic nature of IndexTypes, a plugin framework was introduced. The framework allows for the creation of GeoIndexType-specific evaluators handling ranking and refinement.

Ranking

The results of a query are sorted according to their rank, and their ranks are also returned to the caller. A RankEvaluator plugin is used to determine the rank of objects. It is provided with the query region, Object data, GeoIndexType and an optional set of plugin specific arguments, and is expected to use this information in order to return a meaningful rank of each object.

Refinement

The GeoIndex uses TwoStep processing in order to process a query. Firstly, a very efficient filtering step will find all possible hits (along with some false hits) using the minimal bounding rectangle (MBR) of the query region. Then, a more costly refinement step will use additional object and query information in order to eliminate all the false hits. While the filtering step is handled internally in the index, the refinement step is handled by a refiner plugin. It is provided with the query region, Object data, GeoIndexType and an optional set of plugin specific arguments, and is expected to use this information in order to determine whether an object is within a query or not.
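The two steps can be illustrated on plain points. This is a simplified sketch, not the GeoIndex code: java.awt.geom replaces the Geotools classes, the candidates are points rather than rectangles, and an exact geometric test stands in for whatever a real refiner plugin would check. The class TwoStepQuery is hypothetical.

```java
import java.awt.geom.Path2D;
import java.awt.geom.Point2D;
import java.awt.geom.Rectangle2D;
import java.util.ArrayList;
import java.util.List;

final class TwoStepQuery {
    // Step 1: cheap test against the minimal bounding rectangle of the region.
    static List<Point2D> filter(Path2D region, List<Point2D> candidates) {
        Rectangle2D mbr = region.getBounds2D();
        List<Point2D> hits = new ArrayList<>();
        for (Point2D p : candidates) {
            if (p.getX() >= mbr.getMinX() && p.getX() <= mbr.getMaxX()
                    && p.getY() >= mbr.getMinY() && p.getY() <= mbr.getMaxY()) {
                hits.add(p); // may still be a false hit
            }
        }
        return hits;
    }

    // Step 2: more costly exact test that removes the false hits of step 1.
    static List<Point2D> refine(Path2D region, List<Point2D> filtered) {
        List<Point2D> hits = new ArrayList<>();
        for (Point2D p : filtered) {
            if (region.contains(p)) {
                hits.add(p);
            }
        }
        return hits;
    }
}
```

For a triangular query region, a point near the far corner of the bounding rectangle passes the first step but is discarded by the second.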

Creating a Rank Evaluator

A RankEvaluator plugin has to extend the abstract class org.gcube.indexservice.geo.ranking.RankEvaluator which contains three abstract methods:

- abstract public void initialize(String args[]) -- a method called during the initiation of the RankEvaluator plugin, providing the plugin with any arguments provided in the code. All arguments are given as Strings, and it's up to the plugin to parse the string into the datatype needed by the plugin.

- abstract public boolean isIndexTypeCompatible(GeoIndexType indexType) -- should be able to determine whether this plugin can be used by an index conforming to the GeoIndexType argument

- abstract public double rank(Object entry) -- the method that calculates the rank of an entry.

In addition, the RankEvaluator abstract class implements two other methods worth noting

- final public void init(Polygon polygon, InclusionType containmentMethod, GeoIndexType indexType, String args[]) -- initializes the protected variables Polygon polygon, Envelope envelope, InclusionType containmentMethod and GeoIndexType indexType, before calling initialize() using the last argument. This means that all four protected variables are available in the initialize() method.

- protected Object getDataField(String field, Data data) -- a method used to retrieve the contents of a specific GeoIndexType field from a org.geotools.index.Data object conforming to the GeoIndexType used by the plugin.

Ok, simple enough... So let's create a RankEvaluator plugin. We'll assume that for a certain use case, entries which span over a long period of time are of less interest than objects which span over a short period of time. Since we're dealing with TimeSpans, we'll assume that the data stored in the index will have a "StartTime" field and an "EndTime" field, in accordance with the GeoIndexType created earlier.

The first thing we need to do, is to create a class which extends RankEvaluator:

package org.mojito.ranking;

import org.gcube.indexservice.geo.ranking.RankEvaluator;

public class SpanSizeRanker extends RankEvaluator{

}

Next, we'll implement the isIndexTypeCompatible method. To do this, we need a way of determining whether the fields we need are present in the GeoIndexType argument. Luckily, GeoIndexType contains a method called containsField which expects the String name and GeoIndexField.DataType (date, double, float, int, long, short or string) type of the field in question as arguments. In addition, we'll implement the initialize() method, which we'll leave empty as the plugin we are creating doesn't need to handle any arguments.

package org.mojito.ranking;

import org.gcube.indexservice.common.GeoIndexField;

import org.gcube.indexservice.common.GeoIndexType;

import org.gcube.indexservice.geo.ranking.RankEvaluator;

public class SpanSizeRanker extends RankEvaluator{

public void initialize(String[] args) {}

public boolean isIndexTypeCompatible(GeoIndexType indexType) {

return indexType.containsField("StartTime", GeoIndexField.DataType.DATE) &&

indexType.containsField("EndTime", GeoIndexField.DataType.DATE);

}

}

Last, but not least... We need to implement the rank() method. This is of course the method which calculates a rank for an entry, based on the query polygon, any extra arguments and the different fields of the entry. In our implementation, we'll simply calculate the timespan, and divide 1 by this number (plus 1, so that an empty span does not cause a division by zero) in order to get a quick and dirty rank. Keep in mind that this method is not called for all the entries resulting from the R-Tree filtering step, but only for a subset roughly fitting the ResultSet page size. This means that somewhat computationally heavy operations can be performed (if needed) without drastically lowering response time. Please also note how the getDataField() method is used in order to retrieve the evaluated fields from the entry data, and how the result is cast to Long (even though we are dealing with dates). The reason for this is that the GeoIndex internally represents a date as a long containing the number of seconds from the Epoch. If we wanted to evaluate the Minimal Bounding Rectangle (MBR) of the entries, we could access them through entry.getBounds().

package org.mojito.ranking;

import org.gcube.indexservice.common.GeoIndexField;

import org.gcube.indexservice.common.GeoIndexType;

import org.gcube.indexservice.geo.ranking.RankEvaluator;

import org.geotools.index.Data;

import org.geotools.index.rtree.Entry;

public class SpanSizeRanker extends RankEvaluator{

public void initialize(String[] args) {}

public boolean isIndexTypeCompatible(GeoIndexType indexType) {

return indexType.containsField("StartTime", GeoIndexField.DataType.DATE) &&

indexType.containsField("EndTime", GeoIndexField.DataType.DATE);

}

public double rank(Object obj){

Entry entry = (Entry)obj;

Data data = (Data)entry.getData();

Long entryStartTime = (Long) this.getDataField("StartTime", data);

Long entryEndTime = (Long) this.getDataField("EndTime", data);

long spanSize = entryEndTime - entryStartTime;

//1.0 forces floating-point division; with 1/(spanSize + 1) every positive span would rank 0

return 1.0/(spanSize + 1);

}

}

And there we are! Our first working RankEvaluator plugin.

Creating a Refiner

A Refiner plugin has to extend the abstract class org.gcube.indexservice.geo.refinement.Refiner which contains three abstract methods:

- abstract public void initialize(String args[]) -- a method called during the initiation of the Refiner plugin, providing the plugin with any arguments provided in the code. All arguments are given as Strings, and it's up to the plugin to parse the string into the datatype needed by the plugin.

- abstract public boolean isIndexTypeCompatible(GeoIndexType indexType) -- should be able to determine whether this plugin can be used by an index conforming to the GeoIndexType argument

- abstract public List<Entry> refine(List<Entry> entries) -- the method responsible for refining a list of results.

In addition, the Refiner abstract class implements two other methods worth noting

- final public void init(Polygon polygon, InclusionType containmentMethod, GeoIndexType indexType, String args[]) -- initializes the protected variables Polygon polygon, Envelope envelope, InclusionType containmentMethod and GeoIndexType indexType, before calling the abstract initialize() using the last argument. This means that all four protected variables are available in the initialize() method.

- protected Object getDataField(String field, Data data) -- a method used to retrieve the contents of a specific GeoIndexType field from a org.geotools.index.Data object conforming to the GeoIndexType used by the plugin.

Quite similar to the RankEvaluator isn't it?... So let's create a Refiner plugin to go with the previously created RankEvaluator. We'll still assume that the data stored in the index will have a "StartTime" field and an "EndTime" field, in accordance with the GeoIndexType created earlier. The "shorter is better" notion from the RankEvaluator example still holds true, and we want to create a plugin which refines a query by removing all objects which span over a time longer than a maxSpanSize value, avoiding those ridiculous everlasting objects... The maxSpanSize value will be provided to the plugin as an initialization argument.

The first thing we need to do, is to create a class which extends Refiner:

package org.mojito.refinement;

import org.gcube.indexservice.geo.refinement.Refiner;

public class SpanSizeRefiner extends Refiner{

}

The isIndexTypeCompatible method is implemented in a similar manner as for the SpanSizeRanker. However in this plugin we have to pay closer attention to the initialize() function, since we expect the maxSpanSize to be given as an argument. Since maxSpanSize is the only argument, the String array argument of initialize(String[] args) will contain a single element which will be a String representation of the maxSpanSize. In order for this value to be usable, we will parse it to a long, which will represent the maxSpanSize in milliseconds.

package org.mojito.refinement;

import org.gcube.indexservice.common.GeoIndexField;

import org.gcube.indexservice.common.GeoIndexType;

import org.gcube.indexservice.geo.refinement.Refiner;

public class SpanSizeRefiner extends Refiner {

private long maxSpanSize;

public void initialize(String[] args) {

this.maxSpanSize = Long.parseLong(args[0]);

}

public boolean isIndexTypeCompatible(GeoIndexType indexType) {

return indexType.containsField("StartTime", GeoIndexField.DataType.DATE) &&

indexType.containsField("EndTime", GeoIndexField.DataType.DATE);

}

}

And once again we've saved the best, or at least the most important, for last; the refine() implementation is where we decide how to refine the query results. It takes a list of Entry objects as an argument, and is expected to return a similar (though usually smaller) list of Entry objects as a result. As with the RankEvaluator, the synchronization with the ResultSet page size allows for quite computationally heavy operations, however we have little use for that in this example. We will simply calculate the time span of each entry in the argument List and compare it to the maxSpanSize value. If it is smaller or equal, we'll add it to the results List.

package org.mojito.refinement;

import java.util.ArrayList;

import java.util.List;

import org.gcube.indexservice.common.GeoIndexField;

import org.gcube.indexservice.common.GeoIndexType;

import org.gcube.indexservice.geo.refinement.Refiner;

import org.geotools.index.Data;

import org.geotools.index.rtree.Entry;

public class SpanSizeRefiner extends Refiner {

private long maxSpanSize;

public void initialize(String[] args) {

this.maxSpanSize = Long.parseLong(args[0]);

}

public boolean isIndexTypeCompatible(GeoIndexType indexType) {

return indexType.containsField("StartTime", GeoIndexField.DataType.DATE) &&

indexType.containsField("EndTime", GeoIndexField.DataType.DATE);

}

public List<Entry> refine(List<Entry> entries){

ArrayList<Entry> returnList = new ArrayList<Entry>();

Data data;

Long entryStartTime = null, entryEndTime = null;

for(Entry entry : entries){

data = (Data)entry.getData();

entryStartTime = (Long) this.getDataField("StartTime", data);

entryEndTime = (Long) this.getDataField("EndTime", data);

if (entryEndTime < entryStartTime){

long temp = entryEndTime;

entryEndTime = entryStartTime;

entryStartTime = temp;

}

if (entryEndTime - entryStartTime <= maxSpanSize){

returnList.add(entry);

}

}

return returnList;

}

}

And that's all there is to it! We have created our first Refinement plugin, capable of getting rid of those annoying long-lived objects.

Query language

A query is specified through a SearchPolygon object, containing the points of the vertices of the query region, an optional RankingRequest object and an optional list of RefinementRequest objects. A RankingRequest object contains the String ID of the RankEvaluator to use, along with an optional String array of arguments to be used by the specified RankEvaluator. Similarly, the RefinementRequest contains the String ID of the Refiner to use, along with an optional String array of arguments to be used by the specified Refiner.

Usage Example

Create a Management Resource

//Get the factory portType

String geoManagementFactoryURI = "http://some.domain.no:8080/wsrf/services/gcube/index/GeoIndexManagementFactoryService";

GeoIndexManagementFactoryServiceAddressingLocator geoManagementFactoryLocator = new GeoIndexManagementFactoryServiceAddressingLocator();

EndpointReferenceType geoManagementFactoryEPR = new EndpointReferenceType();

geoManagementFactoryEPR.setAddress(new Address(geoManagementFactoryURI));

GeoIndexManagementFactoryPortType geoManagementFactory = geoManagementFactoryLocator.getGeoIndexManagementFactoryPortTypePort(geoManagementFactoryEPR);

//Create management resource and get endpoint reference of WS-Resource.

org.gcube.indexservice.geoindexmanagement.stubs.CreateResource managementCreateArguments = new org.gcube.indexservice.geoindexmanagement.stubs.CreateResource();

managementCreateArguments.setIndexTypeID(indexType); //Optional (only needed if not provided in RS)

managementCreateArguments.setIndexID(indexID); //Optional (should usually not be set, and the service will create the ID)

managementCreateArguments.setCollectionID(new String[] {collectionID});

managementCreateArguments.setGeographicalSystem("WGS_1984");

managementCreateArguments.setUnitOfMeasurement("DD");

managementCreateArguments.setNumberOfDecimals(4);

org.gcube.indexservice.geoindexmanagement.stubs.CreateResourceResponse geoManagementCreateResponse = geoManagementFactory.createResource(managementCreateArguments);

EndpointReferenceType geoManagementInstanceEPR = geoManagementCreateResponse.getEndpointReference();

//Keep the index ID returned by the service; the updater and lookup resources need it

indexID = geoManagementCreateResponse.getIndexID();

Create an Updater Resource and start feeding

EndpointReferenceType geoUpdaterFactoryEPR = null;

EndpointReferenceType geoUpdaterInstanceEPR = null;

GeoIndexUpdaterFactoryPortType geoUpdaterFactory = null;

GeoIndexUpdaterPortType geoUpdaterInstance = null;

GeoIndexUpdaterServiceAddressingLocator geoUpdaterInstanceLocator = new GeoIndexUpdaterServiceAddressingLocator();

GeoIndexUpdaterFactoryServiceAddressingLocator updaterFactoryLocator = new GeoIndexUpdaterFactoryServiceAddressingLocator();

//Get the factory portType

String geoUpdaterFactoryURI = "http://some.domain.no:8080/wsrf/services/gcube/index/GeoIndexUpdaterFactoryService"; //could be on any node

geoUpdaterFactoryEPR = new EndpointReferenceType();

geoUpdaterFactoryEPR.setAddress(new Address(geoUpdaterFactoryURI));

geoUpdaterFactory = updaterFactoryLocator.getGeoIndexUpdaterFactoryPortTypePort(geoUpdaterFactoryEPR);

//Create updater resource and get endpoint reference of WS-Resource

org.gcube.indexservice.geoindexupdater.stubs.CreateResource geoUpdaterCreateArguments = new org.gcube.indexservice.geoindexupdater.stubs.CreateResource();

geoUpdaterCreateArguments.setMainIndexID(indexID);

//Now let's insert some data into the index... Firstly, get the updater EPR.

org.gcube.indexservice.geoindexupdater.stubs.CreateResourceResponse geoUpdaterCreateResponse = geoUpdaterFactory.createResource(geoUpdaterCreateArguments);

geoUpdaterInstanceEPR = geoUpdaterCreateResponse.getEndpointReference();

//Get updater instance PortType

geoUpdaterInstance = geoUpdaterInstanceLocator.getGeoIndexUpdaterPortTypePort(geoUpdaterInstanceEPR);

//read the EPR of the ResultSet containing the ROWSETs to feed into the index

BufferedReader in = new BufferedReader(new FileReader(eprFile));

String line;

String resultSetLocator = "";

while((line = in.readLine())!=null){

resultSetLocator += line;

}

in.close();

//Tell the updater to start gathering data from the ResultSet

geoUpdaterInstance.process(resultSetLocator);

Create a Lookup resource and perform a query

//Let's put it on another node for fun...

String geoLookupFactoryURI = "http://another.domain.no:8080/wsrf/services/gcube/index/GeoIndexLookupFactoryService";

EndpointReferenceType geoLookupFactoryEPR = null;

EndpointReferenceType geoLookupEPR = null;

GeoIndexLookupFactoryServiceAddressingLocator geoFactoryLocator = new GeoIndexLookupFactoryServiceAddressingLocator();

GeoIndexLookupServiceAddressingLocator geoLookupInstanceLocator = new GeoIndexLookupServiceAddressingLocator();

GeoIndexLookupFactoryPortType geoIndexLookupFactory = null;

GeoIndexLookupPortType geoIndexLookupInstance = null;

//Get factory portType

geoLookupFactoryEPR = new EndpointReferenceType();

geoLookupFactoryEPR.setAddress(new Address(geoLookupFactoryURI));

geoIndexLookupFactory = geoFactoryLocator.getGeoIndexLookupFactoryPortTypePort(geoLookupFactoryEPR);

//Create resource and get endpoint reference of WS-Resource

org.gcube.indexservice.geoindexlookup.stubs.CreateResource geoLookupCreateResourceArguments = new org.gcube.indexservice.geoindexlookup.stubs.CreateResource();

org.gcube.indexservice.geoindexlookup.stubs.CreateResourceResponse geoLookupCreateResponse = null;

geoLookupCreateResourceArguments.setMainIndexID(indexID);

geoLookupCreateResponse = geoIndexLookupFactory.createResource(geoLookupCreateResourceArguments);

geoLookupEPR = geoLookupCreateResponse.getEndpointReference();

//Get instance PortType

geoIndexLookupInstance = geoLookupInstanceLocator.getGeoIndexLookupPortTypePort(geoLookupEPR);

//Start creating the query

SearchPolygon search = new SearchPolygon();

Point[] vertices = new Point[] {new Point(-100, 11), new Point(-100, -100), new Point(100, -100), new Point(100, 11)};

//A request to rank by the ranker created in the previous example

RankingRequest ranker = new RankingRequest(new String[]{}, "SpanSizeRanker");

//A request to use the refiner created in the previous example.

//Please make note of the refiner argument in the String array.

RefinementRequest refinement = new RefinementRequest(new String[]{"100000"}, "SpanSizeRefiner");

//Perform the query

search.setVertices(vertices);

search.setRanker(ranker);

search.setRefinementList(new RefinementRequest[]{refinement});

search.setInclusion(InclusionType.contains);

String resultEpr = geoIndexLookupInstance.search(search);

//Print the results to screen. (refer to the ResultSet Framework page for a more detailed explanation)

RSXMLReader reader=null;

ResultElementBase[] results;

try{

//create a reader for the ResultSet we created

reader = RSXMLReader.getRSXMLReader(new RSLocator(resultEpr));

//Print each part of the RS to std.out

System.out.println("<Results>");

do{

System.out.println(" <Part>");

if (reader.getNumberOfResults() > 0){

results = reader.getResults(ResultElementGeneric.class);

for(int i = 0; i < results.length; i++ ){

System.out.println(" "+results[i].toXML());

}

}

System.out.println(" </Part>");

if(!reader.getNextPart()){

break;

}

}

while(true);

System.out.println("</Results>");

}

catch(Exception e){

e.printStackTrace();

}

Forward Index

The Forward Index provides storage and retrieval capabilities for documents that consist of key-value pairs. It is able to answer one-dimensional (referring to one key only) or multi-dimensional (referring to many keys) range queries, retrieving documents whose key values fall within the corresponding range. The Forward Index Service follows the same design pattern as the Full Text Index Service and the Geo Index Service. The forward index supports the following schema for each key-value pair:

key; integer, value; string

key; float, value; string

key; string, value; string

key; date, value; string

The schema for an index is given as a parameter when the index is created. The schema must be known in order to instantiate a class that is capable of comparing two keys (one that implements java.util.Comparator). The objects stored in the database can be anything. There is no limit to the length of the keys or values (except for the typed keys).
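Why the key type must be known becomes clear when keys are compared: "9" sorts after "10" as a string but before it as an integer. The following sketch picks a java.util.Comparator per key type. The class KeyComparators and its enum are hypothetical, keys are assumed to arrive as strings, and dates are assumed to be in ISO yyyy-MM-dd form, which the schema description does not specify.

```java
import java.time.LocalDate;
import java.util.Comparator;

final class KeyComparators {
    // Hypothetical names for the four key types of the schema.
    enum KeyType { INTEGER, FLOAT, STRING, DATE }

    // Returns a comparator that parses both keys according to the declared type.
    static Comparator<String> forType(KeyType type) {
        switch (type) {
            case INTEGER:
                return (a, b) -> Long.compare(Long.parseLong(a), Long.parseLong(b));
            case FLOAT:
                return (a, b) -> Double.compare(Double.parseDouble(a), Double.parseDouble(b));
            case DATE:
                return (a, b) -> LocalDate.parse(a).compareTo(LocalDate.parse(b));
            default:
                return (a, b) -> a.compareTo(b); // STRING: lexicographic order
        }
    }
}
```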

Implementation Overview

Services

The forward index is implemented through three services. They are all implemented according to the factory-instance pattern:

- An instance of ForwardIndexManagement Service represents an index and manages this index. The life-cycle of the index is the same as the life-cycle of the management instance; the index is created when the ForwardIndexManagement instance is created, and the index is terminated (deleted) when the ForwardIndexManagement instance resource is removed. The ForwardIndexManagement Service manages the life-cycle and properties of the forward index. It co-operates with instances of the ForwardIndexUpdater Service when feeding content into the index, and with instances of the ForwardIndexLookup Service for getting content from the index. The Content Management service is used for safe storage of an index. A logical file is established in Content Management when the index is created. The index is retrieved from Content Management and established on the local node when an existing forward index is dynamically deployed on a node. The logical file in Content Management is deleted when the ForwardIndexManagement instance is deleted.

- The ForwardIndexUpdater Service is responsible for feeding content into the forward index. The content of the forward index consists of key-value pairs. A ForwardIndexUpdater Service resource updates a single Index. One index may be updated by several ForwardIndexUpdater Service instances simultaneously. When feeding the index, a ForwardIndexUpdater Service resource is created with the EPR of the ForwardIndexManagement resource connected to the Index to update. The ForwardIndexUpdater instance is connected to a ResultSet that contains the content to be fed to the Index.

- The ForwardIndexLookup Service is responsible for receiving queries for the index, and returning responses that match the queries. When it is created, the ForwardIndexLookup gets a reference to the ForwardIndexManagement instance that is managing the index. It can only query this index. Several ForwardIndexLookup instances may query the same index. Each ForwardIndexLookup instance gets the index from Content Management, and establishes a local copy of the index on the file system, which is the copy that is queried. The local copy is kept up to date by subscribing to index change notifications that are emitted by the ForwardIndexManagement instance.

It is important to note that none of the three services have to reside on the same node; they are only connected through web service calls, the gCube notifications' framework and the gCube Content Management System.

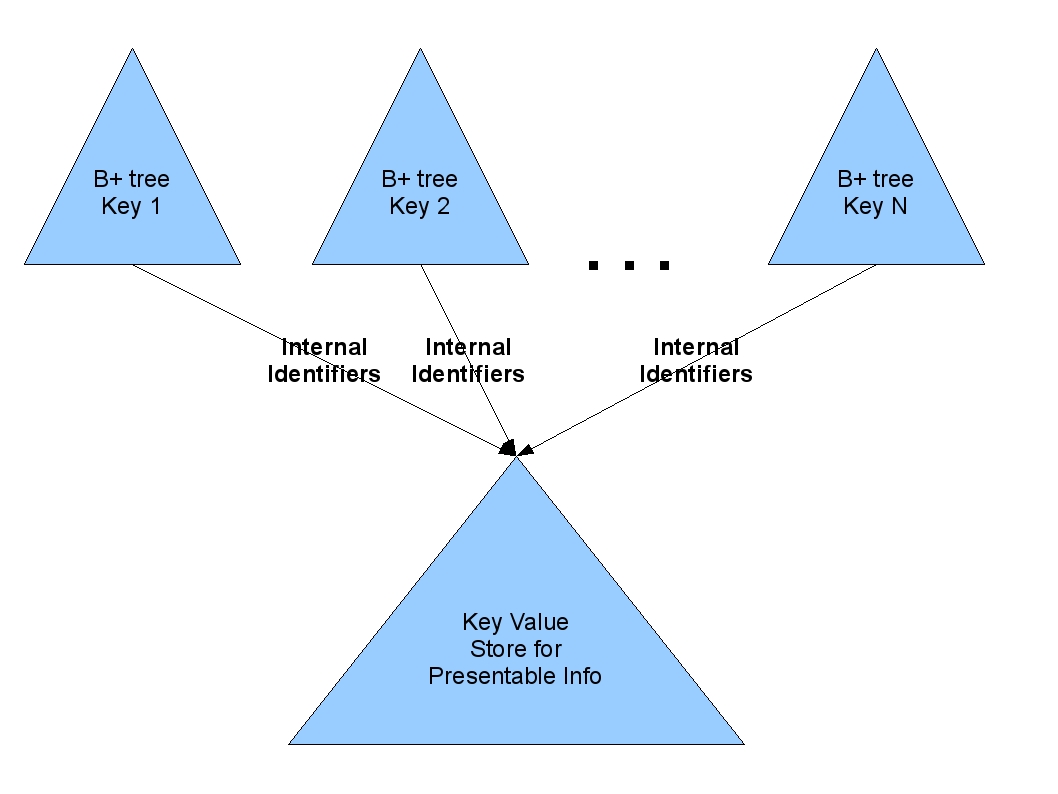

Underlying Technology

The Forward Index Lookup is based on BerkeleyDB. A BerkeleyDB B+tree is used as an internal index for each dimension-key. An additional BerkeleyDB key-value store is used for storing the presentable information of each document. The range query that a Forward Index Lookup resource will execute internally is a conjunction of single range criteria, that each refers to a single key. For each criterion the B+tree of the corresponding key is used. The outcome of the initial range query, is the intersection of the documents that satisfy all the criteria. The following figure shows the internal design of Forward Index Lookup:

CQL capabilities implementation

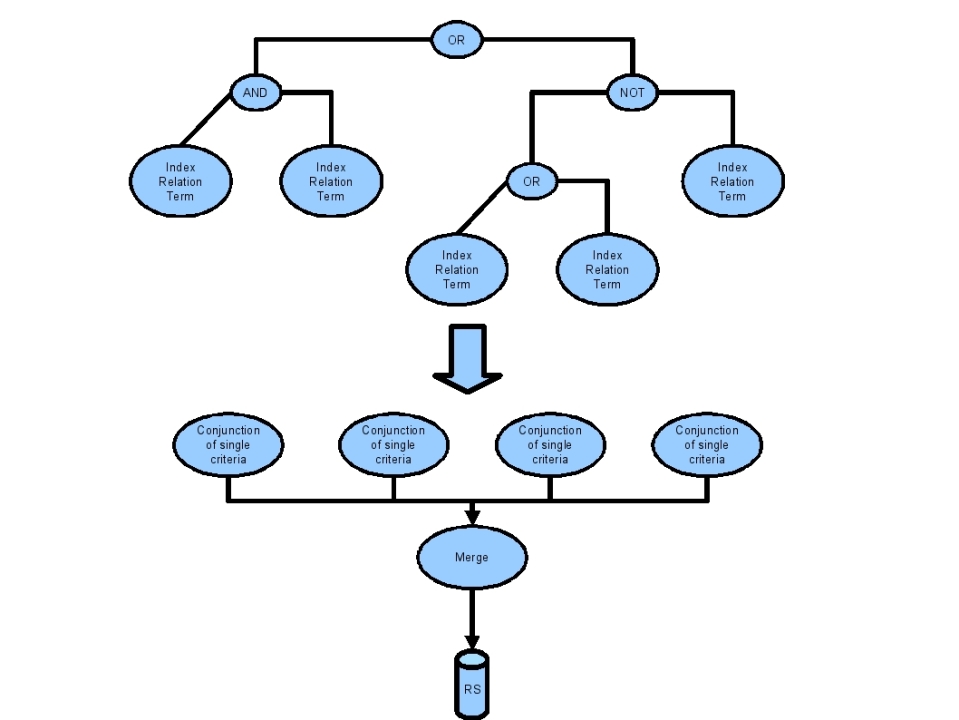

As stated in the previous section, the design of the Forward Index Lookup enables the efficient execution of range queries that are conjunctions of single criteria. An initial CQL query is transformed into an equivalent one that is a union of range queries. Each range query will be a conjunction of single criteria. A single criterion will refer to a single indexed key of the Forward Index. This transformation is described by the following figure:

In a similar manner to the Geo-Spatial Index Lookup, the Forward Index Lookup will exploit any possibilities to eliminate parts of the query that will not produce any results, by applying "cut-off" rules. The merge operation of the range queries' results is performed internally by the Forward Index Lookup.
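The evaluation strategy described above (one sorted index per key, a conjunction evaluated as an intersection, the whole query as a union of conjunctions) can be sketched with JDK collections. This is only a model: java.util.TreeMap stands in for the per-key BerkeleyDB B+tree, all keys are assumed to be integers, and the class RangeQuerySketch with its members is hypothetical.

```java
import java.util.HashMap;
import java.util.HashSet;
import java.util.List;
import java.util.Map;
import java.util.Set;
import java.util.TreeMap;

final class RangeQuerySketch {
    // One sorted map per indexed key: key value -> IDs of documents with that value.
    private final Map<String, TreeMap<Long, Set<String>>> trees = new HashMap<>();

    void put(String key, long value, String docId) {
        trees.computeIfAbsent(key, k -> new TreeMap<>())
             .computeIfAbsent(value, v -> new HashSet<>())
             .add(docId);
    }

    // A single criterion: lo <= value of key <= hi (lo must not exceed hi).
    static final class Criterion {
        final String key;
        final long lo;
        final long hi;
        Criterion(String key, long lo, long hi) { this.key = key; this.lo = lo; this.hi = hi; }
    }

    // Conjunction of criteria: intersect the matches found in each key's tree.
    Set<String> rangeQuery(List<Criterion> conjunction) {
        Set<String> result = null;
        for (Criterion c : conjunction) {
            Set<String> matches = new HashSet<>();
            TreeMap<Long, Set<String>> tree = trees.get(c.key);
            if (tree != null) {
                tree.subMap(c.lo, true, c.hi, true).values().forEach(matches::addAll);
            }
            if (result == null) {
                result = matches;
            } else {
                result.retainAll(matches);
            }
            if (result.isEmpty()) {
                break; // no document can satisfy the remaining criteria
            }
        }
        // An empty conjunction yields no documents in this sketch.
        return result == null ? new HashSet<>() : result;
    }

    // Union of conjunctive range queries, the form a CQL query is rewritten into.
    Set<String> union(List<List<Criterion>> rangeQueries) {
        Set<String> all = new HashSet<>();
        for (List<Criterion> query : rangeQueries) {
            all.addAll(rangeQuery(query));
        }
        return all;
    }
}
```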

RowSet

The content to be fed into an Index must be served as a ResultSet containing XML documents conforming to the ROWSET schema. This is a simple schema, containing key and value pairs. The following is an example of a ROWSET that can be fed into the Forward Index Updater:

The row set "schema"

<rowset>

<insert>

<tuple><key></key><value></value></tuple>

<tuple><key></key><value></value></tuple>

</insert>

<delete>

<key></key>

<key></key>

</delete>

</rowset>

The rowset may contain an insert section, a delete section, or both. The key and value pairs (tuples) in the insert section may be repeated one or more times. The key in the delete section may be repeated one or more times.
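As an illustration, a ROWSET document of this shape could be assembled as in the Python sketch below. The `build_rowset` helper is hypothetical and only mirrors the element names shown above; it omits a section when there is nothing to insert or delete.

```python
import xml.etree.ElementTree as ET

def build_rowset(inserts=(), deletes=()):
    """Serialise tuples to insert and keys to delete as a ROWSET document."""
    rowset = ET.Element("rowset")
    if inserts:
        insert = ET.SubElement(rowset, "insert")
        for key, value in inserts:
            tup = ET.SubElement(insert, "tuple")
            ET.SubElement(tup, "key").text = str(key)
            ET.SubElement(tup, "value").text = str(value)
    if deletes:
        delete = ET.SubElement(rowset, "delete")
        for key in deletes:
            ET.SubElement(delete, "key").text = str(key)
    # encoding="unicode" returns a str without an XML declaration
    return ET.tostring(rowset, encoding="unicode")

doc = build_rowset(inserts=[("2001", "doc-a"), ("2007", "doc-b")], deletes=["1999"])
print(doc)
```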

Test Client ForwardIndexClient

The org.gcube.indexservice.clients.ForwardIndexClient test client is used to test the ForwardIndex.

The ForwardIndexClient is in the SVN module test/client. It uses a property file, ForwardIndex.properties.

The property file contains the following properties:

    ForwardIndexManagementFactoryResource=/wsrf/services/gcube/index/ForwardIndexManagementFactoryService
    Host=dili02.osl.fast.no
    ForwardIndexUpdaterFactoryResource=/wsrf/services/gcube/index/ForwardIndexUpdaterFactoryService
    ForwardIndexLookupFactoryResource=/wsrf/services/gcube/index/ForwardIndexLookupFactoryService
    geoManagementFactoryResource=/wsrf/services/gcube/index/GeoIndexManagementFactoryService
    Port=8080
    Create-ForwardIndexManagementFactory=true
    Create-ForwardIndexLookupFactory=true
    Create-ForwardIndexUpdaterFactory=true

The Host and Port properties must be edited to point to the VO of interest.
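To show how these properties fit together, the following Python sketch parses a few of them and joins Host, Port and a factory resource path into a service address. How the test client actually combines them is not stated here, so the `http` scheme and both helper functions are assumptions.

```python
def parse_properties(text):
    """Parse simple key=value lines; blank lines and '#' comments are skipped."""
    props = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        key, _, value = line.partition("=")
        props[key.strip()] = value.strip()
    return props

def factory_url(props, resource_key, scheme="http"):
    """Join Host, Port and a factory resource path (the scheme is an assumption)."""
    return f"{scheme}://{props['Host']}:{props['Port']}{props[resource_key]}"

sample = """
Host=dili02.osl.fast.no
Port=8080
ForwardIndexLookupFactoryResource=/wsrf/services/gcube/index/ForwardIndexLookupFactoryService
Create-ForwardIndexLookupFactory=true
"""
props = parse_properties(sample)
print(factory_url(props, "ForwardIndexLookupFactoryResource"))
```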

The test client obtains the EPRs of the factory services and uses the factory services to create the stateful web services:

- ForwardIndexManagementService: responsible for holding the list of delta files that together make up the index. The service also relays notifications from the ForwardIndexUpdaterService to the ForwardIndexLookupService when new delta files must be merged into the index.

- ForwardIndexUpdaterService: responsible for creating new delta files with tuples that are to be deleted from or inserted into the index.

- ForwardIndexLookupService: responsible for evaluating queries and returning the results.
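The division of responsibilities above can be sketched with three in-memory Python classes. These are hypothetical stand-ins, not the web services themselves: a delta is modelled as a dictionary of inserts and deletes, a notification as a direct callback, and the index as one value per key. Applying inserts before deletes within a delta is also an assumption.

```python
class Management:
    """Holds the ordered list of deltas and relays each new one to listeners."""
    def __init__(self):
        self.deltas = []
        self.listeners = []

    def register_delta(self, delta):
        self.deltas.append(delta)
        for notify in self.listeners:
            notify(delta)

class Updater:
    """Packages tuples to insert and keys to delete into a new delta."""
    def __init__(self, management):
        self.management = management

    def publish(self, inserts=(), deletes=()):
        delta = {"insert": list(inserts), "delete": list(deletes)}
        self.management.register_delta(delta)

class Lookup:
    """Merges existing and newly announced deltas into a queryable index."""
    def __init__(self, management):
        self.index = {}
        for delta in management.deltas:
            self.merge(delta)
        management.listeners.append(self.merge)

    def merge(self, delta):
        for key, value in delta.get("insert", []):
            self.index[key] = value
        for key in delta.get("delete", []):
            self.index.pop(key, None)

management = Management()
updater = Updater(management)
lookup = Lookup(management)
updater.publish(inserts=[("a", 1), ("b", 2)])
updater.publish(inserts=[("c", 3)], deletes=["a"])
print(lookup.index)
```

A lookup created after both deltas were published would replay them from the management list and arrive at the same index, which is why the list of deltas "is" the index in this model.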

The test client creates one WS-Resource of each type, inserts some data through the updater resource, and queries the data by using the lookup WS-Resource.

Inserting data and deleting tuples

Tuples can be inserted and deleted by:

- insertingPair(key,value) / deletingPair(key): simple methods to insert / delete tuples.

- process(rowSet): method to insert / delete a series of tuples.

- procesResultSet: method to insert / delete a series of tuples in a rowset inserted into a resultSet.

Lookup

Tuples can be queried by:

- getEQ_int(key), getEQ_float(key), getEQ_string(key), getEQ_date(key)

- getLT_int(key), getLT_float(key), getLT_string(key), getLT_date(key)

- getLE_int(key), getLE_float(key), getLE_string(key), getLE_date(key)

- getGT_int(key), getGT_float(key), getGT_string(key), getGT_date(key)

- getGE_int(key), getGE_float(key), getGE_string(key), getGE_date(key)

- getGTandLT_int(keyGT,keyLT), getGTandLT_float(keyGT,keyLT), getGTandLT_string(keyGT,keyLT), getGTandLT_date(keyGT,keyLT)

- getGEandLT_int(keyGE,keyLT), getGEandLT_float(keyGE,keyLT), getGEandLT_string(keyGE,keyLT), getGEandLT_date(keyGE,keyLT)

- getGTandLE_int(keyGT,keyLE), getGTandLE_float(keyGT,keyLE), getGTandLE_string(keyGT,keyLE), getGTandLE_date(keyGT,keyLE)

- getGEandLE_int(keyGE,keyLE), getGEandLE_float(keyGE,keyLE), getGEandLE_string(keyGE,keyLE), getGEandLE_date(keyGE,keyLE)

- getAll
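The operator names suggest the usual comparison semantics (EQ, LT, LE, GT, GE and their two-sided combinations). Under that assumption, the Python sketch below shows how each could map onto one range scan over sorted keys with inclusive or exclusive bounds. The `range_lookup` function is hypothetical and is not part of the ForwardIndexClient.

```python
from bisect import bisect_left, bisect_right

def range_lookup(sorted_keys, low=None, high=None,
                 low_inclusive=True, high_inclusive=True):
    """Return the keys within the bounds; None means unbounded on that side."""
    start = 0
    if low is not None:
        start = (bisect_left(sorted_keys, low) if low_inclusive
                 else bisect_right(sorted_keys, low))
    end = len(sorted_keys)
    if high is not None:
        end = (bisect_right(sorted_keys, high) if high_inclusive
               else bisect_left(sorted_keys, high))
    return sorted_keys[start:end]

keys = [1, 3, 5, 7, 9]
eq = range_lookup(keys, 5, 5)                                # EQ 5
lt = range_lookup(keys, high=5, high_inclusive=False)        # LT 5
ge = range_lookup(keys, low=5)                               # GE 5
ge_and_lt = range_lookup(keys, 3, 7, high_inclusive=False)   # GE 3 and LT 7
everything = range_lookup(keys)                              # no bounds
print(eq, lt, ge, ge_and_lt, everything)
```

The type suffix (int, float, string, date) presumably selects how keys are compared, which matters for ordering: as strings, "10" sorts before "9", whereas as integers 9 sorts before 10.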

The result is provided to the client by using the Result Set service.

Storage Handling layer

The Storage Handling layer is responsible for the actual storage of the index data in the infrastructure. All the index components rely on the functionality provided by this layer in order to store and load their data. The implementation of the Storage Handling layer can be changed independently of how the index components produce their data. The current implementation splits the index data into chunks called "delta files" and stores them in the Content Management Layer, through the use of the Content Management and Collection Management services. The Storage Handling service must be deployed together with any index. It cannot be invoked directly, since it is meant to be used only internally by the upper layers of the index components hierarchy.

Index Common library

The Index Common library is another component which is meant to be used internally by the other index components. It provides some common functionality required by the various index types, such as interfaces, XML parsing utilities and definitions of some common attributes of all indices. The jar containing the library should be deployed on every node where at least one index component is deployed.