Difference between revisions of "Content Management"

Ali.boloori (Talk | contribs) (→Functions) |

Ali.boloori (Talk | contribs) (→Resources and Properties) |

||

| Line 42: | Line 42: | ||

This service provides the high-level operations for collection management which are mapped onto generic Storage Management operations. | This service provides the high-level operations for collection management which are mapped onto generic Storage Management operations. | ||

| − | ==Resources and Properties== | + | ===Resources and Properties=== |

The service is implemented as a stateless WRSF service. However, it publishes summary information about the collections it manages on the Information System. Each collection is exposed as a distinct gCube-Resource. The properties stored in the resource are the ID and name of the collection, its storage properties (e.g is the collection is virtual and if it is a user-collection), and its references. | The service is implemented as a stateless WRSF service. However, it publishes summary information about the collections it manages on the Information System. Each collection is exposed as a distinct gCube-Resource. The properties stored in the resource are the ID and name of the collection, its storage properties (e.g is the collection is virtual and if it is a user-collection), and its references. | ||

Revision as of 17:17, 24 November 2008

While other infrastructures for the manipulation of content in Grid-based environments, like gLite, provide basic file-system like functionality for content manipulation, the Information Organization services are aimed to provide more high-level functionality, built on top of gLite or other storage facilities. Content is stored and organized following a graph-based data model, the Information Object Model, that allows finer control of content w.r.t. a file based view, by incorporating the possibility to annotate content with arbitrary properties and to relate different content unities via arbitrary relationships. Building on this basic data model, other services in the Information Organization family provide to other gCube services more sophisticated data models to manage complex documents, document collections, metadata and annotations.

Contents

Reference Architecture

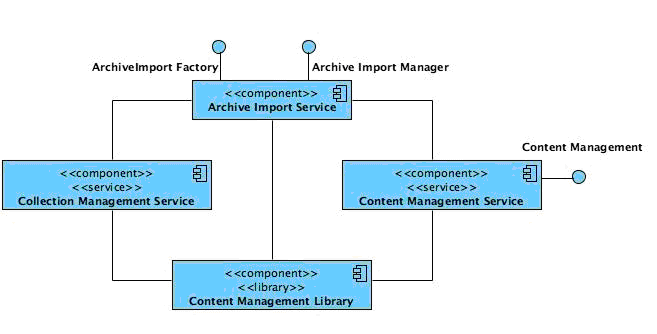

The Content Management Layer exposes the functionality to manipulate documents and collections according to the corresponding models described above. It also offers facilities to populate collections starting from data which is stored externally to the gCube infrastructure. This functionality is provided by three main services (i) the Content Management Service; (ii) the Collection Management Service; and (iii) the Archive Import Service. Internally, these services use the Content Management Library. The architecture of this layer is shown in Figure 1.

Content Management Service

This service expose the document model described in the previous section (cf. Section 6.1.2), providing operations for documents storage, access and update. Internally, these high-level operations are mapped onto generic Storage Management operations. For this reason, many of its operations accept non functional parameters to be passed to the underlying Storage Management Service in the form of Storage Hints (see previous section). It is important to observe that, though the document model is per se independent of other high level models, this service is not completely independent, for the logics of its operation, from the collection Management Service described in the

next section. More specifically, its interface enforces that any document must belong to at least one materialized collection. The reason for this is that permissions and visibility of resources are based on collections. Hence, a document cannot be stored outside of collections, because then its permissions would be undefined. Any document may on the other hand belong to more than one collection.

Resources and Properties

The Content Management Service is implemented as a stateless WRSF-compliant web service. It does not publish any resource, and only depends on the underlying Storage Management Service for its operation.

Functions

The main functions supported by the Content Management Service are:

- storeDocument() – which takes as input parameter a message containing the document ID, an URI from where the raw content can be gathered or the raw content itself, a collection ID and an array of storage hints and stores the document within the given collection;

- getDocument() – which takes as input parameter a message containing the document ID, the URI from where the raw content can be downloaded, a set of storage hints and a specification of the unroll level, i.e. an upper limit on the maximum number of relations that have to be traversed when retrieving a complex document composed by multiple nested subparts (this is an optimization feature), and returns the document description;

- updateDocumentContent() – which takes as input parameter a message containing the document ID, an URI from where the raw content can be gathered or the raw content itself, and an array of storage hints and updates the content of the given document;

- deleteDocument() – which takes as input parameter a message containing the document ID and deletes such a document (including raw content and references, may cause cascaded deletes based on propagation rules) from the set of documents managed by the service;

- renameDocument() – which takes as input parameter a message containing the document ID and the document name and attaches such a name to the given document;

- setDocumentProperty() – which takes as input parameter a message containing a document ID, a property name, a property type and a property value and attaches such a property to the given document;

- getDocumentProperty() – which takes as input parameter a message containing a document ID and a property name and returns the specified document property in terms of the ID, name, type and value;

- unsetDocumentProperty() – which takes as input parameter a message containing a document ID and a property name and removes the specified property from the document;

- addPart() – which takes as input parameter a message containing the parent document ID, the part document ID, the position the part should occupy in the parent document (optional) and a Boolean value indicating whether the associated part has to be removed whenever the main document is removed or not and attach the part to the specified document (i.e. creates the part-of relation);

- removePart() – which takes as input parameter a message containing the parent document ID and the part document ID and removes the specified part from the selected document (i.e. removes the part-of relation);

- removeAlternativeRepresentation() – which takes as input parameter a message containing the document ID and the representation ID, and removes the alternative representation from the selected document (i.e. the is-representation-of relation);

- getParts() – which takes as input parameter a message containing the parent document ID and returns the list of Document IDs that are (direct) parts of the specified document;

- getDirectParents() – which takes as input parameter a message containing an Information Object ID and returns a list of Information Object IDs of all the objects that are (direct) parents of the specified one;

- getDirectParents() – which takes as input parameter a message containing the ID of an alternative representation and returns the list of Information Object IDs (OIDs) of the main representations of the Document;

- addAlternativeRepresentation() – which takes as input parameter a message containing the document ID, the ID of an Information Object that represent the alternative representation, the rank this alternative representation has (optional) and a Boolean value (optional) indicating whether the alternative representation has to be deleted whenever the main object is deleted or not, and assigns such a representation to the specified Document;

- getAlternativeRepresentations() – which takes as input parameter a message containing the document ID and returns the list of all existing representations of the specified document;

- hasRawContent() – which takes as input parameter as input parameter a message containing the document ID and returns a Boolean value indicating whether the document has a raw content or not;

- getContentLength() – which takes as input parameter as input parameter a message containing the document ID and returns the size of the raw content of the document;

- getMimeType() – which takes as input parameter a message containing the document ID and returns the MIME Type associated to the raw content of the document;

- setMimeType() – which takes as input parameter a message containing the Document ID and a string representing a MIME Type and set such a type as those of the raw content of the document;

- registerToDocumentEvent() – which takes as input parameter a message containing the DocumentID, the event type and the EPR of a service and registers the service to that kind of event on the specified document.

Collection Management Service

This service provides the high-level operations for collection management which are mapped onto generic Storage Management operations.

Resources and Properties

The service is implemented as a stateless WRSF service. However, it publishes summary information about the collections it manages on the Information System. Each collection is exposed as a distinct gCube-Resource. The properties stored in the resource are the ID and name of the collection, its storage properties (e.g is the collection is virtual and if it is a user-collection), and its references.

Functions

The main functions supported by the Collection Management Service are:

- createCollection() – which takes as input parameter a message containing a collection name, a Boolean flag indicating whether the collection is virtual or not, and a membership predicate (optional) returns the Information Object ID of the object created to represent the collection;

- getCollection() – which takes as input parameter a message containing a collection ID and returns the collection description including the object ID, the object type, the object name, the object flavour, the virtual flag, the user collection flag, the membership predicate, the created and last modified value, and the set of other collection properties of the given collection;

- getMembers() – which takes as input parameter a message containing a collection ID and returns a list of Information Object Description (including the object ID, the object type, the object name, the object flavour, the virtual flag, the user collection flag, the membership predicate, the created and last modified value, and the set of other collection properties) of all the objects members of the given collection;

- getMemberOIDs() – which takes as input parameter a message containing a collection ID and returns a list of Information Object IDs (OIDs) of all the objects members of the given collection;

- addMember() – which takes as input parameter a message containing the collection ID and a Document ID and adds the object to the given collection. This method fails if invoked on a virtual collection;

- removeMember() – which takes as input parameter a message containing a collection ID and a Document ID and removes the specified object from the given collection. This method fails if invoked on a virtual collection;

- updateCollection() – which takes as input parameter a message containing the collection ID and a membership predicate and changes the collection accordingly. For a virtual collection, this simply updates the stored membership predicate. For a materialized collection, it adds all new members that satisfy the predicate and removes all members that no longer satisfy the predicate. The latter may cause deletion of documents that are no longer member of any collection;

- deleteCollection() – which takes as input parameter a message containing the collection ID and removes such a collection from the set of collections existing in the gCube system;

- renameCollection() – which takes as input parameter a message containing a collection ID and a collection name and assigns the specified name to the given collection;

- setCollectionProperty() – which takes as input parameter a message containing a collection ID, a property name, a property type and a property value and attach such a property to the given collection;

- getCollectionProperty() – which takes as input parameter a message containing a collection ID and a property name and returns the specified collection property, i.e. the object ID, the property name, type and value;

- unsetCollectionProperty() – which takes as input parameter a message containing a collection ID and a property name and removes the specified parameter from the ones attached to the given collection;

- getMaterializedCollectionMembership() – which takes as input parameter a message containing a document ID and returns a list of collection IDs and names of the materialized collection such an object is member of;

- isUserCollection() – which takes as input parameter a message containing the collection ID and returns a Boolean value indicating whether the given collection is a user collection, i.e. can be directly perceived by the VRE users, or not;

- setUserCollection() – which takes as input parameter a message containing a collection ID and a Boolean value indicating whether the specified collection has to be considered a user collection, i.e. a collection that is perceived by the users of some Virtual Research Environment, or not and sets such a property on the given collection;

- listAllCollectionIDsAndNames() – which returns a list of collection ID and collection name representing all the collections currently managed in gCube which are defined in the scope of the caller;

- listAllCollections() – which returns a list of collection descriptions representing all the collection currently managed in gCube which are defined in the scope of the caller;

- listAllCollectionsHavingNames() – which takes as input parameter a message containing a collection name and returns a list of collection descriptions of the collections having such a name. No support for wildcards of similar kind of similarity evaluation. This method and the following one are provided for those services who need to access collections by name instead that by their ID;

- listAllCollectionIDsHavingName() – which takes as input parameter a message containing a collection name and returns a list of collection IDs of the collection having such a name. No support for wildcards of similar kind of similarity evaluation;

- registerToCollectionEvent() – which takes as input parameter a message containing the CollectionID, the event type and the EPR of a service and registers the service to that kind of event on the specified collection;

Archive Import Service

The Archive Import Service (AIS) is in charge of defining collections and importing their content into the gCube infrastructure, by interacting with the Collection Management service, the Content Management service and other services at the content management layer, such as the Metadata Manager Service. While the functionality it offers is, from a logical point of view, well defined and rather confined, the AIS must be able to deal with a large number of different ways to define collections and offer extensibility features so to accommodate types of collections and ways to describe them not known/required at the time of its initial design. These needs impact on the architecture of the service and on its interface. From an architectural point of view, the AIS offers the possibility to add new functionality by using pluggable software modules. At the interface level, high flexibility is ensured by relying on a scripting language used to define import tasks, rather than on a interface with fixed parameters only. As importing collections might be an expensive task, resource-wise, the AIS offers features that can be used to optimize import tasks. In particular, it supports incremental import of collections. The description that follows introduces first the rationale behind the functionality of the AIS, its overall architecture, its scripting language, its extensibility features, and the concepts related to incremental import. Then, it presents the interface of the service. Overall, the execution of an import task can be thought as divided in two main phases (i) the representation phase and (ii) the import phase. During the representation phase, a representation of the resources to be imported and their relationships is modelled using a graph-based model. The model contains three main constructs: collection, object and relationship, thus resembling the collection and Information Object models on which the content management services are based. Each construct has a type, and can be annotated with a number of properties. The type of the resource is a marker that is used by importers to select the resources they are dedicated. The properties are just name-value pairs. These values can be of any java type. Only some types of resources and their properties are fixed in advance. The existing types are used to support the import of content and metadata. The functionality of the AIS can be extended define new types, annotated with different properties. To specify a representation of the resources to import it is possible to use a procedural scripting language called AISL (Archive Import Service Language). More details on the language are given below.

The representation graph built during the representation phase is then exploited during the import phase. An import-engine dynamically loads and invokes a number of importers, software modules that encapsulate the logics needed to import specific kind of resources, interfacing with the appropriate services. Each importer inspects the representation graph, identifies the resources for which it is responsible, and performs the actions needed to import them. Besides performing the import, the importer may also produce some further annotation of the resources in the graph. These annotations are used in later execution of tasks that involve the same resources, and may also be exploited by other importers. For example, in the case of importing metadata related to some content, the content objects to which the metadata objects refer should have already been stored in the content-management service, and should have been annotated with the identifiers used by that service. Similar considerations hold for content collections. Importers enabled to handle content-objects and metadata objects are already provided by the AIS. Additional importers, dedicated to store specific kind of objects, can be added. At the end of the import phase, the resource graph created during the representation phase and annotated during the import phase is stored persistently. Defining an import task for the AIS amounts to building a description of the resources to import. This can be done by submitting to the AIS a script written in AISL. AISL is an interpreted language with XML-based syntax, designed to support the most common tasks needed during the definition of an import task. As most programming languages, it supports various flow-control structures, allows to manipulate variables and evaluate various kinds of expressions. However, the goal of an AISL script is not that of performing arbitrary computations, but to create a graph of resources. Representation objects (collections, objects and relationships) are first class entities in the language, that provides constructs to build them and assign them properties. Representation objects may resemble objects in oo-languages, in that their properties can be accessed as fields and assigned values, and references to representation-objects themselves can be stored in variables. A fundamental difference is that, once created, representation objects are never destroyed, even when the control-flow exits the scope in which they were created. AISL is not typed, i.e. variables can be assigned values of any kind, and is the responsibility of the programmer to ensure proper type concordance. Basically, AISL does not even define its own type system, but exploits the type system of the underlying Java language. The language offers constructs for building objects of some basic types, like strings, sets, files, and integers. However, expressions in AISL can return any Java object. Specific functions can be thus used to build objects of given types in a controlled way. For instance, the built-in "dom()" function accepts as input a file object and produces a DOM document object. Similarly, the "xpath" function takes a DOM object and an expression, and returns the result of the evaluation of an xpath over the DOM node object as a set of DOM Node objects. In general, thus, types cannot be directly manipulated from the language, except for a few cases, but the variables of the language can be assigned with any java type, and objects of given type can be built using functions. This means that add-on-functions can produce as result objects of user-defined types. These objects can be stored in representation objects as properties, and later used by specific add-on-importers. However, AISL itself provides a few special data-types, not present in the standard java libraries. In particular, the type AISLFile is designed to work in combination with some AISL built-in functions and to optimize the management of files during import, in particular with regard to memory and disk storage resources consumption. An AISLFile object encapsulates information about a given file, such as file length, and allow access to its content. However, the file content is not necessarily stored locally. If the file is obtained through the file() function, then the download of its actual content is deferred, and only performed when needed. When describing a large number of resources, as in the case of large collections, it is not feasible (or anyway not efficient) to store locally to the archive import service all contents that need to be imported. This is especially true for content that has to be imported without having to be processed in any way before the import itself. Even for those files that might need some processing (for instance for extracting information), it might be desirable for the AISL script-writer to be able to import the file, use for the time he needs it (e.g. pass it to the xslt() function) and then free the memory resources used to maintain it, without having to deal directly with the details. The AISL file offers a transparent mechanism to handle access to the content of remote files accessible through a variety of protocols, by having a placeholder (an AISFile object) that can be treated as a file. Internally, an AISLFile implements a caching strategy for documents whose content has to be accessed at resource-description time. For files whose contents have to be handled only at import time, it offers a way to encapsulate easily inside a representation object all the information needed to pass to other service like the storage management or the content management service. To encapsulate complex operations, AISL provides functions that work as in most programming languages. A number of built-in functions is already defined inside the language. These functions cover common needs arising during the definition of resource graphs.,.For instance, the dom() function takes as input an AISFile object and returns a DOM document obtained by parsing the file (or null if the parsing fail, e.g. if the file is not a valid XML file). The language can be expanded by adding new functions. Furthermore, AISL provides extensible-functions, meaning that their functionality can be extended to handle special kinds of arguments. For instance, the file() function allows to retrieve a list of files given a set of arguments that define their location. The built-in function is already able to deal with a number of protocols, including http, ftp and gridftp. However, the function can be extended to handle additional protocols. The motivation behind extensible functions is to keep the syntax of AISL as lean and transparent for the user as possible. The complete syntax of the AISL language and the reference on its built-in functions, together with examples of usage, are detailed in the documentation accompanying with the service. The entire architecture of the AIS is design to offer an high degree of extensibility at various levels. All these mechanisms are based on a plug-in style approach: the service doesn't have to be recompiled, redeployed or even stopped whenever an extension is added to it. An AISL script can be used to specify an AIS import task in a fully flexible way. However, it might be desirable to offer a simpler interface to certain functionality that has to be invoked often without many variations. For instance, in the case when the files to import are all stored at certain directory accessible through ftp, or in the case when a single file available at some location describes the location of files and related metadata, it would be desirable to invoke the AIS with the bare number of parameters needed to perform the task, rather than having to write an AISL script from scratch. For this reason, it is possible to plug-in in the AIS adapters. An adapter is a software module that can be invoked directly from the interface of the AISL with a limited number of parameters and is in charge of building a representation graph. Internally, the adapter may use a parameterized AISL script to perform its job, but also manipulate directly the constructs of the representation model. The AISL language itself provides extension mechanisms. New functions can be defined for the language, and existing extensible functions can be extended to treat a larger number of argument types. The representation model used to describe resources is fully flexible: new types can be defined for collections, objects and relationships, and all these representation constructs can be attached with arbitrary properties of arbitrary types. Regarding the importing phase, new objects types can be handled by defining pluggable importers. These importers will be invoked together with all other importers available to the AIS service, and handle specific types of representation objects. It is to be observed that the definition of new representation constructs and that of new importers are not disjoint activities. In order for new object types to have meaning, there must be an appropriate importer which is able to handle them (otherwise they will just be ignored). In order for an importer to work with a specific kind of object type, the importer must be aware of its interface, i.e. its properties. While properties attached to representation objects can be always accessed by name, in order to make easier the development of importers it is possible to define new representation constructs so that their properties can be accessed via getters and setters. During the description phase, the AISL interpreter will recognize if a new type is connected to a subclass of the related representation construct and build an object of the most specific class, so that later on the importers can work directly on objects of the types they recognize. In order to support these extensibility mechanism, the AIS exploits a simple plug-in pattern. The modules (Java classes) that have to be made available must extend appropriate interfaces/classes defined inside the AIS framework, and be defined in specific Java packages. To be compiled properly, these classes must of course be linked against the rest of the code of the AIS. After compilation, the resulting .class files can be made available to an AIS instance by putting them into a special folder under the control of the AIS instance. The classes will be dynamically loaded and used (partially using the Java reflection facilities). The archive import service supports incremental import. With this term, we denote the fact that if the same collection is imported twice, the import task should only perform the actions needed to update the collection with the changes occurred within the two imports, and not re-import the entire collection. Incremental import requires two features. First, it must be possible to specify that a collection is the same an another collection already imported. For the existing importers, this is achieved by specifying inside the description of a collection the unique identifier of the collection in the related service. For instance, for content collections, this would be the collection ID attached to each collection by the Collection Management Service. After the description of the new collection has been created, the service will compare this description with that resulting from the previous import, and decide which objects must be imported, which must be substituted, and which must be deleted from the collection. When comparing two collections, the import service must know how to decide whether two objects present inside a collection are actually the same object. In order to support this behaviour, the AIS must support two concepts, that of external-object-identity and that of content-equality. External object identity is an external object-identifier. Two objects are considered to be the same if and only if they have the same external identifier. Notice that the external identifier is distinct from the internal-object identity that is used by services to distinguish between objects. Of course, the AIS must ensure a correspondence between internal and external identifiers. Thus, if an object with a given external identifier has been stored with a given internal identifier, then another object is imported with the same external identifier then the AIS will not create a new object, but (possibly) update the contents/properties of the existing object. External Identity is sufficient to decide whether an object to be imported already exists in a given collection. If an object already exists, and it has changed, then it will be updated. Deciding whether an object has changed also requires additional knowledge. For many properties attached to an object, the comparison is straightforward. However, for the actual content of an object this is less immediate. The content of an object (that is a file) might reside at the same location, but differ from that previously imported. Furthermore, comparing the old content with the new content is an expensive task: it requires at least to fetch the entire content of the new object and thus is as expensive as just re-importing the content itself. For this reason the AIS also supports the concept of content-identity. A content-identifier can be specified. If two identical objects (i.e. having the same external-object-identity) have the same content-identifier, than the content is not re-imported. If, on the other hand, the identifier differs, then the new content is re-imported. A content identifier can be a signature of the actual content, or any other string. If a content identifier is not specified, the AIS the content-identifier is obtained exploiting the following information location of the content, size of the content (when available, depends on the particular protocol used), date of last modification of the content (when available, depends on the protocol used), an hash signature of the content (when available from the server, depends on the protocol used. For instance, it is possible to get an MD5 signature of a file from an HTTP server). The AIS also supports continuous import. The term continuous import denotes the fact that the AIS should provide updates for certain collections without need to resubmit the same import task every time. As a way to monitor resources outside the gCube infrastructure is not defined, this means that the same import task will not be executed once, but scheduled for re-execution at appropriate intervals of time.

Resources and Properties

The Archive Import Service is implemented as a stateful WSRF-compliant web-service, following a factory-instance pattern, and publishes a WS-resource for each instance. The WS-resource contains the parameters that are used to create the instance (e.g. the related AISL script), but also contains summary information like the current status of an import (on-going, completed, continuous), the collections involved in the import, information on errors and other information useful for monitoring the import of collections.

Functions

The main functions supported by the Archive Import Service Factory are:

- createImportTask() – which takes as input parameter a message containing a specification of an import action expressed through the AISL scripting language, a specification whether the import should be continuous and an interval of time for the re-execution of the task, and submits it to the appropriate agent managing the import action, returns the EPR of the corresponding instance;

- createAdapterTask() – which takes as input parameter a message containing an adapter component (i.e. an helper implementing the import action accordingly) and the relative parameters, a specification whether the import should be continuous and an interval of time for the re-execution of the task, and submits this import action task to an appropriate agent, managing the import action through the given adapter, and returns the EPR of the corresponding instance;

- registerPlugin() – which takes as input parameter a message containing the URI a new import plug-in can be downloaded and loads such a plug-in to the pool of those equipping the service. The plug-ins will be available to all instances created by the factory afterwards.

The main functions supported buy the Archive Import Manager (i.e. the service acting on the WS-Resource) are:

- stopImportTask() – which takes as input parameter a message specifying a stop rule, and causes the import action performed by the instance to stop. This method is provided to control instances that run a continuous import task.