Difference between revisions of "How to use the DataMiner Pool Manager"


Revision as of 15:22, 5 September 2017


DataMiner Pool Manager

DataMiner Pool Manager service, aka DMPM, is a REST service that rationalizes and automates the process of publishing SAI algorithms on DataMiner nodes and keeps the DataMiner cluster up to date.

Maven coordinates

The second version of the service has been released in gCube 4.6.1. The Maven artifact coordinates are:

    <dependency>
        <groupId>org.gcube.dataanalysis</groupId>
        <artifactId>dataminer-pool-manager</artifactId>
        <version>2.0.0-SNAPSHOT</version>
        <packaging>war</packaging>
    </dependency>

Overview

The service accepts an algorithm descriptor, including its dependencies; generates (via templating) the Ansible playbook, inventory and roles for the relevant components (algorithm installer, algorithms, dependencies); executes the Ansible playbook on a Staging DataMiner; and finally updates the lists of dependencies and algorithms that the Cron job uses for the installation.

In practice, the service takes as input the URL of an algorithm package (including the jar and the metadata), extracts the information needed for the installation, installs the script, updates the list of dependencies, publishes the new algorithm in the Information System, and asynchronously returns the execution outcome to the caller.

Architecture

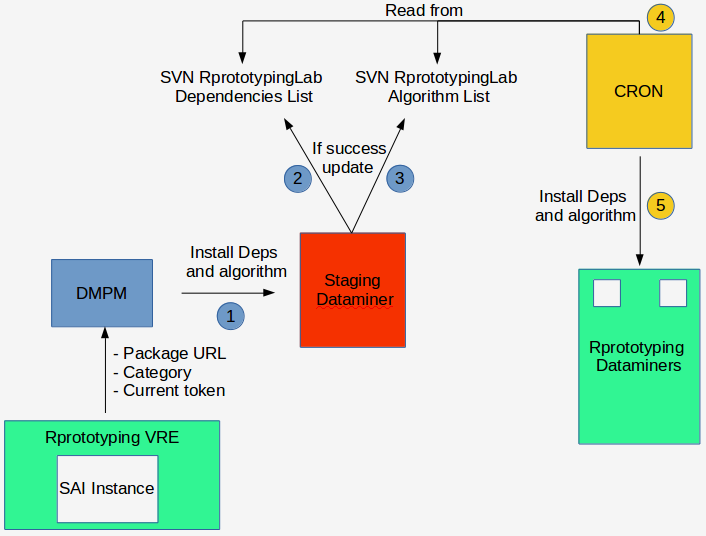

The following main entities will be involved in the process of integration between SAI and the production environment:

- SAI: this component allows the user to upload the package of the algorithm to deploy and to choose the target VRE

- Dataminer Pool Manager: a Smartgears REST service in charge of managing the installation of algorithms on the infrastructure dataminers

- The Staging DataMiner: a dedicated dataminer machine, usable only by the Dataminer Pool Manager, used to test the installation of an algorithm and of its dependencies. Two dataminers in the d4science infrastructure are staging-oriented (this setting can be changed by the user in the configuration file):

- dataminer1-devnext.d4science.org for the development environment

- dataminer-proto-ghost.d4science.org for the production environment

- SVN Dependencies Lists: lists (in files on SVN) of dependencies that must be installed on Dataminer machines. There is one list per type of dependency for each of Dev, RProto and Production.

- SVN Algorithms List: lists (in files on SVN) of algorithms that must be installed on Dataminer machines. The service uses three different lists, one for the Dev environment, one for RProto and another one for the production.

- The Cron job: runs on every Dataminer and periodically (every minute) aligns the packages and the algorithms installed on the machine with the SVN Dependencies Lists and the SVN Algorithms Lists. For the algorithms, the Cron job must be configured to run the command line stored in each record of the SVN list; for the dependencies, it must be configured to read and install from the whole set of dependencies lists. The lists to consider are the following:

- Production Algorithms:

http://svn.research-infrastructures.eu/public/d4science/gcube/trunk/data-analysis/DataMinerConfiguration/algorithms/prod/algorithms

- RProto Algorithms:

http://svn.research-infrastructures.eu/public/d4science/gcube/trunk/data-analysis/DataMinerConfiguration/algorithms/proto/algorithms

- Dev Algorithms:

http://svn.research-infrastructures.eu/public/d4science/gcube/trunk/data-analysis/DataMinerConfiguration/algorithms/dev/algorithms

Process (From SAI to Production VRE)

Until now, SAI was deployed in several scopes and the user could deploy an algorithm only in the current VRE. The idea is to have different instances of SAI in many VREs and to allow the user to specify the target VRE for the deployment.

The process is composed of two main phases:

- STAGING Phase: the installation of an algorithm in the staging dataminer; it ends with the publishing of an algorithm in the pool of dataminers of the target VRE

- The DMPM contacts the Staging Dataminer and installs the algorithm and the dependencies

- The output is retrieved. If there are errors in the installation (e.g. a dependency that does not exist or is misspelled), the process stops and the log is returned to the user (a mail notification is sent to the user and to the VRE administrators).

- The DMPM updates the SVN Algorithms list

- A mail notification is sent to the user and to the VRE administrators

- Cron reads the SVN lists (both Dependencies and Algorithms) and installs the algorithm in the pool of dataminers for the current VRE.

- The script publishes the new algorithm in the Information System.

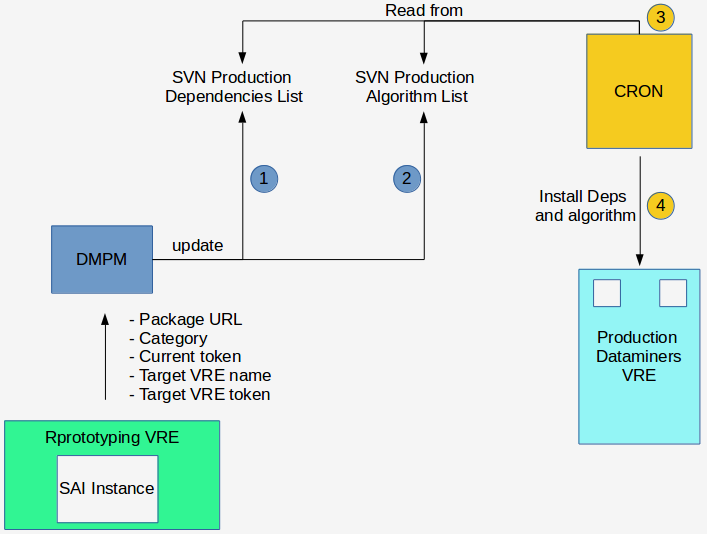

- RELEASE Phase

- SAI will invoke the service working in RELEASE PHASE in order to install the algorithm in a particular VRE of production (provided by the user); SAI will pass to the DMPM the target VRE.

- The DMPM updates the SVN Production Algorithms list

- Cron installs the algorithm in the production dataminers

- A mail notification is sent to the user and to the VRE administrators

- The script publishes the algorithm in the VRE

Configuration and Testing

DMPM is a SmartGears compliant service.

/home/gcube/tomcat/webapps/dataminer-pool-manager-2.0.0-SNAPSHOT

An instance has been deployed and configured at the Development, Preprod and Prod levels.

http://node2-d-d4s.d4science.org:8080/dataminer-pool-manager-2.0.0-SNAPSHOT/rest/

In order to use the service, two manual configuration steps are needed:

- modify the parameter <param-value>Dev</param-value> in the /home/gcube/tomcat/webapps/dataminer-pool-manager/WEB-INF/web.xml file according to the scope where the service runs (Dev, RProto or Prod); the service reads this value dynamically to select the lists of algorithms and dependencies to consider, and to select the staging dataminer.

- edit the file /home/gcube/dataminer-pool-manager/dpmConfig/service.properties. Among other settings, this file contains the staging dataminer to consider (automatically selected based on the environment set in the previous point), and the SVN repositories for the algorithms of each environment and for all the types of dependencies generated from SAI and available in the metadata file. (e.g., the service checks that the name of a dependency is correct by reading the corresponding file according to the language defined in the info.txt file; or for instance, the staging dataminer is ).

    #YML node file
    DEV_STAGING_HOST: dataminer1-devnext.d4science.org
    PROTO_PROD_STAGING_HOST: dataminer-proto-ghost.d4science.org
    SVN_REPO: https://svn.d4science.research-infrastructures.eu/gcube/trunk/data-analysis/RConfiguration/RPackagesManagement/
    #HAPROXY_CSV: http://data.d4science.org/Yk4zSFF6V3JOSytNd3JkRDlnRFpDUUR5TnRJZEw2QjRHbWJQNStIS0N6Yz0

    svn.repository = https://svn.d4science.research-infrastructures.eu/gcube
    svn.algo.main.repo = /trunk/data-analysis/DataMinerConfiguration/algorithms

    svn.rproto.algorithms-list = /trunk/data-analysis/DataMinerConfiguration/algorithms/proto/algorithms
    svn.rproto.deps-linux-compiled =
    svn.rproto.deps-pre-installed =
    svn.rproto.deps-r-blackbox =
    svn.rproto.deps-r = /trunk/data-analysis/RConfiguration/RPackagesManagement/test_r_cran_pkgs.txt
    svn.rproto.deps-java =
    svn.rproto.deps-knime-workflow =
    svn.rproto.deps-octave =
    svn.rproto.deps-python =
    svn.rproto.deps-windows-compiled =

    svn.prod.algorithms-list = /trunk/data-analysis/DataMinerConfiguration/algorithms/prod/algorithms
    svn.prod.deps-linux-compiled =
    svn.prod.deps-pre-installed =
    svn.prod.deps-r-blackbox =
    svn.prod.deps-r = /trunk/data-analysis/RConfiguration/RPackagesManagement/r_cran_pkgs.txt
    svn.prod.deps-java =
    svn.prod.deps-knime-workflow =
    svn.prod.deps-octave =
    svn.prod.deps-python =
    svn.prod.deps-windows-compiled =

    svn.dev.algorithms-list = /trunk/data-analysis/DataMinerConfiguration/algorithms/dev/algorithms
    svn.dev.deps-linux-compiled =
    svn.dev.deps-pre-installed =
    svn.dev.deps-r-blackbox =
    svn.dev.deps-r = /trunk/data-analysis/RConfiguration/RPackagesManagement/r_cran_pkgs.txt
    svn.dev.deps-java =
    svn.dev.deps-knime-workflow =
    svn.dev.deps-octave =
    svn.dev.deps-python =
    svn.dev.deps-windows-compiled =
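As an illustration of how the environment set in web.xml could drive the choice of the algorithms list, the following Python sketch parses the `key = value` entries of service.properties and resolves the list for a given environment. The function names, the mapping from `Dev`/`RProto`/`Prod` to the `dev`/`rproto`/`prod` key prefixes, and the concatenation of `svn.repository` with the list path are assumptions made for this example; the actual service may resolve these values differently.

```python
# Assumed mapping between the web.xml value and the key prefix in service.properties.
ENV_PREFIX = {"Dev": "dev", "RProto": "rproto", "Prod": "prod"}


def parse_properties(text):
    """Parse 'key = value' lines; comments and lines without '=' are skipped."""
    props = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        props[key.strip()] = value.strip()
    return props


def algorithms_list_url(props, environment):
    """Return the SVN URL of the algorithms list for the given environment.

    Joining svn.repository with the list path is an assumption of this sketch.
    """
    prefix = ENV_PREFIX[environment]
    return props["svn.repository"] + props[f"svn.{prefix}.algorithms-list"]
```

Entries left empty in the file (for instance `svn.dev.deps-java =`) are kept with an empty string as value, so a caller can tell a missing list apart from an undefined key.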

Usage and APIs

The DMPM REST Service exposes five main functionalities (three for the staging phase and two for the release phase). The outcome of an execution is monitored asynchronously through a REST call to the log, passing the ID of the operation as parameter. This can be done in both the STAGING and RELEASE phases.

1. STAGING PHASE: a method returning immediately the log ID useful to monitor the execution, able to:

- test the installation of the algorithm on a staging dataminer

- update the algorithms SVN list

The parameters to consider are the following:

- the algorithm (URL to package containing the dependencies and the script to install)

- the targetVRE (at present, the current VRE)

- the category to which the algorithm belongs

- the VRE algorithm_type

An example of a REST call for the installation is the following:

http://node2-d-d4s.d4science.org:8080/dataminer-pool-manager-2.0.0-SNAPSHOT/api/algorithm/stage?gcube-token=***** &algorithmPackageURL=http://data-d.d4science.org/TSt3cUpDTG1teUJMemxpcXplVXYzV1lBelVHTTdsYjlHbWJQNStIS0N6Yz0 &category=BLACK_BOX &algorithm_type=transducerers &targetVRE=/gcube/devNext/NextNext
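The staging request above can also be assembled programmatically. The Python sketch below only builds the URL from the four documented parameters plus the token; the function name `build_stage_url` and the default base URL (copied from the example) are choices made for this illustration, and the HTTP method expected by the service is not stated here, so the sketch stops short of sending the request.

```python
from urllib.parse import urlencode

# Base URL copied from the example above; adjust it to your own deployment.
BASE_URL = "http://node2-d-d4s.d4science.org:8080/dataminer-pool-manager-2.0.0-SNAPSHOT/api"


def build_stage_url(token, package_url, category, algorithm_type, target_vre,
                    base_url=BASE_URL):
    """Return the URL of a staging request for the given algorithm package."""
    params = {
        "gcube-token": token,
        "algorithmPackageURL": package_url,
        "category": category,
        "algorithm_type": algorithm_type,
        "targetVRE": target_vre,
    }
    # urlencode percent-escapes reserved characters such as ':' and '/',
    # which keeps the nested package URL from breaking the query string.
    return f"{base_url}/algorithm/stage?{urlencode(params)}"
```

Percent-encoding the values matters mainly for `algorithmPackageURL` and `targetVRE`, since both contain slashes.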

An example of a REST call for retrieving the log is the following:

http://node2-d-d4s.d4science.org:8080/dataminer-pool-manager-2.0.0-SNAPSHOT/api/log?gcube-token=***** &logUrl=id_from_previous_call

An example of a REST call for monitoring the execution is the following (currently three statuses are available: COMPLETED, IN PROGRESS, FAILED):

http://node2-d-d4s.d4science.org:8080/dataminer-pool-manager-2.0.0-SNAPSHOT/api/monitor?gcube-token=***** &logUrl=id_from_previous_first_call
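Since the staging call returns immediately, a client typically polls the monitor endpoint until the operation reaches a final status. The following Python sketch shows one possible polling loop. It assumes a caller-supplied function `fetch_status` (hypothetical) that performs the monitor call for a given log ID and returns the status as text; the exact format of the service response is not documented here, and the polling interval and attempt limit are arbitrary.

```python
import time

# COMPLETED and FAILED end the operation; IN PROGRESS means "keep waiting".
TERMINAL_STATES = {"COMPLETED", "FAILED"}


def wait_for_outcome(fetch_status, log_id, interval_s=5.0, max_attempts=60,
                     sleep=time.sleep):
    """Poll fetch_status(log_id) until a terminal status is returned.

    fetch_status is a hypothetical callable wrapping the monitor REST call.
    """
    for _ in range(max_attempts):
        status = fetch_status(log_id).strip().upper()
        if status in TERMINAL_STATES:
            return status
        sleep(interval_s)
    raise TimeoutError(f"operation {log_id} still running after {max_attempts} checks")
```

The `sleep` parameter is injectable so that the loop can be exercised without real delays.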

2. RELEASE PHASE: a method invoked from SAI, executed after the Test phase has successfully finished, able to:

- update the SVN list of production with the new algorithms (that is the input for the CRON-job)

| OCTAVEBLACKBOX | Giancarlo Panichi | BLACK_BOX | Dev | <notextile>./addAlgorithm.sh OCTAVEBLACKBOX BLACK_BOX org.gcube.dataanalysis.executor.rscripts.OctaveBlackBox /gcube/devNext/NextNext transducerers N http://data-d.d4science.org/TSt3cUpDTG1teUJMemxpcXplVXYzV1lBelVHTTdsYjlHbWJQNStIS0N6Yz0 "OctaveBlackBox" </notextile> | none | Fri Sep 01 16:58:47 UTC 2017 |
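The record above wraps the command line that the Cron job runs in `<notextile>` tags. As a rough illustration of how such a record could be read, the Python sketch below extracts that command and splits it into shell-style tokens. The helper names are hypothetical, and the meaning of the individual positional arguments of `addAlgorithm.sh` is not described here, so the sketch deliberately leaves them unnamed.

```python
import re
import shlex

# The command is the text between <notextile> and </notextile> in the record.
_COMMAND_RE = re.compile(r"<notextile>(.*?)</notextile>")


def extract_command(record):
    """Return the command wrapped in <notextile> tags, or None when absent."""
    match = _COMMAND_RE.search(record)
    return match.group(1).strip() if match else None


def command_tokens(record):
    """Split the record's command into shell-style tokens (quotes are removed)."""
    command = extract_command(record)
    return shlex.split(command) if command is not None else []
```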

The parameters to consider are the following:

- the algorithm (URL to package containing the dependencies and the script to install)

- the targetVRE

- the category to which the algorithm belongs

- the VRE algorithm_type

An example of a REST call for the publishing is the following:

http://node2-d-d4s.d4science.org:8080/dataminer-pool-manager-2.0.0-SNAPSHOT/api/algorithm/add?gcube-token=***** &algorithmPackageURL=http://data-d.d4science.org/TSt3cUpDTG1teUJMemxpcXplVXYzV1lBelVHTTdsYjlHbWJQNStIS0N6Yz0 &category=BLACK_BOX &algorithm_type=transducers &targetVRE=/gcube/devNext/NextNext

An example of a REST call for the monitoring is the following:

http://node2-d-d4s.d4science.org:8080/dataminer-pool-manager-2.0.0-SNAPSHOT/api/monitor?gcube-token=***** &logUrl=d3ab34a7-cc8e-4e00-b771-bb8536ddb608

DataMinerPoolManager Portlet

Please refer to https://next.d4science.org/group/nextnext/dataminerdeployer for a graphical representation of the service.

Requirements toward the SAI integration

The user lets SAI generate the package. Each package generated by SAI must contain an Info.txt metadata file with the following information specified by the user:

Algorithm Name, Author, Category, Class Name, Packages (list of dependencies)

- The dependencies in the metadata file inside the algorithm package must respect the following guidelines:

- R Dependencies must have prefix cran:

- OS Dependencies must have prefix os:

- Custom Dependencies must have prefix github:

Such dependencies will be stored in the corresponding SVN file without the prefix.

Three buttons will be available in the new SAI interface in order to allow the interaction between SAI and the three methods exposed by the Service.
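The prefix convention can be illustrated with a short Python sketch that groups dependency entries by type and strips the prefix before storage. The function name, the group labels and the choice to reject entries without a known prefix are assumptions of this example, not the behaviour of the service.

```python
# Prefixes listed in the guidelines, mapped to illustrative group labels.
PREFIX_KINDS = {"cran": "R", "os": "OS", "github": "custom"}


def classify_dependencies(entries):
    """Group dependency entries by type, dropping the 'prefix:' part."""
    grouped = {kind: [] for kind in PREFIX_KINDS.values()}
    for entry in entries:
        # Split on the first ':' only, so names containing ':' stay intact.
        prefix, sep, name = entry.strip().partition(":")
        if not sep or prefix not in PREFIX_KINDS or not name:
            raise ValueError(f"dependency without a known prefix: {entry!r}")
        grouped[PREFIX_KINDS[prefix]].append(name)
    return grouped
```

For example, an entry written as `cran:ggplot2` in the metadata file would end up as `ggplot2` in the list of R dependencies.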

- On the host where the Service is deployed, it must be possible to execute the ansible-playbook command, in order to install the dependencies on the staging dataminer and the algorithm on the target VRE

- At least for the staging dataminers used in the test phase, the application must have SSH root access