Difference between revisions of "Data Transformation"

Revision as of 15:55, 21 December 2009


Metadata Broker

Introduction

The main functionality of the Metadata Broker is to convert XML documents from one input schema and/or language to another. The inputs and outputs of the transformation process can be single records, ResultSets or entire collections. In the special case where both the inputs and the output are collections, a persistent transformation is possible: whenever the input collection(s) change, the new data is automatically transformed so that the change is reflected in the output collection.

Transformation Programs

Complex transformation processes are described by transformation programs, which are XML documents. Transformation programs are stored in the IS. Each transformation program can reference other transformation programs and use them as “black-box” components in the transformation process it defines.

Each transformation program consists of:

- One or more data input definitions. Each one defines the schema, language and type (record, ResultSet or collection) of the data that must be mapped to the particular input.

- One or more input variables. Each one of them is a placeholder for an additional string value that must be passed to the transformation program at run time.

- Exactly one data output definition, which contains the output data type (record, ResultSet or collection), schema and language.

- One or more transformation rule definitions.

Note: The name of the input or output schema must be given in the format SchemaName=SchemaURI, where SchemaName is the name of the schema and SchemaURI is the URI of its definition, e.g. DC=http://dublincore.org/schemas/xmls/simpledc20021212.xsd.
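The descriptor format above is what the getSchemaName() and getSchemaURI() accessors of the data handler classes expose separately. A minimal sketch of the splitting logic, assuming the schema name itself never contains '=' (SchemaDescriptor is a hypothetical helper, not a Metadata Broker class):

```java
/** Hypothetical helper: splits a "SchemaName=SchemaURI" descriptor into its two parts. */
final class SchemaDescriptor {
    final String name;
    final String uri;

    private SchemaDescriptor(String name, String uri) {
        this.name = name;
        this.uri = uri;
    }

    /** Splits at the FIRST '=' so that any '=' inside the URI (e.g. a query string) is preserved. */
    static SchemaDescriptor parse(String descriptor) {
        int sep = descriptor.indexOf('=');
        if (sep <= 0 || sep == descriptor.length() - 1) {
            throw new IllegalArgumentException("Expected SchemaName=SchemaURI but got: " + descriptor);
        }
        return new SchemaDescriptor(descriptor.substring(0, sep), descriptor.substring(sep + 1));
    }
}
```

For the Dublin Core example above, parse() yields the name "DC" and the URI http://dublincore.org/schemas/xmls/simpledc20021212.xsd.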

Transformation Rules

Transformation rules are the building blocks of transformation programs. Each transformation program contains at least one transformation rule. Transformation rules describe simple transformations and execute in the order in which they are defined inside the transformation program. Usually the output of a transformation rule is the input of the next one, so a transformation program can be thought of as a chain of transformation rules that work together to perform the complex transformation defined by the whole transformation program.

Each transformation rule consists of:

- One or more data input definitions. Each definition contains the schema, language, type (record, ResultSet, collection or input variable) and data reference of the input it describes. Each one of these elements (except for the 'type' element) can be either a literal value, or a reference to another value defined inside the transformation program (using XPath syntax).

- Exactly one data output, which can be:

  - A definition that contains the output data type (record, ResultSet or collection), schema and language.

  - A reference to the transformation program's output (using XPath syntax). This is the way to express that the output of this transformation rule will also be the output of the whole transformation program, so such a reference is only valid for the transformation program's final rule.

- The name of the underlying program to execute in order to do the transformation, using standard 'packageName.className' syntax.

A transformation rule can also be a reference to another transformation program. This way, whole transformation programs can be used as parts of the execution of another transformation program. The reference is made using the unique ID of the referenced transformation program and a set of value assignments to its data inputs and variables.

Note: The name of the input or output schema must be given in the format SchemaName=SchemaURI, where SchemaName is the name of the schema and SchemaURI is the URI of its definition, e.g. DC=http://dublincore.org/schemas/xmls/simpledc20021212.xsd.

Variable fields inside data input/output definitions

Inside the definition of data inputs and outputs of transformation programs and transformation rules, any field except for 'Type' can be declared as a variable field. Just like input variables, variable fields get their values through run-time assignments. To declare an element as a variable field of its parent element, include isVariable="true" in the element's definition. When the caller invokes a broker operation in order to transform some metadata, he/she can provide a set of value assignments to the input variables and variable fields of the transformation program definition.

However, the caller has access only to the variables of the whole transformation program, not to those of the internal transformation rules, even though transformation rules can also contain variable fields in their input/output definitions. Since the caller cannot explicitly assign values to them, such variable fields must contain an XPath expression as their value, pointing to another element inside the transformation program that contains the value to be assigned. These references are resolved when each transformation rule is executed. So if, for example, a variable field of a transformation rule's input definition points to a variable field of the previous transformation rule's output definition, the referenced element's value is guaranteed to be there when the second transformation rule executes. Note that every XPath expression must specify an absolute location inside the document, which means it must start with '/'.
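Resolving such a reference amounts to evaluating the XPath expression against the whole transformation program document. The following is a minimal sketch using the standard javax.xml.xpath API; VariableFieldResolver is a hypothetical class, and the Metadata Broker is not necessarily implemented this way. It also rejects relative expressions, following the absolute-path rule above.

```java
import java.io.StringReader;
import javax.xml.parsers.DocumentBuilderFactory;
import javax.xml.xpath.XPath;
import javax.xml.xpath.XPathExpressionException;
import javax.xml.xpath.XPathFactory;
import org.w3c.dom.Document;
import org.xml.sax.InputSource;

/** Hypothetical helper that resolves the XPath references held by variable fields. */
final class VariableFieldResolver {
    private final Document program;
    private final XPath xpath = XPathFactory.newInstance().newXPath();

    /** Parses the XML definition of a transformation program. */
    VariableFieldResolver(String transformationProgramXml) {
        Document parsed;
        try {
            parsed = DocumentBuilderFactory.newInstance()
                    .newDocumentBuilder()
                    .parse(new InputSource(new StringReader(transformationProgramXml)));
        } catch (Exception e) {
            throw new IllegalArgumentException("Cannot parse transformation program", e);
        }
        this.program = parsed;
    }

    /** Evaluates an absolute XPath reference and returns the text of the referenced element. */
    String resolve(String reference) {
        String expr = reference.trim(); // references may be padded with spaces inside the element
        if (!expr.startsWith("/")) {
            throw new IllegalArgumentException("XPath reference must be absolute: " + expr);
        }
        try {
            return xpath.evaluate(expr, program).trim();
        } catch (XPathExpressionException e) {
            throw new IllegalArgumentException("Invalid XPath reference: " + expr, e);
        }
    }
}
```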

There is a special case where the language and schema fields of a transformation program's data input definition can be assigned values automatically, without requiring the caller to do so. This happens when the type of the particular data input is set to collection. In this case, the Metadata Broker Service automatically retrieves the format of the metadata collection identified by the ID given in the Reference field of the data input definition, and assigns the collection's actual schema descriptor and language identifier to the respective variable fields of the data input definition. If any of these fields already contains a value, that value is compared with the one retrieved from the metadata collection's profile; if they differ, execution of the transformation program stops and the Metadata Broker service throws an exception. Note that automatic value assignment works only on data inputs of transformation programs and NOT on data inputs of individual transformation rules.
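The assign-or-compare rule above can be sketched as follows. This assumes that an empty field means "not yet assigned", that values are compared after trimming whitespace, and that a mismatch surfaces as an IllegalStateException; CollectionFormatCheck is hypothetical, and the exception type actually thrown by the service is not specified here.

```java
/** Hypothetical sketch of the check applied to the Schema/Language fields of a collection-typed input. */
final class CollectionFormatCheck {
    private CollectionFormatCheck() {}

    /**
     * Returns the value to assign to the field: the value from the collection profile if the
     * field is empty, or the same value if the declared one already matches it.
     */
    static String assign(String fieldName, String declaredValue, String profileValue) {
        if (declaredValue == null || declaredValue.trim().length() == 0) {
            return profileValue; // not assigned by the caller: take it from the collection profile
        }
        if (!declaredValue.trim().equals(profileValue)) {
            // Mismatch: execution of the transformation program must stop.
            throw new IllegalStateException(fieldName + " mismatch: declared '" + declaredValue.trim()
                    + "' but the collection profile says '" + profileValue + "'");
        }
        return profileValue;
    }
}
```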

Programs

A program (not to be confused with a transformation program) is the Java class that performs the actual transformation on the input data. A transformation rule is just an XML description of the interface (inputs and output) of a program.

There are no specific methods that the Java class of a program should define in order to be invokable from the Metadata Broker. Each program can define any number of methods, but when the transformation rule which references it is executed, the Metadata Broker service will use reflection in order to locate the correct method to call based on the input and output types defined in the transformation rule that initiates the call to the program's transformation method. The execution process is the following:

- A client invokes the Metadata Broker requesting the execution of a transformation program.

- For each transformation rule found in the transformation program:

  - The Metadata Broker reads the schema, language and type of the transformation rule's inputs, as well as the actual payloads given as inputs. The output format descriptor is also read.

  - Based on this information, the Metadata Broker constructs one or more DataSource and/or VariableType objects and a DataSink object, which are wrapper classes around the transformation rule's input and output descriptors. For each input of type 'Record', 'Collection' or 'ResultSet', a DataSource object is created, while a VariableType object is created for every input of type 'Variable'.

  - The program to be invoked for the transformation is read from the transformation rule.

  - The Metadata Broker uses reflection in order to locate the transformation method to be called inside the program. This is done through the input and output descriptors of the transformation rule, based on the following rules:

    - If the transformation rule defines N inputs and one output (where N>=1), the method that will be called should take N+3 parameters.

    - When the method is called, the first N parameters are the constructed DataSource or VariableType objects that wrap the actual inputs of the transformation rule.

    - Parameter N+1 is the constructed DataSink object that wraps the actual data output of the transformation rule.

    - Parameter N+2 is a BrokerStatistics object, which can be used for the logging of performance metrics during the transformation.

    - Parameter N+3 is a SecurityManager object, which can be used for the handling of credentials and scoping if other services need to be invoked during the transformation.
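The lookup rules above can be illustrated with plain Java reflection. This is a minimal sketch, not the Metadata Broker's actual lookup code: it assumes a method is selected only by its parameter count (N+3) and by whether each runtime argument is an instance of the corresponding parameter type. Source, Variable, Sink, Stats and Security are hypothetical stand-ins for DataSource, VariableType, DataSink, BrokerStatistics and SecurityManager.

```java
import java.lang.reflect.Method;

// Hypothetical stand-ins for the broker's wrapper types, for illustration only.
class Source {}
class Variable {}
class Sink {}
class Stats {}
class Security {}

class SampleProgram {
    // N = 2 inputs (a data source and a variable), so the method takes 2 + 3 = 5 parameters.
    public void transform(Source in, Variable xslt, Sink out, Stats stats, Security sec) {
        // the actual transformation would happen here
    }
}

final class TransformMethodLocator {
    private TransformMethodLocator() {}

    /**
     * Returns the first public method of programClass whose parameter list accepts the
     * given arguments in order: N inputs, then the sink, the statistics and the security object.
     */
    static Method locate(Class<?> programClass, Object... args) {
        for (Method m : programClass.getMethods()) {
            Class<?>[] params = m.getParameterTypes();
            if (params.length != args.length) {
                continue; // wrong arity: cannot be the N+3 parameter method
            }
            boolean matches = true;
            for (int i = 0; i < params.length && matches; i++) {
                matches = params[i].isInstance(args[i]);
            }
            if (matches) {
                return m;
            }
        }
        throw new IllegalArgumentException("No method in " + programClass.getName()
                + " accepts these " + args.length + " arguments");
    }
}
```

Once located, such a method could be called with Method.invoke(programInstance, args).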

The DataSource class is defined as:

public abstract class DataSource<T extends DataElement> {

    // 'format' (the source data's format descriptor) is declared elsewhere; its declaration is not shown here.

    /**
     * Returns true or false, depending on whether there are more elements to be read from
     * the data source or not.
     * @return true if more elements can be read, false otherwise
     */
    public abstract boolean hasNext();

    /**
     * Reads the next available element from the data source.
     * @return the next element
     */
    public abstract T getNext() throws Exception;

    /**
     * Returns the language of the source data
     * @return the language
     */
    public String getLanguage() {
        return format.getLanguage();
    }

    /**
     * Returns the schema of the source data, in
     * '<NAME>=<URI>' format.
     * @return the schema
     */
    public String getSchema() {
        return format.getSchema();
    }

    /**
     * Returns the schema URI of the source data
     * @return the schema URI
     */
    public String getSchemaURI() {
        return format.getSchemaURI();
    }

    /**
     * Returns the schema name of the source data
     * @return the schema name
     */
    public String getSchemaName() {
        return format.getSchemaName();
    }
}

This base class is further specialized for different data source types (record, ResultSet or collection) in three subclasses, but the author of a program does not need to be aware of this, since the data access interface is the same for every data source. A program can read the next record from a data source by calling the getNext() method, and check if there are more records to be read by calling the hasNext() method. The type of object returned by getNext() depends on the actual type of input wrapped by the DataSource and can be a RecordDataElement, a ResultSetDataElement or a CollectionDataElement.

The DataSink class is defined as:

public abstract class DataSink<T extends DataElement> {

    // 'format' (the output data's format descriptor) is declared elsewhere; its declaration is not shown here.

    /**
     * Writes an element to the DataSink.
     * @param element the element to be written
     */
    public abstract void writeNext(T element) throws Exception;

    /**
     * Signals the end of writing.
     */
    public abstract void finishedWriting() throws Exception;

    /**
     * Returns a handle to the written data.
     * @return the record payload, ResultSet locator or collection ID, depending on the sink type
     */
    public abstract String getWrittenDataHandle() throws Exception;

    /**
     * Creates a new DataElement derived from a given source DataElement,
     * with a different payload.
     * @param source the source DataElement
     * @param payload the new DataElement's payload
     * @return the new DataElement, compatible with this sink
     */
    public abstract T getNewDataElement(DataElement source, String payload);

    /**
     * Returns the language of the output data
     * @return the language
     */
    public String getLanguage() {
        return format.getLanguage();
    }

    /**
     * Returns the schema of the output data, in
     * '<NAME>=<URI>' format.
     * @return the schema
     */
    public String getSchema() {
        return format.getSchema();
    }

    /**
     * Returns the schema URI of the output data
     * @return the schema URI
     */
    public String getSchemaURI() {
        return format.getSchemaURI();
    }

    /**
     * Returns the schema name of the output data
     * @return the schema name
     */
    public String getSchemaName() {
        return format.getSchemaName();
    }
}

Just like data sources, this base class is further specialized for different data sink types (record, ResultSet or collection) in three subclasses. A program can write a record to the data sink by calling the writeNext() method. When the program completes the whole writing process, it has to inform the data sink simply by calling the finishedWriting() method. The writeNext() method accepts an object whose type is a subclass of the DataElement class. The actual type of the object depends on the actual type of output wrapped by the DataSink and can be a RecordDataElement, a ResultSetDataElement or a CollectionDataElement. But how does the program construct such objects? The DataSink class offers the getNewDataElement(DataElement source, String payload) method, whose purpose is to construct a new data element compatible with the specific data sink. The 'source' parameter is the original DataElement (the one read from a DataSource) being transformed, and the 'payload' parameter is the transformed payload that will be stored inside the produced DataElement. Finally, a handle to the output data can be retrieved by calling the getWrittenDataHandle() method on the DataSink. The output data handle is the transformed record payload if the sink is a RecordDataSink, a ResultSet locator if the sink is a ResultSetDataSink or a metadata collection ID if the sink is a CollectionDataSink.

Generally speaking, the main logic in a program will be something like this:

- while (source.hasNext()) do the following:

  - sourceElement = source.getNext();

  - (transform sourceElement to produce 'transformedPayload')

  - destElement = sink.getNewDataElement(sourceElement, transformedPayload);

  - sink.writeNext(destElement);

- sink.finishedWriting();
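The loop above can be made concrete with a small, self-contained Java sketch. It uses hypothetical, simplified stand-ins (SimpleElement, ListSource, ListSink) instead of the library's generic DataElement, DataSource and DataSink classes, and an upper-casing step as a placeholder for a real transformation such as an XSLT.

```java
import java.util.ArrayList;
import java.util.Iterator;
import java.util.List;
import java.util.Locale;

// Hypothetical, simplified stand-ins for DataElement, DataSource and DataSink (no generics, no formats).
class SimpleElement {
    final String id;
    final String payload;

    SimpleElement(String id, String payload) {
        this.id = id;
        this.payload = payload;
    }
}

class ListSource {
    private final Iterator<SimpleElement> it;

    ListSource(List<SimpleElement> elements) {
        this.it = elements.iterator();
    }

    boolean hasNext() { return it.hasNext(); }

    SimpleElement getNext() { return it.next(); }
}

class ListSink {
    final List<SimpleElement> written = new ArrayList<SimpleElement>();
    boolean finished = false;

    SimpleElement getNewDataElement(SimpleElement source, String payload) {
        // Keep the source ID so that each output element can be traced back to its input.
        return new SimpleElement(source.id, payload);
    }

    void writeNext(SimpleElement element) { written.add(element); }

    void finishedWriting() { finished = true; }
}

final class UpperCaseProgram {
    private UpperCaseProgram() {}

    // The main program logic: read, transform, wrap, write, and signal completion once at the end.
    static void transform(ListSource source, ListSink sink) {
        while (source.hasNext()) {
            SimpleElement sourceElement = source.getNext();
            String transformedPayload = sourceElement.payload.toUpperCase(Locale.ROOT); // placeholder step
            SimpleElement destElement = sink.getNewDataElement(sourceElement, transformedPayload);
            sink.writeNext(destElement);
        }
        sink.finishedWriting(); // outside the loop: called exactly once
    }
}
```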

Implementation Overview

The metadata broker consists of two components:

- The metadata broker service

The metadata broker service provides the functionality of the metadata broker in the form of a stateless service. In the case of a persistent transformation, the service creates a WS-Resource holding information about this transformation and registers for notifications concerning changes in the input collection(s). The created resources are not published and remain completely invisible to the caller.

The service exposes the following operations:

- transform(TransformationProgramID, params) -> String

This operation takes the ID of a transformation program stored in the IS and a set of transformation parameters. The referenced transformation program is executed using the provided parameters, which are just a set of value assignments to variables defined inside the transformation program. The metadata broker library contains a helper class for creating such a parameter set.

- transformWithNewTP(TransformationProgram, params) -> String

This operation offers the same functionality as the previous one. However, in this case the first parameter is the full XML definition of a transformation program in string format, not the ID of a stored one.

- findPossibleTransformationPrograms(InputDesc, OutputDesc) -> TransformationProgram[]

This operation takes the description of an input format (type, language and schema) as well as the description of a desired output format, and returns an array of transformation program definitions that could be used to perform the required conversion. These transformation programs need not exist before the operation is invoked: they are produced on the fly by combining all existing transformation programs that are compatible with each other, in an attempt to synthesize more complex transformation programs. Of course, if an existing transformation program is already applicable to the requested type of transformation, it is included in the results. If the output format is null, the returned array contains all transformation programs that can be applied to the specified input format, producing any possible output format.


- The metadata broker library

The metadata broker library contains the definitions of the DataSource, RecordDataSource, ResultSetDataSource, CollectionDataSource, DataSink, RecordDataSink, ResultSetDataSink, CollectionDataSink, DataElement, RecordDataElement, ResultSetDataElement, CollectionDataElement, VariableType, SecurityManager and BrokerStatistics Java classes. The following programs are also included in it:

- Generic XSLT transformer (XSLT_Transformer): transforms a given record, ResultSet or metadata collection using a given XSLT definition. The output is the transformed record, ResultSet or metadata collection.

- Custom programs transforming metadata to RowSet format, used for feeding the various types of indices in the infrastructure:

- ES metadata to geo RowSet format (ES_2_geoRowset): transforms ES metadata to RowSets suitable for feeding a geospatial index. The transformation is done using a predefined XSLT (given as a parameter to the program) and then the indexType is also injected in every produced RowSet so that the index can find this information.

- Metadata to fulltext RowSet format (Metadata_2_ftsIndexRowset): transforms metadata of any type to RowSets suitable for feeding a full text index. The transformation is done using a predefined XSLT (given as a parameter to the program) and then some custom processing is done over the produced RowSets so that the indexType as well as the OIDs of the original metadata are injected in the RowSets.

- Transforming metadata with any of the above programs is a non-blocking operation: the caller does not block until the transformation is completed, since transforming a big ResultSet or collection may be quite time-consuming. For this purpose, each program spawns a new thread to perform the transformation in the background, while the output data handle, which is created before the transformation begins, is returned to the caller immediately. The only exception to this thread-spawning mechanism is the transformation of single records: such transformations are fast, so there is no need for background processing.

- Each program is placed in a java package of its own, beginning with ‘org.gcube.metadatamanagement.metadatabrokerlibrary.programs’. However, this is just a convention followed for the default programs contained in the metadata broker library. There is no restriction on the package names of user-defined programs. In order for user-defined programs to be accessible by the Metadata Broker, they should be put in JAR files and copied to the ‘lib’ directory under the installation directory of gCore (or to any directory that belongs to the CLASSPATH environment variable).
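The findPossibleTransformationPrograms operation described above composes compatible transformation programs on the fly. One way to picture that composition is as a graph search over formats. The sketch below is a hypothetical illustration, not the service's algorithm: it models each format (type, language and schema) as a single string, each existing program as a single-input edge from one format to another, and returns just one shortest chain, whereas the real operation returns every applicable program.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Collections;
import java.util.Deque;
import java.util.HashMap;
import java.util.List;
import java.util.Map;

final class ProgramChainer {
    private ProgramChainer() {}

    // Hypothetical model of an existing transformation program: converts format 'from' into format 'to'.
    static final class Program {
        final String name, from, to;

        Program(String name, String from, String to) {
            this.name = name;
            this.from = from;
            this.to = to;
        }
    }

    /**
     * Breadth-first search over formats. Returns the program names of a shortest chain from
     * input to output (empty if input equals output), or null if output is unreachable.
     */
    static List<String> shortestChain(List<Program> programs, String input, String output) {
        Map<String, Program> reachedBy = new HashMap<String, Program>(); // format -> program that produced it
        Deque<String> queue = new ArrayDeque<String>();
        reachedBy.put(input, null);
        queue.add(input);
        while (!queue.isEmpty()) {
            String format = queue.poll();
            if (format.equals(output)) {
                // Walk back from the output format to rebuild the chain, then reverse it.
                List<String> chain = new ArrayList<String>();
                for (Program p = reachedBy.get(format); p != null; p = reachedBy.get(p.from)) {
                    chain.add(p.name);
                }
                Collections.reverse(chain);
                return chain;
            }
            for (Program p : programs) {
                if (p.from.equals(format) && !reachedBy.containsKey(p.to)) {
                    reachedBy.put(p.to, p);
                    queue.add(p.to);
                }
            }
        }
        return null;
    }
}
```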

Dependencies

- Metadata Broker Service

  - jdk 1.5

  - gCore

  - Metadata Broker Library

  - Metadata Manager Library

  - Metadata Manager Stubs

- Metadata Broker Library

  - jdk 1.5

  - gCore

  - ResultSet bundle

  - Metadata Manager Stubs

  - Metadata Manager Library

Usage Examples

The following examples show how some of the transformation programs contained in the metadata broker library can be used. For this purpose, the client-side code is shown, describing the necessary steps to invoke the operations of the metadata broker service. Furthermore, the full definition of the referenced programs and transformation programs is also given. These definitions can be used as the base for creating new programs and transformation programs by anyone who needs to do this.

Transforming a ResultSet using an XSLT

This is the XSLT_Transformer class (included in the metadata broker library), which performs the actual conversion:

package org.gcube.metadatamanagement.metadatabrokerlibrary.programs.XSLT_Transformer;

import java.io.StringReader;
import java.io.StringWriter;
import java.rmi.RemoteException;

import javax.xml.transform.OutputKeys;
import javax.xml.transform.Templates;
import javax.xml.transform.Transformer;
import javax.xml.transform.TransformerFactory;
import javax.xml.transform.stream.StreamResult;
import javax.xml.transform.stream.StreamSource;

import org.gcube.common.core.utils.logging.GCUBELog;
import org.gcube.metadatamanagement.metadatabrokerlibrary.datahandlers.DataElement;
import org.gcube.metadatamanagement.metadatabrokerlibrary.datahandlers.DataSink;
import org.gcube.metadatamanagement.metadatabrokerlibrary.datahandlers.DataSource;
import org.gcube.metadatamanagement.metadatabrokerlibrary.datahandlers.RecordDataSource;
import org.gcube.metadatamanagement.metadatabrokerlibrary.programs.VariableType;
import org.gcube.metadatamanagement.metadatabrokerlibrary.util.GenericResourceRetriever;
import org.gcube.metadatamanagement.metadatabrokerlibrary.util.SecurityManager;
import org.gcube.metadatamanagement.metadatabrokerlibrary.util.stats.BrokerStatistics;
import org.gcube.metadatamanagement.metadatabrokerlibrary.util.stats.BrokerStatisticsConstants;

public class XSLT_Transformer {

    /** The Logger this class uses */
    private static GCUBELog log = new GCUBELog(XSLT_Transformer.class);

    public <T extends DataElement, S extends DataElement> void transform(final DataSource<T> source, final VariableType xslt, final DataSink<S> sink, final BrokerStatistics statistics, final SecurityManager secManager) throws RemoteException {
        /* If the input is a single record, there is no point in spawning a new thread for the
         * transformation because it will not last for a long time. In any other case, the
         * transformation is executed in a new thread.
         */
        if (source instanceof RecordDataSource) {
            log.debug("Input type is 'record', transforming in current thread.");
            doTransform(source, xslt, sink, statistics, secManager);
        }
        else {
            log.debug("Input type is not 'record', spawning new thread.");
            Thread t = new Thread() {
                public void run() {
                    doTransform(source, xslt, sink, statistics, secManager);
                }
            };
            /* Delegate this thread's credentials to the new thread, and start it */
            secManager.delegateCredentialsAndScopeToThread(t);
            t.start();
        }
    }

    private <T extends DataElement, S extends DataElement> void doTransform(final DataSource<T> source, final VariableType xslt, final DataSink<S> sink, final BrokerStatistics statistics, final SecurityManager secManager) {
        log.debug("Starting transformation, scope is: " + secManager.getScope());

        /* Retrieve the XSLT from the IS and compile it so that the records will be transformed faster */
        String xsltdef = null;
        try {
            statistics.startMeasuringValue(BrokerStatisticsConstants.STAT_TIMETORETRIEVEGR);
            xsltdef = GenericResourceRetriever.retrieveGenericResource(xslt.getReference(), secManager);
            statistics.doneMeasuringValue(BrokerStatisticsConstants.STAT_TIMETORETRIEVEGR);
        }
        catch (Exception e) {
            log.error("XSLT_Transformer: Failed to retrieve the given XSLT from the IS (Generic Resource ID: " +
                    xslt.getReference() + ").", e);
            return;
        }

        Templates compiledXSLT = null;
        try {
            TransformerFactory factory = TransformerFactory.newInstance();
            compiledXSLT = factory.newTemplates(new StreamSource(new StringReader(xsltdef)));
        } catch (Exception e) {
            log.error("XSLT_Transformer: Failed to compile the XSLT with ID: " + xslt.getReference(), e);
            /* Without a compiled XSLT no element can be transformed, so abort */
            return;
        }

        /* Loop through each source element, transform it, and store it in the resulting entity */
        statistics.startMeasuringValue(BrokerStatisticsConstants.STAT_TIMETOTRANSFORMALL);
        while (source.hasNext()) {
            T sourceElement = null;
            S destElement = null;
            try {
                sourceElement = source.getNext();
            } catch (Exception e) {
                log.error("XSLT_Transformer: Failed to retrieve next element from DataSource. Aborting transformation", e);
                return;
            }

            StringWriter output = new StringWriter();
            try {
                statistics.startMeasuringValue(BrokerStatisticsConstants.STAT_TIMETOTRANSFORMREC);
                Transformer t = compiledXSLT.newTransformer();
                t.setOutputProperty(OutputKeys.OMIT_XML_DECLARATION, "yes");
                t.transform(new StreamSource(new StringReader(sourceElement.getPayload())), new StreamResult(output));
                statistics.doneMeasuringValue(BrokerStatisticsConstants.STAT_TIMETOTRANSFORMREC);
            } catch (Exception e) {
                log.error("Failed to transform current element (ID = " + sourceElement.getID() + "):\n" +
                        sourceElement.getPayload() + "\nContinuing with the next element.", e);
                continue;
            }

            try {
                destElement = sink.getNewDataElement(sourceElement, output.toString());
                sink.writeNext(destElement);
            } catch (Exception e) {
                log.error("Failed to store the transformed record to the data sink. Continuing with the next element.", e);
                continue;
            }
        }
        statistics.doneMeasuringValue(BrokerStatisticsConstants.STAT_TIMETOTRANSFORMALL);

        /* Notify the data sink that there is no more data to be written */
        try {
            sink.finishedWriting();
        } catch (Exception e) {
            log.error("Failed to finalize writing of output data.", e);
        }
    }
}

The only transformation method that can be used externally (when this program is called by a transformation program) is:

public <T extends DataElement, S extends DataElement> void transform(final DataSource<T> source, final VariableType xslt, final DataSink<S> sink, final BrokerStatistics statistics, final SecurityManager secManager)

Taking a closer look at the declaration of this method, we can see that the first parameter is a DataSource, followed by a VariableType and then by a DataSink. This means that the transformation rule that wraps this program has two inputs: the first one is a data input and the second one is a variable. Finally, a BrokerStatistics object and a SecurityManager object are passed to the method so that the program has access to the Metadata Broker's statistics and security engines. Note that since we cannot know at design time the type of input and output (they may be records, ResultSets or collections), we have to use Java generics. The correct way to write a transformation method is to follow the pattern shown in this example, i.e. to use two generic types: T as a placeholder for the actual input data type and S as a placeholder for the output data type.

First, the transformation method checks the input data type. If the input is a single record, the transformation can be done immediately in the current thread; in any other case, a new thread must be spawned. In both cases, the doTransform() method is eventually called, which is responsible for the actual transformation process. Pay attention to these two lines:

secManager.delegateCredentialsAndScopeToThread(t);
t.start();

The first line uses the Metadata Broker's SecurityManager to delegate the scope and credentials of the current thread to the new thread. You should always do this when spawning a new thread inside a program, so that the new thread can successfully invoke other gCube services if needed. The second line starts the execution of the new thread. The important thing to note here is that scope and credentials delegation must be done before the new thread starts executing.
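To see why the order matters, here is a minimal sketch of per-thread scope delegation, assuming scopes are kept in a registry keyed by Thread. ScopeRegistry and the scope string used in the usage note are hypothetical; gCube's SecurityManager may work quite differently. Because the entry is written before start(), and Thread.start() happens-before every action of the started thread, the new thread is guaranteed to see its scope from its very first instruction.

```java
import java.util.Map;
import java.util.concurrent.ConcurrentHashMap;

/** Hypothetical stand-in for the SecurityManager's per-thread scope bookkeeping. */
final class ScopeRegistry {
    // Scopes keyed by thread; entries are never removed in this sketch.
    private static final Map<Thread, String> SCOPES = new ConcurrentHashMap<Thread, String>();

    private ScopeRegistry() {}

    static void setScope(Thread thread, String scope) {
        SCOPES.put(thread, scope);
    }

    static String currentScope() {
        return SCOPES.get(Thread.currentThread());
    }

    /** Copies the calling thread's scope to target; must be called before target.start(). */
    static void delegateScopeToThread(Thread target) {
        String scope = currentScope();
        if (scope != null) { // ConcurrentHashMap rejects null values
            SCOPES.put(target, scope);
        }
    }
}
```

Usage mirrors the two lines above: create the worker thread, call ScopeRegistry.delegateScopeToThread(worker), and only then call worker.start().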

Now let's take a look at doTransform(). First of all, you can see these three lines of code:

statistics.startMeasuringValue(BrokerStatisticsConstants.STAT_TIMETORETRIEVEGR);
xsltdef = GenericResourceRetriever.retrieveGenericResource(xslt.getReference(), secManager);
statistics.doneMeasuringValue(BrokerStatisticsConstants.STAT_TIMETORETRIEVEGR);

This piece of code uses the GenericResourceRetriever class (part of the Metadata Broker Library) to retrieve the XML definition of a generic resource from the IS. XSLTs are stored as generic resources, so we can retrieve them in this way. The first and third lines have to do with the Metadata Broker's statistics engine. The Metadata Broker keeps various performance statistics, one of which is the time required to retrieve a generic resource from the IS (since this is a common task for programs). So, what we want to do is to measure the duration of the retrieveGenericResource() call. This is done by placing the call inside a startMeasuringValue()/doneMeasuringValue() block. The parameter to both methods is the name of the performance metric being measured; all supported performance metrics are defined as constants in the BrokerStatisticsConstants class. After the XSLT has been retrieved, it is compiled so that it can be applied faster to XML documents.
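The start/done bracketing pattern can be sketched with a minimal, single-threaded statistics class whose method names mirror the ones above. SimpleStatistics is hypothetical and is not the BrokerStatistics implementation; it simply records the last duration per metric name.

```java
import java.util.HashMap;
import java.util.Map;

/** Hypothetical, single-threaded stand-in for BrokerStatistics. */
final class SimpleStatistics {
    private final Map<String, Long> startedAt = new HashMap<String, Long>();
    private final Map<String, Long> durations = new HashMap<String, Long>();

    void startMeasuringValue(String metric) {
        startedAt.put(metric, System.nanoTime());
    }

    void doneMeasuringValue(String metric) {
        Long start = startedAt.remove(metric);
        if (start != null) { // ignore a "done" without a matching "start"
            durations.put(metric, System.nanoTime() - start);
        }
    }

    /** Returns the last measured duration in nanoseconds, or null if none was recorded. */
    Long lastDurationNanos(String metric) {
        return durations.get(metric);
    }
}
```

One design choice worth considering when using such a class is to call doneMeasuringValue() from a finally block, so that the measurement is closed even when the measured call throws; the metric names passed in would normally be constants such as those in BrokerStatisticsConstants.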

The following piece of code is the main transformation loop:

statistics.startMeasuringValue(BrokerStatisticsConstants.STAT_TIMETOTRANSFORMALL);
while (source.hasNext()) {
    T sourceElement = null;
    S destElement = null;
    try {
        sourceElement = source.getNext();
    } catch (Exception e) {
        log.error("XSLT_Transformer: Failed to retrieve next element from DataSource. Aborting transformation", e);
        return;
    }

    StringWriter output = new StringWriter();
    try {
        statistics.startMeasuringValue(BrokerStatisticsConstants.STAT_TIMETOTRANSFORMREC);
        Transformer t = compiledXSLT.newTransformer();
        t.setOutputProperty(OutputKeys.OMIT_XML_DECLARATION, "yes");
        t.transform(new StreamSource(new StringReader(sourceElement.getPayload())), new StreamResult(output));
        statistics.doneMeasuringValue(BrokerStatisticsConstants.STAT_TIMETOTRANSFORMREC);
    } catch (Exception e) {
        log.error("Failed to transform current element (ID = " + sourceElement.getID() + "):\n" +
                sourceElement.getPayload() + "\nContinuing with the next element.", e);
        continue;
    }

    try {
        destElement = sink.getNewDataElement(sourceElement, output.toString());
        sink.writeNext(destElement);
    } catch (Exception e) {
        log.error("Failed to store the transformed record to the data sink. Continuing with the next element.", e);
        continue;
    }
}
statistics.doneMeasuringValue(BrokerStatisticsConstants.STAT_TIMETOTRANSFORMALL);

First of all you can see that the whole loop is placed inside a performance statistics measuring block. The metric in this case is the time it takes to transform the whole input (i.e. all records). The loop itself is nothing special, just an iteration over the source data records, where the compiled XSLT is used to transform them to the desired format. Then, the produced records are sent to the data sink.

try {
    sink.finishedWriting();
} catch (Exception e) {
    log.error("Failed to finalize writing of output data.", e);
}

Finally, this piece of code informs the data sink that we are done producing output data.

The program analyzed above is suitable for XSLT-based transformations on any type of input or output data. However, the transformation program that actually references the program declares specific input and output types. So, in this example we will see a transformation program that can be used to transform a ResultSet into another one, using the XSLT_Transformer program. Of course, other transformation programs can use the same program in order to transform collections or records.

This is the XML definition of the example transformation program:

<?xml version="1.0" encoding="UTF-8"?>
<Resource>
  <ID>417a57f0-3c9a-11dd-8601-f86277958a2f</ID>
  <Type>GenericResource</Type>
  <Profile>
    <Name>TP_GXSLT_RS2RS</Name>
    <Description>This transformation program transforms a given ResultSet using a given XSLT, producing a new ResultSet.</Description>
    <Body>
      <TransformationProgram>
        <Input name="TPInput">
          <Schema isVariable="true"/>
          <Language isVariable="true"/>
          <Type>resultset</Type>
          <Reference isVariable="true"/>
        </Input>
        <Variable name="XSLT"/>
        <Output name="TPOutput">
          <Schema isVariable="true"/>
          <Language isVariable="true"/>
          <Type>resultset</Type>
        </Output>
        <TransformationRule>
          <Definition>
            <Transformer>org.gcube.metadatamanagement.metadatabrokerlibrary.programs.XSLT_Transformer.XSLT_Transformer</Transformer>
            <Input name="Rule1Input1">
              <Schema isVariable="true"> //Input[@name='TPInput']/Schema </Schema>
              <Language isVariable="true"> //Input[@name='TPInput']/Language </Language>
              <Type>resultset</Type>
              <Reference isVariable="true"> //Input[@name='TPInput']/Reference </Reference>
            </Input>
            <Input name="Rule1Input2">
              <Schema/>
              <Language/>
              <Type>variable</Type>
              <Reference isVariable="true"> //Variable[@name='XSLT'] </Reference>
            </Input>
            <Output name="TPRule1Output">
              <Reference>//Output[@name='TPOutput']</Reference>
            </Output>
          </Definition>
        </TransformationRule>
      </TransformationProgram>
    </Body>
  </Profile>
</Resource>

In this example, the transformation program defined above is stored in the IS as a generic resource with ID=417a57f0-3c9a-11dd-8601-f86277958a2f. The locator of the input ResultSet to be transformed is stored in a local file named input.xml, and the XSLT to be used is stored in the IS as a generic resource with UniqueID=ed358e00-23f2-11dc-a35f-9c01d805f283. The following code fragment reads the ResultSet locator from the file, creates a set of parameters that assign the input data, the input/output schemas and languages, and the XSLT ID to the respective variables of the transformation program, and then invokes the transform operation of the metadata broker service. The result is written to the console. The URI of the remote service and the ID of the transformation program are given as the first and second command-line arguments respectively.

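import java.io.BufferedReader;

import java.io.FileReader;

import java.io.IOException;

import org.apache.axis.message.addressing.Address;

import org.apache.axis.message.addressing.EndpointReferenceType;

import org.gcube.common.core.contexts.GCUBERemotePortTypeContext;

import org.gcube.common.core.scope.GCUBEScope;

// Transform, TransformationParameter, MetadataBrokerPortType and MetadataBrokerServiceAddressingLocator
// must also be imported from the Metadata Broker service stubs package.

public class Client {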

public static void main(String[] args) {

try {

Transform transParams = new Transform();

transParams.setTransformationProgramID(args[1]);

TransformationParameter[] params = new TransformationParameter[6];

params[0] = new TransformationParameter();

params[0].setPathToVariable("//Input[@name='TPInput']/Schema");

params[0].setValue("Schema1=URI1");

params[1] = new TransformationParameter();

params[1].setPathToVariable("//Input[@name='TPInput']/Language");

params[1].setValue("en");

params[2] = new TransformationParameter();

params[2].setPathToVariable("//Input[@name='TPInput']/Reference");

params[2].setValue(readTextFile("input.xml"));

params[3] = new TransformationParameter();

params[3].setPathToVariable("//Output[@name='TPOutput']/Schema");

params[3].setValue("Schema2=URI2");

params[4] = new TransformationParameter();

params[4].setPathToVariable("//Output[@name='TPOutput']/Language");

params[4].setValue("en");

params[5] = new TransformationParameter();

params[5].setPathToVariable("//Variable[@name='XSLT']");

params[5].setValue("ed358e00-23f2-11dc-a35f-9c01d805f283");

transParams.setParameters(params);

/* Get the metadata broker porttype */

EndpointReferenceType endpoint = new EndpointReferenceType();

endpoint.setAddress(new Address(args[0]));

MetadataBrokerPortType broker = new MetadataBrokerServiceAddressingLocator().getMetadataBrokerPortTypePort(endpoint);

broker = GCUBERemotePortTypeContext.getProxy(broker, GCUBEScope.getScope("/gcube/devsec"));

/* Invoke the metadata broker */

String output = broker.transform(transParams);

System.out.println(output);

} catch (Exception e) {

e.printStackTrace();

System.exit(-1);

}

}

private static String readTextFile(String filename) throws IOException {

BufferedReader br = new BufferedReader(new FileReader(filename));

StringBuffer buf = new StringBuffer();

String tmp;

while ((tmp = br.readLine()) != null) {

buf.append(tmp + "\n");

}

br.close();

return buf.toString();

}

}
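Each TransformationParameter above pairs an XPath expression (setPathToVariable) with a value (setValue). As a rough illustration of what such a binding amounts to, the sketch below applies path/value pairs to a transformation program document using the JDK's DOM and javax.xml.xpath APIs. It is an illustration under assumptions only: it does not reproduce how the Metadata Broker service actually resolves parameters, and the class and method names are hypothetical.

```java
import java.io.StringReader;
import java.util.LinkedHashMap;
import java.util.Map;
import javax.xml.parsers.DocumentBuilderFactory;
import javax.xml.xpath.XPath;
import javax.xml.xpath.XPathConstants;
import javax.xml.xpath.XPathFactory;
import org.w3c.dom.Document;
import org.w3c.dom.Node;
import org.xml.sax.InputSource;

public class ParameterBindingSketch {

    /** Sets the text of the node selected by each XPath; fails if a path selects nothing. */
    public static Document bind(String programXml, Map<String, String> params) throws Exception {
        Document doc = DocumentBuilderFactory.newInstance().newDocumentBuilder()
                .parse(new InputSource(new StringReader(programXml)));
        XPath xpath = XPathFactory.newInstance().newXPath();
        for (Map.Entry<String, String> p : params.entrySet()) {
            Node target = (Node) xpath.evaluate(p.getKey(), doc, XPathConstants.NODE);
            if (target == null) {
                throw new IllegalArgumentException("No node matches " + p.getKey());
            }
            target.setTextContent(p.getValue());
        }
        return doc;
    }

    /** Reads back the string value of the node selected by an XPath. */
    public static String valueAt(Document doc, String path) throws Exception {
        return XPathFactory.newInstance().newXPath().evaluate(path, doc);
    }

    public static void main(String[] args) throws Exception {
        String program = "<TransformationProgram><Input name=\"TPInput\"><Language isVariable=\"true\"/>"
                + "</Input><Variable name=\"XSLT\"/></TransformationProgram>";
        Map<String, String> params = new LinkedHashMap<>();
        params.put("//Input[@name='TPInput']/Language", "en");
        params.put("//Variable[@name='XSLT']", "ed358e00-23f2-11dc-a35f-9c01d805f283");
        Document bound = bind(program, params);
        System.out.println(valueAt(bound, "//Variable[@name='XSLT']"));
    }
}
```

Failing loudly on a path that selects nothing catches typos in parameter paths early, instead of silently leaving a variable unset.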

Using a transformation program within another transformation program

As stated before, whole transformation programs can be used as 'black-box' components inside another transformation program. This can be done by defining a transformation rule which describes the call to the second transformation program.

The transformation program that will be called from another transformation program in this example is defined below.

<TransformationProgram>

<Input name="TPInput">

<Schema>SCH1=http://schema1.xsd</Schema>

<Language>en</Language>

<Type>resultset</Type>

<Reference isVariable="true" />

</Input>

<Output name="TPOutput">

<Schema>SCH3=http://schema3.xsd</Schema>

<Language>en</Language>

<Type>resultset</Type>

</Output>

<TransformationRule>

<Definition>

<Transformer>org.gcube.program2</Transformer>

<Input name="Rule2Input">

<Schema isVariable="true">//Input[@name='TPInput']/Schema</Schema>

<Language isVariable="true">//Input[@name='TPInput']/Language</Language>

<Type>resultset</Type>

<Reference isVariable="true">//Input[@name='TPInput']/Reference</Reference>

</Input>

<Output name="Rule2Output">

<Reference>//Output[@name='TPOutput']</Reference>

</Output>

</Definition>

</TransformationRule>

</TransformationProgram>

The input and output schemas and languages are predefined inside this transformation program, so the only thing that should be specified at run-time is the actual input data reference. Let's say that this transformation program is stored in the IS and its ID is 910e0710-f251-11db-88f9-f971eaf0d653.

The transformation program that uses the above transformation program is defined below.

<TransformationProgram>

<Input name="TPInput">

<Schema>SCH1=http://schema1.xsd</Schema>

<Language>en</Language>

<Type>resultset</Type>

<Reference isVariable="true" />

</Input>

<Variable name="var1"/>

<Output name="TPOutput">

<Schema>SCH2=http://schema2.xsd</Schema>

<Language>en</Language>

<Type>resultset</Type>

</Output>

<TransformationRule>

<Reference>

<Program>910e0710-f251-11db-88f9-f971eaf0d653</Program>

<Value isVariable="true" target="//Input[@name='TPInput']/Reference">//Input[@name='TPInput']/Reference</Value>

<Output name="Rule1Output" />

</Reference>

</TransformationRule>

<TransformationRule>

<Definition>

<Transformer>org.gcube.program1</Transformer>

<Input name="Rule2Input1">

<Schema isVariable="true">//Output[@name='Rule1Output']/Definition/Schema</Schema>

<Language isVariable="true">//Output[@name='Rule1Output']/Definition/Language</Language>

<Type>resultset</Type>

<Reference isVariable="true">//Output[@name='Rule1Output']/Definition/Reference</Reference>

</Input>

<Input name="Rule2Input2">

<Schema />

<Language />

<Type>variable</Type>

<Reference isVariable="true"> //Variable[@name='var1'] </Reference>

</Input>

<Output name="Rule2Output">

<Reference>//Output[@name='TPOutput']</Reference>

</Output>

</Definition>

</TransformationRule>

</TransformationProgram>

The element that describes the call to the first transformation program is the first TransformationRule element. This element specifies the UniqueID of the transformation program to be called, as well as a mapping of values to the variable inputs of that program. Since the called program contains only one variable input (the input data reference), there is only one mapping in this example, described by a Value element. The target attribute of this element specifies the element of the called program whose value is to be set, and the element's content specifies the actual value. In this example, the content is not a literal value but a reference to another element of the calling transformation program, from which the value should be taken: the input of the called program is set to the input of the calling program. Since the content of the Value element is an XPath expression, its isVariable attribute is set to true, meaning that the content is interpreted as a reference to another element and not as a literal value. The output of the called transformation program becomes the output of the transformation rule that called it, and is named Rule1Output. This output is then used as the input of the next transformation rule, which applies org.gcube.program1 together with the value of the variable var1.

Finding a set of transformation programs given source and target metadata formats

This example demonstrates how one can get an array of transformation programs that could be used in order to transform metadata from a given source format to a given target format. The operation that can be used in order to accomplish this is 'findPossibleTransformationPrograms'. The caller must specify a source and target metadata format and the service searches for possible "chains" of existing transformation programs that could be used in order to carry out the transformation. There are three rules imposed by the Metadata Broker service:

- Only transformation programs with one data input are considered during the search

- Each transformation program can be used at most once inside each chain of transformation programs (this is needed in order to avoid infinite loops)

- A transformation program that produces a collection as its output can only be the last one inside a chain of transformation programs
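The three rules above amount to a depth-first search over the available programs. The sketch below illustrates that search under simplifying assumptions: each program is reduced to a hypothetical Program value with an input count and single input/output formats written as "type:language:schema" strings, formats must match exactly (the real service also unifies variable fields, as described further on), and a chain stops as soon as it reaches the target format. The synthesis of the linking transformation program is omitted.

```java
import java.util.ArrayList;
import java.util.LinkedHashSet;
import java.util.List;
import java.util.Set;

public class ChainSearchSketch {

    /** Hypothetical summary of a transformation program, as seen by the search. */
    public static final class Program {
        final String id;
        final int inputCount;
        final String inputFormat;   // "type:language:schema"
        final String outputFormat;

        public Program(String id, int inputCount, String inputFormat, String outputFormat) {
            this.id = id;
            this.inputCount = inputCount;
            this.inputFormat = inputFormat;
            this.outputFormat = outputFormat;
        }

        boolean producesCollection() {
            return outputFormat.startsWith("collection:");
        }
    }

    /** Returns every chain (as program ids) that transforms source into target. */
    public static List<List<String>> findChains(List<Program> programs, String source, String target) {
        List<List<String>> chains = new ArrayList<>();
        extend(programs, source, target, new LinkedHashSet<String>(), chains);
        return chains;
    }

    private static void extend(List<Program> programs, String current, String target,
                               Set<String> chain, List<List<String>> chains) {
        for (Program p : programs) {
            // Rule 1: single-input programs only. Rule 2: no program twice in one chain.
            if (p.inputCount != 1 || chain.contains(p.id) || !p.inputFormat.equals(current)) {
                continue;
            }
            chain.add(p.id);
            if (p.outputFormat.equals(target)) {
                chains.add(new ArrayList<>(chain)); // insertion order is the chain order
            } else if (!p.producesCollection()) {
                // Rule 3: a program producing a collection can only be the last link.
                extend(programs, p.outputFormat, target, chain, chains);
            }
            chain.remove(p.id); // backtrack
        }
    }
}
```

Rule 2 is what guarantees termination: every recursive call adds a program that is not yet in the chain, so no chain can be longer than the number of programs.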

Each chain composed by the Metadata Broker service is converted to a transformation program, which "links" the individual transformation programs forming the chain. This transformation program contains a transformation rule for each transformation program in the chain. Each transformation rule describes a call to the corresponding transformation program. The result of the operation is an array of strings, where each string corresponds to a synthesized transformation program.

It is possible that some of the transformation programs included in a chain contain some input variables. For each variable found, the Metadata Broker service adds a variable to the synthesized transformation program and maps it to the original one. This way one can specify the values of the variables contained in every transformation program involved in the chain, by specifying the values of the corresponding variables of the synthesized transformation program. This mechanism is necessary because the individual transformation programs contained in the chain are not visible to the caller. The only entity that the caller sees is the synthesized transformation program that is responsible for calling the ones it is built from.

Consider the case where a transformation program whose output language is a variable is added to a chain. When the service searches for another transformation program to append to the chain after that one, it may find a transformation program whose input language is 'en' (English). Then, the value 'en' will be assigned to the variable field describing the previous transformation program's output language. The same happens if an output field (schema or language) of a transformation program contains a specific value and the corresponding input field of the next transformation program is a variable. But what happens if the two fields are both variables? In this case, an input variable is added to the synthesized transformation program. When the caller uses this transformation program, he/she will need to specify a value for this variable. That value will then be assigned automatically both to the output field of the first transformation program and to the input field of the second transformation program.
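The linking cases described above can be summarized as a small decision procedure. In this hypothetical sketch a field (schema or language) is represented by a string, with null standing for a field declared with isVariable="true"; the naming of synthesized variables (field name plus a counter) is an illustrative assumption, not the service's convention.

```java
import java.util.ArrayList;
import java.util.List;

public class FieldLinkSketch {

    /** Result of linking an output field of one program to the input field of the next. */
    public static final class Link {
        public final String value;               // concrete value, or null if the caller supplies it
        public final String synthesizedVariable; // set only when both fields were variables

        Link(String value, String synthesizedVariable) {
            this.value = value;
            this.synthesizedVariable = synthesizedVariable;
        }
    }

    private final List<String> synthesizedVariables = new ArrayList<>();

    /**
     * Links two fields, where null means the field is a variable.
     * Returns null when both fields are fixed but differ, i.e. the programs cannot be chained.
     */
    public Link link(String fieldName, String previousOutput, String nextInput) {
        if (previousOutput != null && nextInput != null) {
            return previousOutput.equals(nextInput) ? new Link(previousOutput, null) : null;
        }
        if (previousOutput != null || nextInput != null) {
            // Exactly one side is fixed: its value is assigned to the variable side.
            return new Link(previousOutput != null ? previousOutput : nextInput, null);
        }
        // Both sides are variables: expose one new input variable on the synthesized program;
        // the value the caller gives it is assigned to both fields.
        String name = fieldName + "_" + (synthesizedVariables.size() + 1);
        synthesizedVariables.add(name);
        return new Link(null, name);
    }

    public List<String> synthesizedVariables() {
        return synthesizedVariables;
    }
}
```

For example, linking an output language declared as a variable to an input language of "en" yields the value "en", while linking two variable languages yields a new variable such as `language_1` that the caller must set.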

Now let's see how one can call the 'findPossibleTransformationPrograms' operation:

import org.apache.axis.message.addressing.Address;

import org.apache.axis.message.addressing.EndpointReferenceType;

import org.diligentproject.metadatamanagement.metadatabrokerlibrary.programs.TPIOType;

import org.diligentproject.metadatamanagement.metadatabrokerservice.stubs.FindPossibleTransformationProgramsResponse;

import org.diligentproject.metadatamanagement.metadatabrokerservice.stubs.MetadataBrokerPortType;

import org.diligentproject.metadatamanagement.metadatabrokerservice.stubs.FindPossibleTransformationPrograms;

import org.diligentproject.metadatamanagement.metadatabrokerservice.stubs.service.MetadataBrokerServiceAddressingLocator;

public class TestFindPossibleTPs {

public static void main(String[] args) {

try {

// Create endpoint reference to the service

EndpointReferenceType endpoint = new EndpointReferenceType();

endpoint.setAddress(new Address(args[0]));

MetadataBrokerPortType broker = new MetadataBrokerServiceAddressingLocator().getMetadataBrokerPortTypePort(endpoint);

// Create the IO format descriptors

TPIOType inFormat = TPIOType.fromParams(args[1], args[2], args[3], "");

TPIOType outFormat = TPIOType.fromParams(args[4], args[5], args[6], "");

// Prepare the invocation parameters

FindPossibleTransformationPrograms params = new FindPossibleTransformationPrograms();

params.setInputFormat(inFormat.toXMLString());

params.setOutputFormat(outFormat.toXMLString());

// Invoke the remote operation

FindPossibleTransformationProgramsResponse resp = broker.findPossibleTransformationPrograms(params);

String[] TPs = resp.getTransformationProgram();

for (String TP : TPs) {

System.out.println(TP);

System.out.println();

}

} catch (Exception e) {

e.printStackTrace();

}

}

}

This code fragment assumes the following:

- args[0] = the Metadata Broker service URI

- args[1] = the source format type ('resultset', 'collection' or 'record')

- args[2] = the source format language

- args[3] = the source format schema (in 'schemaName=schemaURI' format)

- args[4] = the target format type ('resultset', 'collection' or 'record')

- args[5] = the target format language

- args[6] = the target format schema (in 'schemaName=schemaURI' format)

First, an endpoint reference to the metadata broker service is created. Then, we have to create the source and target format descriptors. The remote operation accepts two strings describing the two metadata formats. These strings are nothing more than the serialized form of two TPIOType objects. The TPIOType class is the base class of the CollectionType, ResultSetType and RecordType classes. This class defines the static method fromParams, which creates and returns an object describing a metadata format based on given values for the format's schema, language, type and data reference. The returned object will be an instance of the correct class (derived from TPIOType), based on the given value for the 'type' attribute. Here, the 'reference' attribute is not used because we are interested in the metadata format itself and not in the data it describes. After constructing the two objects, we get their serialized form by calling the toXMLString() method on them. The returned strings are the ones that must be passed to the remote operation.
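The fromParams factory described above picks the concrete descriptor class from the 'type' value. The sketch below shows that pattern with hypothetical class names (Format, ResultSetFormat, CollectionFormat, RecordFormat) rather than the library's TPIOType hierarchy, and it leaves out the XML serialization performed by toXMLString(). Its parameter order (type, language, schema, reference) follows the call fromParams(args[1], args[2], args[3], "") in the example above.

```java
public class FormatDescriptorSketch {

    /** Common base class, playing the role TPIOType plays in the broker library. */
    public abstract static class Format {
        public final String language;
        public final String schema;
        public final String reference;

        Format(String language, String schema, String reference) {
            this.language = language;
            this.schema = schema;
            this.reference = reference;
        }

        public abstract String type();
    }

    public static final class ResultSetFormat extends Format {
        ResultSetFormat(String l, String s, String r) { super(l, s, r); }
        @Override public String type() { return "resultset"; }
    }

    public static final class CollectionFormat extends Format {
        CollectionFormat(String l, String s, String r) { super(l, s, r); }
        @Override public String type() { return "collection"; }
    }

    public static final class RecordFormat extends Format {
        RecordFormat(String l, String s, String r) { super(l, s, r); }
        @Override public String type() { return "record"; }
    }

    /** Returns an instance of the subclass that corresponds to the given type. */
    public static Format fromParams(String type, String language, String schema, String reference) {
        switch (type) {
            case "resultset":
                return new ResultSetFormat(language, schema, reference);
            case "collection":
                return new CollectionFormat(language, schema, reference);
            case "record":
                return new RecordFormat(language, schema, reference);
            default:
                throw new IllegalArgumentException("Unknown format type: " + type);
        }
    }
}
```

Callers only depend on the base class, so adding a new data type means adding one subclass and one `case`.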

Next, we invoke the remote operation and then we just print the returned transformation programs.

gCube Data Transformation Service

Introduction

The gCube Data Transformation Service (gDTS) is responsible for transforming content and metadata among different formats and specifications. gDTS lies on top of the Content and Metadata Management services: it interoperates with these components in order to retrieve information objects and store the transformed ones. Transformations can be performed offline or on demand, on a single object or on a group of objects.

gDTS employs a variety of pluggable converters in order to transform digital objects between arbitrary content types, and takes advantage of extended information on the content types to achieve the selection of the appropriate conversion elements.

As already mentioned, the main functionality of the gCube Data Transformation Service is to convert digital objects from one content format to another. The conversions will be performed by transformation programs which either have been previously defined and stored or are composed on-the-fly during the transformation process. Every transformation program (except for those which are composed on-the-fly) is stored in the IS.

The gCube Data Transformation Service is expected to benefit many aspects of gCube. The presentation layer benefits from the production of alternative representations of multimedia documents: generating thumbnails, transforming objects to the specific formats required by some presentation applications, and projecting multimedia files with variable quality/bitrate are just some examples of useful transformations over multimedia documents. In addition, as a conversion tool for textual documents, it enables online projection of documents in HTML format, as well as other downloadable formats such as PDF or PS. The annotation UI can be implemented more straightforwardly on selected logical groups of content types (e.g. images), without dealing with the details of the content and the support offered by browsers. Finally, by utilizing the functionality of the Metadata Broker, metadata with varying schemas can be homogenized.

Concepts

Content Type

In gDTS the content type identification and notation conforms to the MIME type specification described in RFC 2045 and RFC 2046. This provides compliance with mainstream applications such as browsers, mail clients, etc. In this context, a document’s content type is defined by the media type, the subtype identifier plus a set of parameters, specified in an “attribute=value” notation. This extra information is exploited by data converters capable of interpreting it.
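To make the notation concrete, the sketch below parses a content type written in the RFC 2045 header form, for example `image/png; width=500; height=500`, into its media type, subtype and parameters. It is a simplified illustration (it does not handle `;` inside quoted values or RFC 2231 continuations) and is not the gDTS ContentType class.

```java
import java.util.LinkedHashMap;
import java.util.Locale;
import java.util.Map;

public class ContentTypeSketch {

    public final String type;
    public final String subtype;
    public final Map<String, String> parameters = new LinkedHashMap<>();

    private ContentTypeSketch(String type, String subtype) {
        this.type = type;
        this.subtype = subtype;
    }

    /** Parses "type/subtype; attribute=value; ..." (';' inside quoted values is not supported). */
    public static ContentTypeSketch parse(String text) {
        String[] parts = text.split(";");
        String[] media = parts[0].trim().split("/", 2);
        if (media.length != 2 || media[0].trim().isEmpty() || media[1].trim().isEmpty()) {
            throw new IllegalArgumentException("Not a media type: " + parts[0]);
        }
        // RFC 2045: type, subtype and parameter names are case-insensitive; values may be quoted.
        ContentTypeSketch ct = new ContentTypeSketch(
                media[0].trim().toLowerCase(Locale.ROOT), media[1].trim().toLowerCase(Locale.ROOT));
        for (int i = 1; i < parts.length; i++) {
            String param = parts[i].trim();
            int eq = param.indexOf('=');
            if (eq <= 0) {
                continue; // skip empty or malformed parameters
            }
            String name = param.substring(0, eq).trim().toLowerCase(Locale.ROOT);
            String value = param.substring(eq + 1).trim();
            if (value.length() >= 2 && value.startsWith("\"") && value.endsWith("\"")) {
                value = value.substring(1, value.length() - 1);
            }
            ct.parameters.put(name, value);
        }
        return ct;
    }
}
```

For instance, `ContentTypeSketch.parse("text/xml; schema=dc; language=en")` yields type `text`, subtype `xml` and the parameters `schema=dc` and `language=en`.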

Some examples of content formats of information objects are:

- Mimetype="image/png", width="500", height="500" denotes that the object's format is the well-known Portable Network Graphics and that the image's width and height are 500 pixels.

- Mimetype="text/xml", schema="dc", language="en" denotes that the object is an XML document with Dublin Core schema and English language.

Transformation Units

A transformation unit describes the way a program can be used in order to perform a transformation from one or more source content types to a target content type. The transformation unit determines the behaviour of each program by providing proper program parameters, potentially drawn from the content type representation. Program parameters may contain string literals and/or ranges (via wildcards) in order to denote source content types. In addition, the wildcard ‘-’ can be used to force the presence of a program parameter in the content types set by the caller that uses this specific transformation unit. Furthermore, transformation units may reference other transformation units and use them as “black-box” components in a transformation process. Since transformation units are contained in transformation programs, each transformation unit is identified by the pair (transformation program id, transformation unit id).
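The parameter-matching rules above can be sketched as follows. Apart from ‘-’, the wildcard syntax is not spelled out in this description, so the sketch is hypothetical: it assumes that a parameter value of `*` (and a mime type pattern such as `image/*`) matches any value, that `-` requires the parameter to be present with any value, and that every other value must match literally.

```java
import java.util.Locale;
import java.util.Map;

public class UnitMatchSketch {

    // Assumed mime wildcards: "*/*" matches everything, "image/*" matches any image subtype.
    static boolean mimeMatches(String pattern, String mime) {
        String p = pattern.toLowerCase(Locale.ROOT);
        String m = mime.toLowerCase(Locale.ROOT);
        if (p.equals("*/*")) {
            return true;
        }
        if (p.endsWith("/*")) {
            return m.startsWith(p.substring(0, p.length() - 1)); // keep the trailing '/'
        }
        return p.equals(m);
    }

    // Assumed parameter wildcards: "*" accepts any value or absence, "-" requires presence,
    // anything else must match literally.
    public static boolean matches(String unitMime, Map<String, String> unitParams,
                                  String mime, Map<String, String> params) {
        if (!mimeMatches(unitMime, mime)) {
            return false;
        }
        for (Map.Entry<String, String> declared : unitParams.entrySet()) {
            String expected = declared.getValue();
            String actual = params.get(declared.getKey());
            if (expected.equals("*")) {
                continue;
            }
            if (expected.equals("-")) {
                if (actual == null) {
                    return false;
                }
                continue;
            }
            if (!expected.equals(actual)) {
                return false;
            }
        }
        return true;
    }
}
```

Under these assumptions, a unit declaring `width="-"` accepts `image/png; width=500` but rejects a plain `image/png` in which the caller did not set a width.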

Transformations' Graph

Through transformation units, new content types and program capabilities are published to gDTS. The published information is stored in a graph called the transformations graph, whose nodes and edges correspond to content types and transformation units respectively. Using this graph, gDTS can find a path of transformation units that transforms an object from its content type (source) to a target content type.
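Finding such a path is a graph search. The sketch below uses breadth-first search, so it returns a path with the fewest transformation units; this is an illustrative choice, not necessarily the search strategy gDTS uses, and it models content types as plain strings without parameters or wildcards.

```java
import java.util.ArrayDeque;
import java.util.ArrayList;
import java.util.Collections;
import java.util.Deque;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.Optional;

public class TransformationsGraphSketch {

    /** Edge of the graph: a transformation unit from one content type to another. */
    public static final class Unit {
        public final String id;
        public final String source;
        public final String target;

        public Unit(String id, String source, String target) {
            this.id = id;
            this.source = source;
            this.target = target;
        }
    }

    private final Map<String, List<Unit>> outgoing = new HashMap<>();

    /** Publishing a unit adds an edge (and, implicitly, its two content-type nodes). */
    public void publish(Unit unit) {
        outgoing.computeIfAbsent(unit.source, k -> new ArrayList<>()).add(unit);
    }

    /** Breadth-first search; returns the unit ids of a shortest path, or empty if none exists. */
    public Optional<List<String>> findPath(String source, String target) {
        Map<String, Unit> reachedBy = new HashMap<>(); // content type -> unit that first reached it
        Deque<String> queue = new ArrayDeque<>();
        queue.add(source);
        reachedBy.put(source, null);
        while (!queue.isEmpty()) {
            String current = queue.poll();
            if (current.equals(target)) {
                // Walk back from the target to the source, then reverse.
                List<String> path = new ArrayList<>();
                for (Unit u = reachedBy.get(current); u != null; u = reachedBy.get(u.source)) {
                    path.add(u.id);
                }
                Collections.reverse(path);
                return Optional.of(path);
            }
            for (Unit u : outgoing.getOrDefault(current, Collections.<Unit>emptyList())) {
                if (!reachedBy.containsKey(u.target)) {
                    reachedBy.put(u.target, u);
                    queue.add(u.target);
                }
            }
        }
        return Optional.empty();
    }
}
```

With units `image/tiff -> image/jpeg` and `image/jpeg -> image/png` published, a request from `image/tiff` to `image/png` returns both units in order; publishing a direct `image/tiff -> image/png` unit makes the search prefer that single unit.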

Transformation Programs

A transformation program is an XML document describing one or more possible transformations from a source content type to a target content type. Each transformation program references at most one program, and it contains one or more transformation units for each possible transformation.

Implementation Overview

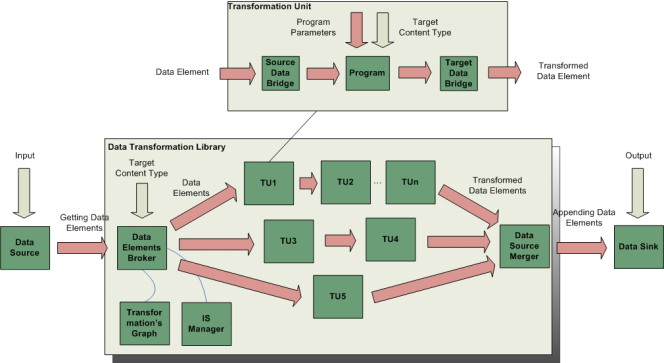

The gCube Data Transformation Service is primarily composed of: the Data Transformation Service component, which implements the WS-Interface of the Service; the Data Transformation Library, which carries out the basic functionality of the Service, i.e. the selection of the appropriate conversion element and the execution of the transformation over the information objects; a set of data handlers, which are responsible for fetching the information objects and returning or storing them; and finally the conversion elements (“Programs”) that perform the conversions.

Data Transformation Service

The Data Transformation Service component implements the WS-Interface of the Service and is the entry point to the functionality that the Data Transformation Library provides. Its main tasks are to check the parameters of the invocation, instantiate the data handlers, invoke the appropriate method of the Data Transformation Library and inform clients of any faults.

A running instance (RI) of the Data Transformation Service operates over multiple scopes by keeping the necessary information for each scope independently.

Data Transformation Library

The Data Transformation Library implements the core functionality of gDTS. It contains various packages, each responsible for a different feature of gDTS. Its basic class is DTSCore, which orchestrates the rest of the components. A DTSCore instance contains a transformations graph, which determines the transformation to be performed (if the transformation program / unit is not explicitly set), as well as an information manager (IManager), which fetches information about the transformation programs. The IManager implementation currently used by gDTS is the ISManager, which fetches, publishes and queries transformation programs from the IS.

The following diagram depicts the operations applied by Data Transformation Library on data elements when a request for a transformation is made and a transformation unit has not been explicitly set.

For each data element, the Data Elements Broker reads its content type and, using the transformations graph, determines the Transformation Unit that is going to perform the transformation. The required Data Bridge and Program instances are then created, and the data element is appended to the source Data Bridge of that Transformation Unit. Every subsequent object with the same content type is appended to the same Data Bridge. When an object with a different content type arrives from the Data Source, the Data Elements Broker consults the Transformations Graph again and a new Transformation Unit is instantiated. If the graph cannot find any applicable transformation unit for a data element, that element is ignored, together with all other elements of the same content type.
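The routing behaviour just described can be sketched as follows. The names are hypothetical, and the transformations-graph lookup is passed in as a function that returns a unit id, or null when no unit applies. The lookup runs only once per content type, and unresolvable types are remembered so that their later elements are skipped without another lookup.

```java
import java.util.ArrayList;
import java.util.HashSet;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;
import java.util.Set;
import java.util.function.Function;

public class ElementRoutingSketch {

    public static final class Element {
        public final String id;
        public final String contentType;

        public Element(String id, String contentType) {
            this.id = id;
            this.contentType = contentType;
        }
    }

    /** A source bridge feeding one transformation unit. */
    public static final class Bridge {
        public final String unitId;
        public final List<String> elementIds = new ArrayList<>();

        Bridge(String unitId) {
            this.unitId = unitId;
        }
    }

    /**
     * Routes elements into one bridge per content type. findUnit stands in for the
     * transformations-graph lookup and returns null when no unit is applicable.
     */
    public static Map<String, Bridge> route(List<Element> elements, Function<String, String> findUnit) {
        Map<String, Bridge> bridges = new LinkedHashMap<>();
        Set<String> unresolvable = new HashSet<>();
        for (Element e : elements) {
            if (unresolvable.contains(e.contentType)) {
                continue; // already known to have no applicable unit
            }
            Bridge bridge = bridges.get(e.contentType);
            if (bridge == null) {
                String unitId = findUnit.apply(e.contentType); // first element of this type
                if (unitId == null) {
                    unresolvable.add(e.contentType);
                    continue;
                }
                bridge = new Bridge(unitId);
                bridges.put(e.contentType, bridge);
            }
            bridge.elementIds.add(e.id);
        }
        return bridges;
    }
}
```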

Within each Transformation Unit, the data elements are transformed one by one by the program, and the results are appended to the target data bridge of the transformation unit. These objects are then available to the Data Source Merger, which reads objects in parallel from all the target data bridges and appends them to the Data Sink.

Apart from the core functionality, the Data Transformation Library also contains the interfaces of the Programs and Data Handlers, which must be implemented by every program or data handler implementation. Finally, the report and query packages contain classes for the reporting and querying functionality respectively.

Data Transformation Handlers

The gDTS has to perform some procedures in order to fetch and store content. These procedures are completely independent of the basic functionality of the gDTS, which is to transform one or more objects into different content formats, and must not affect it in any way. Therefore, whenever the gDTS is invoked, the caller-supplied data is automatically wrapped in a data source object. Similarly, the output of the transformation is wrapped in a data sink object. The invoked Java program can then use the source and sink objects to read each source object sequentially and write its transformed counterpart to the destination. Thanks to the abstraction provided by data sources and data sinks, data objects are processed homogeneously, regardless of the nature of the original source and destination.
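A minimal sketch of this abstraction, assuming hypothetical DataSource and DataSink interfaces with string payloads (the real gDTS interfaces are richer): the run method depends only on the two interfaces, so the same program code works whatever the actual origin and destination of the data are.

```java
import java.util.ArrayList;
import java.util.Iterator;
import java.util.List;
import java.util.function.UnaryOperator;

public class SourceSinkSketch {

    /** Hypothetical minimal data source: yields payloads one at a time. */
    public interface DataSource {
        boolean hasNext();
        String next();
    }

    /** Hypothetical minimal data sink. */
    public interface DataSink {
        void writeNext(String payload);
        void finishedWriting();
    }

    /** Adapts an in-memory list; a real handler would read a collection, a result set or an FTP server. */
    public static DataSource fromList(List<String> items) {
        final Iterator<String> it = items.iterator();
        return new DataSource() {
            @Override public boolean hasNext() { return it.hasNext(); }
            @Override public String next() { return it.next(); }
        };
    }

    /** Collects written payloads in memory. */
    public static final class ListSink implements DataSink {
        public final List<String> written = new ArrayList<>();
        public boolean finished;

        @Override public void writeNext(String payload) { written.add(payload); }
        @Override public void finishedWriting() { finished = true; }
    }

    /** The "program" sees only the two interfaces, never the concrete origin or destination. */
    public static void run(DataSource source, DataSink sink, UnaryOperator<String> program) {
        while (source.hasNext()) {
            sink.writeNext(program.apply(source.next()));
        }
        sink.finishedWriting();
    }
}
```

Swapping `fromList` for another `DataSource` implementation requires no change to `run`, which is the point of wrapping caller data in sources and sinks.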

Clients select a data handler by giving its name as the input/output type parameter of each gDTS transform method. The service then dynamically loads the Java class of the data handler that corresponds to this type.
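Dynamic loading of this kind can be done with standard Java reflection. In the sketch below, the registry that maps type names to class names, the DataHandler interface and the two handler classes are hypothetical stand-ins; in a real deployment the registry would hold fully qualified names of handler classes available on the classpath.

```java
import java.util.HashMap;
import java.util.Map;

public class HandlerLoaderSketch {

    /** Hypothetical supertype that every handler implements. */
    public interface DataHandler {
        String describe();
    }

    public static class CollectionDataSource implements DataHandler {
        @Override public String describe() { return "reads a content collection"; }
    }

    public static class RSBlobDataSource implements DataHandler {
        @Override public String describe() { return "reads a result set of blobs"; }
    }

    // Maps the type name sent by a client to a class name. Real entries would be fully
    // qualified names of handler classes on the classpath.
    private static final Map<String, String> REGISTRY = new HashMap<>();

    static {
        REGISTRY.put("Collection", HandlerLoaderSketch.class.getName() + "$CollectionDataSource");
        REGISTRY.put("RSBlob", HandlerLoaderSketch.class.getName() + "$RSBlobDataSource");
    }

    /** Loads and instantiates the handler class registered for the given type name. */
    public static DataHandler load(String typeName) throws ReflectiveOperationException {
        String className = REGISTRY.get(typeName);
        if (className == null) {
            throw new IllegalArgumentException("Unknown data handler type: " + typeName);
        }
        // asSubclass fails with ClassCastException if the class is not a DataHandler.
        Class<? extends DataHandler> handlerClass = Class.forName(className).asSubclass(DataHandler.class);
        return handlerClass.getDeclaredConstructor().newInstance();
    }
}
```

Checking the loaded class with `asSubclass` before instantiating it turns a misconfigured registry entry into an immediate, descriptive error.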

The available Data Handlers are:

Data Sources

| Data Source Name | Input Name | Input Value | Input Parameters | Description |
|---|---|---|---|---|
| CObjectDataSource | CObject | content object id | handleParts = "true"/"false", handleAlternativeRepresentations = "true"/"false" | Fetches one content object. |
| CollectionDataSource | Collection | content collection id | handleParts = "true"/"false", handleAlternativeRepresentations = "true"/"false", getElementsRS = "rsEPR" | Fetches all the digital objects that belong to a content collection. If the getElementsRS parameter is set, only the objects whose ids are contained in the ResultElementGeneric.RECORD_ID_NAME attribute of the elements of rsEPR are fetched. |
| MCollectionDataSource | MCollection | metadata collection id | getElementsRS = "rsEPR" | Fetches all the metadata objects of a metadata collection. If the getElementsRS parameter is set, only specific metadata objects of the collection are fetched; each is identified by its metadata object id, set in a result element attribute named ResultElementGeneric.RECORD_ID_NAME. |
| RSBlobDataSource | RSBlob | result set locator | NA | Reads the content of a result set with blob elements. |
| FTPDataSource | FTP | host name | username, password, directory, port | Downloads content from an FTP server. |
| URIListDataSource | URIList | url | NA | Fetches content from the URLs listed in a file whose location is given as the input value. |

Data Sinks

| Data Sink Name | Output Name | Output Value | Output Parameters | Description |
|---|---|---|---|---|
| CollectionDataSink | Collection | content collection id (optional) | "CollectionName", "isUserCollection", "isVirtual" (collection creation parameters) | Stores the result of the transformation in a content collection. If the output value is not set, a new collection is created. |
| MCollectionDataSink | MCollection | NA | Metadata collection creation parameters | Stores the result of the transformation in a metadata collection. |
| AlterDataSink | Alternative | NA | "isWeakRepresentation", "rank", "representationID", "representationRole" | Stores transformed objects in CMS as alternative representations. |
| RSBlobDataSink | RSBlob | NA | NA | Puts data into a result set with blob elements. |
| RSXMLDataSink | RSXML | NA | NA | Puts (XML) data into a result set with XML elements. |
| FTPDataSink | FTP | host name | username, password, port, directory | Stores objects on an FTP server. |

Data Bridges

| Data Bridge Name | Parameters | Description |
|---|---|---|
| RSBlobDataBridge | NA | Used as a buffer of data elements; uses a result set (RS) to keep objects on disk. |
| REFDataBridge | flowControled = "true"/"false", limit | Keeps references to data elements. If flow control is enabled, at most limit data elements can be held in the bridge at any time. |
| FilterDataBridge | NA | Filters the contents of a Data Source by content format. |
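
The flow control offered by REFDataBridge resembles a bounded producer/consumer buffer. The sketch below approximates it with java.util.concurrent blocking queues: with flow control enabled, append blocks once `limit` elements are buffered. The class, its end-of-data marker and the single-consumer restriction are assumptions made for illustration, not the library's implementation.

```java
import java.util.concurrent.ArrayBlockingQueue;
import java.util.concurrent.BlockingQueue;
import java.util.concurrent.LinkedBlockingQueue;

public class RefBridgeSketch<T> {

    private static final Object END = new Object(); // end-of-data marker
    private final BlockingQueue<Object> queue;
    private boolean drained;                         // single consumer assumed

    public RefBridgeSketch(boolean flowControlled, int limit) {
        if (flowControlled) {
            this.queue = new ArrayBlockingQueue<>(limit); // append blocks when full
        } else {
            this.queue = new LinkedBlockingQueue<>();     // unbounded
        }
    }

    /** Appends a reference, waiting for space if flow control is enabled. */
    public void append(T element) throws InterruptedException {
        queue.put(element);
    }

    /** Non-blocking variant: returns false instead of waiting when the bridge is full. */
    public boolean tryAppend(T element) {
        return queue.offer(element);
    }

    /** Signals that no more elements will be appended. */
    public void close() throws InterruptedException {
        queue.put(END);
    }

    /** Returns the next element, or null once the bridge is closed and drained. */
    @SuppressWarnings("unchecked")
    public T take() throws InterruptedException {
        if (drained) {
            return null;
        }
        Object next = queue.take();
        if (next == END) {
            drained = true;
            return null;
        }
        return (T) next;
    }

    public int size() {
        return queue.size();
    }
}
```

Bounding the buffer keeps a fast producer (for example a data source) from holding an unbounded number of references while a slow program is still transforming earlier elements.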

Data Transformation Programs

The transformations that the gDTS can use reside outside the service, as separate Java classes called Programs (not to be confused with ‘Transformation Programs’). Each program is an independent, self-describing entity that encapsulates the logic of the transformation process it performs. The gDTS loads the required programs dynamically as execution proceeds and supplies them with the input data to be transformed. Since loading is done at run time, extending the transformation capabilities of gDTS by adding programs is straightforward: the new program has to be written as a Java class and placed on the classpath, so that it can be located when required.

The gDTS provides helper functionality to simplify the creation of new programs. This functionality is exposed to the program author through a set of abstract java classes, which are included in the gCube Data Transformation Library.
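As an illustration of how such a helper class can simplify program authoring, the hypothetical base class below owns the per-element loop and the error handling, so a concrete program only overrides transformElement. It is a sketch of the template-method idea, not one of the actual abstract classes of the Data Transformation Library.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Locale;

public class ProgramBaseSketch {

    /**
     * Hypothetical helper base class: it owns the read-transform-write loop and the
     * per-element error handling; subclasses implement only transformElement().
     */
    public abstract static class ElementProgram {
        private int failures;

        protected abstract String transformElement(String payload) throws Exception;

        public final List<String> run(List<String> source) {
            List<String> output = new ArrayList<>();
            for (String payload : source) {
                try {
                    output.add(transformElement(payload));
                } catch (Exception e) {
                    failures++; // skip the element and continue with the next one
                }
            }
            return output;
        }

        public int failures() {
            return failures;
        }
    }

    /** Example program: rejects empty payloads and upper-cases the rest. */
    public static final class UpperCaseProgram extends ElementProgram {
        @Override
        protected String transformElement(String payload) {
            if (payload.isEmpty()) {
                throw new IllegalArgumentException("empty payload");
            }
            return payload.toUpperCase(Locale.ROOT);
        }
    }
}
```

Making `run` final keeps the loop and error policy identical across programs, while each program author writes only the element-level conversion.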

The available Program implementations are:

| Name | Description |
|---|---|
| DocToTextTransformer | Extracts plain text from MS Word documents |
| ExcelToTextTransformer | Extracts plain text from MS Excel documents |
| FtsRowset_Transformer | Creates full text rowsets from XML documents |
| FwRowset_Transformer | Creates forward rowsets from XML documents |
| GeoRowset_Transformer | Creates geo rowsets from XML documents |
| ImageMagickWrapperTP | Currently able to convert images to any image type, create thumbnails and watermark images. Any other operation of the ImageMagick library can be incorporated |
| PDFToJPEGTransformer | Creates JPEG images from a page of a PDF document |
| PDFToTextHTMLTransformer | Converts a PDF document to HTML or text |
| PPTToTextTransformer | Extracts plain text from PowerPoint documents |
| TextToFtsRowset_Transformer | Creates full text rowsets from plain text |
| XSLT_Transformer | Applies an XSLT to an XML document |
| Zipper | Zips single or multi-part files |

Dependencies

- Data Transformation Service
  - jdk 1.5
  - gCore
  - Data Transformation Library
- Data Transformation Library
  - jdk 1.5
- Data Transformation Handlers
  - jdk 1.5
  - gCore
  - Data Transformation Library
  - Metadata Manager stubs
  - Metadata Manager library
  - Content Management Service stubs
  - Collection Management Service stubs
  - Content Management library
  - Apache Commons NET library
- Data Transformation Programs
  - jdk 1.5
  - gCore
  - Data Transformation Library
  - Apache POI library
  - Apache PDFBox library
  - ImageMagick software
  - MEncoder software

Usage Examples

Creating full text rowsets from content collection

The first example demonstrates how to create full text rowsets from a content collection. In the input field of the request, the input type is set to Collection (the input type of the content collection data source) and the input value is set to the content collection id (see Data Sources). The output field specifies that the result of the transformation will be appended to a result set with XML elements, which is created by the data sink and returned in the response (see Data Sinks). Finally, the DTS operation used in this example, transformData, identifies by itself the appropriate transformation units for transforming the input data to the target content type text/xml, schemaURI="http://ftrowset.xsd", which is specified in the respective request parameter.

import org.apache.axis.message.addressing.AttributedURI;
import org.apache.axis.message.addressing.EndpointReferenceType;
import org.gcube.common.core.contexts.GCUBERemotePortTypeContext;
import org.gcube.common.core.scope.GCUBEScope;
import org.gcube.datatransformation.datatransformationservice.stubs.ContentType;
import org.gcube.datatransformation.datatransformationservice.stubs.DataTransformationServicePortType;
import org.gcube.datatransformation.datatransformationservice.stubs.Input;
import org.gcube.datatransformation.datatransformationservice.stubs.Output;
import org.gcube.datatransformation.datatransformationservice.stubs.Parameter;
import org.gcube.datatransformation.datatransformationservice.stubs.TransformData;
import org.gcube.datatransformation.datatransformationservice.stubs.service.DataTransformationServiceAddressingLocator;

public class DTSClient_CreateFTRowsetFromContent {

    public static void main(String[] args) throws Exception {
        String dtsEndpoint = args[0];
        String scope = args[1];
        String inputCollectionID = args[2];

        EndpointReferenceType endpoint = new EndpointReferenceType();
        endpoint.setAddress(new AttributedURI(dtsEndpoint));
        DataTransformationServicePortType dts = new DataTransformationServiceAddressingLocator().getDataTransformationServicePortTypePort(endpoint);
        dts = GCUBERemotePortTypeContext.getProxy(dts, GCUBEScope.getScope(scope));

        /* INPUT */
        TransformData request = new TransformData();
        Input input = new Input();
        input.setInputType("Collection");
        input.setInputValue(inputCollectionID);
        request.setInput(input);

        /* OUTPUT */
        Output output = new Output();
        output.setOutputType("RSXML");
        request.setOutput(output);

        /* TARGET CONTENT TYPE */
        ContentType targetContentType = new ContentType();
        targetContentType.setMimeType("text/xml");
        Parameter contentTypeParameter = new Parameter();
        contentTypeParameter.setName("schemaURI");
        contentTypeParameter.setValue("http://ftrowset.xsd");
        Parameter[] contentTypeParameters = {contentTypeParameter};
        targetContentType.setParameters(contentTypeParameters);
        request.setTargetContentType(targetContentType);

        String rs = dts.transformData(request).getOutput();
        System.out.println("The epr of the result set with the full text rowset is: \n" + rs);
    }
}
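The automatic selection performed by transformData can be pictured as a path search over transformation units. The following is a minimal, self-contained sketch under stated assumptions, not the DTS implementation: `SimpleContentType`, `UnitSignature` and `ChainFinder` are hypothetical stand-ins (not the stubs classes), two content types are assumed to match only when their MIME type and all parameters are equal, and the shortest chain is assumed to be preferred. Records require Java 16 or later.

```java
import java.util.*;

// Hypothetical content type: a MIME type plus parameters such as schemaURI.
// Not the DTS stubs ContentType; it only mirrors its two fields for illustration.
record SimpleContentType(String mimeType, Map<String, String> parameters) {
    static SimpleContentType of(String mimeType, String... keyValues) {
        Map<String, String> params = new TreeMap<>();
        for (int i = 0; i + 1 < keyValues.length; i += 2) {
            params.put(keyValues[i], keyValues[i + 1]);
        }
        return new SimpleContentType(mimeType, params);
    }
}

// Hypothetical transformation unit: a (program, unit) pair converting one content type into another.
record UnitSignature(String programId, String unitId, SimpleContentType source, SimpleContentType target) {}

class ChainFinder {
    // Breadth-first search over content types. Returns the shortest sequence of units leading
    // from source to target; the list is empty if source already equals target or no chain exists.
    static List<UnitSignature> findChain(List<UnitSignature> units,
                                         SimpleContentType source,
                                         SimpleContentType target) {
        Map<SimpleContentType, UnitSignature> cameFrom = new HashMap<>();
        Set<SimpleContentType> visited = new HashSet<>(List.of(source));
        Deque<SimpleContentType> queue = new ArrayDeque<>(List.of(source));
        while (!queue.isEmpty()) {
            SimpleContentType current = queue.poll();
            if (current.equals(target)) {
                // Walk back from the target to the source to rebuild the chain in order.
                LinkedList<UnitSignature> chain = new LinkedList<>();
                SimpleContentType step = target;
                while (!step.equals(source)) {
                    UnitSignature unit = cameFrom.get(step);
                    chain.addFirst(unit);
                    step = unit.source();
                }
                return chain;
            }
            for (UnitSignature unit : units) {
                if (unit.source().equals(current) && visited.add(unit.target())) {
                    cameFrom.put(unit.target(), unit);
                    queue.add(unit.target());
                }
            }
        }
        return List.of();
    }
}
```

For instance, given two hypothetical units, application/pdf to text/plain and text/plain to text/xml with schemaURI="http://ftrowset.xsd", `findChain` returns both units in that order. Breadth-first search is used here only because it yields the chain with the fewest steps; the actual selection criteria of DTS may differ.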

Creating geo rowsets from a metadata collection

This example demonstrates how to create geo rowsets from a metadata collection. This requires a composite transformation unit, identified by the (transformation program / transformation unit) pair "$XSLT_GeoRowset_Composite_Transformer / 0". This unit invokes the transformation units "XSLT_Transformer / 0" and "GeoRowset_Transformer / 0" (see Data Transformation Programs). The former requires as program parameter an XSLT that transforms the input metadata collection into a common coordinate schema, while the latter creates the geo rowsets from the common schema and requires two program parameters (geoxslt and indexType). Since the transformation unit is given explicitly, the example calls transformDataWithTransformationUnit instead of transformData.

import org.apache.axis.message.addressing.AttributedURI;
import org.apache.axis.message.addressing.EndpointReferenceType;
import org.gcube.common.core.contexts.GCUBERemotePortTypeContext;
import org.gcube.common.core.scope.GCUBEScope;
import org.gcube.datatransformation.datatransformationservice.stubs.ContentType;
import org.gcube.datatransformation.datatransformationservice.stubs.DataTransformationServicePortType;
import org.gcube.datatransformation.datatransformationservice.stubs.Input;
import org.gcube.datatransformation.datatransformationservice.stubs.Output;
import org.gcube.datatransformation.datatransformationservice.stubs.Parameter;
import org.gcube.datatransformation.datatransformationservice.stubs.TransformDataWithTransformationUnit;
import org.gcube.datatransformation.datatransformationservice.stubs.service.DataTransformationServiceAddressingLocator;

public class DTSClient_CreateGeoRowsetFromSourceColl {

    public static void main(String[] args) throws Exception {
        String dtsEndpoint = args[0];
        String scope = args[1];
        String inputMCollectionID = args[2];
        String xslt = args[3];
        String geoxslt = args[4];
        String indexType = args[5];

        String transformationProgramID = "$XSLT_GeoRowset_Composite_Transformer";
        String transformationUnitID = "0";

        EndpointReferenceType endpoint = new EndpointReferenceType();
        endpoint.setAddress(new AttributedURI(dtsEndpoint));
        DataTransformationServicePortType dts = new DataTransformationServiceAddressingLocator().getDataTransformationServicePortTypePort(endpoint);
        dts = GCUBERemotePortTypeContext.getProxy(dts, GCUBEScope.getScope(scope));

        TransformDataWithTransformationUnit request = new TransformDataWithTransformationUnit();

        /* INPUT */
        Input input = new Input();
        input.setInputType("MCollection");
        input.setInputValue(inputMCollectionID);
        Input[] inputs = {input};
        request.setInputs(inputs);

        /* OUTPUT */
        Output output = new Output();
        output.setOutputType("RSXML");
        request.setOutput(output);

        /* TARGET CONTENT TYPE */
        ContentType targetContentType = new ContentType();
        targetContentType.setMimeType("text/xml");
        Parameter contentTypeParameter = new Parameter();
        contentTypeParameter.setName("schemaURI");
        contentTypeParameter.setValue("http://georowset.xsd");
        Parameter[] contentTypeParameters = {contentTypeParameter};
        targetContentType.setParameters(contentTypeParameters);
        request.setTargetContentType(targetContentType);

        request.setTPID(transformationProgramID);
        request.setTransformationUnitID(transformationUnitID);

        /* PROGRAM PARAMETERS */
        Parameter xsltParameter = new Parameter();
        xsltParameter.setName("xslt");
        xsltParameter.setValue(xslt);

        Parameter geoxsltParameter = new Parameter();
        geoxsltParameter.setName("geoxslt");
        geoxsltParameter.setValue(geoxslt);

        Parameter indexTypeParameter = new Parameter();
        indexTypeParameter.setName("indexType");
        indexTypeParameter.setValue(indexType);

        Parameter[] tProgramUnboundParameters = {xsltParameter, geoxsltParameter, indexTypeParameter};
        request.setTProgramUnboundParameters(tProgramUnboundParameters);

        //request.setFilterSources(false); //Default is false
        //request.setCreateReport(false); //Default is false

        String rs = dts.transformDataWithTransformationUnit(request).getOutput();
        System.out.println("The epr of the result set with the geo rowset is: \n" + rs);
    }
}
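The way a composite unit could hand the unbound program parameters to the units it invokes can be sketched as follows. This is a hypothetical model, not DTS code: `Step` and `CompositeUnit` are illustrative names, simple string functions stand in for the real XSLT and geo rowset transformers, and each step is assumed to receive only the parameters it declares (xslt for the first step, geoxslt and indexType for the second).

```java
import java.util.*;
import java.util.function.BiFunction;

// Hypothetical step of a composite transformation unit: the parameter names it needs
// and a function that receives the step input plus only those parameters.
record Step(String name, List<String> requiredParameters,
            BiFunction<String, Map<String, String>, String> transform) {}

class CompositeUnit {
    private final List<Step> steps;

    CompositeUnit(List<Step> steps) {
        this.steps = List.copyOf(steps);
    }

    // Runs the steps in order, feeding each step's output into the next one.
    // Fails fast if the caller did not supply a parameter that some step requires.
    String run(String input, Map<String, String> unboundParameters) {
        String current = input;
        for (Step step : steps) {
            Map<String, String> stepParameters = new HashMap<>();
            for (String name : step.requiredParameters()) {
                String value = unboundParameters.get(name);
                if (value == null) {
                    throw new IllegalArgumentException(
                        "Missing program parameter '" + name + "' for step " + step.name());
                }
                stepParameters.put(name, value);
            }
            current = step.transform().apply(current, stepParameters);
        }
        return current;
    }
}
```

Validating the parameters step by step means a missing geoxslt or indexType is reported with the name of the step that needed it; whether DTS checks parameters up front or lazily is not stated here, so this is only one possible design.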

Finding possible target content types from a source content type

This example demonstrates how to find the content types into which an object can be transformed, given its source content type.

import org.apache.axis.message.addressing.AttributedURI;
import org.apache.axis.message.addressing.EndpointReferenceType;
import org.gcube.common.core.contexts.GCUBERemotePortTypeContext;
import org.gcube.common.core.scope.GCUBEScope;
import org.gcube.datatransformation.datatransformationservice.StubsToModelUtils;
import org.gcube.datatransformation.datatransformationservice.stubs.ContentType;
import org.gcube.datatransformation.datatransformationservice.stubs.DataTransformationServicePortType;
import org.gcube.datatransformation.datatransformationservice.stubs.FindAvailableTargetContentTypes;
import org.gcube.datatransformation.datatransformationservice.stubs.FindAvailableTargetContentTypesResponse;
import org.gcube.datatransformation.datatransformationservice.stubs.service.DataTransformationServiceAddressingLocator;

public class FindAvailableTargetContentFormatsClient {

    public static void main(String[] args) throws Exception {
        String dtsEndpoint = args[0];
        String scope = args[1];
        String sourceMimeType = args[2];

        EndpointReferenceType endpoint = new EndpointReferenceType();
        endpoint.setAddress(new AttributedURI(dtsEndpoint));
        DataTransformationServicePortType dts = new DataTransformationServiceAddressingLocator().getDataTransformationServicePortTypePort(endpoint);
        dts = GCUBERemotePortTypeContext.getProxy(dts, GCUBEScope.getScope(scope));

        ContentType sourceContentType = new ContentType();
        sourceContentType.setMimeType(sourceMimeType);

        FindAvailableTargetContentTypes request = new FindAvailableTargetContentTypes();
        request.setSourceContentType(sourceContentType);

        FindAvailableTargetContentTypesResponse response = dts.findAvailableTargetContentTypes(request);

        if (response == null || response.getTargetContentTypes() == null
                || response.getTargetContentTypes().getContentTypesArray() == null
                || response.getTargetContentTypes().getContentTypesArray().length == 0) {
            System.out.println("No available target content types found");
        } else {
            for (ContentType targetContentFormat : response.getTargetContentTypes().getContentTypesArray()) {
                // StubsToModelUtils belongs to the DTS service jar, not to the stubs, so the
                // service jar and its libraries must be on the client classpath
                System.out.println("TargetContentTypeFound: " + StubsToModelUtils.contentTypeFromStub(targetContentFormat).toString());
            }
        }
    }
}
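One way to picture how the available target content types could be derived from the registered transformation units is a reachability search over (source, target) MIME type pairs. In this hypothetical sketch, `Conversion` and `TargetTypeFinder` are illustrative names, content type parameters such as schemaURI are ignored, and it is an assumption that types reachable only through multi-step chains count as available targets.

```java
import java.util.*;

// Hypothetical reduction of a transformation unit to a (source MIME type -> target MIME type) edge.
record Conversion(String sourceMimeType, String targetMimeType) {}

class TargetTypeFinder {
    // Collects every MIME type reachable from 'source' by chaining one or more conversions.
    static Set<String> reachableTargets(List<Conversion> conversions, String source) {
        Set<String> reachable = new TreeSet<>();
        Deque<String> pending = new ArrayDeque<>(List.of(source));
        while (!pending.isEmpty()) {
            String current = pending.poll();
            for (Conversion c : conversions) {
                // Set.add returns false for already-seen types, so cycles terminate.
                if (c.sourceMimeType().equals(current) && reachable.add(c.targetMimeType())) {
                    pending.add(c.targetMimeType());
                }
            }
        }
        reachable.remove(source); // the source type itself is not reported as a target
        return reachable;
    }
}
```

With hypothetical conversions tiff to png, png to jpeg and jpeg to tiff, the targets reported for image/tiff are image/jpeg and image/png; the cycle back to image/tiff does not loop forever because each type is queued at most once.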

Finding applicable transformation units

This example demonstrates how to search for transformation units that can perform a transformation from a source to a target content type. Here we look for one or more transformation units that can transform a TIFF image into a PNG image of 500x500 px, where the size is expressed by the width and height parameters of the target content type.

import org.apache.axis.message.addressing.AttributedURI;
import org.apache.axis.message.addressing.EndpointReferenceType;
import org.gcube.common.core.contexts.GCUBERemotePortTypeContext;
import org.gcube.common.core.scope.GCUBEScope;
import org.gcube.datatransformation.datatransformationservice.stubs.*;
import org.gcube.datatransformation.datatransformationservice.stubs.service.DataTransformationServiceAddressingLocator;

public class FindApplicableTransformationUnitsClient {

    public static void main(String[] args) throws Exception {
        String dtsEndpoint = args[0];
        String scope = args[1];

        EndpointReferenceType endpoint = new EndpointReferenceType();
        endpoint.setAddress(new AttributedURI(dtsEndpoint));
        DataTransformationServicePortType dts = new DataTransformationServiceAddressingLocator().getDataTransformationServicePortTypePort(endpoint);
        dts = GCUBERemotePortTypeContext.getProxy(dts, GCUBEScope.getScope(scope));

        FindApplicableTransformationUnits params = new FindApplicableTransformationUnits();

        // Source: a TIFF image
        ContentType sourceContentType = new ContentType();
        sourceContentType.setMimeType("image/tiff");
        params.setSourceContentType(sourceContentType);

        // Target: a 500x500 PNG image
        ContentType targetContentType = new ContentType();
        targetContentType.setMimeType("image/png");
        Parameter tparam1 = new Parameter();
        tparam1.setName("width");
        tparam1.setValue("500");
        Parameter tparam2 = new Parameter();
        tparam2.setName("height");
        tparam2.setValue("500");
        Parameter[] tParameters = {tparam1, tparam2};
        targetContentType.setParameters(tParameters);
        params.setTargetContentType(targetContentType);

        FindApplicableTransformationUnitsResponse resp = dts.findApplicableTransformationUnits(params);

        // Guard against both a null response and a null or empty result array
        TPAndTransformationUnit[] units = (resp == null) ? null : resp.getTPAndTransformationUnitIDs();
        if (units != null && units.length > 0) {
            for (TPAndTransformationUnit tpandtu : units) {
                System.out.println("TP: " + tpandtu.getTransformationProgramID() + ", TU: " + tpandtu.getTransformationUnitID());
            }
        } else {
            System.out.println("No applicable transformation units found...");
        }
    }
}
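The search for applicable units can be sketched as a filter over unit descriptions. This hypothetical sketch assumes that a unit applies when its source and target MIME types match the request exactly and it declares support for every requested target parameter (here width and height); `UnitDescription` and `ApplicableUnitFinder` are illustrative names, not part of the DTS stubs, and the real matching rules of DTS may be richer.

```java
import java.util.*;

// Hypothetical unit description: program/unit IDs, the MIME types it converts between,
// and the names of target parameters (e.g. width, height) it can honour.
record UnitDescription(String programId, String unitId, String sourceMimeType,
                       String targetMimeType, Set<String> supportedTargetParameters) {}

class ApplicableUnitFinder {
    // Assumed matching rule: exact MIME type match on both sides, plus support for
    // every parameter name requested for the target content type.
    static List<String> findApplicable(List<UnitDescription> units, String sourceMimeType,
                                       String targetMimeType, Map<String, String> targetParameters) {
        List<String> matches = new ArrayList<>();
        for (UnitDescription unit : units) {
            boolean typesMatch = unit.sourceMimeType().equals(sourceMimeType)
                    && unit.targetMimeType().equals(targetMimeType);
            if (typesMatch && unit.supportedTargetParameters().containsAll(targetParameters.keySet())) {
                matches.add("TP: " + unit.programId() + ", TU: " + unit.unitId());
            }
        }
        return matches;
    }
}
```

With the request from the example above (image/tiff to image/png, width=500, height=500), a unit whose MIME types match but which cannot honour width and height is filtered out.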